SLIDE 1

Neural Networks

Mark van Rossum

School of Informatics, University of Edinburgh

January 15, 2018

1 / 28

Goals: Understand how (recurrent) networks behave Find a way to teach networks to do a certain computation (e.g. ICA) Network choices Neuron models: spiking, binary, rate (and its in-out relation). Use separate inhibitory neurons (Dale’s law)? Synaptic transmission dynamics?

2 / 28

Overview

Feedforward networks

Perceptron Multi-layer perceptron Liquid state machines Deep layered networks

Recurrent networks

Hopfield networks Boltzmann Machines

3 / 28

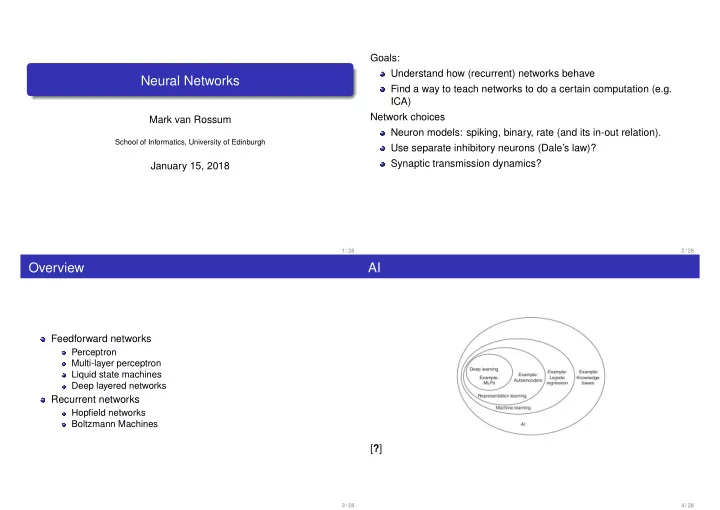

AI

[?]

4 / 28