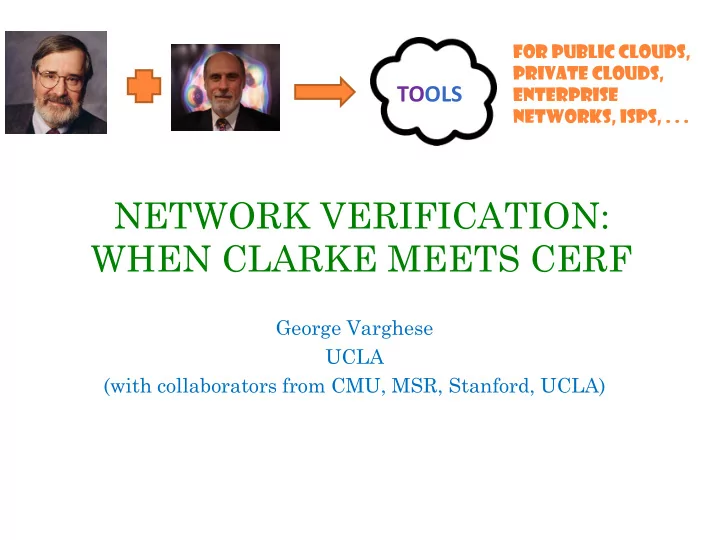

NETWORK VERIFICATION: WHEN CLARKE MEETS CERF

George Varghese UCLA (with collaborators from CMU, MSR, Stanford, UCLA)


FOR PUBLIC CLOUDS, PRIVATE CLOUDS, ENTERPRISE NETWORKS, ISPs, . . .


Model and Terminology: prefixes 1.8.*, 1.2.*


Packet Forwarding

Figure labels: Action; P1, P2
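A minimal sketch of the forwarding model these labels suggest: a table maps wildcard prefixes to an action, here simply an output port. The table contents, the pairing of prefixes with ports, and the helper names `matches` and `forward` are assumptions made for illustration; only the prefix style (1.2.*, 1.8.*) and the port names P1 and P2 come from the slides.

```python
from typing import Optional

# Hypothetical forwarding table: (wildcard prefix, output port).
# Which prefix goes to which port is an assumption, not from the slides.
FORWARDING_TABLE = [
    ("1.2.*", "P1"),
    ("1.8.*", "P2"),
]


def fixed_part(prefix: str) -> list:
    """Return the dotted components of a prefix without the trailing '*'."""
    return prefix.split(".")[:-1]


def matches(prefix: str, address: str) -> bool:
    """Return True if the address starts with the prefix's fixed components."""
    fixed = fixed_part(prefix)
    return address.split(".")[:len(fixed)] == fixed


def forward(address: str) -> Optional[str]:
    """Return the port of the longest matching prefix, or None to drop."""
    best_port = None
    best_len = -1
    for prefix, port in FORWARDING_TABLE:
        length = len(fixed_part(prefix))
        if matches(prefix, address) and length > best_len:
            best_port, best_len = port, length
    return best_port
```

Under these assumptions, `forward("1.2.3.4")` selects P1, `forward("1.8.0.1")` selects P2, and an address that matches no prefix is dropped.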


Scaling Network Verification Control Plane Verification


Figure: reachability from A to B through Box 1, Box 2, Box 3, and Box 4.

Region labels:
All packets that A can possibly send
All packets that A can possibly send to box 2 through box 1
All packets that A can possibly send to box 4 through box 1

Transfer function labels:
T1(X,A)
T2(T1(X,A))
T4(T1(X,A))
T3(T2(T1(X,A))) ∪ T3(T4(T1(X,A)))
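The composition in the figure labels, T3(T2(T1(X,A))) ∪ T3(T4(T1(X,A))), can be illustrated with a toy model in which a header is a small integer and each box is a function on sets of headers. This is only a sketch under stated assumptions: the filtering rules inside `t1` to `t4` are invented for the example, and real tools work on compact representations of header sets rather than explicit sets.

```python
from typing import Set

Header = int  # toy model: a header is an integer in range(16)


def t1(headers: Set[Header], port: str) -> Set[Header]:
    """Box 1 (hypothetical rule): accept only port 'A', keep even headers."""
    if port != "A":
        return set()
    return {h for h in headers if h % 2 == 0}


def t2(headers: Set[Header]) -> Set[Header]:
    """Box 2 (hypothetical rule): keep headers below 8."""
    return {h for h in headers if h < 8}


def t4(headers: Set[Header]) -> Set[Header]:
    """Box 4 (hypothetical rule): keep headers of 12 and above."""
    return {h for h in headers if h >= 12}


def t3(headers: Set[Header]) -> Set[Header]:
    """Box 3 (hypothetical rule): drop header 0, pass everything else."""
    return {h for h in headers if h != 0}


def reachable_at_b(x: Set[Header]) -> Set[Header]:
    """Compute T3(T2(T1(X,A))) union T3(T4(T1(X,A)))."""
    after_box1 = t1(x, "A")
    via_box2 = t3(t2(after_box1))  # path Box 1 -> Box 2 -> Box 3
    via_box4 = t3(t4(after_box1))  # path Box 1 -> Box 4 -> Box 3
    return via_box2 | via_box4


ALL_HEADERS = set(range(16))  # X: everything A could send in this toy model
REACHABLE = reachable_at_b(ALL_HEADERS)
```

With these made-up rules, `REACHABLE` is `{2, 4, 6, 12, 14}`: the union of what survives each of the two paths from A to B.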


Figure: networks of routers R1 to R5 with rules marked X, Y, and Z.

Transform (Remove X Rule in R4)


Data Plane Scaling Control Plane Verification

Figure labels: M, B1, B2

Code annotations:
Scope a field for faster verification
KLEE assertion
Create symbolic attribute


Functional Description (RTL)
Testbench & Vectors
Functional Verification
Logical Synthesis
Static Timing
Place & Route
Design Rule Checking (DRC)
Layout vs Schematic (LVS)
Parasitic Extraction
Manufacture & Validate

Policy Language
