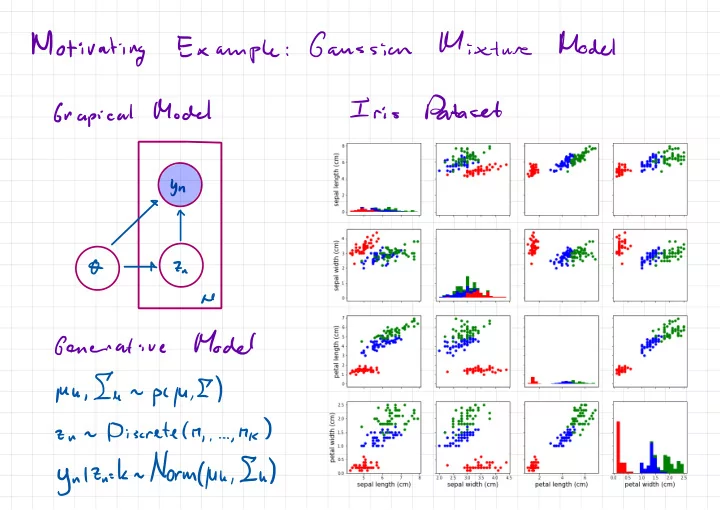

Motivating Example: Gaussian Mixture Model - PowerPoint PPT Presentation

Dataset: Iris

Graphical Model

Generative Model:

$$\mu_k, \Sigma_k \sim p(\mu, \Sigma), \qquad k = 1, \dots, K$$

$$z_n \sim \text{Discrete}(\eta_1, \dots, \eta_K)$$

$$y_n \mid z_n = k \sim \text{Normal}(\mu_k, \Sigma_k)$$

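Motivating Example: Hidden Markov Models

Observations $y_1, \dots, y_T$ with hidden states $z_1, \dots, z_T$.

Goal: Posterior on Parameters

$$p(\theta \mid y) = \frac{p(y \mid \theta)\, p(\theta)}{p(y)}$$

Can do: $p(y \mid \theta) = \int dz\, p(y, z \mid \theta)$

Intractable: $p(y) = \int d\theta\, p(y \mid \theta)\, p(\theta)$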

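Monte Carlo Estimation

$$\pi(x) = \frac{\gamma(x)}{Z}, \qquad Z = \int dx\, \gamma(x)$$

$$F := \mathbb{E}_{\pi(x)}[f(x)] = \int dx\, \pi(x)\, f(x)$$

Estimator:

$$\hat F^S = \frac{1}{S} \sum_{s=1}^{S} f(x^s), \qquad x^s \sim \pi(x)$$

Unbiasedness:

$$\mathbb{E}[\hat F^S] = \mathbb{E}\Big[\frac{1}{S} \sum_{s=1}^{S} f(x^s)\Big] = \frac{1}{S} \sum_{s=1}^{S} \mathbb{E}[f(x^s)] = F$$

Law of large numbers (independent samples):

$$\lim_{S \to \infty} \mathbb{E}\big[(\hat F^S - F)^2\big] = \lim_{S \to \infty} \frac{1}{S^2} \sum_{s=1}^{S} \mathbb{E}\big[(f(x^s) - F)^2\big] = 0$$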
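
The estimator above can be sketched in a few lines of Python. This is a minimal illustration, not code from the slides: the toy target (a standard normal), the test function $f(x) = x^2$, and the helper name `monte_carlo_estimate` are all assumptions chosen so that the true value $F = 1$ is known.

```python
import random


def monte_carlo_estimate(f, sample, num_samples, rng):
    """Average f over num_samples independent draws x^s ~ pi(x)."""
    total = 0.0
    for _ in range(num_samples):
        total += f(sample(rng))
    return total / num_samples


rng = random.Random(0)

# Assumed toy problem: pi(x) = Normal(0, 1) and f(x) = x^2, so F = E[x^2] = 1.
estimate = monte_carlo_estimate(
    f=lambda x: x * x,
    sample=lambda r: r.gauss(0.0, 1.0),
    num_samples=100_000,
    rng=rng,
)
print(estimate)  # close to 1; the error shrinks roughly like 1 / sqrt(S)
```

The catch is the `sample` argument: the estimator only works when draws from $\pi(x)$ are available.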

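Self-normalized Importance Sampling

Problem: the Monte Carlo estimator needs samples $x^s \sim \pi(x)$, but typically only the unnormalized density $\gamma(x)$ can be evaluated:

$$\pi(x) = \frac{\gamma(x)}{Z}$$

Sample from a proposal $q(x)$ instead and weight each sample.

Unnormalized Importance Weight:

$$w^s = \frac{\gamma(x^s)}{q(x^s)}, \qquad x^s \sim q(x)$$

Self-normalized estimator:

$$\hat F^S = \frac{1}{S} \sum_{s=1}^{S} \frac{w^s}{\hat Z^S}\, f(x^s), \qquad \hat Z^S = \frac{1}{S} \sum_{s=1}^{S} w^s$$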

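Question: Is $\hat F^S$ unbiased? (i.e. $\mathbb{E}[\hat F^S] = F$?)

$$\mathbb{E}\Big[\frac{1}{S} \sum_{s=1}^{S} \frac{w^s}{\hat Z^S}\, f(x^s)\Big] \overset{?}{=} F$$

Jensen's inequality: $\mathbb{E}[\varphi(x)] \ge \varphi(\mathbb{E}[x])$ when $\varphi$ is convex.

Because $z \mapsto 1/z$ is convex for $z > 0$,

$$\mathbb{E}\Big[\frac{1}{\hat Z^S}\Big] \ge \frac{1}{\mathbb{E}[\hat Z^S]} = \frac{1}{Z}$$

so $1/\hat Z^S$ overestimates $1/Z$ on average, and $\hat F^S$ is biased in general.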

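Variance

With normalized weights $\pi(x)/q(x)$, the variance of a single weighted sample is

$$\mathbb{E}_{q(x)}\Big[\Big(\frac{\pi(x)}{q(x)}\, f(x) - F\Big)^2\Big] = \int dx\, q(x)\, \Big(\frac{\pi(x)}{q(x)}\, f(x) - F\Big)^2$$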

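Default choice for proposal: Likelihood Weighting

Assume Bayes Net:

$$\gamma(x) = p(y, x) = p(y \mid x)\, p(x), \qquad Z = \int dx\, p(y, x) = p(y)$$

(here $y$ is fixed at its observed value, not a random variable)

Proposal: $q(x) = p(x)$

Importance Weights: Likelihood

$$w^s = \frac{\gamma(x^s)}{q(x^s)} = \frac{p(y \mid x^s)\, p(x^s)}{p(x^s)} = p(y \mid x^s)$$

$$\hat F^S = \sum_{s=1}^{S} \frac{w^s}{\sum_{s'=1}^{S} w^{s'}}\, f(x^s)$$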
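
Likelihood weighting is the special case in which samples come from the prior and the weight is the likelihood. The sketch below assumes a toy conjugate model that is not in the slides: $x \sim \text{Normal}(0, 1)$, $y \mid x \sim \text{Normal}(x, 1)$, with observation $y = 1$. For this model the posterior mean is $y/2 = 0.5$ and $p(y) = \text{Normal}(y; 0, 2)$, which gives something to compare against. The helper names are hypothetical.

```python
import math
import random


def normal_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def likelihood_weighting(f, prior_sample, likelihood, num_samples, rng):
    """Sample x^s ~ p(x), weight by w^s = p(y | x^s), self-normalize."""
    xs = [prior_sample(rng) for _ in range(num_samples)]
    ws = [likelihood(x) for x in xs]
    total_w = sum(ws)
    f_hat = sum(w * f(x) for w, x in zip(ws, xs)) / total_w
    z_hat = total_w / num_samples  # estimate of the evidence p(y)
    return f_hat, z_hat


rng = random.Random(0)
y_obs = 1.0  # assumed observation
posterior_mean, evidence = likelihood_weighting(
    f=lambda x: x,
    prior_sample=lambda r: r.gauss(0.0, 1.0),
    likelihood=lambda x: normal_pdf(y_obs, x, 1.0),
    num_samples=100_000,
    rng=rng,
)
print(posterior_mean, evidence)  # near 0.5 and near Normal(1; 0, variance 2)
```

This works well here because the prior and the posterior overlap substantially. When the prior rarely proposes values that explain the data, most weights are close to zero and the estimate is dominated by a handful of samples.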

Motivating Problem: Hidden Markov Models

Observations $y_1, \dots, y_T$ with hidden states $z_1, \dots, z_T$.

Goal: Posterior on Parameters

$$p(\theta \mid y) = \int dz\, p(\theta, z \mid y)$$

Will likelihood weighting work?

"Guess" from prior:

$$\theta^s \sim p(\theta), \qquad z^s_{1:T} \sim p(z_{1:T} \mid \theta^s)$$

"Check" using likelihood:

$$w^s = p(y_{1:T} \mid z^s_{1:T}, \theta^s)$$

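Idea: use a Markov chain whose samples converge to the target,

$$\lim_{s \to \infty} p(X^s = x) = \pi(X = x)$$

i.e. a chain in which $X = x$ is visited with "frequency" $\pi(X = x)$.

Detailed balance:

$$\pi(x)\, p(x' \mid x) = \pi(x')\, p(x \mid x')$$

Integrating both sides over $x'$ shows that $\pi$ is invariant under the transition:

$$\pi(x) = \int dx'\, \pi(x)\, p(x' \mid x) = \int dx'\, \pi(x')\, p(x \mid x')$$

If you start with a sample $x' \sim \pi(x')$ and then sample $x \mid x' \sim p(x \mid x')$, then $x \sim \pi(x)$.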
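
The invariance argument can be checked numerically on a tiny discrete chain. The two-state target and transition matrix below are assumptions picked by hand so that detailed balance holds; they do not come from the slides.

```python
import math
import random

target = [0.25, 0.75]          # assumed target distribution pi
P = [[0.4, 0.6], [0.2, 0.8]]   # P[i][j] = p(next = j | current = i)

# Detailed balance: pi(i) P(i -> j) == pi(j) P(j -> i) for every pair.
detailed_balance = all(
    math.isclose(target[i] * P[i][j], target[j] * P[j][i])
    for i in range(2)
    for j in range(2)
)

# Invariance: one step of the chain started from pi stays at pi.
after_one_step = [sum(target[i] * P[i][j] for i in range(2)) for j in range(2)]

# Long-run visit frequencies of a simulated chain started in state 0.
rng = random.Random(0)
state, visits = 0, [0, 0]
for _ in range(100_000):
    state = 0 if rng.random() < P[state][0] else 1
    visits[state] += 1
frequencies = [v / sum(visits) for v in visits]

print(detailed_balance, after_one_step, frequencies)
```

The simulated frequencies approach the target even though the chain starts in a fixed state, which is the sense in which each state is visited with "frequency" $\pi$.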

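Metropolis-Hastings

Propose $x' \sim q(x' \mid x)$, then

accept $x'$ with probability

$$\alpha = \min\Big(1, \frac{\pi(x')\, q(x \mid x')}{\pi(x)\, q(x' \mid x)}\Big)$$

keep $x$ with probability $(1 - \alpha)$.

Detailed balance (for $x' \neq x$, where $p(x' \mid x) = q(x' \mid x)\, \alpha$):

$$\pi(x)\, p(x' \mid x) = \min\big(\pi(x)\, q(x' \mid x),\; \pi(x')\, q(x \mid x')\big) = \pi(x')\, p(x \mid x')$$

The normalizing constant cancels in the ratio, so with $\gamma(x) = p(y, x)$:

$$\alpha = \min\Big(1, \frac{\gamma(x')\, q(x \mid x')}{\gamma(x)\, q(x' \mid x)}\Big) = \min\Big(1, \frac{p(y, x')\, q(x \mid x')}{p(y, x)\, q(x' \mid x)}\Big)$$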

Metropolis-Hastings

Continuous variables: Gaussian proposal

$$q(x' \mid x) = \text{Normal}(x';\, x,\, \delta^2)$$
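
A minimal random-walk Metropolis-Hastings sketch with a Gaussian proposal. Everything specific here is an assumption for illustration: the unnormalized target $\gamma(x) = \exp(-(x - 2)^2 / 2)$, the step size, the chain length, the burn-in, and the function name `metropolis_hastings`. Because the Gaussian proposal is symmetric, $q(x \mid x') = q(x' \mid x)$ and the proposal terms cancel in the acceptance ratio.

```python
import math
import random


def metropolis_hastings(log_gamma, x0, step_size, num_steps, rng):
    """Random-walk MH with proposal x' ~ Normal(x, step_size^2)."""
    x = x0
    samples = []
    num_accepted = 0
    for _ in range(num_steps):
        x_prop = rng.gauss(x, step_size)
        # Symmetric proposal: log acceptance ratio is log gamma(x') - log gamma(x).
        log_ratio = log_gamma(x_prop) - log_gamma(x)
        if log_ratio >= 0.0 or rng.random() < math.exp(log_ratio):
            x = x_prop
            num_accepted += 1
        samples.append(x)  # a rejected proposal repeats the current state
    return samples, num_accepted / num_steps


rng = random.Random(0)
samples, accept_rate = metropolis_hastings(
    log_gamma=lambda x: -0.5 * (x - 2.0) ** 2,  # unnormalized Normal(2, 1)
    x0=0.0,
    step_size=1.0,
    num_steps=50_000,
    rng=rng,
)
kept = samples[1_000:]  # discard an assumed burn-in period
chain_mean = sum(kept) / len(kept)
print(chain_mean, accept_rate)  # mean near 2
```

Working with `log_gamma` rather than `gamma` avoids numerical underflow when the density is very small. The step size controls a trade-off: small steps are accepted often but move slowly, large steps move far but are rejected often.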

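Gibbs Sampling (Next Lecture)

Idea: Propose 1 variable at a time, holding other variables constant:

$$x_i' \sim p(x_i \mid x_{-i})$$

Acceptance ratio: $\alpha = 1$ (Next Lecture)

Graphical Model

Gibbs Sampler Steps:

$$z_n \mid y_n, \mu, \Sigma \sim p(z_n \mid y_n, \mu, \Sigma)$$

$$\mu, \Sigma \mid y, z \sim p(\mu, \Sigma \mid y, z)$$

Generative Model:

$$z_n \sim \text{Discrete}, \qquad y_n \mid z_n = k \sim \text{Normal}(\mu_k, \Sigma_k), \qquad \mu_k, \Sigma_k \sim p(\mu, \Sigma)$$

Conditional Distributions:

$$p(z_n = k \mid y_n, \mu, \Sigma) \propto p(y_n, z_n = k \mid \mu, \Sigma) = p(y_n \mid z_n = k, \mu, \Sigma)\, p(z_n = k)$$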

$p(y_n \mid z_n = l, \mu$