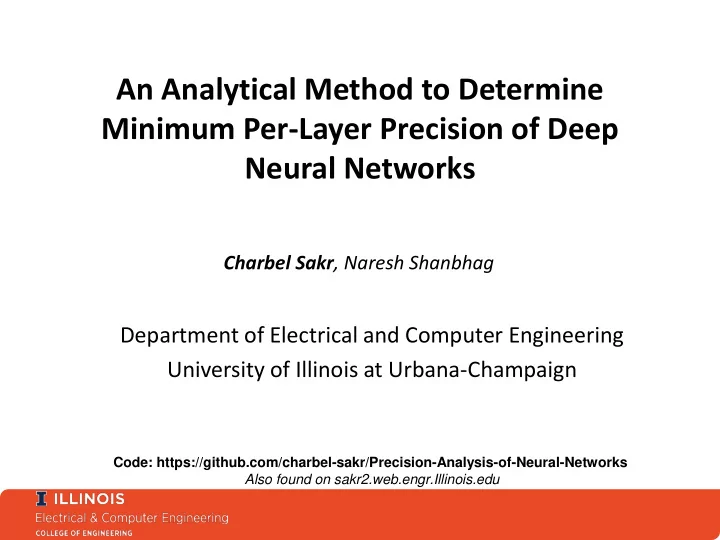



An Analytical Method to Determine Minimum Per-Layer Precision of Deep Neural Networks
Charbel Sakr, Naresh Shanbhag
Department of Electrical and Computer Engineering
University of Illinois at Urbana-Champaign
Code: https://github.com/charbel-sakr/Precision-Analysis-of-Neural-Networks
Also found on sakr2.web.engr.Illinois.edu

Machine Learning ASICs
• Eyeriss [Sze’16, ISSCC]: AlexNet accelerator, 16b fixed-point
• PuDianNao [Chen’15, ASPLOS]: ML accelerator, 16b fixed-point
• TPU [Google’17, ISCA]: TensorFlow accelerator, 8b fixed-point
How are they choosing these precisions? Why is it working? Can it be determined analytically?

Current Approaches: Fixed-point Quantization
• Stochastic rounding during training [Gupta, ICML’15; Hubara, NIPS’16]
  → Difficulty of training in a discrete space
• Trial-and-error approach [Sung, SiPS’14]
  → Exhaustive search is expensive
• SQNR-based precision allocation [Lin, ICML’16]
  → Lack of precision/accuracy understanding
No accuracy vs. precision understanding

International Conference on Machine Learning (ICML) - 2017

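Precision in Neural Networks
[Figure: a floating-point network and its fixed-point (quantized) counterpart process the same input, and their classification outputs are compared]
• 𝑞_𝑛: “Mismatch Probability”, the probability that the fixed-point network’s classification output differs from the floating-point network’s [Sakr et al., ICML’17]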
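
As a rough illustration of the mismatch probability, the sketch below estimates how often a quantized classifier disagrees with its floating-point counterpart. The toy linear classifier, the uniform quantizer on [-1, 1), and every function name are assumptions made for illustration; none of them come from the slides.

```python
import random

def quantize(x, bits):
    """Uniform quantizer on [-1, 1) with step 2^-(bits-1) (assumed fixed-point format)."""
    step = 2.0 ** -(bits - 1)
    q = round(x / step) * step
    return max(-1.0, min(1.0 - step, q))  # clip to the representable range

def predict(weights, x):
    """Index of the largest logit of a linear classifier."""
    logits = [sum(w * v for w, v in zip(row, x)) for row in weights]
    return max(range(len(logits)), key=logits.__getitem__)

def mismatch_probability(bits, n_samples=500, dim=16, n_classes=10, seed=0):
    """Fraction of random inputs on which fixed-point and floating-point labels differ."""
    rng = random.Random(seed)
    weights = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(n_classes)]
    q_weights = [[quantize(w, bits) for w in row] for row in weights]
    mismatches = 0
    for _ in range(n_samples):
        x = [rng.uniform(-1, 1) for _ in range(dim)]
        fl_label = predict(weights, x)
        fx_label = predict(q_weights, [quantize(v, bits) for v in x])
        mismatches += fl_label != fx_label
    return mismatches / n_samples
```

Calling `mismatch_probability(bits)` for a few values of `bits` gives a feel for how quickly the disagreement rate falls as precision grows.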

Second Order Bound on 𝑞_𝑛 [Sakr et al., ICML’17]
• The bound is a sum of two terms, one scaled by the activation quantization noise gain and one by the weight quantization noise gain
• Input/weight precision trade-off
  → Optimal precision allocation by balancing the sum
• Data dependence (compute once and reuse)
  → Derivatives obtained in last step of backprop
  → Only one forward-backward pass needed
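
The trade-off can be sketched numerically. The code below assumes the bound has the form Δ_A² · E_A + Δ_W² · E_W with quantization step Δ = 2^-(B-1); this form, the gain values used in the usage note, and the function names are assumptions for illustration, since the slide text does not preserve the equation.

```python
def mismatch_bound(b_a, b_w, e_a, e_w):
    """Assumed bound: step_A^2 * E_A + step_W^2 * E_W, with step = 2^-(B-1)."""
    step_a = 2.0 ** -(b_a - 1)
    step_w = 2.0 ** -(b_w - 1)
    return step_a ** 2 * e_a + step_w ** 2 * e_w

def cheapest_precisions(e_a, e_w, target, max_bits=16):
    """Smallest B_A + B_W whose bound meets the target, found by brute force."""
    best = None
    for b_a in range(1, max_bits + 1):
        for b_w in range(1, max_bits + 1):
            if mismatch_bound(b_a, b_w, e_a, e_w) <= target:
                if best is None or b_a + b_w < sum(best):
                    best = (b_a, b_w)
    return best
```

With hypothetical gains `e_a = 4.0` and `e_w = 64.0` and a 1% target, the search returns `(6, 8)`: the two terms of the bound are then equal, which is what balancing the sum means here.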

Proof Sketch [Sakr et al., ICML’17]
• For one input, when do we have a mismatch?
  → If the floating-point (FL) network predicts label “𝑘”
  → But the fixed-point (FX) network predicts label “𝑗”, where 𝑗 ≠ 𝑘
  → This happens with some probability computed as follows:
    [equation] (Output re-ordering due to quantization)
    [equation] (Symmetry of quantization noise)
  → But we already know [equation]
  → Whose variance is [equation]
  → Applying Chebyshev’s inequality and the law of total probability (LTP) yields the result
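
The symmetry and Chebyshev steps can be checked numerically. For zero-mean noise with a symmetric distribution and variance σ², the one-sided tail satisfies P(noise > m) = ½ · P(|noise| > m) ≤ σ² / (2m²). The sketch below compares that bound with a Monte Carlo estimate; modelling the output noise as a sum of independent uniform quantization errors is an assumption made for illustration only.

```python
import random

def tail_vs_bound(margin, n_terms=12, step=0.25, trials=20000, seed=1):
    """Return (empirical P(noise > margin), Chebyshev-with-symmetry bound)."""
    rng = random.Random(seed)
    # A uniform error on [-step/2, step/2] has variance step^2 / 12.
    variance = n_terms * step ** 2 / 12.0
    hits = 0
    for _ in range(trials):
        noise = sum(rng.uniform(-step / 2, step / 2) for _ in range(n_terms))
        hits += noise > margin
    return hits / trials, variance / (2.0 * margin ** 2)
```

Here `margin` plays the role of the gap between the two largest floating-point outputs: the larger the gap relative to the noise variance, the smaller the chance that quantization re-orders them.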

Tighter Bound on 𝑞_𝑛 [Sakr et al., ICML’17]
• Mismatch probability decreases double exponentially with precision
  → Theoretically stronger than Theorem 1
  → Unfortunately, less practical
• Notation: 𝑁: number of classes; 𝑇: signal-to-quantization-noise ratio; 𝑄1 & 𝑄2: correction factors

Per-layer Precision Assignment
[Figure: network with layers 1 through L, each with its own activation and weight precision]
• Per-layer precision allows for more aggressive complexity reductions
• Search space is huge: a 2L-dimensional grid (one activation and one weight precision per layer)
• Example: 5-layer network, precision considered up to 16 bits
  → 16^10 ≈ 10^12 (one million million) design points
• We need an analytical way to reduce the search space
  → Maybe using the analysis of Sakr et al.
Key idea: equalization of reflected quantization noise variances
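
The size of the search space in the example is easy to reproduce. The helper below is a hypothetical name; it counts one activation precision and one weight precision per layer.

```python
def design_points(num_layers, max_bits):
    """Grid size when each of the 2L precisions can take max_bits values."""
    return max_bits ** (2 * num_layers)

# 5 layers, up to 16 bits each: 16^10 = 2^40 design points.
exhaustive = design_points(5, 16)
```

`exhaustive` evaluates to 1,099,511,627,776, i.e. about 10^12 candidate precision assignments.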

Fine-grained Precision Analysis
• Starting point: the bound of [Sakr et al., ICML’17]
• Refinement: per-layer quantization noise gains [Sakr et al., ICASSP’18]

Per-layer Precision Assignment Method
Key idea: equalization of quantization noise variances
• Step 1: compute the least quantization noise gain
• Step 2: select precisions to equalize all quantization noise variances
Search space is reduced to a single one-dimensional axis: the reference precision assigned to the term with the least (min) quantization noise gain
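
The two steps can be sketched as follows, under the assumption that each noise variance scales as Δ² · E with Δ = 2^-(B-1), so that equalizing variances gives B = B_min + ½ · log2(E / E_min), rounded to an integer. The function name and the example gains are hypothetical.

```python
import math

def assign_precisions(gains, b_min):
    """Per-term precisions that roughly equalize step^2 * gain across all terms.

    gains: quantization noise gains of all weight and activation terms.
    b_min: reference precision given to the term with the least gain.
    """
    e_min = min(gains)  # Step 1: least quantization noise gain
    # Step 2: each factor of 4 in gain costs one extra bit
    # (Python's round() resolves exact .5 ties to the nearest even integer).
    return [b_min + round(math.log2(math.sqrt(e / e_min))) for e in gains]
```

For gains `[256.0, 64.0, 16.0, 1.0]` and `b_min = 4` this yields `[8, 7, 6, 4]`. Sweeping `b_min` alone then explores the whole family of equalized assignments, which is the one-dimensional search the slide refers to.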

Comparison with related works
• Simplified but meaningful model of complexity
  → Computational cost: total number of full adders (FAs) used, assuming folded MACs with bit growth allowed
    → Number of MACs is equal to the number of dot products computed
    → Number of FAs per MAC: [equation]
  → Representational cost: total number of bits needed to represent weights and activations
    → High-level measure of area and communication cost (data movement)
• Other works considered
  → Stochastic quantization (SQ) [Gupta’15, ICML]
    → 784 – 1000 – 1000 – 10 (MNIST)
    → 64C5 – MP2 – 64C5 – MP2 – 64FC – 10 (CIFAR10)
  → BinaryNet (BN) [Hubara’16, NIPS]
    → 784 – 2048 – 2048 – 2048 – 10 (MNIST) & VGG (CIFAR10)
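
A minimal sketch of the two cost measures follows. The slide text does not preserve the FA-count formula; the expression used below, D·B_A·B_W + (D−1)·(B_A + B_W + ⌈log2 D⌉ − 1) for a D-dimensional dot product, is recalled from the authors' ICML’17 paper and should be treated as an assumption. The layer dictionary keys are hypothetical.

```python
import math

def fas_per_dot_product(d, b_a, b_w):
    """Assumed FA count: d multipliers plus d-1 accumulations with bit growth."""
    return d * b_a * b_w + (d - 1) * (b_a + b_w + math.ceil(math.log2(d)) - 1)

def computational_cost(layers):
    """Total FAs: one folded MAC per dot product, summed over layers."""
    return sum(l["dot_products"] * fas_per_dot_product(l["dim"], l["b_a"], l["b_w"])
               for l in layers)

def representational_cost(layers):
    """Total bits needed to store all activations and weights."""
    return sum(l["n_acts"] * l["b_a"] + l["n_weights"] * l["b_w"] for l in layers)

# Hypothetical single layer: 10 dot products of dimension 4.
toy = [{"dot_products": 10, "dim": 4, "b_a": 2, "b_w": 3,
        "n_acts": 100, "n_weights": 40}]
```

For the toy layer, each dot product costs 42 FAs, so the computational cost is 420 FAs and the representational cost is 320 bits.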

Precision Profiles – CIFAR-10
VGG-11 ConvNet on CIFAR-10: 32C3-32C3-MP2-64C3-64C3-MP2-128C3-128C3-256FC-256FC-10
Precision chosen such that 𝑞_𝑛 ≤ 1%
• Weight precision requirements are greater than activation precision requirements [Sakr et al., ICML’17]
• Precision decreases with depth: early layers are more sensitive to perturbations [Raghu et al., ICML’17]

Precision – Accuracy Trade-offs
• Precision reduction is greatest when using the proposed fine-grained precision assignment

Precision – Accuracy – Complexity Trade-offs
• Best complexity reduction when using the proposed precision assignment
• Complexity is much smaller than that of a BinaryNet at the same accuracy, because the BinaryNet has much higher network complexity

Conclusion & Future Work
• Presented an analytical method to determine per-layer precision of neural networks
• Method based on equalization of reflected quantization noise variances
• Per-layer precision assignment reveals interesting properties of neural networks
• Method leads to significant overall complexity reduction, e.g., a minimum precision as low as 2 bits and a lower implementation cost than BinaryNets
• Future work:
  → Trade-offs between precision, depth, and width
  → Trade-offs between precision and pruning

Thank you! Acknowledgment: This work was supported in part by Systems on Nanoscale Information fabriCs (SONIC), one of the six SRC STARnet Centers, sponsored by MARCO and DARPA.
