Midterm 2 Review and Minimum Spanning Trees

Tyler Moore

CSE 3353, SMU, Dallas, TX

March 28, 2013

Portions of these slides have been adapted from the slides written by Prof. Steven Skiena at SUNY Stony Brook, author of The Algorithm Design Manual. For more information see http://www.cs.sunysb.edu/~skiena/

Administrivia

Midterm 2 next Tuesday, April 2

- Covers graph algorithms and recurrence relations
- All material through Tuesday, March 26 (including Kosaraju's SCC algorithm and the vertex two-coloring algorithm, but NOT weighted graph algorithms)
- You are allowed notes on one side of a 3x5 index card
- Review today
- I will be traveling to a conference next week, so there will be no normal office hours
- Come see me with any questions this week (extra office hours today 4-5pm and this Friday 1-2pm)

Next Fall I’m teaching CSE 5338 Security Economics

- T/Th 11am-12:20pm
- Counts as an upper-division CS elective; 4+1 students can also take it as 7338 and apply it to the M.S. in Security Engineering
- Learn to apply microeconomics and data analytics to security problems
- No security or economics background required
- Course webpage: http://lyle.smu.edu/~tylerm/courses/econsec/


HW2 review: Q1d and Q1e

There are several data structures you can use to represent a graph's structure when doing a traversal.

We primarily studied dictionaries mapping a node to its children. To identify paths, it is better to use a dictionary mapping each node to its parent, i.e., the node from which it was discovered.
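To make the parent-dictionary idea concrete, here is a minimal sketch, assuming the graph is given as a dictionary mapping each node to a list of its children. It uses breadth-first search (a choice made for this example, not necessarily the traversal the homework required) to record the node from which each vertex was first discovered, then recovers a path by following parent pointers back to the start. The names `bfs_parents`, `path_to`, and the toy graph are hypothetical, chosen for illustration.

```python
from collections import deque

def bfs_parents(children, s):
    """Map each node reachable from s to the node it was discovered from."""
    parents = {s: None}              # the start node has no parent
    queue = deque([s])
    while queue:
        u = queue.popleft()
        for v in children.get(u, []):
            if v not in parents:     # first discovery fixes v's parent
                parents[v] = u
                queue.append(v)
    return parents

def path_to(parents, t):
    """Walk parent pointers back from t to the start node, then reverse."""
    if t not in parents:
        return None                  # t was never reached
    path = []
    while t is not None:
        path.append(t)
        t = parents[t]
    return path[::-1]

# Hypothetical toy graph: node -> list of children
children = {"a": ["b", "c"], "b": ["d"], "c": ["d", "e"],
            "d": [], "e": ["f"], "f": []}
P = bfs_parents(children, "a")
print(path_to(P, "f"))  # ['a', 'c', 'e', 'f']
```

With only a children dictionary, finding the path to "f" would require searching again; the parent dictionary turns path recovery into a simple backward walk whose length is the path length.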


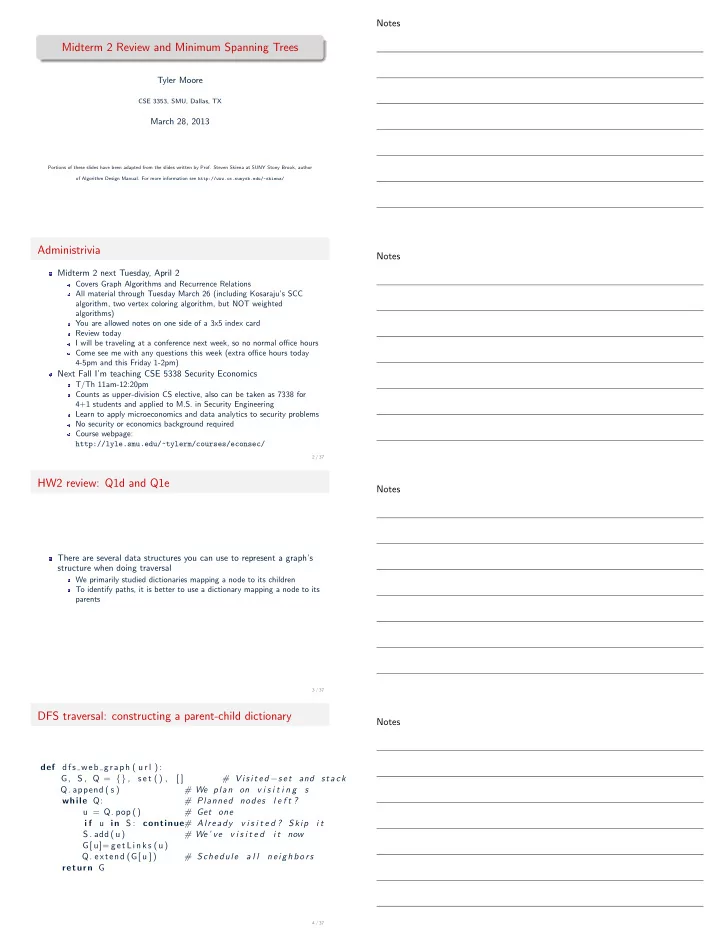

DFS traversal: constructing a parent-child dictionary

```python
def dfs_web_graph(url):
    G, S, Q = {}, set(), []   # Graph, visited set, and stack
    Q.append(url)             # We plan on visiting url
    while Q:                  # Planned nodes left?
        u = Q.pop()           # Get one
        if u in S: continue   # Already visited? Skip it
        S.add(u)              # We've visited it now
        G[u] = getLinks(u)    # u's children (getLinks assumed defined elsewhere)
        Q.extend(G[u])        # Schedule all neighbors
    return G
```
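The function above relies on a `getLinks` helper that fetches a page and returns its outgoing links. The sketch below assumes a hypothetical in-memory link table in place of real web fetching, and shows one possible variant, `dfs_web_graph_with_parents` (a name made up here), that pushes (parent, node) pairs onto the stack so it records each page's DFS-tree parent alongside the parent-child dictionary.

```python
# Hypothetical in-memory "web": each URL maps to the URLs it links to.
LINKS = {
    "a.html": ["b.html", "c.html"],
    "b.html": ["c.html"],
    "c.html": ["a.html", "d.html"],
    "d.html": [],
}

def getLinks(url):
    """Hypothetical stub: return the outgoing links of url (none if unknown)."""
    return LINKS.get(url, [])

def dfs_web_graph_with_parents(url):
    """Iterative DFS returning (children dict, DFS-tree parent dict)."""
    G, P, S = {}, {}, set()         # children, parents, visited set
    Q = [(None, url)]               # stack of (parent, node); the root has no parent
    while Q:
        parent, u = Q.pop()
        if u in S:                  # already visited via another edge: skip
            continue
        S.add(u)
        P[u] = parent               # the edge actually followed to reach u
        G[u] = getLinks(u)
        Q.extend((u, v) for v in G[u])  # schedule all neighbors, remembering u
    return G, P

G, P = dfs_web_graph_with_parents("a.html")
print(list(G))      # ['a.html', 'c.html', 'd.html', 'b.html']
print(P["d.html"])  # c.html
```

Because the stack is last-in, first-out, "c.html" is visited before "b.html". Walking P back from "d.html" gives d.html, c.html, a.html, which is the path the search actually followed.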
