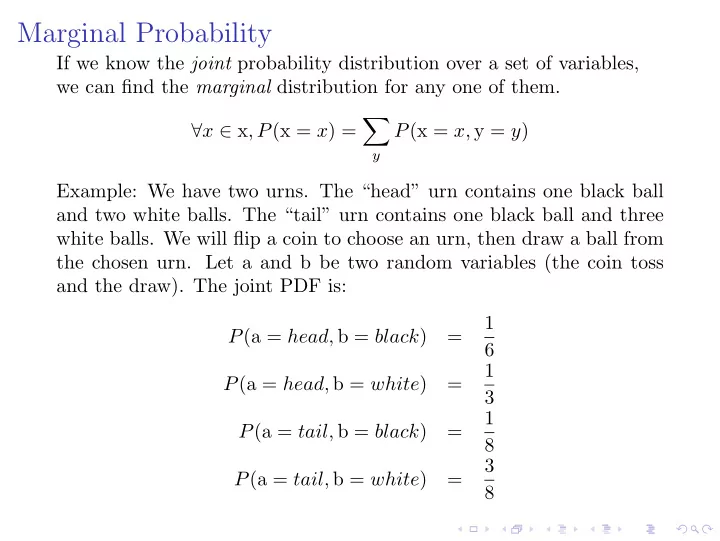

SLIDE 1 Marginal Probability

If we know the joint probability distribution over a set of variables, we can find the marginal distribution for any one of them by summing over the others:

$$\forall x \in \mathrm{x},\quad P(\mathrm{x} = x) = \sum_{y} P(\mathrm{x} = x, \mathrm{y} = y)$$

Example: We have two urns. The “head” urn contains one black ball and two white balls. The “tail” urn contains one black ball and three white balls. We will flip a coin to choose an urn, then draw a ball from the chosen urn. Let a and b be two random variables (the coin toss and the draw). The joint probability distribution is:

$$\begin{aligned}
P(a = \text{head}, b = \text{black}) &= \tfrac{1}{6} \\
P(a = \text{head}, b = \text{white}) &= \tfrac{1}{3} \\
P(a = \text{tail}, b = \text{black}) &= \tfrac{1}{8} \\
P(a = \text{tail}, b = \text{white}) &= \tfrac{3}{8}
\end{aligned}$$

SLIDE 2 Marginal Probability

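The marginal probability distribution for a is:

$$\begin{aligned}
P(a = \text{head}) &= \sum_{y} P(a = \text{head}, b = y) = \tfrac{1}{6} + \tfrac{1}{3} = \tfrac{1}{2} \\
P(a = \text{tail}) &= \sum_{y} P(a = \text{tail}, b = y) = \tfrac{1}{8} + \tfrac{3}{8} = \tfrac{1}{2}
\end{aligned}$$

The marginal probability distribution for b is:

$$\begin{aligned}
P(b = \text{black}) &= \sum_{y} P(b = \text{black}, a = y) = \tfrac{1}{6} + \tfrac{1}{8} = \tfrac{7}{24} \\
P(b = \text{white}) &= \sum_{y} P(b = \text{white}, a = y) = \tfrac{1}{3} + \tfrac{3}{8} = \tfrac{17}{24}
\end{aligned}$$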
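The marginalisation above can be checked mechanically. The following is a minimal sketch, assuming the joint distribution is stored as a dictionary keyed by `(coin, ball)` pairs; the names `joint` and `marginal` are illustrative and not part of the slides.

```python
from fractions import Fraction as F

# Joint distribution P(a, b) from the urn example, keyed by (coin, ball).
joint = {
    ("head", "black"): F(1, 6),
    ("head", "white"): F(1, 3),
    ("tail", "black"): F(1, 8),
    ("tail", "white"): F(3, 8),
}

def marginal(joint, axis):
    """Sum the joint over every variable except the one at position `axis`."""
    result = {}
    for outcome, p in joint.items():
        key = outcome[axis]
        result[key] = result.get(key, F(0)) + p
    return result

p_a = marginal(joint, 0)  # distribution of the coin toss
p_b = marginal(joint, 1)  # distribution of the ball colour

print(p_a["head"], p_a["tail"])    # 1/2 1/2
print(p_b["black"], p_b["white"])  # 7/24 17/24
```

Exact fractions are used instead of floats so that the results match the hand calculation term for term.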

SLIDE 3 Conditional Probability

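$$P(\mathrm{y} = y \mid \mathrm{x} = x) = \frac{P(\mathrm{y} = y, \mathrm{x} = x)}{P(\mathrm{x} = x)}$$

From the urns:

$$\begin{aligned}
P(b = \text{black} \mid a = \text{head}) &= \frac{P(a = \text{head}, b = \text{black})}{P(a = \text{head})} = \frac{1/6}{1/2} = \tfrac{1}{3} \\
P(b = \text{white} \mid a = \text{head}) &= \frac{P(a = \text{head}, b = \text{white})}{P(a = \text{head})} = \frac{1/3}{1/2} = \tfrac{2}{3} \\
P(b = \text{black} \mid a = \text{tail}) &= \frac{P(a = \text{tail}, b = \text{black})}{P(a = \text{tail})} = \frac{1/8}{1/2} = \tfrac{1}{4} \\
P(b = \text{white} \mid a = \text{tail}) &= \frac{P(a = \text{tail}, b = \text{white})}{P(a = \text{tail})} = \frac{3/8}{1/2} = \tfrac{3}{4}
\end{aligned}$$

The outcome of b is dependent on the outcome of a, so the experiment specifies $P(\mathrm{b} = b \mid \mathrm{a} = a)$ directly. The reverse conditional, $P(\mathrm{a} = a \mid \mathrm{b} = b)$, cannot be read off from the setup in the same way.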
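The four conditional probabilities can be reproduced by dividing each joint entry by the matching marginal. This is a sketch under the same assumed dictionary representation; `conditional_b_given_a` is a hypothetical helper name.

```python
from fractions import Fraction as F

# Joint distribution P(a, b) from the urn example, keyed by (coin, ball).
joint = {
    ("head", "black"): F(1, 6),
    ("head", "white"): F(1, 3),
    ("tail", "black"): F(1, 8),
    ("tail", "white"): F(3, 8),
}

def conditional_b_given_a(joint, a_value):
    """Return P(b | a = a_value) as joint entries divided by P(a = a_value)."""
    p_a = sum(p for (a, _), p in joint.items() if a == a_value)
    return {b: p / p_a for (a, b), p in joint.items() if a == a_value}

given_head = conditional_b_given_a(joint, "head")
given_tail = conditional_b_given_a(joint, "tail")

print(given_head["black"], given_head["white"])  # 1/3 2/3
print(given_tail["black"], given_tail["white"])  # 1/4 3/4
```

Each conditional distribution sums to 1, which is a quick sanity check on the division.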

SLIDE 4 Chain Rule of Conditional Probability

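Re-writing conditional probability:

$$P(\mathrm{y} = y \mid \mathrm{x} = x) = \frac{P(\mathrm{y} = y, \mathrm{x} = x)}{P(\mathrm{x} = x)}$$

$$P(\mathrm{y} = y, \mathrm{x} = x) = P(\mathrm{y} = y \mid \mathrm{x} = x) \cdot P(\mathrm{x} = x)$$

More generally:

$$P(A_n, \ldots, A_1) = P(A_n \mid A_{n-1}, \ldots, A_1) \cdot P(A_{n-1}, \ldots, A_1)$$

$$P\left(\bigcap_{k=1}^{n} A_k\right) = P\left(A_n \,\middle|\, \bigcap_{k=1}^{n-1} A_k\right) \cdot P\left(\bigcap_{k=1}^{n-1} A_k\right)$$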

SLIDE 5 Chain Rule of Conditional Probability

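Repeating this process with each final term creates the product:

$$P\left(\bigcap_{k=1}^{n} A_k\right) = \prod_{k=1}^{n} P\left(A_k \,\middle|\, \bigcap_{j=1}^{k-1} A_j\right)$$

With four variables, the chain rule produces this product of conditional probabilities:

$$P(A_4, A_3, A_2, A_1) = P(A_4 \mid A_3, A_2, A_1) \cdot P(A_3 \mid A_2, A_1) \cdot P(A_2 \mid A_1) \cdot P(A_1)$$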

SLIDE 6

Chain Rule of Conditional Probability

Urn example:

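$$\begin{aligned}
P(a = \text{head}, b = \text{black}) &= P(b = \text{black} \mid a = \text{head})\,P(a = \text{head}) = \tfrac{1}{3} \cdot \tfrac{1}{2} = \tfrac{1}{6} \\
P(a = \text{head}, b = \text{white}) &= P(b = \text{white} \mid a = \text{head})\,P(a = \text{head}) = \tfrac{2}{3} \cdot \tfrac{1}{2} = \tfrac{1}{3} \\
P(a = \text{tail}, b = \text{black}) &= P(b = \text{black} \mid a = \text{tail})\,P(a = \text{tail}) = \tfrac{1}{4} \cdot \tfrac{1}{2} = \tfrac{1}{8} \\
P(a = \text{tail}, b = \text{white}) &= P(b = \text{white} \mid a = \text{tail})\,P(a = \text{tail}) = \tfrac{3}{4} \cdot \tfrac{1}{2} = \tfrac{3}{8}
\end{aligned}$$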
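The chain rule runs in the opposite direction to the conditional-probability calculation: starting from $P(a)$ and $P(b \mid a)$, it rebuilds the joint distribution. A minimal sketch, assuming nested dictionaries for the conditional table (the variable names are illustrative):

```python
from fractions import Fraction as F

# Marginal distribution of the coin toss, P(a).
p_a = {"head": F(1, 2), "tail": F(1, 2)}

# Conditional distribution of the draw given the coin, P(b | a).
p_b_given_a = {
    "head": {"black": F(1, 3), "white": F(2, 3)},
    "tail": {"black": F(1, 4), "white": F(3, 4)},
}

# Chain rule for two variables: P(a, b) = P(b | a) * P(a).
joint = {
    (a, b): p_b_given_a[a][b] * p_a[a]
    for a in p_a
    for b in p_b_given_a[a]
}

for outcome, p in joint.items():
    print(outcome, p)
```

The rebuilt table matches the joint distribution given at the start of the urn example.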

SLIDE 7

Independence

Two random variables x and y are independent if their joint probability distribution is equal to the product of their marginal distributions:

$$\forall x \in \mathrm{x},\ y \in \mathrm{y},\quad P(\mathrm{x} = x, \mathrm{y} = y) = P(\mathrm{x} = x)\,P(\mathrm{y} = y)$$

SLIDE 8

Independence - Example

If we flip two coins, c and d:

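$$P(c = \text{head}) = \tfrac{1}{2} \qquad P(c = \text{tail}) = \tfrac{1}{2} \qquad P(d = \text{head}) = \tfrac{1}{2} \qquad P(d = \text{tail}) = \tfrac{1}{2}$$

$$\begin{aligned}
P(c = \text{head}, d = \text{head}) &= \tfrac{1}{4} & P(c = \text{head}, d = \text{tail}) &= \tfrac{1}{4} \\
P(c = \text{tail}, d = \text{head}) &= \tfrac{1}{4} & P(c = \text{tail}, d = \text{tail}) &= \tfrac{1}{4}
\end{aligned}$$

For example, $P(c = \text{head}, d = \text{head}) = \tfrac{1}{4}$, and $P(c = \text{head})\,P(d = \text{head}) = \tfrac{1}{2} \cdot \tfrac{1}{2} = \tfrac{1}{4}$.

Since

$$P(\mathrm{c} = c, \mathrm{d} = d) = P(\mathrm{c} = c)\,P(\mathrm{d} = d) \quad \forall c \in \mathrm{c},\ d \in \mathrm{d}, \tag{1}$$

c and d are independent.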

SLIDE 9

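Independence - Example

From the Urn example:

$$P(a = \text{head}, b = \text{black}) = \tfrac{1}{6} \qquad P(a = \text{head}) = \tfrac{1}{2} \qquad P(b = \text{black}) = \tfrac{7}{24}$$

Since $\tfrac{1}{6} \neq \tfrac{1}{2} \cdot \tfrac{7}{24} = \tfrac{7}{48}$, a and b are not independent.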
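Both independence examples can be tested with one routine that compares every joint entry against the product of the marginals. This is a sketch assuming a two-variable joint stored as a dictionary; `is_independent` is a hypothetical helper name.

```python
from fractions import Fraction as F
from itertools import product

def is_independent(joint):
    """True if P(x, y) == P(x) * P(y) for every pair of outcomes."""
    p_x, p_y = {}, {}
    for (x, y), p in joint.items():
        p_x[x] = p_x.get(x, F(0)) + p
        p_y[y] = p_y.get(y, F(0)) + p
    return all(
        joint.get((x, y), F(0)) == p_x[x] * p_y[y]
        for x, y in product(p_x, p_y)
    )

# Two fair coins c and d: every joint outcome has probability 1/4.
coins = {(c, d): F(1, 4) for c, d in product(["head", "tail"], repeat=2)}

# Urn example: coin toss a, ball colour b.
urns = {
    ("head", "black"): F(1, 6),
    ("head", "white"): F(1, 3),
    ("tail", "black"): F(1, 8),
    ("tail", "white"): F(3, 8),
}

print(is_independent(coins))  # True
print(is_independent(urns))   # False
```

A single failing pair is enough to rule out independence, which is why the urn example only needs the (head, black) entry.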

SLIDE 10

Conditional Independence

Two random variables x and y are conditionally independent given a third random variable z if:

$$\forall x \in \mathrm{x},\ y \in \mathrm{y},\ z \in \mathrm{z},\quad P(\mathrm{x} = x, \mathrm{y} = y \mid \mathrm{z} = z) = P(\mathrm{x} = x \mid \mathrm{z} = z)\,P(\mathrm{y} = y \mid \mathrm{z} = z)$$

Change the Urn example so that we flip a coin (a), then draw from both urns (b and c). The outcomes of b and c are conditionally independent given a.

Notation:

$\mathrm{x} \perp \mathrm{y}$ means that x is independent of y.

$\mathrm{x} \perp \mathrm{y} \mid \mathrm{z}$ means that x and y are conditionally independent given z.
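The definition can be checked numerically on a small three-variable joint. The sketch below uses a hypothetical variant that is not the example on the slide: the coin a selects one urn, and b and c are two draws with replacement from that urn. In this assumed setup b and c are conditionally independent given a, yet not independent of each other once a is marginalised out. All function names are illustrative.

```python
from fractions import Fraction as F
from itertools import product

COLOURS = ["black", "white"]

# Assumed setup: coin a picks an urn; b and c are draws with replacement from it.
p_a = {"head": F(1, 2), "tail": F(1, 2)}
p_ball_given_a = {
    "head": {"black": F(1, 3), "white": F(2, 3)},
    "tail": {"black": F(1, 4), "white": F(3, 4)},
}

# Joint P(a, b, c) = P(a) * P(b | a) * P(c | a), keyed by (a, b, c).
joint = {
    (a, b, c): p_a[a] * p_ball_given_a[a][b] * p_ball_given_a[a][c]
    for a in p_a
    for b, c in product(COLOURS, repeat=2)
}

def prob(joint, **fixed):
    """Sum the joint entries whose a, b, c values match the keyword arguments."""
    index = {"a": 0, "b": 1, "c": 2}
    return sum(
        p for outcome, p in joint.items()
        if all(outcome[index[name]] == value for name, value in fixed.items())
    )

def conditionally_independent_given_a(joint):
    """Check P(b, c | a) == P(b | a) * P(c | a) for every outcome."""
    for a in p_a:
        pa = prob(joint, a=a)
        for b, c in product(COLOURS, repeat=2):
            lhs = prob(joint, a=a, b=b, c=c) / pa
            rhs = (prob(joint, a=a, b=b) / pa) * (prob(joint, a=a, c=c) / pa)
            if lhs != rhs:
                return False
    return True

def marginally_independent(joint):
    """Check P(b, c) == P(b) * P(c) for every outcome, ignoring a."""
    return all(
        prob(joint, b=b, c=c) == prob(joint, b=b) * prob(joint, c=c)
        for b, c in product(COLOURS, repeat=2)
    )

print(conditionally_independent_given_a(joint))  # True
print(marginally_independent(joint))             # False
```

Knowing the first ball's colour shifts belief about which urn was chosen, which is why the two draws stop being independent when the coin is not observed.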

SLIDE 11

Bayes Theorem and a-Priori Probability

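In the Urn example, the setup did not give us $P(\mathrm{a} = a \mid \mathrm{b} = b)$ directly, because b is dependent on a. However, we can calculate these probabilities from the a-priori probability $P(A)$ using Bayes' Theorem:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

Urn example:

$$\begin{aligned}
P(a = \text{head} \mid b = \text{black}) &= \frac{P(b = \text{black} \mid a = \text{head})\,P(a = \text{head})}{P(b = \text{black})} = \tfrac{4}{7} \\
P(a = \text{tail} \mid b = \text{black}) &= \frac{P(b = \text{black} \mid a = \text{tail})\,P(a = \text{tail})}{P(b = \text{black})} = \tfrac{3}{7} \\
P(a = \text{head} \mid b = \text{white}) &= \frac{P(b = \text{white} \mid a = \text{head})\,P(a = \text{head})}{P(b = \text{white})} = \tfrac{8}{17} \\
P(a = \text{tail} \mid b = \text{white}) &= \frac{P(b = \text{white} \mid a = \text{tail})\,P(a = \text{tail})}{P(b = \text{white})} = \tfrac{9}{17}
\end{aligned}$$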
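The Bayes calculation for the urns can be sketched as follows, assuming the prior $P(a)$ and the conditional table $P(b \mid a)$ are stored as dictionaries. The names `prior`, `likelihood`, and `posterior` are illustrative labels, not terms used on the slides.

```python
from fractions import Fraction as F

# A-priori distribution of the coin toss, P(a).
prior = {"head": F(1, 2), "tail": F(1, 2)}

# Conditional distribution of the draw given the coin, P(b | a).
likelihood = {
    "head": {"black": F(1, 3), "white": F(2, 3)},
    "tail": {"black": F(1, 4), "white": F(3, 4)},
}

def posterior(prior, likelihood, observed):
    """Bayes' theorem: P(a | b) = P(b | a) * P(a) / P(b)."""
    # P(b = observed), obtained by marginalising over a.
    evidence = sum(likelihood[a][observed] * prior[a] for a in prior)
    return {a: likelihood[a][observed] * prior[a] / evidence for a in prior}

after_black = posterior(prior, likelihood, "black")
after_white = posterior(prior, likelihood, "white")

print(after_black["head"], after_black["tail"])  # 4/7 3/7
print(after_white["head"], after_white["tail"])  # 8/17 9/17
```

The denominator is the marginal $P(b)$ computed earlier (7/24 for black, 17/24 for white), so the function needs no inputs beyond the prior and the conditional table.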