M4500, February 10

Change of Bases

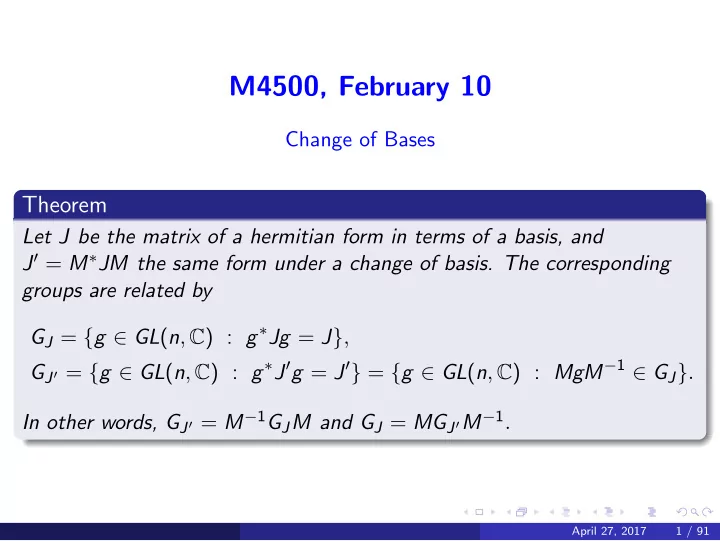

Theorem

Let $J$ be the matrix of a Hermitian form in terms of a basis, and $J' = M^{*}JM$ the same form under a change of basis. The corresponding groups are related by $G_J = \{g \in GL(n, \mathbb{C}) : g^{*}Jg = J\}$, $G_{J'} = \{g \in GL(n, \mathbb{C}) : g^{*}J'g = J'\} = \{g \in GL(n, \mathbb{C}) : MgM^{-1} \in G_J\}$. In other words, $G_{J'} = M^{-1}G_JM$ and $G_J = MG_{J'}M^{-1}$.
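The second description of $G_{J'}$ can be checked directly. The following is a sketch of that verification, not necessarily the argument given in lecture. It assumes the change-of-basis matrix $M$ is invertible, and the symbol $h = MgM^{-1}$ is introduced here only for illustration.

```latex
% Sketch: g preserves J' exactly when h = M g M^{-1} preserves J.
% Assumptions: M is invertible, J' = M^* J M, and h is a name introduced for this check.
\begin{align*}
h^{*} J h
  &= (M g M^{-1})^{*} \, J \, (M g M^{-1}) \\
  &= (M^{-1})^{*} \, g^{*} \, (M^{*} J M) \, g \, M^{-1} \\
  &= (M^{-1})^{*} \, (g^{*} J' g) \, M^{-1}.
\end{align*}
% Hence h^* J h = J if and only if g^* J' g = M^* J M = J'.
```

So $g \in G_{J'}$ exactly when $MgM^{-1} \in G_J$, which is the statement $G_{J'} = M^{-1}G_JM$.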

April 27, 2017