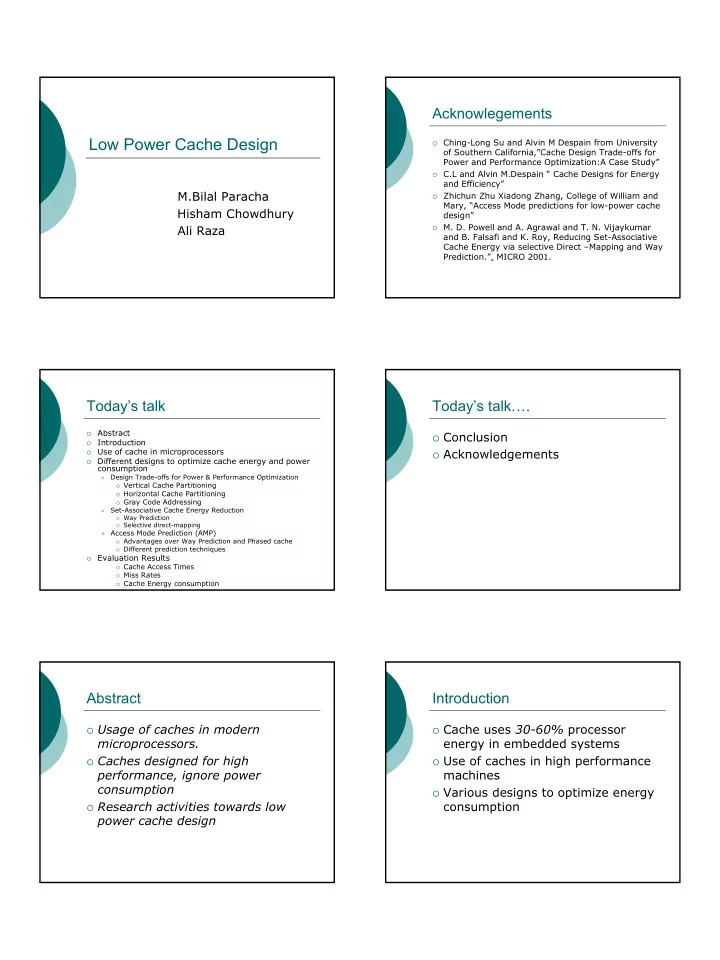

Low Power Cache Design

M. Bilal Paracha, Hisham Chowdhury, Ali Raza

Acknowledgements

- Ching-Long Su and Alvin M. Despain, University of Southern California, “Cache Design Trade-offs for Power and Performance Optimization: A Case Study”

- Ching-Long Su and Alvin M. Despain, “Cache Designs for Energy Efficiency”

- Zhichun Zhu and Xiaodong Zhang, College of William and Mary, “Access Mode Predictions for Low-Power Cache Design”

- M. D. Powell, A. Agarwal, T. N. Vijaykumar, B. Falsafi, and K. Roy, “Reducing Set-Associative Cache Energy via Way-Prediction and Selective Direct-Mapping”, MICRO 2001

Today’s talk

- Abstract

- Introduction

- Use of cache in microprocessors

- Different designs to optimize cache energy and power consumption

- Design Trade-offs for Power & Performance Optimization

  - Vertical Cache Partitioning
  - Horizontal Cache Partitioning
  - Gray Code Addressing

- Set-Associative Cache Energy Reduction

  - Way Prediction
  - Selective Direct-Mapping

- Access Mode Prediction (AMP)

  - Advantages over Way Prediction and Phased Cache
  - Different prediction techniques

- Evaluation Results

  - Cache Access Times
  - Miss Rates
  - Cache Energy Consumption

- Conclusion

- Acknowledgements

Abstract

Usage of caches in modern microprocessors

Caches designed for high performance ignore power consumption

Research activities towards low-power cache design

Introduction

Caches use 30-60% of processor energy in embedded systems

Use of caches in high-performance machines

Various designs to optimize energy