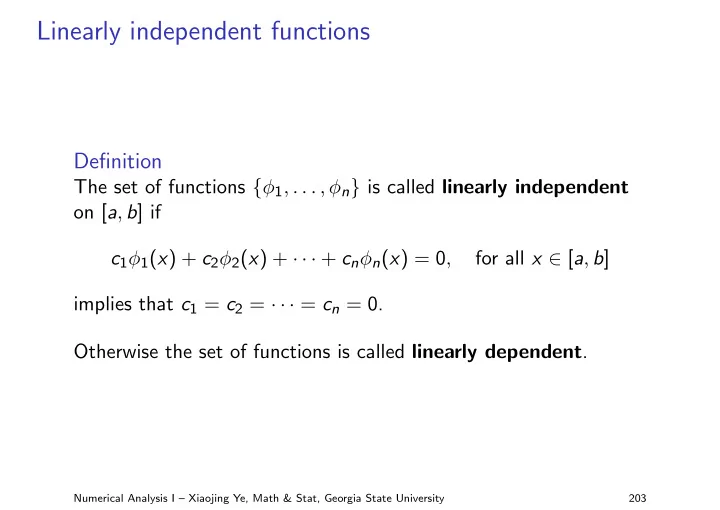

Linearly independent functions

Definition

The set of functions $\{\varphi_1, \dots, \varphi_n\}$ is called linearly independent on $[a, b]$ if

$$c_1\varphi_1(x) + c_2\varphi_2(x) + \cdots + c_n\varphi_n(x) = 0 \quad \text{for all } x \in [a, b]$$

implies that $c_1 = c_2 = \cdots = c_n = 0$. Otherwise, the set of functions is called linearly dependent.
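One way to probe this definition numerically is sketched below. It is not the method from these notes. Evaluate each $\varphi_j$ at $m$ points $x_1, \dots, x_m$ in $[a, b]$ and form the $m \times n$ matrix $A_{ij} = \varphi_j(x_i)$. If $c_1\varphi_1 + \cdots + c_n\varphi_n$ vanishes on all of $[a, b]$, then $Ac = 0$. So if $A$ has full column rank, $c = 0$ is forced and the set is independent. The converse does not hold: a rank-deficient $A$ only shows that the chosen samples cannot separate the functions. It does not prove that they are dependent.

The helper names (`sample_matrix`, `numerical_rank`, `independent_on_samples`), the equally spaced sample points, and the relative tolerance `1e-10` are hypothetical choices. Floating-point rank is also only a proxy for exact rank.

```python
# Hypothetical helpers: a sampling-based *sufficient* test for linear independence on [a, b].

def sample_matrix(funcs, a, b, m=20):
    """Row i holds phi_1(x_i), ..., phi_n(x_i) at m equally spaced points x_i in [a, b] (m >= 2)."""
    xs = [a + (b - a) * i / (m - 1) for i in range(m)]
    return [[float(phi(x)) for phi in funcs] for x in xs]


def numerical_rank(A, rel_tol=1e-10):
    """Rank via Gaussian elimination with partial pivoting.

    Entries with magnitude <= rel_tol * max(1, max|A_ij|) are treated as zero (assumed tolerance).
    """
    A = [row[:] for row in A]  # work on a copy so the caller's matrix is untouched
    m, n = len(A), len(A[0])
    tol = rel_tol * max(1.0, max(abs(v) for row in A for v in row))
    rank = 0
    for col in range(n):
        if rank == m:
            break
        # Partial pivoting: pick the largest remaining entry in this column.
        p = max(range(rank, m), key=lambda i: abs(A[i][col]))
        if abs(A[p][col]) <= tol:
            continue  # no usable pivot: column is (numerically) in the span of earlier pivot columns
        A[rank], A[p] = A[p], A[rank]
        # Eliminate the entries below the pivot.
        for i in range(rank + 1, m):
            f = A[i][col] / A[rank][col]
            for j in range(col, n):
                A[i][j] -= f * A[rank][j]
        rank += 1
    return rank


def independent_on_samples(funcs, a, b, m=20):
    """True means the sampled matrix has full column rank, which proves independence on [a, b].

    False is inconclusive: the samples may simply fail to separate the functions.
    """
    return numerical_rank(sample_matrix(funcs, a, b, m)) == len(funcs)


if __name__ == "__main__":
    indep = [lambda x: 1.0, lambda x: x, lambda x: x**2]
    dep = [lambda x: 1.0, lambda x: x, lambda x: 2 + 3 * x]
    print(independent_on_samples(indep, 0.0, 1.0))  # True
    print(independent_on_samples(dep, 0.0, 1.0))    # False
```

For example, $\{1, x, 2 + 3x\}$ is linearly dependent because $2 \cdot 1 + 3 \cdot x - (2 + 3x) = 0$ for every $x$. The coefficient vector $c = (2, 3, -1)$ is nonzero, and the sampled matrix has rank 2. In contrast, $\{1, x, x^2\}$ gives rank 3.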

Numerical Analysis I – Xiaojing Ye, Math & Stat, Georgia State University 203