10/17/19 1

Language models

Chapter 3 in Jurafsky & Martin, Speech and Language Processing

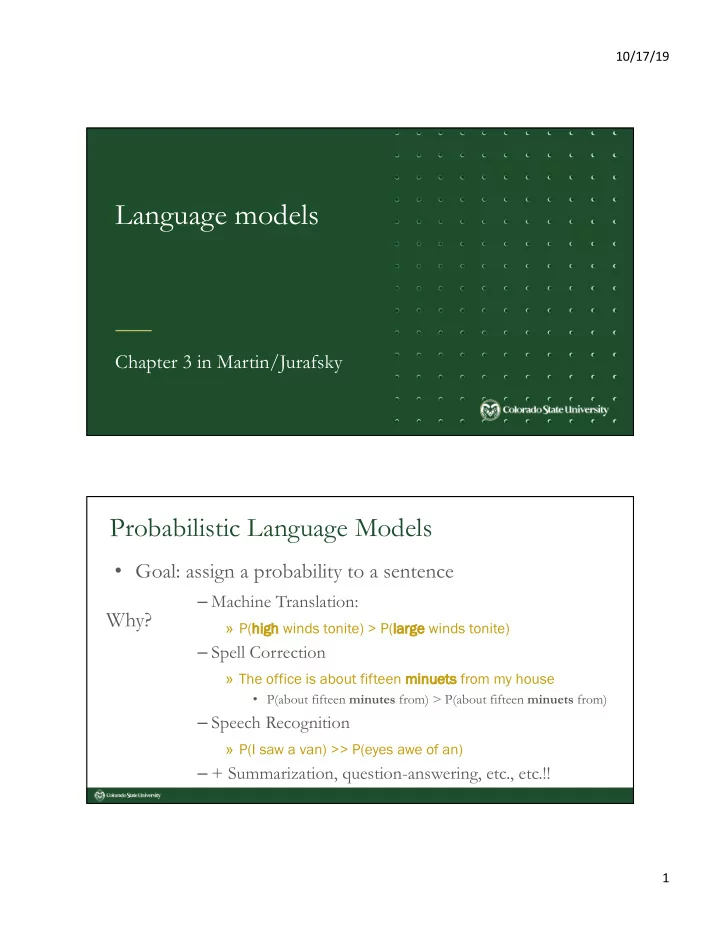

Probabilistic Language Models

- Goal: assign a probability to a sentence

– Machine Translation:

» P(high winds tonite) > P(large winds tonite)

– Spell Correction

» The office is about fifteen minuets from my house

» P(about fifteen minutes from) > P(about fifteen minuets from)

– Speech Recognition

» P(I saw a van) >> P(eyes awe of an)

– Also: summarization, question answering, and many other tasks
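The slide states the goal but not how the probability is computed. Chapter 3 builds it from n-gram counts. Below is a minimal sketch that assumes a bigram model, P(w_1 ... w_n) ≈ ∏ P(w_i | w_{i-1}), with add-one (Laplace) smoothing. It is trained on a two-sentence toy corpus invented for illustration and then used on the spell-correction example. The names `train_bigram`, `sentence_logprob`, and `tokenize` are hypothetical helpers, not taken from the chapter. The sketch sums log probabilities instead of multiplying raw probabilities, which avoids underflow on long sentences.

```python
import math
from collections import Counter
from typing import Iterable, List, Tuple

BOS, EOS = "<s>", "</s>"  # sentence-boundary markers

def tokenize(sentence: str) -> List[str]:
    # Lowercase, split on whitespace, and wrap in boundary markers.
    return [BOS] + sentence.lower().split() + [EOS]

def train_bigram(corpus: Iterable[str]) -> Tuple[Counter, Counter, int]:
    context_counts: Counter = Counter()  # C(w_{i-1})
    bigram_counts: Counter = Counter()   # C(w_{i-1}, w_i)
    vocab = set()
    for sentence in corpus:
        tokens = tokenize(sentence)
        context_counts.update(tokens[:-1])
        bigram_counts.update(zip(tokens[:-1], tokens[1:]))
        vocab.update(tokens[1:])  # every predictable token, including </s>
    return context_counts, bigram_counts, len(vocab)

def sentence_logprob(sentence: str, context_counts: Counter,
                     bigram_counts: Counter, vocab_size: int) -> float:
    # Sum of log P(w_i | w_{i-1}) with add-one smoothing, so an unseen
    # bigram such as ("fifteen", "minuets") gets a small nonzero probability.
    tokens = tokenize(sentence)
    logp = 0.0
    for prev, word in zip(tokens[:-1], tokens[1:]):
        p = (bigram_counts[(prev, word)] + 1) / (context_counts[prev] + vocab_size)
        logp += math.log(p)
    return logp

# Hypothetical toy training data, for illustration only.
corpus = [
    "the office is about fifteen minutes from my house",
    "it takes fifteen minutes to get there",
]
model = train_bigram(corpus)
good = sentence_logprob("The office is about fifteen minutes from my house", *model)
typo = sentence_logprob("The office is about fifteen minuets from my house", *model)
print(good > typo)  # True
```

The two sentences share every bigram except the two around the misspelled word. The comparison therefore comes down to P(minutes | fifteen) · P(from | minutes) = 3/17 · 2/17 versus P(minuets | fifteen) · P(from | minuets) = 1/17 · 1/15. This toy setup does not map unknown words to an `<unk>` token, so scores for out-of-vocabulary words such as "minuets" are only approximately normalized. It is good enough for ranking candidates, but not for reporting calibrated probabilities.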