SLIDE 1

1

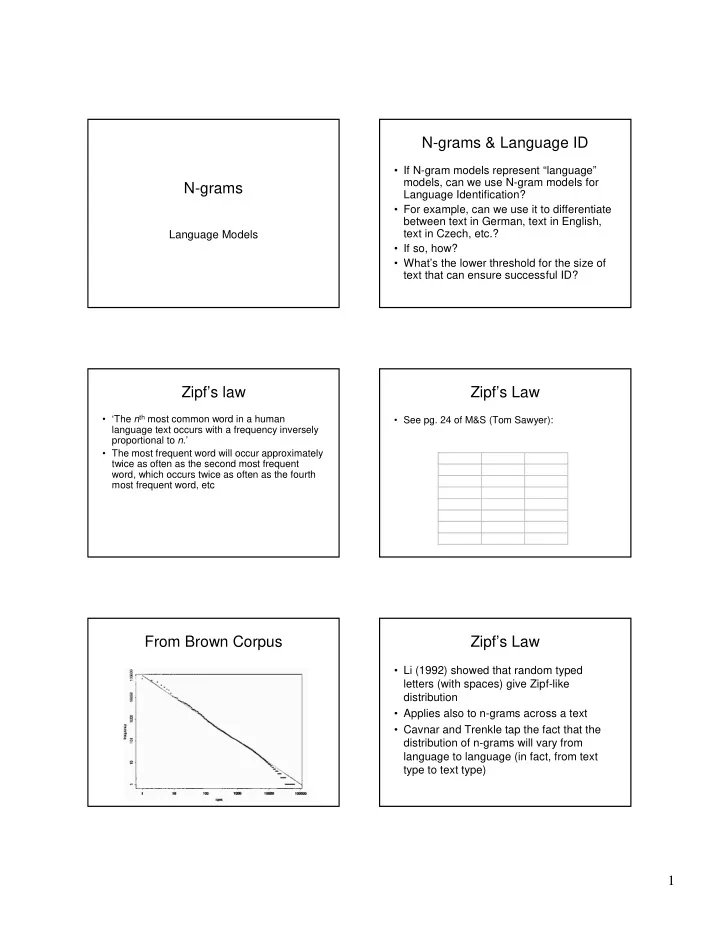

N-grams

Language Models

N-grams & Language ID

- If N-gram models represent “language” models, can we use them for language identification?

- For example, can we use them to tell apart text in German, English, Czech, etc.?

- If so, how?

- What is the minimum amount of text needed for reliable identification?
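One possible answer is to train a character-bigram model for each language and label new text with the language whose model gives it the highest probability. This is only a sketch under stated assumptions, and the slides may go on to present a different method. The names `CharBigramModel`, `identify`, and `ALPHABET_SIZE` are hypothetical. The two one-line training samples are toy data.

```python
import math
from collections import Counter

# Assumption: one fixed alphabet size shared by every model, used for add-one smoothing.
ALPHABET_SIZE = 30


def char_bigrams(text):
    """Return the character bigrams of text, lower-cased and padded with spaces."""
    padded = f" {text.lower()} "
    return [padded[i:i + 2] for i in range(len(padded) - 1)]


class CharBigramModel:
    """Add-one-smoothed character bigram model trained on a sample of one language."""

    def __init__(self, training_text):
        bigrams = char_bigrams(training_text)
        self.bigram_counts = Counter(bigrams)
        # How often each character occurs as the first half of a bigram.
        self.context_counts = Counter(bg[0] for bg in bigrams)

    def log_prob(self, text):
        """Sum of log P(b | a) over the bigrams ab of text (unseen counts default to 0)."""
        total = 0.0
        for bg in char_bigrams(text):
            numerator = self.bigram_counts[bg] + 1
            denominator = self.context_counts[bg[0]] + ALPHABET_SIZE
            total += math.log(numerator / denominator)
        return total


def identify(text, models):
    """Return the language name whose model assigns text the highest log-probability."""
    return max(models, key=lambda name: models[name].log_prob(text))


# Hypothetical toy training samples; a real system would use far more text per language.
models = {
    "english": CharBigramModel("the cat and the dog"),
    "german": CharBigramModel("der hund und die katze"),
}

print(identify("the cat", models))
print(identify("und die", models))
```

Training samples this small make the decision fragile. How much training and test text a reliable decision needs is the threshold question raised above.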

Zipf’s Law

- ‘The nth most common word in a human language text occurs with a frequency inversely proportional to n.’

- So the most frequent word occurs about twice as often as the second most frequent word, which in turn occurs about twice as often as the fourth most frequent word, and so on.
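Writing the quoted law as a formula makes the “twice as often” step explicit. In the formula below, f(n) is the frequency of the n-th ranked word and C is a constant that depends on the text. Both symbols are notation introduced here, not taken from the slides.

```latex
f(n) = \frac{C}{n}
\quad\Longrightarrow\quad
\frac{f(1)}{f(2)} = \frac{C}{C/2} = 2,
\qquad
\frac{f(2)}{f(4)} = \frac{C/2}{C/4} = 2,
\qquad
n \cdot f(n) = C .
```

The last identity says that rank times frequency should stay roughly constant. A rank/frequency table can be checked against this directly.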

Zipf’s Law

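- See p. 24 of Manning & Schütze (M&S), word counts from Tom Sawyer:

| Word | Frequency | Rank |
|------|-----------|------|
| the | 3332 | 1 |
| and | 2972 | 2 |
| a | 1775 | 3 |
| he | 877 | 10 |
| but | 410 | 20 |
| be | 294 | 30 |
| there | 222 | 40 |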
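Zipf’s law predicts that frequency × rank stays roughly constant. The sketch below runs that check over the Tom Sawyer rows. The list name `TOM_SAWYER` and the helper `zipf_products` are hypothetical. The numbers are the word counts listed for Tom Sawyer in M&S.

```python
# (word, frequency f, rank r) for the Tom Sawyer word counts.
TOM_SAWYER = [
    ("the", 3332, 1),
    ("and", 2972, 2),
    ("a", 1775, 3),
    ("he", 877, 10),
    ("but", 410, 20),
    ("be", 294, 30),
    ("there", 222, 40),
]


def zipf_products(rows):
    """Return (word, f * r) pairs; Zipf's law predicts these are roughly equal."""
    return [(word, freq * rank) for word, freq, rank in rows]


for word, product in zipf_products(TOM_SAWYER):
    print(f"{word:>6} {product}")
```

For ranks 10 to 40, the products fall between 8200 and 8880. The top three ranks give noticeably smaller values, so the fit is only approximate at the very top of the ranking.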

Figure: from the Brown Corpus

Zipf’s Law

- Li (1992) showed that randomly typed letters (with spaces) give a Zipf-like distribution of word frequencies

- The law also applies to n-grams across a text

- Cavnar and Trenkle tap the fact that the