

KOLKATA Tier-2@Alice Grid
ALICE GRID & Kolkata Tier-2
• Site Name: IN-DAE-VECC-01 & IN-DAE-VECC-02
• VO: ALICE
• City: Kolkata
• Country: India
Vikas Singhal, VECC, Kolkata

Events at LHC
• Luminosity: $10^{34}\,\mathrm{cm^{-2}\,s^{-1}}$
• Bunch crossing rate: 40 MHz, i.e. one crossing every 25 ns
• 20 events overlaying per crossing
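
The figures on this slide are linked by simple arithmetic: a 40 MHz crossing rate corresponds to a 25 ns spacing, and 20 overlaid events per crossing gives the event rate per second. The short Python sketch below only re-derives those numbers; the variable names are illustrative and not taken from the presentation.

```python
# Figures quoted on the slide
bunch_crossing_rate_hz = 40e6   # 40 MHz
events_per_crossing = 20        # "20 events overlaying"

# Time between consecutive bunch crossings, in nanoseconds
crossing_interval_ns = 1e9 / bunch_crossing_rate_hz

# Overlaid events produced per second at this rate
events_per_second = bunch_crossing_rate_hz * events_per_crossing

print(f"{crossing_interval_ns:.0f} ns between crossings")
print(f"{events_per_second:.1e} events per second")
```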

The Grid Computing Model
[Diagram: tiered hierarchy with the Tier 0 centre at CERN; Tier 1 centres (UK, USA, France, Italy, Germany, Japan, Scandinavia); Tier 2 labs and universities (Lab a, Lab b, Lab c, Lab m, Uni a, Uni b, Uni n, Uni x, Uni y); Tier 3 physics departments; desktops. Experiments labelled: CMS, ATLAS, LHCb.]

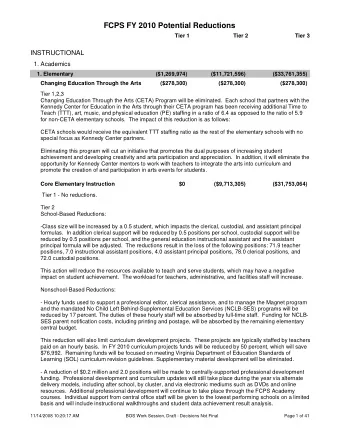

ALICE computing model
RAW data delivered by the DAQ undergo calibration and reconstruction, which produce 3 kinds of objects for each event:
1. ESD object
2. AOD object
3. Tag object
This is done at the Tier-0 site.
• Further reconstruction and calibration of RAW data will be done at Tier 1 and Tier 2.
• The generation, reconstruction, storage and distribution of Monte-Carlo simulated data will be the main task of Tier 1 and Tier 2.
• DPD (Derived Physics Data) objects will be processed in Tier 3 and Tier 4.
[Diagram labels, top to bottom: Online System; Online Farm; ~40 Gb/s; Tier 0, CERN Computer Center; 10 Gb/s; Tier 1 regional centers (Germany, Italy, France, APROC Taiwan); 1-10 Gb/s; Tier 2 centers, including Kolkata; 155/622 Mb/s; Tier 3 institutes; 100-1000 Mb/s; Tier 4, physics data cache]

ALICE Setup (LHC Utilization -- ALICE)
• Sub-detectors labelled: HMPID, TOF, TRD, TPC, PMD, ITS, Muon Arm, PHOS
• Size: 16 x 26 meters
• Weight: 10,000 tons
• Indian contribution to ALICE: PMD, Muon Arm

The ALICE collaboration & detector
• ALICE Collaboration: ~1/2 the size of ATLAS or CMS, ~2x LHCb
• ~1100 people, 30 countries, 80 institutes
• Total weight: 10,000 t
• Overall diameter: 16.00 m
• Overall length: 25 m
• Magnetic field: 0.4 Tesla

Data volumes
• RAW data: 2.5 PB/year
• Two distinct periods:
  • p+p (~7.5 months)
  • Pb+Pb (~40 days)
• Reconstructed and simulated data:
  • 1.5 PB: first-level RAW filtering (ESDs)
  • 200 TB: second-level RAW filtering (AODs)
  • 1 PB of simulated data
• User-generated data: ~500 TB
• Total: ~5 PB of data per year (without replicas)
• Replication: 2x RAW, 3x ESD/AODs, 2x user files
Taken from L. Betev's slides at the T1-T2 Meeting at Karlsruhe, Jan 2012
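
As a rough cross-check of the totals, the itemized volumes can be summed with and without the quoted replication factors. This is only a sketch: the slide gives no replication factor for simulated data, so a factor of 1 is assumed here, and the dictionary keys are illustrative. As transcribed, the items add up to about 5.7 PB, somewhat above the rounded "~5 PB" quoted on the slide.

```python
# Yearly volumes in PB, as listed on the slide
volumes_pb = {"RAW": 2.5, "ESD": 1.5, "AOD": 0.2, "simulated": 1.0, "user": 0.5}

# Replication factors from the slide (2x RAW, 3x ESD/AODs, 2x user files).
# ASSUMPTION: no factor is stated for simulated data, so 1 is used here.
replicas = {"RAW": 2, "ESD": 3, "AOD": 3, "simulated": 1, "user": 2}

total_without_replicas = sum(volumes_pb.values())
total_with_replicas = sum(volumes_pb[k] * replicas[k] for k in volumes_pb)

print(f"Without replicas: {total_without_replicas:.1f} PB/year")
print(f"With replicas:    {total_with_replicas:.1f} PB/year")
```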

Processing
• RAW data reconstruction: ~10K CPU cores
• MC processing: ~15K CPU cores
• User analysis: ~7K CPU cores (450 distinct users)
• ~40 Mio jobs per year
• ~1.3 jobs completed every second
• ½ production, ½ user jobs
• 200 Mio files per year
Taken from L. Betev's slides at the T1-T2 Meeting at KIT, Karlsruhe, Jan 2012
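
The per-second job rate follows from the yearly job count, and the same inputs give the average number of files per job and the total core count. The sketch below re-derives these from the slide's figures, assuming a 365-day year; the names are illustrative.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600  # assumes a 365-day year

jobs_per_year = 40e6    # ~40 Mio jobs per year
files_per_year = 200e6  # 200 Mio files per year
cores = {
    "RAW reconstruction": 10_000,
    "MC processing": 15_000,
    "user analysis": 7_000,
}

jobs_per_second = jobs_per_year / SECONDS_PER_YEAR
files_per_job = files_per_year / jobs_per_year
total_cores = sum(cores.values())

print(f"{jobs_per_second:.2f} jobs completed per second")
print(f"{files_per_job:.0f} files per job on average")
print(f"{total_cores} CPU cores in total")
```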

KOLKATA TIER-2 @ ALICE

ALICE Sites on MonALISA
72 active computing sites
[Map with regions labelled Europe, Africa, South America, Asia and North America]

Why Tier 2?
1. Tier-2 is the lowest level accessible to the entire collaboration.
2. Each sub-detector of ALICE has to be associated with at least one Tier-2 centre because of the large volume of calibration and simulated data.
3. PMD is one of the important sub-detectors of ALICE.
4. We are solely responsible for PMD, from conception to commissioning.

Grid Site as per WLCG & Experiment Requirements
• Central services: WMS, MyProxy, VOMS, ...
• Site BDII
• CREAM-CE with WNs (more and more WNs)
• SE (Pure XrootD) with disks (more and more disks)
• LCG-UI

KOLKATA or General Site
[Diagram; recoverable labels grouped by role]
• Network: local and global network / fiber line
• Central services: WMS, MyProxy, etc.
• Site services: Site BDII, CREAM-CE, PBS server, DPM server, VO-BOX, UI server, NFS server, DNS servers
• Storage (PureXrootD): XrootD redirector, XrootD disk servers; new SAN box, old NAS, older NAS, even older DAS, a few disk arrays (more and more arrays)
• Compute: 64-bit HP and Dell blade servers, IBM blade enclosures, 1U & 2U 32- or 64-bit servers, tower servers
• Tier-3 cluster
• Support servers: management server, monitoring server, HA server, installation/DHCP server, etc.
• Infrastructure: cooling, UPS, fire alarm, access control, etc.

Frontend Components of the Site & Installation
Components: VO-BOX, Site BDII, LCG-CE, CREAM-CE, SE (Pure XrootD), LCG-UI
• Grid middleware meta-packages are installed through YUM and configured through YAIM.
• The middleware changes from time to time, e.g. from gLite to EMI (follow the manual).
• During the Kolkata site installation and configuration we ran into RPM dependency problems with Java, security packages, etc.
• The community and the mailing lists help a lot. For most problems we got the solution from the mailing list.
• Thanks to APROC, Taiwan for helping at each stage.

Middleware installed on the IN-DAE-VECC-02 Site
1. Installed the SLC 5.8 (x86_64) operating system on x86_64 machines.
2. Upgrading the middleware packages below to EMI middleware:
• glite-VOBOX: grid01.tier2-kol.res.in
• CREAM-CE (64bit): gridce02.tier2-kol.res.in
• glite-BDII
• Pure XROOTD redirector as Storage Element: dcache-server.tier2-kol.res.in
• glite-WN (64bit) for 79 worker nodes (476 cores): wn045-wn123.internal.tier2-kol.res.in
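
The worker-node entry is a compact host range. The sketch below expands it and checks that it matches the 79 nodes quoted on the slide. It assumes that `wn045-wn123.internal.tier2-kol.res.in` means one host per number with a three-digit suffix; `expand_host_range` is a hypothetical helper, not part of any grid middleware.

```python
def expand_host_range(prefix, first, last, width, domain):
    """Expand a numbered host range into fully qualified names.

    ASSUMPTION: hosts are named <prefix><zero-padded number>.<domain>.
    """
    return [f"{prefix}{n:0{width}d}.{domain}" for n in range(first, last + 1)]


worker_nodes = expand_host_range("wn", 45, 123, 3, "internal.tier2-kol.res.in")

total_cores = 476  # quoted on the slide
print(len(worker_nodes), "worker nodes")
print(worker_nodes[0], "...", worker_nodes[-1])
print(f"{total_cores / len(worker_nodes):.1f} cores per node on average")
```

The average is not a whole number, which is consistent with the mixed dual-core and quad-core hardware listed elsewhere in the presentation.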

Backend Components of the Site
• Router & Switch: 2 networks, one public network and one private network.
• Storage Server: common shared space, mounted over NFS.
• PBS Server: CE and PBS batch scheduler on one server. Configured a firewall (through iptables) and set up NAT on it.
• Domain Name Server: the DNS server is a critical component. We have 2 redundant name servers, Naamak & Suchak, for high availability.
• Tier-3 Cluster: separate cluster for local users, with interactive and non-interactive nodes.
• Time Server: configured NTP.
• Monitoring Server: configured MRTG (network traffic monitoring) and a cluster monitoring tool.
• Installer: network installation and automated configuration with Quattor-like tools.

Preventive maintenance is carried out once a year.

Kolkata Tier-2 centre logical diagram
[Diagram; recoverable labels]
• Uplink: Internet at 300 Mbps, router, 1 Gbps fiber backbone (SINP)
• Public network: 144.16.112.xx/27, behind a switch
• Servers: naamak, suchak, Installer, grid01, Backup-server, grid, Grid-peer, gridse001, gridce02, dcache-server
• Storage: 130 TB; 4 Xrootd disk servers with 230 TB of IBM and HP SAN; 25 TB
• IN-DAE-VECC-02 site: wn045 to wn123, Dell and HP blade server computing nodes (64-bit machines), private network 192.168.x.x, Switch-1 and Switch-2 (stand-by)
• GRID-PEER Tier-3 cluster: wn001 to wn025 (25 nodes), Dell and Wipro blades with multi-core Xeon 3.0 GHz (32- and 64-bit machines), private network 192.168.x.x, Switch-1 and Switch-2 (stand-by)
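
The diagram pairs a small public /27 network with private 192.168.x.x networks for the worker nodes. The sketch below shows, using Python's standard `ipaddress` module, how few addresses a /27 provides, which is one reason the worker nodes sit on private ranges. The slide elides the last octet of the public prefix, so the documentation prefix `192.0.2.0/27` is used as a hypothetical stand-in, and treating "192.168.x.x" as `192.168.0.0/16` is likewise an assumption.

```python
import ipaddress

# HYPOTHETICAL stand-in for the site's public /27 (the real prefix is elided
# on the slide); 192.0.2.0/24 is reserved for documentation examples.
public_net = ipaddress.ip_network("192.0.2.0/27")

# ASSUMPTION: "192.168.x.x" is read as the whole 192.168.0.0/16 private block.
private_net = ipaddress.ip_network("192.168.0.0/16")

usable_public_hosts = len(list(public_net.hosts()))

print(public_net.num_addresses, "addresses in a /27")
print(usable_public_hosts, "usable host addresses in a /27")
print("private block is RFC 1918 space:", private_net.is_private)
```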

ALICE Tier-2 Grid: Started in 2002
• Operating system: Scientific Linux 3.05
• Middleware: ALICE environment with PBS as batch system
• Hardware (CPU, disk): 1 x dual Xeon, 4 GB compute node; 2 x dual Xeon, 2 GB WNs; 2 x 80 GB disk space
• Bandwidth: 512 Kbps shared
[Diagram labels: CERN, 512 Kbps, Ethernet, bandwidth]
S. K. Pal & T. Samanta

From 2 Cores to 700 Cores
• 2002: started with 2 desktop machines
• 2003: 2 tower-like servers
• 2004: 9 HP 1U servers
• 2006: 17 Wipro 1U servers, single core
• 2008: 40 HP blades, dual core
• 2009: 8 HP blades, quad core
• 2011: 32 Dell blades, dual processor, dual core
• 2012: GPU server with Tesla 2070 with 448 cores

Kolkata Tier-2 on MonALISA
[MonALISA views from 2007, 2009, 2010 and 2011]

From 512 MB Disk to 300 TB Disk
• 2002: started with 512 MB in a desktop machine
• 2003: 40 GB in tower-like servers as DAS
• 2004: 400 GB in HP MSA 500
• 2006: 2 TB Wipro NAS
• 2008: 108 TB HP EVA SAN
• 2009: 25 TB iSCSI
• 2011: 200 TB IBM DS 5100
• 2012: 2 TB hard disk in the GPU server

[Images labelled 2006, 2008, 2010 and 2012]

From 128 Kbps to 1 Gbps
• 2002: started with a 128 Kbps shared link
• 2003: 512 Kbps
• 2004: 2 Mbps dedicated link
• 2006: 4 Mbps from Bharti
• 2008: 30 Mbps from Reliance
• 2009: 100 Mbps from VSNL (ERNET)
• 2011: 300 Mbps from NKN
• 2012: upgrading to 1 Gbps
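
To put the bandwidth timeline in perspective, the sketch below expresses each link as a multiple of the 2002 link. It assumes decimal units (1 Mbps = 1000 Kbps) and treats the 2012 figure as the planned upgrade rather than a deployed link; the names are illustrative.

```python
# Link speeds from the timeline, in Kbps.
# ASSUMPTION: decimal units, 1 Mbps = 1000 Kbps and 1 Gbps = 1,000,000 Kbps.
link_kbps = {
    2002: 128,
    2003: 512,
    2004: 2_000,
    2006: 4_000,
    2008: 30_000,
    2009: 100_000,
    2011: 300_000,
    2012: 1_000_000,  # upgrade in progress at the time of the talk
}

baseline = link_kbps[2002]
for year, kbps in link_kbps.items():
    print(f"{year}: {kbps / baseline:8.1f}x the 2002 link")

growth_2012 = link_kbps[2012] / baseline
```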

Efficient Cooling: Concept and Implementation
• Hot and cool air are separated.
• For air separation, a cold aisle containment is created.
• The cold aisle containment is the least accessible area.
• Cool only the hardware racks, not humans, walls, etc.
• Human intervention in the cold aisle containment is restricted.
• All management and monitoring of the servers and storage is done from outside the cold aisle containment.
• All power and Ethernet cables are also routed from outside the cold aisle containment.
• The temperature gradient between the cold and hot aisles is 5 °C.

Kolkata Tier-2 after renovation
