2/21/19 1

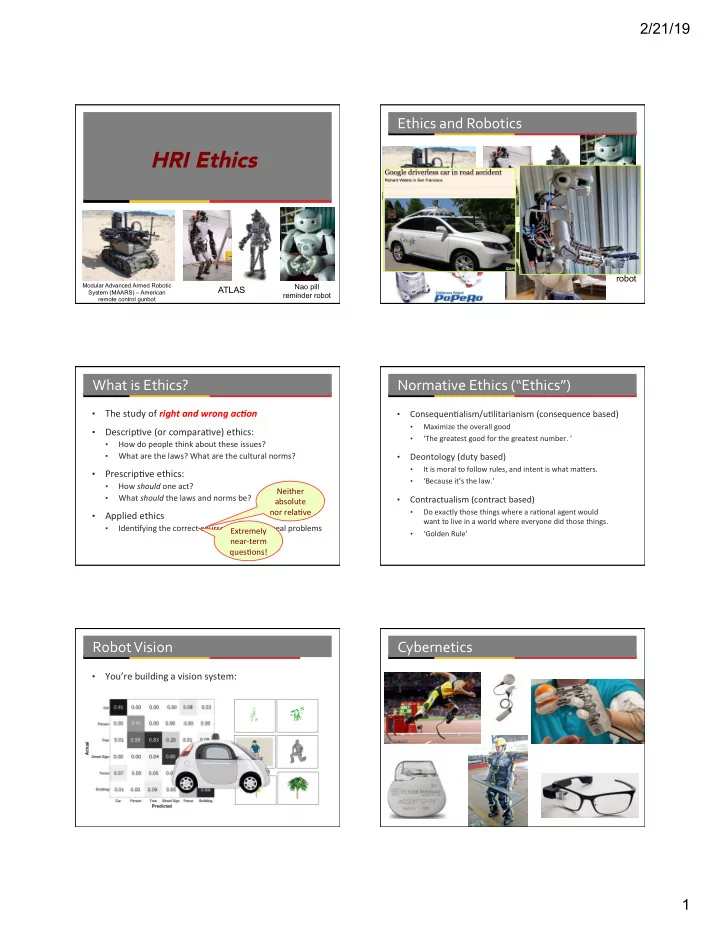

HRI Ethics

Modular Advanced Armed Robotic System (MAARS) – American remote control gunbot

ATLAS

Nao pill reminder robot

Ethics and Robotics

ATLAS

Nao pill reminder system

Modular Advanced Armed Robotic System (MAARS) – American remote control gunbot

Robear transfer robot

What is Ethics?

- The study of right and wrong action

- Descriptive (or comparative) ethics:

- How do people think about these issues?

- What are the laws? What are the cultural norms?

- Prescriptive ethics:

- How should one act?

- What should the laws and norms be?

- Applied ethics

- Identifying the correct course of action for real problems

Neither absolute nor relative

Extremely near-term questions!

Normative Ethics (“Ethics”)

- Consequentialism/utilitarianism (consequence based)

- Maximize the overall good

- ‘The greatest good for the greatest number.’

- Deontology (duty based)

- It is moral to follow rules, and intent is what matters.

- ‘Because it’s the law.’

- Contractualism (contract based)

- Do exactly those things where a rational agent would want to live in a world where everyone did those things.

- ‘Golden Rule’

Robot Vision

- You’re building a vision system: