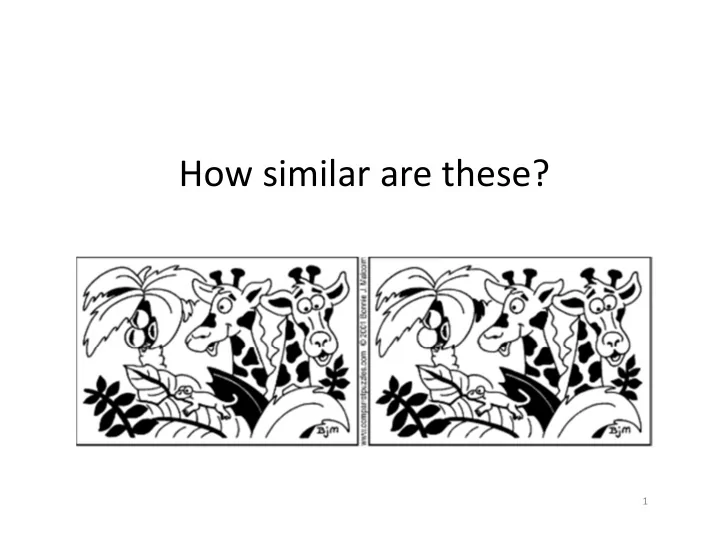

How similar are these?

What's the Problem?

Finding similar items with respect to some distance metric.

Two variants of the problem:
– Offline: extract all similar pairs of objects from a large collection.
– Online: is this object …


[Figure: similarity matrix over documents Doc 1 to N; each entry gives the degree of similarity between doc i and doc j.]


Let:
– M11 = # rows where both elements are 1
– M00 = # rows where both elements are 0
– M01 = # rows where A = 0, B = 1
– M10 = # rows where A = 1, B = 0
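As a minimal sketch, the four counts can be tallied directly from two 0/1 columns. The Jaccard similarity of the underlying sets is then M11 / (M01 + M10 + M11), because M00 rows belong to neither set. The function names and the example columns below are illustrative, not from the slides.

```python
from collections import Counter


def row_type_counts(col_a, col_b):
    """Count M11, M00, M01, M10 for two equal-length 0/1 columns A and B."""
    counts = Counter(f"M{a}{b}" for a, b in zip(col_a, col_b))
    return {key: counts.get(key, 0) for key in ("M11", "M00", "M01", "M10")}


def jaccard_from_counts(counts):
    """Rows where both are 1, divided by rows where at least one is 1."""
    union = counts["M01"] + counts["M10"] + counts["M11"]
    if union == 0:
        raise ValueError("Jaccard similarity is undefined for two empty sets")
    return counts["M11"] / union


# Hypothetical columns: 3 rows of M00, 1 of M01, 2 of M11, 1 of M10.
col_a = [0, 0, 0, 1, 1, 0, 1]
col_b = [0, 0, 1, 1, 1, 0, 0]
counts = row_type_counts(col_a, col_b)
print(counts)                       # {'M11': 2, 'M00': 3, 'M01': 1, 'M10': 1}
print(jaccard_from_counts(counts))  # 0.5
```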


[Figure: input matrix and permuted matrix for columns A and B; in permuted order, rows 1 to 7 are of type M00, M00, M01, M11, M11, M00, M10.]

h(A) = 4, h(B) = 3
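Under the usual reading of this example, the minhash value of a column is the (1-based) index of the first row, in permuted order, where that column has a 1. The sketch below rebuilds columns A and B from the seven permuted row types (M00, M00, M01, M11, M11, M00, M10) and reproduces h(A) = 4 and h(B) = 3. The helper name is hypothetical.

```python
def minhash_first_one(column):
    """Return the 1-based index of the first row (in permuted order) holding a 1."""
    for index, bit in enumerate(column, start=1):
        if bit == 1:
            return index
    return None  # the column has no 1s


# Row types in permuted order; "Mab" means A = a and B = b in that row.
row_types = ["M00", "M00", "M01", "M11", "M11", "M00", "M10"]
col_a = [int(t[1]) for t in row_types]
col_b = [int(t[2]) for t in row_types]

print(minhash_first_one(col_a), minhash_first_one(col_b))  # 4 3
```

Why this estimates similarity: under a random permutation, the first row holding a 1 in either column is equally likely to be any of the M01, M10, or M11 rows. The two minhash values are equal exactly when that row is an M11 row. So P[h(A) = h(B)] = M11 / (M01 + M10 + M11), which is the Jaccard similarity.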


Comparison between two sets (original matrix) becomes comparison between two columns of minhash values (signature matrix)

[Figure: panels showing the input matrix, the permutations, the minhash signatures, and the resulting similarities.]

The similarity between signatures of two columns is given by the fraction of hash functions in which they agree
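A minimal sketch of that comparison: count the signature positions (one per hash function) where two columns hold the same minhash value, then divide by the signature length. The signature values in the usage line are hypothetical.

```python
def signature_similarity(sig_a, sig_b):
    """Fraction of hash functions (signature positions) on which two columns agree."""
    if len(sig_a) != len(sig_b) or not sig_a:
        raise ValueError("signatures must be non-empty and of equal length")
    agree = sum(1 for a, b in zip(sig_a, sig_b) if a == b)
    return agree / len(sig_a)


# Positions 1 and 3 agree, positions 2 and 4 differ.
print(signature_similarity([3, 7, 2, 1], [3, 5, 2, 2]))  # 0.5
```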


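Hash functions h(x) = x mod 5 + 1 and g(x) = (2x + 1) mod 5 + 1, applied to row numbers x = 1, …, 5:

| Row x | h(x) | g(x) |
|---|---|---|
| 1 | 2 | 4 |
| 2 | 3 | 1 |
| 3 | 4 | 3 |
| 4 | 5 | 5 |
| 5 | 1 | 2 |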


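Initialization: set all signature entries to ∞.

For each row, apply all hash functions to the row number. For every column that has a 1 in that row, compare each hash value with the column's current signature entry for that hash function and keep the minimum value.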

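Trace with h(x) = x mod 5 + 1 and g(x) = (2x + 1) mod 5 + 1 (signature entries after each row is processed):

| Hash value | Sig1 | Sig2 |
|---|---|---|
| h(1) = 2 | 2 | ∞ |
| g(1) = 4 | 4 | ∞ |
| h(2) = 3 | 2 | 3 |
| g(2) = 1 | 4 | 1 |
| h(3) = 4 | 2 | 3 |
| g(3) = 3 | 3 | 1 |
| h(4) = 5 | 2 | 3 |
| g(4) = 5 | 3 | 1 |
| h(5) = 1 | 2 | 1 |
| g(5) = 2 | 3 | 1 |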
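A minimal sketch of the row-by-row signature algorithm, using the slide's h and g. The slide's input matrix did not survive extraction. The two-column matrix below is an assumption chosen to agree with the trace: rows 1, 2, and 5, and column 1 of row 3, follow from the trace, while the rest is guessed. No value for row 4 changes the result, because h(4) = g(4) = 5 never beats the current minimum. The final signature matches the last two rows of the trace: Sig1 = (2, 3), Sig2 = (1, 1).

```python
import math


def h(x):
    return x % 5 + 1


def g(x):
    return (2 * x + 1) % 5 + 1


def minhash_signatures(matrix, hash_funcs):
    """Return sig[i][c]: the minimum of hash_funcs[i](row) over rows where column c is 1."""
    n_cols = len(matrix[0])
    sig = [[math.inf] * n_cols for _ in hash_funcs]  # initialization: all entries infinity
    for row_number, row in enumerate(matrix, start=1):
        hashes = [f(row_number) for f in hash_funcs]  # apply all hash functions to the row
        for c, bit in enumerate(row):
            if bit:
                for i, value in enumerate(hashes):
                    sig[i][c] = min(sig[i][c], value)  # keep the minimum value
    return sig


# Hypothetical input matrix (rows 1..5, columns Sig1 and Sig2), consistent with the trace.
matrix = [
    [1, 0],
    [0, 1],
    [1, 1],
    [1, 0],
    [0, 1],
]
print(minhash_signatures(matrix, [h, g]))  # [[2, 1], [3, 1]]
```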


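[Figure: a document ("This course is about big data analytics") becomes a set of strings ("This course is", "course is about", "is about big", "about big data", "big data analytics"). A signature for the set of strings can capture similarity. Signatures of this document and another document falling into the same bucket are “similar”.]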
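The set of strings in the figure appears to be word 3-shingles, that is, runs of three consecutive words. Here is a minimal sketch that assumes whitespace tokenization and k = 3, both of which are assumptions rather than the slide's stated method:

```python
def word_shingles(text, k=3):
    """Return the set of k-word shingles (consecutive k-word runs) of text."""
    words = text.split()
    return {" ".join(words[i:i + k]) for i in range(len(words) - k + 1)}


shingles = word_shingles("This course is about big data analytics")
print(sorted(shingles))
```

The result is a set, so repeated phrases count once. That matches the set-based Jaccard and minhash view used throughout.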


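Minhash signature matrix (rows 1 to k are minhash values; columns are documents D1 to DN):

| Minhash | D1 | D2 | D3 | D4 | … | DN |
|---|---|---|---|---|---|---|
| 1 | 3 | 3 | 2 | 2 | … | 3 |
| 2 | 7 | 7 | 5 | 5 | … | 7 |
| … | … | … | … | … | … | … |
| k−1 | 2 | 2 | 2 | 2 | … | 2 |
| k | 1 | 1 | 1 | 1 | … | 2 |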

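Minhash signature matrix, with each column hashed by a hash function F(column) into buckets:

| Minhash | D1 | D2 | D3 | D4 | … | DN |
|---|---|---|---|---|---|---|
| 1 | 3 | 3 | 2 | 2 | … | 3 |
| 2 | 7 | 7 | 5 | 5 | … | 7 |
| … | … | … | … | … | … | … |
| k−1 | 2 | 2 | 2 | 2 | … | 2 |
| k | 1 | 1 | 1 | 1 | … | 1 |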
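A minimal sketch of hashing whole columns: the tuple of all of a column's minhash values serves as the bucket key, so only columns with identical signatures share a bucket. For illustration, the signatures keep only the rows the slide shows (1, 2, k−1, k). That truncation is an assumption, since the elided middle rows are unknown.

```python
from collections import defaultdict


def bucket_by_full_signature(signatures):
    """Group documents whose entire signature columns are identical."""
    buckets = defaultdict(list)
    for doc, sig in signatures.items():
        buckets[tuple(sig)].append(doc)  # the whole column is the hash key
    return list(buckets.values())


# Hypothetical truncated signatures: rows 1, 2, k-1, k from the first matrix.
signatures = {
    "D1": [3, 7, 2, 1],
    "D2": [3, 7, 2, 1],
    "D3": [2, 5, 2, 1],
    "D4": [2, 5, 2, 1],
    "DN": [3, 7, 2, 2],
}
print(bucket_by_full_signature(signatures))  # [['D1', 'D2'], ['D3', 'D4'], ['DN']]
```

This requirement is strict: a single differing value sends a document to a different bucket. In this truncated toy, changing DN's last value from 2 to 1, as in the second matrix, would put DN in the same bucket as D1 and D2.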


– Hash on all 12 minhash values: not similar.
– Partition the 12 minhash values into two: still not similar.
– Partition the 12 minhash values into four: potentially similar (≥ 50% similar).
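A minimal sketch of the partitioning idea. Two 12-value signatures are split into 1, 2, or 4 equal groups, and the pair counts as "potentially similar" if any group is identical. Both signatures are hypothetical, chosen so that only the 4-group split finds a matching group.

```python
def any_group_matches(sig_a, sig_b, num_groups):
    """True if, after splitting both signatures into num_groups equal parts, some part is identical."""
    if len(sig_a) != len(sig_b) or len(sig_a) % num_groups != 0:
        raise ValueError("signatures must have equal length divisible by num_groups")
    r = len(sig_a) // num_groups  # values per group
    return any(
        sig_a[i * r:(i + 1) * r] == sig_b[i * r:(i + 1) * r]
        for i in range(num_groups)
    )


sig_a = [1, 5, 3, 2, 8, 4, 7, 7, 1, 6, 2, 9]
sig_b = [1, 5, 3, 9, 8, 4, 7, 1, 1, 6, 2, 0]
for groups in (1, 2, 4):
    print(groups, any_group_matches(sig_a, sig_b, groups))
# 1 False
# 2 False
# 4 True
```

Smaller groups are easier to match exactly, so more groups means more candidate pairs.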


– Hash on the concatenated values of each partition (this has essentially the same effect as requiring the columns to agree on all of those values).
– All documents with the same hash value are bucketed together.
– Output all pairs within each bucket.


[Figure annotations: documents 1 and 3 potentially similar; documents 5 and 7 potentially dissimilar; documents 1 and 3 potentially similar.]

… are similar over at least 2k signatures.
– The number of buckets is set as large as possible to minimize collisions.
– Candidate column pairs are those that hash to the same bucket for more than 1 set.
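A minimal sketch of the bucketing scheme, under these assumptions. Each signature is split into `num_bands` equal sets of consecutive values. Each set uses its own bucket table, keyed by the exact tuple of its values, which amounts to an unlimited number of buckets and no accidental collisions. A pair becomes a candidate once it shares a bucket in at least `min_bands` sets. The parameter names are illustrative. `min_bands=1` is the common "at least one set" rule. `min_bands=2` corresponds to the slide's "more than 1 set".

```python
from collections import defaultdict
from itertools import combinations


def lsh_candidate_pairs(signatures, num_bands, min_bands=1):
    """signatures: dict doc_id -> list of minhash values (all the same length)."""
    n = len(next(iter(signatures.values())))
    if any(len(s) != n for s in signatures.values()) or n % num_bands != 0:
        raise ValueError("signatures must share a length divisible by num_bands")
    r = n // num_bands  # values per set (band)
    collisions = defaultdict(int)  # (doc_a, doc_b) -> number of sets where they share a bucket
    for band in range(num_bands):
        buckets = defaultdict(list)  # fresh buckets per set
        for doc, sig in signatures.items():
            key = tuple(sig[band * r:(band + 1) * r])  # the set's concatenated values
            buckets[key].append(doc)
        for docs in buckets.values():
            for pair in combinations(sorted(docs), 2):  # output all pairs within each bucket
                collisions[pair] += 1
    return {pair for pair, count in collisions.items() if count >= min_bands}


# Hypothetical 4-value signatures split into 2 sets of 2 values.
sigs = {
    "D1": [3, 7, 2, 1],
    "D2": [3, 7, 2, 1],
    "D3": [2, 5, 2, 1],
    "D4": [2, 5, 9, 9],
}
print(sorted(lsh_candidate_pairs(sigs, num_bands=2)))
print(sorted(lsh_candidate_pairs(sigs, num_bands=2, min_bands=2)))  # [('D1', 'D2')]
```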


[Figure: probability of hashing to the same bucket versus similarity s of two sets, with the false-negative and false-positive regions marked.]


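[Figure: probability of hashing to the same bucket versus similarity s of two sets, for n = 16 bands of k = 4 rows.]

The similarity threshold is approximately $t \approx (1/n)^{1/k}$. With n = 16 and k = 4, $t = (1/16)^{1/4} = 1/2$.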
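A short derivation of the curve and the threshold approximation. It assumes, as holds for minhash with independent random permutations, that each signature row agrees with probability s independently of the others, and that the signature has n bands of k rows:

```latex
\begin{aligned}
\Pr[\text{a given band agrees in all } k \text{ rows}] &= s^k \\
\Pr[\text{a given band differs somewhere}] &= 1 - s^k \\
\Pr[\text{all } n \text{ bands differ}] &= (1 - s^k)^n \\
\Pr[\text{candidate pair}] &= 1 - (1 - s^k)^n \\
\text{at } s = (1/n)^{1/k}\text{, i.e. } s^k = 1/n: \quad
\Pr[\text{candidate pair}] &= 1 - (1 - 1/n)^n \approx 1 - 1/e \approx 0.63 \\
\text{for } n = 16,\ k = 4,\ s = 1/2: \quad 1 - (15/16)^{16} &\approx 0.64
\end{aligned}
```

At $s \approx (1/n)^{1/k}$ the curve has already risen from near 0 to roughly two-thirds. That is why this value works as a rough threshold.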


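Probability that two sets with similarity s become a candidate pair, $1 - (1 - s^k)^n$. These values correspond to n = 20 bands of k = 5 rows.

| s | $1 - (1 - s^k)^n$ |
|---|---|
| 0.2 | 0.006 |
| 0.3 | 0.047 |
| 0.4 | 0.186 |
| 0.5 | 0.470 |
| 0.6 | 0.802 |
| 0.7 | 0.975 |
| 0.8 | 0.9996 |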
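A small sketch that evaluates $1 - (1 - s^k)^n$ and the threshold approximation $(1/n)^{1/k}$. With n = 20 and k = 5, the rounded outputs match the tabulated values. With n = 16 and k = 4, the threshold comes out at about 1/2. The function names are illustrative.

```python
def candidate_probability(s, n, k):
    """Probability that a pair with similarity s shares a bucket in at least one of n bands of k rows."""
    return 1 - (1 - s ** k) ** n


def approx_threshold(n, k):
    """Approximate similarity threshold t ~ (1/n)**(1/k)."""
    return (1 / n) ** (1 / k)


for s in (0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8):
    print(f"{s:.1f}  {candidate_probability(s, n=20, k=5):.4f}")

print(approx_threshold(16, 4))  # approximately 0.5
```

Raising k pushes the curve right, making it stricter. Raising n pushes it left, making it more permissive. Together they set where the steep part of the S-curve falls.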


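A family $H$ of hash functions is $(d_1, d_2, p_1, p_2)$-sensitive if, for any $x$ and $y$:
– If $d(x, y) \le d_1$, then the probability over all $h$ in $H$ that $h(x) = h(y)$ is at least $p_1$.
– If $d(x, y) \ge d_2$, then the probability over all $h$ in $H$ that $h(x) = h(y)$ is at most $p_2$.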
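As a concrete instance, minhash over random permutations gives h(x) = h(y) with probability equal to the Jaccard similarity. For Jaccard distance d = 1 − J, the family is therefore $(d_1, d_2, 1 - d_1, 1 - d_2)$-sensitive. The sketch below checks the collision probability empirically with random permutations of a small universe. The sets, trial count, and seed are arbitrary choices for illustration.

```python
import random


def minhash(elements, permutation):
    """Minhash of a set: the smallest permuted position among its elements."""
    return min(permutation[e] for e in elements)


def estimate_collision_probability(set_a, set_b, universe, trials, seed=0):
    """Fraction of random permutations under which the two minhash values agree."""
    rng = random.Random(seed)
    items = list(universe)
    hits = 0
    for _ in range(trials):
        order = items[:]
        rng.shuffle(order)
        permutation = {item: pos for pos, item in enumerate(order)}
        if minhash(set_a, permutation) == minhash(set_b, permutation):
            hits += 1
    return hits / trials


def jaccard(set_a, set_b):
    return len(set_a & set_b) / len(set_a | set_b)


universe = range(7)
A = {3, 4, 6}
B = {2, 3, 4}
print(jaccard(A, B))  # 0.5
print(estimate_collision_probability(A, B, universe, trials=20000))  # close to 0.5
```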


… $(d_1, d_2, p_1^r, p_2^r)$-sensitive.


– That is, for any p, if p is the probability that a member of H will declare (x, y) to be a candidate pair, then 1 − p is the probability that it will not.
– $(1 - p)^b$ is the probability that none of $h_1, \dots, h_b$ will declare (x, y) a candidate pair.
– $1 - (1 - p)^b$ is the probability that at least one $h_i$ will declare (x, y) a candidate pair, and therefore that H′ will declare (x, y) to be a candidate pair.


AND of r functions: $p_1^r$, $p_2^r$. OR of b functions: $1 - (1 - p_1)^b$, $1 - (1 - p_2)^b$.
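A minimal sketch of the two amplification steps as functions of a single-function match probability p. Applying an r-way AND and then a b-way OR maps p to $1 - (1 - p^r)^b$. The starting pair $(p_1, p_2) = (0.8, 0.4)$ and $r = b = 4$ are hypothetical values chosen for illustration.

```python
def and_construction(p, r):
    """All r independent functions must agree: p**r."""
    return p ** r


def or_construction(p, b):
    """At least one of b independent functions agrees: 1 - (1 - p)**b."""
    return 1 - (1 - p) ** b


p1, p2 = 0.8, 0.4  # hypothetical (d1, d2, p1, p2)-sensitive family
r, b = 4, 4
amplified = [or_construction(and_construction(p, r), b) for p in (p1, p2)]
print([round(x, 3) for x in amplified])  # [0.878, 0.099]
```

The AND step lowers both probabilities and the OR step raises both. Applying them in sequence widens the gap: here 0.8 vs 0.4 becomes roughly 0.88 vs 0.10.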


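Values are truncated (not rounded) to three decimal places.

| p | $p^8$ (ALL must match) |
|---|---|
| 0.2 | 0.000 |
| 0.3 | 0.000 |
| 0.4 | 0.000 |
| 0.5 | 0.003 |
| 0.6 | 0.016 |
| 0.7 | 0.057 |
| 0.8 | 0.167 |
| 0.9 | 0.430 |

| p | $1 - (1 - p)^8$ (at least one match) |
|---|---|
| 0.2 | 0.832 |
| 0.3 | 0.942 |
| 0.4 | 0.983 |
| 0.5 | 0.996 |
| 0.6 | 0.999 |
| 0.7 | 0.999 |
| 0.8 | 0.999 |
| 0.9 | 0.999 |

Two-level combinations:
– All 4 in each group must match; it is enough to have one such group.
– At least one in each group must match; all 4 groups must match.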
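A sketch that recomputes these columns, truncating to three decimals as the tables appear to do. At p = 0.9 the $p^8$ column gives 0.430, since 0.9^8 ≈ 0.4305. The two two-level schemes are read here as 4 groups of 4 functions: "all 4 in each group, one such group" is $1 - (1 - p^4)^4$, and "at least one in each group, all 4 groups" is $(1 - (1 - p)^4)^4$. That group size and count is an assumption based on the labels.

```python
import math


def truncate(x, digits=3):
    """Truncate (not round) x to the given number of decimal places."""
    factor = 10 ** digits
    return math.floor(x * factor) / factor


def all_match(p, n=8):
    return p ** n


def any_match(p, n=8):
    return 1 - (1 - p) ** n


def and_then_or(p, rows=4, groups=4):
    return 1 - (1 - p ** rows) ** groups


def or_then_and(p, rows=4, groups=4):
    return (1 - (1 - p) ** rows) ** groups


ps = [round(0.1 * i, 1) for i in range(2, 10)]  # 0.2 .. 0.9
for p in ps:
    print(p, truncate(all_match(p)), truncate(any_match(p)),
          truncate(and_then_or(p)), truncate(or_then_and(p)))
```

The two-level schemes sit between the extremes. The AND-then-OR scheme stays low for small p and climbs steeply for large p, which is the S-shape the banding technique relies on.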
