1

1

High Performance Computing, High Performance Computing, Computational Grid, and Numerical Libraries Computational Grid, and Numerical Libraries

Jack Dongarra I nnovative Computing Lab University of Tennessee

ht t p:/ / ht t p:/ / www.cs.ut k.edu/ ~dongarra www.cs.ut k.edu/ ~dongarra/

T h e 1 4 t h S ym p os iu m on C om p u t e r A r c h it e c t u r e a n d H ig h P e r f or m a n c e C om p u t in g V it o r ia / E S - B r a z il - O c t ob e r 2 8 -3 0 , 2 0 0 2 2

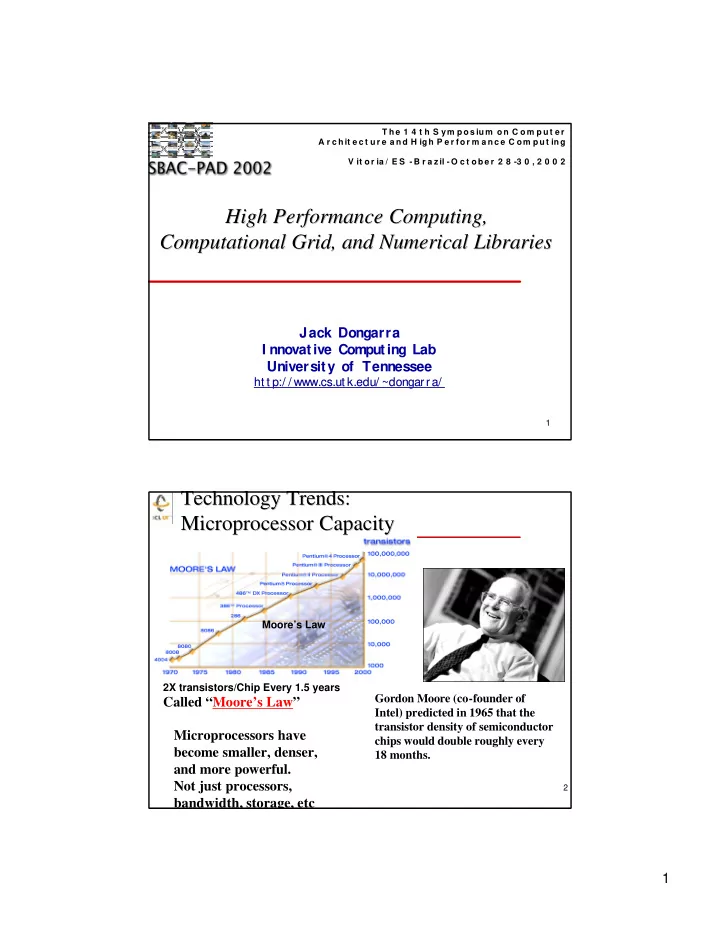

Technology Trends: Technology Trends: Microprocessor Capacity Microprocessor Capacity

2X transistors/Chip Every 1.5 years

Called “Moore’s Law”

Moore’s Law

Microprocessors have become smaller, denser, and more powerful. Not just processors, bandwidth, storage, etc

Gordon Moore (co-founder of Intel) predicted in 1965 that the transistor density of semiconductor chips would double roughly every 18 months.