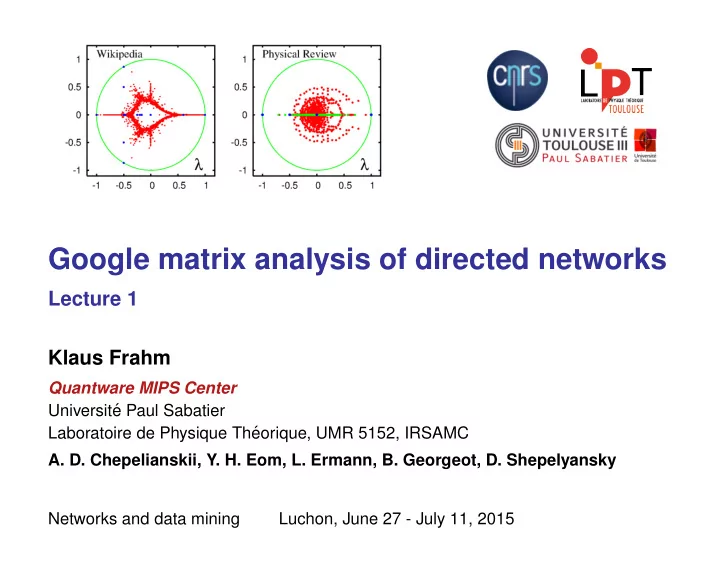

Google matrix analysis of directed networks

Lecture 1, Klaus Frahm

Quantware MIPS Center, Université Paul Sabatier, Laboratoire de Physique Théorique, UMR 5152, IRSAMC

- A. D. Chepelianskii, Y. H. Eom, L. Ermann, B. Georgeot, D. Shepelyansky

Networks and data mining, Luchon, June 27 - July 11, 2015