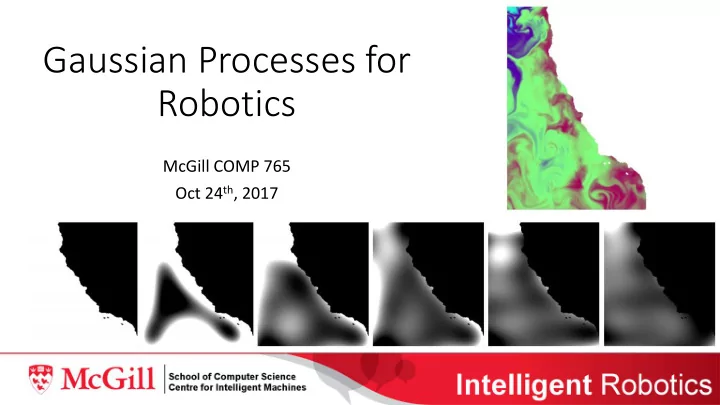

Gaussian Processes for Robotics

McGill COMP 765 Oct 24th, 2017


A robot must learn

Modeling the environment is sometimes an end goal:
- Space exploration
- Disaster recovery
- Environmental monitoring

Other times, important


such as localization

generative model, also:

dataset directly to compute predictions of mean and variance at new points:
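For reference, these are the standard predictive equations for zero-mean GP regression. They assume Gaussian observation noise with variance $\sigma_n^2$ and a kernel $k$. The notation is an assumption and not necessarily the lecture's. Training inputs are $\mathbf{x}_1, \dots, \mathbf{x}_n$ with targets $\mathbf{y}$, and $\mathbf{x}_*$ is a new point.

```latex
\begin{aligned}
K_{ij} &= k(\mathbf{x}_i, \mathbf{x}_j), \qquad [\mathbf{k}_*]_i = k(\mathbf{x}_i, \mathbf{x}_*) \\
\mu(\mathbf{x}_*) &= \mathbf{k}_*^\top \left(K + \sigma_n^2 I\right)^{-1} \mathbf{y} \\
\sigma^2(\mathbf{x}_*) &= k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^\top \left(K + \sigma_n^2 I\right)^{-1} \mathbf{k}_*
\end{aligned}
```

The mean is a weighted combination of the training targets. The variance starts at the prior value $k(\mathbf{x}_*, \mathbf{x}_*)$ and shrinks as $\mathbf{x}_*$ gets closer to the training data.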

(intuitively: distance) between new point and training set
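A common choice for this similarity is the squared-exponential (RBF) kernel, whose covariance decays with Euclidean distance. The following is a minimal NumPy sketch that assumes the RBF kernel. The names `rbf_kernel`, `length_scale`, and `signal_var` are hypothetical, chosen for illustration. Each row of an input array is one point.

```python
import numpy as np

def rbf_kernel(a, b, length_scale=1.0, signal_var=1.0):
    """Squared-exponential covariance between the rows of a and the rows of b.

    k(x, x') = signal_var * exp(-||x - x'||^2 / (2 * length_scale^2)).
    Nearby points get covariance close to signal_var; distant points decay toward 0.
    """
    a = np.atleast_2d(a)
    b = np.atleast_2d(b)
    # Pairwise squared Euclidean distances, shape (len(a), len(b))
    sq_dist = np.sum(a**2, axis=1)[:, None] + np.sum(b**2, axis=1)[None, :] - 2.0 * a @ b.T
    sq_dist = np.maximum(sq_dist, 0.0)  # guard against tiny negatives from round-off
    return signal_var * np.exp(-0.5 * sq_dist / length_scale**2)

X_train = np.array([[0.0], [1.0], [2.0]])
x_near = np.array([[1.1]])
x_far = np.array([[10.0]])
print(rbf_kernel(x_near, X_train))  # largest entry belongs to the training point at 1.0
print(rbf_kernel(x_far, X_train))   # every entry is close to 0
```

The `length_scale` parameter sets how quickly similarity falls off with distance. A new point far from all training data receives almost no information from it.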

inference, which can be expensive for large sets of high-dimensional data
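Most of the cost comes from factoring the n × n covariance matrix of the training set. That factorization takes O(n³) time, and the matrix takes O(n²) memory. Building the kernel matrix also scales with the input dimension d. The following is a minimal sketch of exact GP prediction in NumPy, assuming a squared-exponential kernel, zero prior mean, and Gaussian noise. The names `rbf` and `gp_predict` are hypothetical and do not come from the lecture.

```python
import numpy as np

def rbf(a, b, length_scale=1.0, signal_var=1.0):
    # Squared-exponential kernel between the rows of a and b (assumed kernel choice).
    # Building it costs O(n * m * d) for n and m points of dimension d.
    sq = np.sum(a**2, axis=1)[:, None] + np.sum(b**2, axis=1)[None, :] - 2.0 * a @ b.T
    return signal_var * np.exp(-0.5 * np.maximum(sq, 0.0) / length_scale**2)

def gp_predict(X, y, X_star, noise_var=1e-2, **kern):
    """Exact zero-mean GP regression: predictive mean and variance at the rows of X_star."""
    n = X.shape[0]
    K = rbf(X, X, **kern) + noise_var * np.eye(n)  # n x n matrix: O(n^2) memory
    L = np.linalg.cholesky(K)                      # O(n^3) time: the main bottleneck
    # alpha = K^{-1} y computed from the Cholesky factor K = L L^T.
    # (np.linalg.solve ignores triangularity; scipy.linalg.solve_triangular would exploit it.)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    K_s = rbf(X, X_star, **kern)                   # n x m cross-covariances k_*
    mean = K_s.T @ alpha                           # k_*^T alpha for every test point
    v = np.linalg.solve(L, K_s)                    # L^{-1} k_*, so ||v||^2 = k_*^T K^{-1} k_*
    var = np.diag(rbf(X_star, X_star, **kern)) - np.sum(v**2, axis=0)
    return mean, var

# Usage: fit noise-free samples of sin(x), then query one point inside and one far outside the data.
X = np.linspace(0.0, 5.0, 20)[:, None]
y = np.sin(X).ravel()
mu, var = gp_predict(X, y, np.array([[2.5], [20.0]]))
print(mu, var)  # near sin(2.5) with small variance; near 0 with variance near 1 far away
```

The factorization is computed once per training set, and each additional test point then costs only O(n) for the mean and O(n²) for the variance when a triangular solver is used. The cubic cost of refactoring as the dataset grows is what makes exact GPs expensive for large datasets.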

represented efficiently with a tree

Lipschitz Constant. Optimization Theory and Applications, 1993.

work on behavior adaptation)

proposed (slight variations on those we’ve seen)

(top)

removed (bottom)

direct exploration and the dynamics model embedded in RL methods