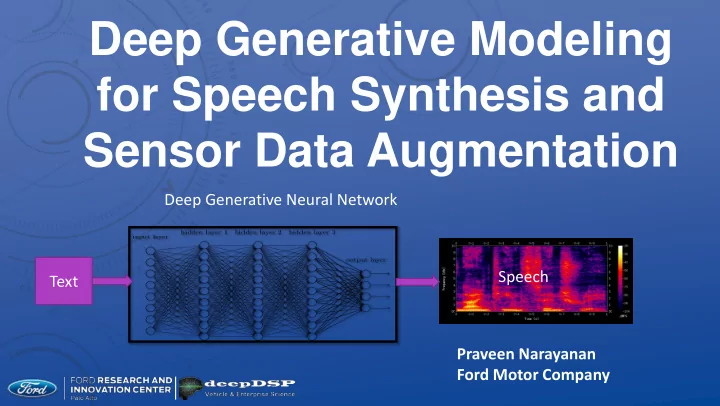

Deep Generative Modeling for Speech Synthesis and Sensor Data Augmentation

Praveen Narayanan, Ford Motor Company

[Figure labels: Speech, Text, Deep Generative Neural Network]


PROJECT DESCRIPTION

Use of DNNs increasingly prevalent as a solution for many data


=> Can we produce synthetic, realistic data?

representative

− A new research approach using DNNs that came into vogue in the last three years
− Examples: VAE, GAN, PixelRNN, Wavenet


Examples:
− Male vs female speech (voice conversion)
− Accented speech: English in different accents
− Multilanguage

− Rotations on point clouds
− Generating data in adverse weather conditions


[Figure: “Hello. Do you speak Mandarin?” / “Nǐ huì shuō pǔtōnghuà ma”; labels: Mandarin, Parrot, Accent]

models)

− Vanilla RNNs
− Gated: LSTM, GRU, possibly bidirectional
− Seq2seq + attention

−Wavenet, Bytenet, PixelRNN, PixelCNN

DRAW PixelRNN pix2pix [Goodfellow; Kingma and Welling; Rezende and Mohamed; Van den Oord et al]

Vanilla VAE

Semi Supervised VAE (SSL+conditioning, etc.)

Related

Blogs and helpers

− HMMs
− DNNs

(Zen et al)

−Seq2seq + attention (Bahdanau style)

− Wavenet 1: fast training, slow generation
− Wavenet 2: (a brilliancy) – two developments (100X over Wavenet)

1) Inverse Autoregressive Flow – fast inference
2) Probability Density “Distillation” (as against estimation)

Speech Features Text DNN Speech Text to Speech spectrogram hello Seq2seq Attention RNN waveform Speech Deep Generative Neural Network

Earlier models: hello (text sequence) => RNN => h/eh/l/ow (phoneme sequence) => RNN => speech

Tacotron: hello (text sequence) => RNN => speech frames => speech

− Phoneme (‘token’/segment) > text
− Text => phoneme needs another DNN
− Not totally “end-to-end”

“I am not a small black cat” => “je ne suis pas un petit chat noir”

− Variable word length
− Word ordering different

[Figure: attention weights between input and output words]
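The attention weights in the figure can be illustrated with a minimal sketch: for one output word, score every input position, normalize the scores with a softmax, and take the weighted sum of the encoder states. This is an assumption-laden toy: it uses a plain dot-product score rather than the learned additive score of Bahdanau-style attention, and the function names and vectors are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, encoder_states):
    """Return (attention weights, context vector) for one decoder step.

    Assumption: dot-product scoring stands in for the learned additive
    score used in Bahdanau-style attention.
    """
    scores = [sum(q * h for q, h in zip(query, state)) for state in encoder_states]
    weights = softmax(scores)
    dim = len(encoder_states[0])
    context = [
        sum(w * state[d] for w, state in zip(weights, encoder_states))
        for d in range(dim)
    ]
    return weights, context


# Hypothetical 2-dimensional encoder states for three input words.
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights, context = attend([0.0, 2.0], states)
print(weights, context)
```

Because each output step gets its own set of weights over all input positions, the input and output sequences can differ in length and word order.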

Processed text sequence Output mel frames Sophisticated architecture

− Built on top of Bahdanau
− Preprocessing of text
− Postprocessing of output ‘mel’ frames

Text Tacotron Mel Spectrogram

Training: <text/mel> pairs

Text DNN Speech Features ? Speech DNN Speech Features ? Speech Speech STFT Speech Features Text to Speech Speech to transformed speech Power & mel spectrogram Raw Audio

$M = 1125 \ln\left(1 + \frac{f}{700}\right)$

− Linearly spaced bins in mel scale
− Bins closely spaced at lower frequencies

(Kishore Prahallad, CMU)
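The mel formula above can be sketched in a few lines to show why bins that are evenly spaced in mel end up closely spaced at low frequencies. The 80 bins match the mel dimension used elsewhere in the slides; the 0 to 8000 Hz range and the function names are assumptions made only for illustration.

```python
import math


def hz_to_mel(f_hz):
    """Mel value for a frequency in Hz: M = 1125 * ln(1 + f / 700)."""
    return 1125.0 * math.log(1.0 + f_hz / 700.0)


def mel_to_hz(m):
    """Inverse of hz_to_mel."""
    return 700.0 * (math.exp(m / 1125.0) - 1.0)


def mel_bin_edges(n_bins, f_min, f_max):
    """Bin edges in Hz that are linearly spaced on the mel scale."""
    m_min, m_max = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (m_max - m_min) / n_bins
    return [mel_to_hz(m_min + i * step) for i in range(n_bins + 1)]


# Assumed range of 0 to 8000 Hz, split into 80 mel bins.
edges = mel_bin_edges(80, 0.0, 8000.0)
widths = [hi - lo for lo, hi in zip(edges, edges[1:])]
print("first bin width (Hz):", widths[0])
print("last bin width (Hz):", widths[-1])
```

The printed widths grow with frequency, which is the "closely spaced at lower frequencies" behaviour noted above.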

Audio Linear Spectrogram Feature Generation VAE Network Mel Spectrogram Mel Spectrogram Training Speech data Mel Spectrogram Mel Spectrogram PostNet Linear Spectrogram Audio Postprocessing To recover audio Linear Mel 80 1025

Conv FilterBank Highway Mel 80 bins 1025 bins BiLSTM Processed frames 80 bins Linear PostNet Griffin Lim Audio Need to use a postprocessing DNN To recover audio waveform

Segmentation”

(Tacotron)

Pool (stride=1) convolutions (Lee et al)

GRU GRU

. 𝑦

Srivastava et al
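For reference, a highway layer (Srivastava et al) mixes a transformed signal H(x) with the untouched input x through a learned gate T(x): y = T(x) * H(x) + (1 - T(x)) * x. The sketch below is a minimal pure-Python illustration, assuming a ReLU for H and hand-picked weights; it is not the configuration used in the slides.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def affine(x, weights, bias):
    """Compute W x + b for a weight matrix given as a list of rows."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def highway_layer(x, w_h, b_h, w_t, b_t):
    """y = T(x) * H(x) + (1 - T(x)) * x, applied elementwise.

    Assumption: H uses a ReLU nonlinearity; T is a sigmoid gate.
    Input and output must have the same dimension.
    """
    h = [max(0.0, v) for v in affine(x, w_h, b_h)]
    t = [sigmoid(v) for v in affine(x, w_t, b_t)]
    return [ti * hi + (1.0 - ti) * xi for ti, hi, xi in zip(t, h, x)]


# Hypothetical weights: H doubles the input, the gate depends only on its bias.
x = [1.0, -2.0]
w_h, b_h = [[2.0, 0.0], [0.0, 2.0]], [0.0, 0.0]
w_t = [[0.0, 0.0], [0.0, 0.0]]
print(highway_layer(x, w_h, b_h, w_t, [-50.0, -50.0]))  # gate closed: close to x
print(highway_layer(x, w_h, b_h, w_t, [50.0, 50.0]))    # gate open: close to H(x)
```

A strongly negative gate bias makes the layer pass its input through almost unchanged, which is what lets stacks of such layers train easily.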

“ground truth” “reconstructed”

Ground truth Reconstruction

DESIDERATA

Latent Reconstruction Input

Encoder Input Reconstruction Decoder Latent Layer

Training

Latent Layer Decoder Generation

Inference Sample N(0,I)

Ground Truth / Reconstruction

− Original image: 560 pixels
− Reconstructed from 20 latent variables
− 28X image compression advantage

Faces and poses that did not exist!

SPEECH ENCODINGS

Learn

Speaker 1 “hello” Speaker 2 “hello” Speaker 3 “hello”

Generate Z

Unique speaker “hello”

Train ASR

Spectrogram Audio VAE Spectrogram Griffin Lim Audio

F N N x1

Spectrogram nxNx1 Strided Conv Full Conn Strided Conv Full Conn

Z

Strided Deconv Full Conn Spectrogram

Encoder Decoder

N (0,1) Audio Griffin Lim Audio

$\mu$, $\sigma$, $\epsilon$: $z = \mu + \sigma \epsilon$
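The relation above is the reparameterization step of a VAE: the latent sample is a deterministic function of the encoder outputs (mean and standard deviation) plus noise drawn from N(0,1), so gradients can flow through the mean and standard deviation during backprop. A minimal sketch, assuming the encoder hands back plain lists of means and standard deviations (the function name and values are hypothetical):

```python
import random


def reparameterize(mu, sigma, rng):
    """Draw z = mu + sigma * eps elementwise, with eps ~ N(0, 1)."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mu, sigma)]


rng = random.Random(0)  # seeded only so that runs are repeatable

# Training: the encoder supplies mu and sigma for each latent dimension.
z_train = reparameterize([2.0, -1.0], [0.5, 0.1], rng)

# Generation: no encoder, so sample directly from N(0, I) and decode.
z_gen = reparameterize([0.0, 0.0], [1.0, 1.0], rng)
print(z_train, z_gen)
```

At generation time the same function reduces to sampling from N(0, I), which is what gets fed to the decoder.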

Utterance: “Cat”

Ground Truth Rotations Reconstructed Rotations

− Need larger training set
− Images produced by data not in training set

Data Encoder z Decoder (Generator) Recon Data 0/1 GAN D BProp Autoencoding Beyond Pixels

Truth GAN loss recon VAE (MSE) loss recon (Ground truth not shown)

L2 L1 GAN Ground Truth

LSTM Encoder Z LSTM Decoder Mel in Reconstruction Mel out Sketch-RNN

Ground Truth Reconstruction Simple network (LSTM)

Conv FilterBank Highway Mel 80 bins 1025 bins BiLSTM Processed frames 80 bins Linear CBHG Griffin Lim Audio

CBHG fake real L1 or L2 loss Backprop Mel (real) Linear (fake)

CBHG fake real Learned GAN loss Backprop

FC in Y direction Linear Spectrogram Reduced Linear Spectrogram Reduced Linear Spectrogram 1D conv in X direction Channels y direction Conv output Channels Y direction

CBHG GAN Discriminator False Fake (linear) Backprop Discriminator GAN Discriminator True Real Real (mel)