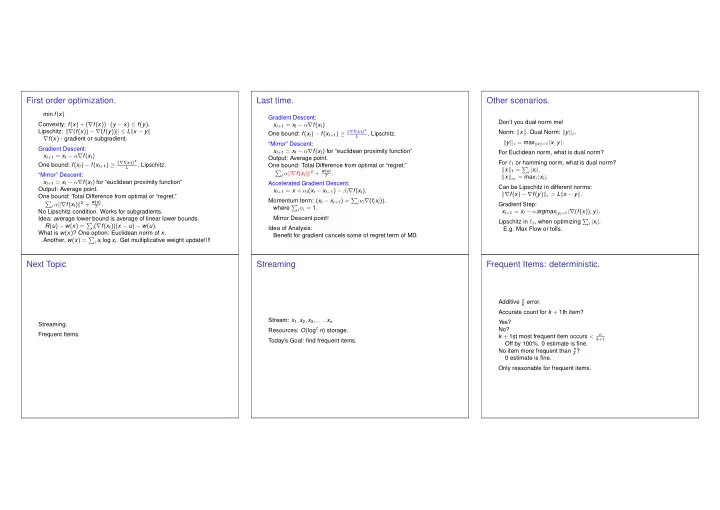

First order optimization.

$\min f(x)$

Convexity: $f(x) + \nabla f(x) \cdot (y - x) \le f(y)$.

Lipschitz: $\|\nabla f(x) - \nabla f(y)\| \le L\|x - y\|$.

$\nabla f(x)$: gradient or subgradient.

Gradient Descent: $x_{t+1} = x_t - \alpha \nabla f(x_t)$.

One bound: $f(x_t) - f(x_{t+1}) \ge \frac{\|\nabla f(x_t)\|^2}{2L}$ for step size $\alpha = 1/L$. Needs Lipschitz.

“Mirror” Descent: $x_{t+1} = x_t - \alpha \nabla f(x_t)$ for “Euclidean proximity function.”

Output: average point.

One bound: total difference from optimal, or “regret”:

$$\frac{\sum_t \alpha \|\nabla f(x_t)\|^2 + w(u)}{T}.$$

No Lipschitz condition. Works for subgradients.

Idea: average lower bound is average of linear lower bounds.

$$R(u) - w(x) = \sum_t \nabla f(x_t) \cdot (x - u) - w(u).$$

What is $w(x)$? One option: the (squared) Euclidean norm of $x$. Another: $w(x) = \sum_i x_i \log x_i$. Get the multiplicative weights update!
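A minimal sketch of why the entropy choice $w(x) = \sum_i x_i \log x_i$ gives multiplicative weights, assuming the iterates are restricted to the probability simplex. With that regularizer the mirror step becomes "multiply each coordinate by $e^{-\alpha g_i}$, then renormalize." The function name `entropic_mirror_descent`, the linear objective `c`, and the values of `alpha` and `steps` are illustrative assumptions, not taken from the slides.

```python
import math

def entropic_mirror_descent(grad, n, alpha, steps):
    """Mirror descent on the simplex with w(x) = sum_i x_i log x_i.

    Each step multiplies x_i by exp(-alpha * g_i) and renormalizes,
    which is the multiplicative weights update.
    Returns the average of the iterates, as on the slide ("Output: average point").
    """
    x = [1.0 / n] * n          # start from the uniform distribution
    avg = [0.0] * n
    for _ in range(steps):
        avg = [a + xi / steps for a, xi in zip(avg, x)]
        g = grad(x)            # gradient or subgradient at the current point
        w = [xi * math.exp(-alpha * gi) for xi, gi in zip(x, g)]
        total = sum(w)
        x = [wi / total for wi in w]
    return avg

# Hypothetical linear objective f(x) = c . x; on the simplex it is
# minimized by putting all the mass on the smallest coordinate of c.
c = [0.9, 0.2, 0.5]
x_bar = entropic_mirror_descent(lambda x: c, n=3, alpha=0.5, steps=200)
```

Here `x_bar` stays a probability distribution and concentrates on index 1, the cheapest coordinate of `c`.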

Last time.

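Gradient Descent: $x_{t+1} = x_t - \alpha \nabla f(x_t)$.

One bound: $f(x_t) - f(x_{t+1}) \ge \frac{\|\nabla f(x_t)\|^2}{2L}$ for step size $\alpha = 1/L$. Needs Lipschitz.

“Mirror” Descent: $x_{t+1} = x_t - \alpha \nabla f(x_t)$ for “Euclidean proximity function.”

Output: average point.

One bound: total difference from optimal, or “regret”:

$$\frac{\sum_t \alpha \|\nabla f(x_t)\|^2 + w(u)}{T}.$$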

Accelerated Gradient Descent: $x_{t+1} = x_t + \alpha_t (x_t - x_{t-1}) - \beta_t \nabla f(x_t)$.

Momentum term: $x_t - x_{t+1} = \sum_i \nu_i \nabla f(x_i)$, where $\sum_i \nu_i = 1$.

This is a Mirror Descent point!

Idea of analysis: the benefit from the gradient step cancels some of the regret term of Mirror Descent.
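To make the momentum update concrete, here is a small sketch comparing plain gradient descent with the update $x_{t+1} = x_t + \alpha (x_t - x_{t-1}) - \beta \nabla f(x_t)$ on a badly conditioned quadratic. Several things are assumptions for illustration only: the coefficients are held constant rather than varying with $t$, their values are the classical heavy-ball choice for quadratics, and the objective `d`, the start point `x0`, and the iteration count are hypothetical.

```python
def f(x, d):
    """Quadratic f(x) = 1/2 * sum_i d_i * x_i^2 (hypothetical test objective)."""
    return 0.5 * sum(di * xi * xi for di, xi in zip(d, x))

def grad(x, d):
    return [di * xi for di, xi in zip(d, x)]

def gradient_descent(x0, d, step, iters):
    x = list(x0)
    for _ in range(iters):
        g = grad(x, d)
        x = [xi - step * gi for xi, gi in zip(x, g)]
    return x

def momentum_descent(x0, d, alpha, beta, iters):
    """x_{t+1} = x_t + alpha * (x_t - x_{t-1}) - beta * grad f(x_t)."""
    # Setting x_{-1} = x_0 makes the first step a plain gradient step.
    prev, x = list(x0), list(x0)
    for _ in range(iters):
        g = grad(x, d)
        nxt = [xi + alpha * (xi - pi) - beta * gi
               for xi, pi, gi in zip(x, prev, g)]
        prev, x = x, nxt
    return x

d = [1.0, 100.0]                 # curvatures: mu = 1, L = 100
mu, L = min(d), max(d)
x0 = [1.0, 1.0]

# Assumed constant coefficients (heavy-ball tuning for quadratics).
alpha = ((L ** 0.5 - mu ** 0.5) / (L ** 0.5 + mu ** 0.5)) ** 2
beta = 4.0 / (L ** 0.5 + mu ** 0.5) ** 2

f_gd = f(gradient_descent(x0, d, 1.0 / L, 100), d)
f_acc = f(momentum_descent(x0, d, alpha, beta, 100), d)
```

After the same 100 iterations, `f_acc` is many orders of magnitude smaller than `f_gd` on this example, because plain gradient descent with step $1/L$ shrinks the low-curvature coordinate by only a factor of 0.99 per step.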

Other scenarios.

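Don’t you dual norm me!

Norm: $\|x\|$. Dual norm: $\|y\|_*$.

$$\|y\|_* = \max_{\|x\| = 1} \langle x, y \rangle.$$

For the Euclidean norm, what is the dual norm? For the $\ell_1$ or Hamming norm, what is the dual norm?

$\|x\|_1 = \sum_i |x_i|$. $\|x\|_\infty = \max_i |x_i|$.

Can be Lipschitz in different norms: $\|\nabla f(x) - \nabla f(y)\|_* \le L\|x - y\|$.

Gradient step: $x_{t+1} = x_t - \alpha \arg\max_{\|y\| = 1} \langle \nabla f(x_t), y \rangle$.

Lipschitz in $\ell_1$, when optimizing $\sum_i |x_i|$.

E.g. Max Flow or tolls.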
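The dual norm definition can be checked by hand for the three norms above, since the maximizer of $\langle x, y \rangle$ over the unit sphere has a closed form in each case. The sketch below returns both the maximizing $x$ and the resulting value; the function names and the sample vector `y` are illustrative, and `y` is assumed to be nonzero.

```python
import math

def dual_of_l1(y):
    """max <x, y> over ||x||_1 = 1: put all mass on the largest |y_i|.
    The value is max_i |y_i|, so the dual of l1 is l_infinity."""
    i = max(range(len(y)), key=lambda j: abs(y[j]))
    x = [0.0] * len(y)
    x[i] = 1.0 if y[i] >= 0 else -1.0
    return x, abs(y[i])

def dual_of_l2(y):
    """max <x, y> over ||x||_2 = 1: x = y / ||y||_2.
    The value is ||y||_2, so the Euclidean norm is its own dual."""
    norm = math.sqrt(sum(v * v for v in y))
    return [v / norm for v in y], norm

def dual_of_linf(y):
    """max <x, y> over ||x||_inf = 1: x_i = sign(y_i).
    The value is sum_i |y_i|, so the dual of l_infinity is l1."""
    x = [1.0 if v >= 0 else -1.0 for v in y]
    return x, sum(abs(v) for v in y)

y = [3.0, -4.0, 1.0]
x1, v1 = dual_of_l1(y)      # x1 = [0, -1, 0], v1 = 4.0
x2, v2 = dual_of_l2(y)      # v2 = sqrt(26)
xinf, vinf = dual_of_linf(y)  # xinf = [1, -1, 1], vinf = 8.0
```

The maximizer returned in each case is also the direction used by the gradient step in that norm, with `y` playing the role of $\nabla f(x_t)$.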

Next Topic

Streaming. Frequent Items.

Streaming

Stream: $x_1, x_2, x_3, \ldots, x_n$.

Resources: $O(\log^c n)$ storage.

Today’s goal: find frequent items.

Frequent Items: deterministic.

Additive $\frac{n}{k}$ error.

Accurate count for the $(k+1)$st item? Yes? No?

The $(k+1)$st most frequent item occurs $< \frac{n}{k+1}$ times.

Off by 100%. A 0 estimate is fine.

No item more frequent than $\frac{n}{k}$?
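The slides do not name an algorithm at this point. One standard deterministic scheme with this kind of additive guarantee is the Misra-Gries summary, and the sketch below assumes that is the intended direction. It keeps at most $k$ counters; every estimate undercounts the true frequency by at most $n/(k+1)$, which is within the $n/k$ error stated above. The function name and the sample stream are illustrative.

```python
from collections import Counter

def misra_gries(stream, k):
    """Deterministic frequent-items summary using at most k counters.

    Each returned count is a lower bound on the true count and is
    short by at most n / (k + 1), where n is the stream length.
    Items not in the summary have estimate 0.
    """
    counters = {}
    for item in stream:
        if item in counters:
            counters[item] += 1
        elif len(counters) < k:
            counters[item] = 1
        else:
            # No free counter: decrement every counter and drop zeros.
            # The new item is discarded along with one unit per counter.
            for key in list(counters):
                counters[key] -= 1
                if counters[key] == 0:
                    del counters[key]
    return counters

# Hypothetical stream with n = 12 items and k = 2 counters.
stream = ["a"] * 6 + ["b"] * 3 + ["c", "d", "e"]
k = 2
est = misra_gries(stream, k)
true = Counter(stream)
n = len(stream)
```

On this stream the summary ends as `{"a": 3}`: the count for `a` is short by 3 and `b` is estimated as 0 (also short by 3), both within the bound $n/(k+1) = 4$. Each decrement round removes $k+1$ units from the stream's total, so there can be at most $n/(k+1)$ rounds, which is where the bound comes from.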