1

Institute of Pharmacy and Food Chemistry University of Würzburg

Am Hubland D – 97074 Würzburg

Christoph Sotriffer

Empirical scoring functions for docking and virtual screening

Fundamentals, challenges and trends

Strasbourg Summer School on Chemoinformatics Strasbourg, June 23-27, 2014

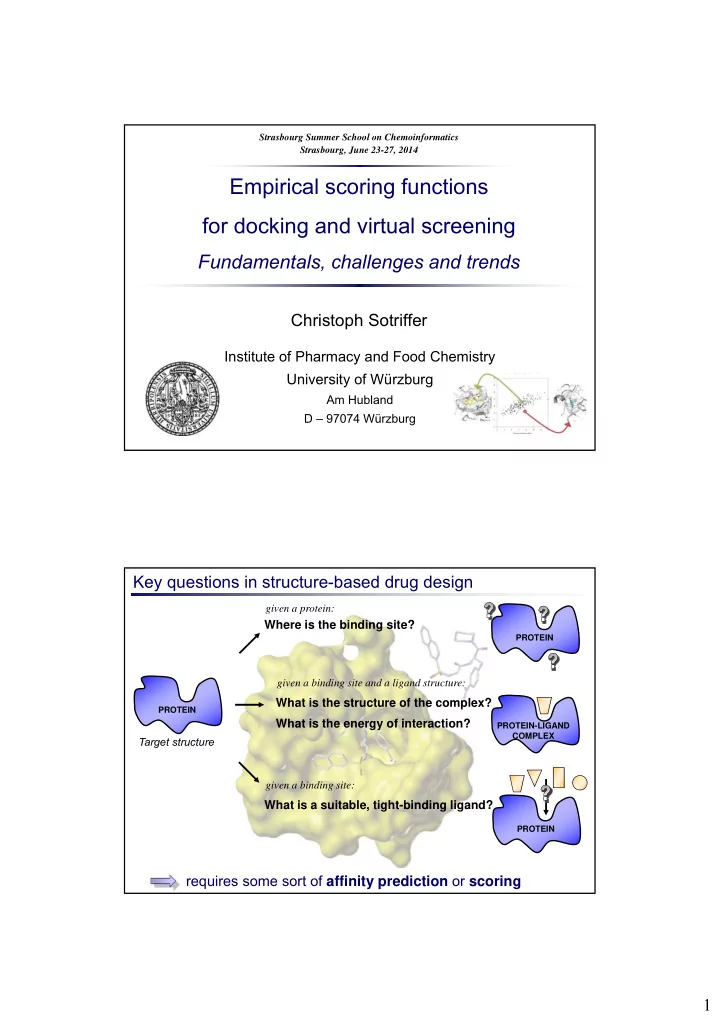

Key questions in structure-based drug design

PROTEIN PROTEIN

Where is the binding site?

given a protein: Target structure

What is the structure of the complex?

given a binding site and a ligand structure:

What is the energy of interaction?

PROTEIN-LIGAND COMPLEX

What is a suitable, tight-binding ligand?

given a binding site:

PROTEIN