- R. S. Sutton and A. G. Barto: Reinforcement Learning: An Introduction

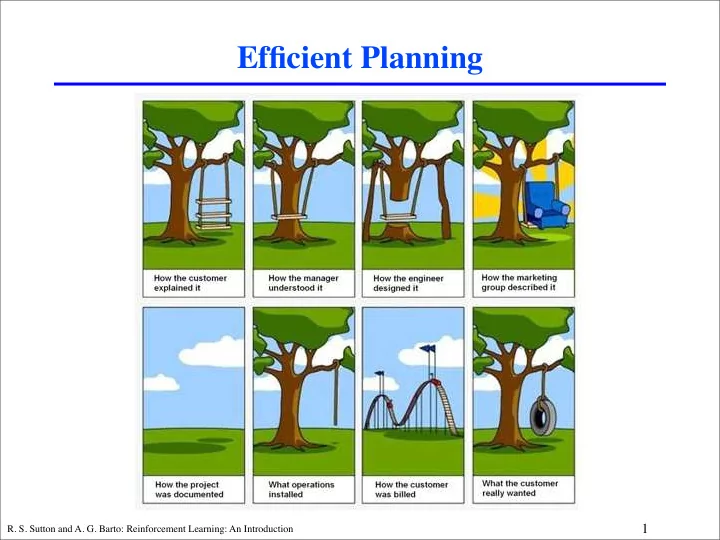

Efficient Planning


Tuesday class summary:

- Planning: any computational process that uses a model to create or improve a policy
- Dyna framework
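As one concrete reading of the Dyna framework named in the summary, the sketch below shows tabular Dyna-Q: every real step is used for a direct value update and for model learning, and the learned model is then replayed for a few planning updates. The names `dyna_q` and `corridor`, the parameter values, and the deterministic-model assumption are illustrative choices, not taken from the slides.

```python
import random
from collections import defaultdict

def dyna_q(step, start, actions, episodes=30, n_planning=10,
           alpha=0.5, gamma=0.95, epsilon=0.1, seed=0):
    """Tabular Dyna-Q sketch: direct RL on real steps plus planning from a learned model."""
    rng = random.Random(seed)
    Q = defaultdict(float)   # Q[(s, a)], defaults to 0
    model = {}               # model[(s, a)] = (r, s2, done); assumes a deterministic world

    def greedy(s):
        best = max(Q[(s, a)] for a in actions)
        return rng.choice([a for a in actions if Q[(s, a)] == best])

    def backup(s, a, r, s2, done):
        # One-step Q-learning backup, used for both real and simulated experience.
        target = r if done else r + gamma * max(Q[(s2, b)] for b in actions)
        Q[(s, a)] += alpha * (target - Q[(s, a)])

    for _ in range(episodes):
        s, done = start, False
        while not done:
            a = rng.choice(actions) if rng.random() < epsilon else greedy(s)
            r, s2, done = step(s, a)          # real experience
            backup(s, a, r, s2, done)         # direct RL
            model[(s, a)] = (r, s2, done)     # model learning
            for _ in range(n_planning):       # planning: replay remembered transitions
                ps, pa = rng.choice(list(model))
                backup(ps, pa, *model[(ps, pa)])
            s = s2
    return Q

def corridor(s, a):
    """Hypothetical 5-state corridor: states 0..4, reward +1 on reaching state 4."""
    s2 = max(0, s + a)
    return (1.0, s2, True) if s2 == 4 else (0.0, s2, False)

Q = dyna_q(corridor, start=0, actions=[-1, +1])
```

In this toy corridor, moving right from state 3 ends the episode with reward +1, so `Q[(3, 1)]` approaches 1 while `Q[(3, -1)]` stays strictly smaller.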


| Value estimated | Full backups (DP) | Sample backups (one-step TD) |
|---|---|---|
| vπ(s) | policy evaluation | TD(0) |
| v*(s) | value iteration | |
| qπ(s, a) | Q-policy evaluation | Sarsa |
| q*(s, a) | Q-value iteration | Q-learning |
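To make the full versus sample distinction concrete for the q* case, here is a minimal sketch that contrasts a Q-value iteration backup with a Q-learning backup. The function names, the `(prob, reward, next_state)` model layout, and the toy numbers are assumptions made for illustration.

```python
def full_backup(Q, model, s, a, actions, gamma):
    """Q-value iteration backup: expectation over all successors of (s, a).
    model[(s, a)] is a list of (prob, reward, next_state) triples (assumed layout)."""
    return sum(p * (r + gamma * max(Q[(s2, b)] for b in actions))
               for p, r, s2 in model[(s, a)])

def sample_backup(Q, s, a, r, s2, actions, alpha, gamma):
    """Q-learning backup: move Q(s, a) a step of size alpha toward one sampled target."""
    target = r + gamma * max(Q[(s2, b)] for b in actions)
    return Q[(s, a)] + alpha * (target - Q[(s, a)])

# Toy example: one action "x", two equally likely successors of state "s".
actions = ["x"]
Q = {("s", "x"): 0.0, ("u", "x"): 1.0, ("w", "x"): 3.0}
model = {("s", "x"): [(0.5, 0.0, "u"), (0.5, 2.0, "w")]}

full = full_backup(Q, model, "s", "x", actions, gamma=0.9)
sample = sample_backup(Q, "s", "x", 2.0, "w", actions, alpha=0.1, gamma=0.9)
```

The full backup touches every successor and needs the model; the sample backup needs only one observed transition, at the price of sampling error that the step size has to average out.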


[Figure: sample backups vs. full backups, with curves for b = 1000 and b = 10,000; the axis label refers to Q(s', a').]


[Figure: RMS error and normalized RMS error as a function of step-size α / step-size decay, for three methods: sample backup TD(0) with constant step-size, sample backup TD(0) with decaying step-size, and small backup. The accompanying task diagram shows left and right actions, random transitions, and rewards of +1 and −1.]


$\sum_{s'} p(s' \mid s, a)\, V(s')$
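The sum above is the expected next-state value that a full backup recomputes over every successor. As a hedged sketch of why a small backup can avoid that work: assume the action value is stored in the model-based form below, with estimated reward $\hat r$ and estimated transition probabilities $\hat p$ (in the count-based pseudocode of this deck, $\hat p(s' \mid s,a) = N_{sa}^{s'} / N_{sa}$). If only the value of a single successor $\bar s'$ changes, the old sum can be corrected instead of recomputed. The subscripts "old" and "new" are notation introduced here.

```latex
\begin{align}
Q(s,a) &= \hat r(s,a) + \gamma \sum_{s'} \hat p(s' \mid s,a)\, V(s') \\
Q_{\text{new}}(s,a) &= Q(s,a)
  + \gamma\, \hat p(\bar s' \mid s,a)\,
    \bigl[ V_{\text{new}}(\bar s') - V_{\text{old}}(\bar s') \bigr]
\end{align}
```

The correction costs one multiplication and one addition per predecessor pair, independent of the number of successor states.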


    initialize V(s) arbitrarily for all s
    initialize U(s) = V(s) for all s
    initialize Q(s, a) = V(s) for all s, a
    initialize N_sa, N_sa^s' to 0 for all s, a, s'
    loop {over episodes}
        initialize s
        repeat {for each step in the episode}
            select action a, based on Q(s, ·)
            take action a, observe r and s'
            N_sa ← N_sa + 1; N_sa^s' ← N_sa^s' + 1
            Q(s, a) ← [Q(s, a)(N_sa − 1) + r + γV(s')] / N_sa
            U(s) ← max_b Q(s, b)
            p ← |V(s) − U(s)|
            if s is on queue, set its priority to p; otherwise, add it with priority p
            for a number of update cycles do
                remove top state s̄' from queue
                ΔU ← U(s̄') − V(s̄')
                V(s̄') ← U(s̄')
                for all (s̄, ā) pairs with N_s̄ā^s̄' > 0 do
                    Q(s̄, ā) ← Q(s̄, ā) + γ N_s̄ā^s̄' / N_s̄ā · ΔU
                    U(s̄) ← max_b Q(s̄, b)
                    p ← |V(s̄) − U(s̄)|
                    if s̄ is on queue, set its priority to p; otherwise, add it with priority p
                end for
            end for
            s ← s'
        until s is terminal
    end loop
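The pseudocode above can be sketched in Python roughly as follows. This is an illustration under stated assumptions, not the authors' implementation: the class name `SmallBackupPS`, the lazy-deletion heap used as the priority queue, the zero initialization of all tables, and the explicit predecessor sets are choices made here. The sketch writes the refreshed value of the current state into `U`, as the inner loop of the pseudocode does, so that `V` always holds the value last propagated to predecessors.

```python
import heapq
from collections import defaultdict

class SmallBackupPS:
    """Sketch of prioritized sweeping with small backups (tabular, count-based model)."""

    def __init__(self, actions, gamma=0.9):
        self.actions, self.gamma = actions, gamma
        self.V = defaultdict(float)      # value last propagated to predecessors
        self.U = defaultdict(float)      # current value, max_b Q(s, b)
        self.Q = defaultdict(float)
        self.N = defaultdict(int)        # N[(s, a)]: visit counts
        self.Nnext = defaultdict(int)    # Nnext[(s, a, s2)]: transition counts
        self.preds = defaultdict(set)    # preds[s2]: (s, a) pairs with Nnext > 0
        self.prio = {}                   # current priority of each queued state
        self.heap = []                   # max-heap via negated priorities

    def _push(self, s):
        # Set or overwrite the priority of s; older heap entries become stale.
        p = abs(self.V[s] - self.U[s])
        self.prio[s] = p
        heapq.heappush(self.heap, (-p, s))   # states must be mutually comparable

    def _pop(self):
        # Return the queued state with the highest priority, skipping stale entries.
        while self.heap:
            neg_p, s = heapq.heappop(self.heap)
            if self.prio.get(s) == -neg_p:
                del self.prio[s]
                return s
        return None

    def observe(self, s, a, r, s2, cycles=5):
        """Process one real transition, then run up to `cycles` update cycles."""
        self.N[(s, a)] += 1
        self.Nnext[(s, a, s2)] += 1
        self.preds[s2].add((s, a))
        n = self.N[(s, a)]
        self.Q[(s, a)] = (self.Q[(s, a)] * (n - 1) + r + self.gamma * self.V[s2]) / n
        self.U[s] = max(self.Q[(s, b)] for b in self.actions)
        self._push(s)
        for _ in range(cycles):
            t = self._pop()
            if t is None:
                break
            delta = self.U[t] - self.V[t]
            self.V[t] = self.U[t]
            for ps, pa in self.preds[t]:
                # Small backup: correct Q for the change in one successor value only.
                w = self.Nnext[(ps, pa, t)] / self.N[(ps, pa)]
                self.Q[(ps, pa)] += self.gamma * w * delta
                self.U[ps] = max(self.Q[(ps, b)] for b in self.actions)
                self._push(ps)

# Toy usage: A -> B (reward 0), B -> T (reward 1), then A -> T (reward 0) once.
agent = SmallBackupPS(actions=["go"], gamma=0.9)
agent.observe("A", "go", 0.0, "B")
agent.observe("B", "go", 1.0, "T")
q_a_after_two = agent.Q[("A", "go")]
agent.observe("A", "go", 0.0, "T")
```

After the second observation the new value of B is swept back to A in the same step, so `q_a_after_two` is 0.9. After the third, A has two equally weighted successors (B with value 1, T with value 0), and `agent.Q[("A", "go")]` becomes 0.45, matching the expectation under the count-based model.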


[Figure: comparison of prioritized sweeping (PS) variants, with curves for PS, Moore & Atkeson; PS, Peng & Williams; PS, Wiering & Schmidhuber; and PS, small backups, plus value iteration and the initial error for reference (avg. over first 10^5 obs).]
