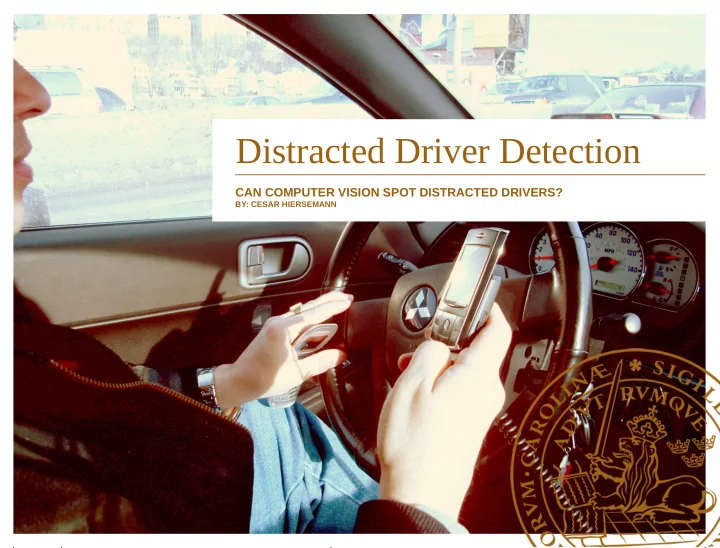

Distracted Driver Detection

CAN COMPUTER VISION SPOT DISTRACTED DRIVERS?

BY: CESAR HIERSEMANN


Image understanding is hard!

Easy for humans, hard for computers

Relevant XKCD (posted in 2014): http://xkcd.com/1425/


[1]: https://www.kaggle.com/c/state-farm-distracted-driver-detection

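Multi-class logarithmic loss, the evaluation metric of the competition [1]:

$$\text{logloss} = -\frac{1}{N} \sum_{i=1}^{N} \sum_{j=1}^{M} y_{ij} \log(p_{ij})$$

where $N$ is the number of images, $M$ is the number of classes, $y_{ij}$ is 1 if image $i$ belongs to class $j$ and 0 otherwise, and $p_{ij}$ is the predicted probability that image $i$ belongs to class $j$.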
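As a rough illustration of how a metric with these two sums (over N images and M classes) can be evaluated, here is a minimal Python sketch of multi-class log loss, the metric the Kaggle competition [1] scores on. The function name `multiclass_logloss` and the clipping constant `eps` are illustrative assumptions, not taken from the slides.

```python
import math

def multiclass_logloss(labels, probs, eps=1e-15):
    """Mean negative log-probability assigned to the true class.

    labels: list of N integer class ids in 0..M-1
    probs:  list of N rows, each holding M predicted probabilities
    """
    total = 0.0
    for label, row in zip(labels, probs):
        # Clip so that a predicted probability of exactly 0 cannot produce log(0).
        clipped = [min(max(p, eps), 1.0 - eps) for p in row]
        # Rescale the row so its probabilities sum to 1 after clipping.
        norm = sum(clipped)
        # The true labels are one-hot, so the inner sum over the M classes
        # reduces to the single term for the true class.
        total += math.log(clipped[label] / norm)
    return -total / len(labels)

# A model that guesses uniformly over 10 classes scores ln(10), about 2.3026.
uniform = [[0.1] * 10 for _ in range(3)]
print(multiclass_logloss([0, 4, 9], uniform))
```

A uniform guess is a useful baseline: any trained model should score below ln(10) on the ten driver classes.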

Driving safely

Talking right

Texting right

Texting left

Talking left

Drinking

Operating radio

Reaching back

Hair and makeup

Talking to passenger

[2]: TensorFlow Playground: http://playground.tensorflow.org/

– Fourier/Laplace transform
– Image analysis
– Signal Processing
– Gaussian Blur
– Sharpening
– Edge detection
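The three filters named above (Gaussian blur, sharpening, edge detection) can each be written as a small kernel slid across the image. The sketch below assumes the common 3×3 textbook kernels; the slides do not show which kernels were used, and the names `convolve2d`, `GAUSSIAN_BLUR`, `SHARPEN` and `EDGE_DETECT` are illustrative. It is written in plain Python for clarity, so it is far slower than a library routine.

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` with no padding ("valid" output).

    The kernel is not flipped, so strictly this is cross-correlation, which is
    also what CNN "convolution" layers compute. For the symmetric kernels
    below the two operations give the same result.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for r in range(out_h):
        for c in range(out_w):
            out[r][c] = sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
    return out

# Common 3x3 kernels (assumed here for illustration).
GAUSSIAN_BLUR = [[1 / 16, 2 / 16, 1 / 16],
                 [2 / 16, 4 / 16, 2 / 16],
                 [1 / 16, 2 / 16, 1 / 16]]   # weights sum to 1: brightness is kept
SHARPEN = [[0, -1, 0],
           [-1, 5, -1],
           [0, -1, 0]]                        # boosts the centre against its neighbours
EDGE_DETECT = [[-1, -1, -1],
               [-1, 8, -1],
               [-1, -1, -1]]                  # weights sum to 0: flat regions map to 0

# A 5x5 image with a vertical step edge between the second and third columns.
step = [[0, 0, 1, 1, 1] for _ in range(5)]
for row in convolve2d(step, EDGE_DETECT):
    print(row)
```

The edge kernel responds only where the window straddles the step and returns 0 where the window sees a uniform region. A convolutional network such as VGG-16 [3] learns its kernel weights from data instead of using hand-picked ones like these.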

[3]: VGG-16 network [http://arxiv.org/abs/1409.1556]

giant panda, panda, panda bear, coon bear, Ailuropoda melanoleuca


Training (blue) and validation (red) accuracy (top) and log loss (bottom)