SLIDE 1

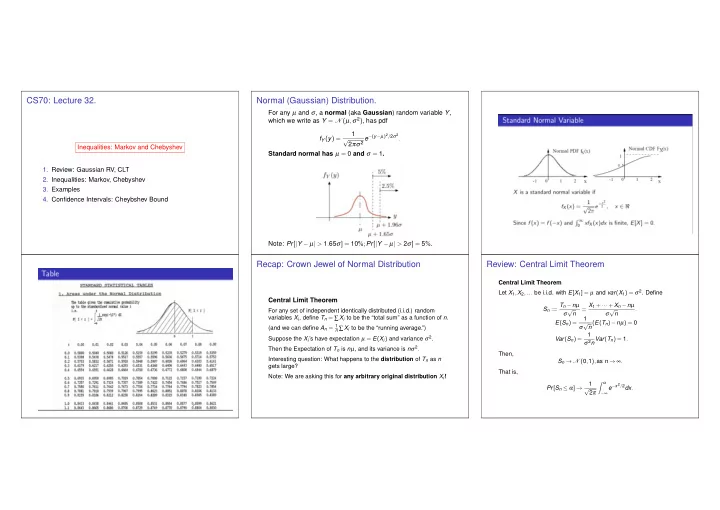

CS70: Lecture 32.

Inequalities: Markov and Chebyshev

- 1. Review: Gaussian RV, CLT

- 2. Inequalities: Markov, Chebyshev

- 3. Examples

- 4. Confidence Intervals: Chebyshev Bound