CS 6354: Memory Hierarchy III

5 September 2016

1

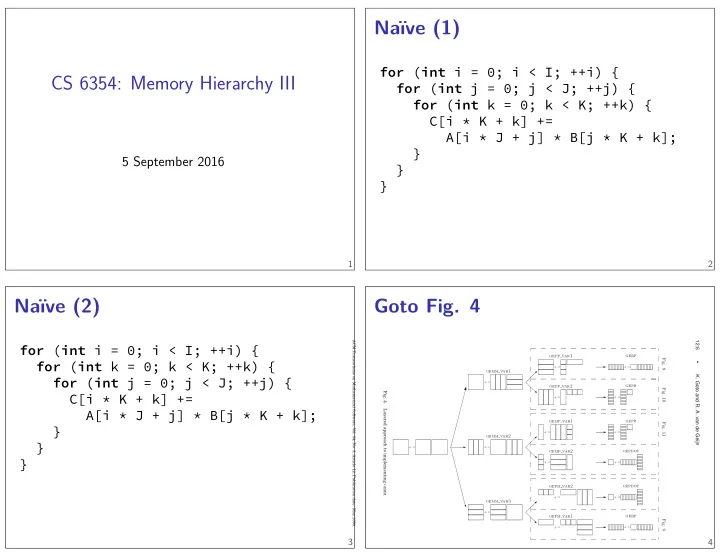

Naïve (1)

for (int i = 0; i < I; ++i) { for (int j = 0; j < J; ++j) { for (int k = 0; k < K; ++k) { C[i * K + k] += A[i * J + j] * B[j * K + k]; } } }

2

Naïve (2)

for (int i = 0; i < I; ++i) { for (int k = 0; k < K; ++k) { for (int j = 0; j < J; ++j) { C[i * K + k] += A[i * J + j] * B[j * K + k]; } } }

3

Goto Fig. 4

12:6

- K. Goto and R. A. van de Geijn

+:=

✲

gemm var1

+:=

❅

gepp var1

+:=

✲

gebp

+:=

gepp var2

+:=

✲ ✲

gepb

+:=

gemm var2

+:=

- ❅

gemp var1

+:=

✲

gepb

+:=

gemp var2

+:=

✲

gepdot

+:=

gemm var3

+:=

- ❅

gepm var2

+:=

✲

gepdot

+:=

gepm var1

+:=

✲ ✲ ✲

gebp

+:=

- Fig. 9

- Fig. 11

- Fig. 10

- Fig. 8

✲ ✲ ✲ ✲ ✲

- Fig. 4.

Layered approach to implementing GEMM.

ACM Transactions on Mathematical Software, Vol. 34, No. 3, Article 12, Publication date: May 2008.

4