Decision Making as Classification Bayes Classifiers Naive Bayes Classifiers

Cognitive Modeling

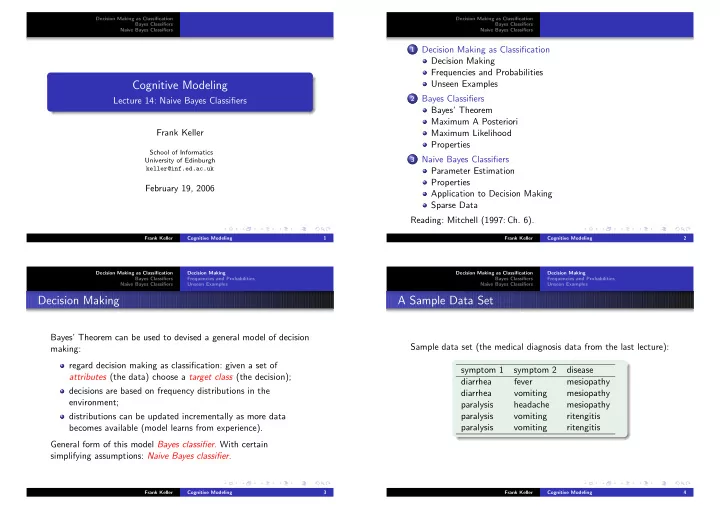

Lecture 14: Naive Bayes Classifiers

Frank Keller

School of Informatics, University of Edinburgh, keller@inf.ed.ac.uk

February 19, 2006


1 Decision Making as Classification

Decision Making, Frequencies and Probabilities, Unseen Examples

2 Bayes Classifiers

Bayes’ Theorem, Maximum A Posteriori, Maximum Likelihood, Properties

3 Naive Bayes Classifiers

Parameter Estimation, Properties, Application to Decision Making, Sparse Data

Reading: Mitchell (1997: Ch. 6).


Decision Making

Bayes’ Theorem can be used to devise a general model of decision making:

- regard decision making as classification: given a set of attributes (the data), choose a target class (the decision);
- decisions are based on frequency distributions in the environment;
- distributions can be updated incrementally as more data becomes available (the model learns from experience).

General form of this model: Bayes classifier. With certain simplifying assumptions: Naive Bayes classifier.
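The incremental updating of frequency distributions can be illustrated with a minimal sketch. This is an illustration only, not the model developed in the lecture: the class `FrequencyTable`, its methods, and the placeholder class labels `"a"` and `"b"` are hypothetical names introduced here.

```python
from collections import Counter


class FrequencyTable:
    """Hypothetical helper: class frequencies updated one example at a time."""

    def __init__(self):
        self.class_counts = Counter()
        self.total = 0

    def update(self, target_class):
        # Learning from experience: each observed example adds one count.
        self.class_counts[target_class] += 1
        self.total += 1

    def relative_frequency(self, target_class):
        # Share of the examples seen so far that belong to target_class.
        if self.total == 0:
            return 0.0
        return self.class_counts[target_class] / self.total


# Placeholder labels, chosen only for illustration.
table = FrequencyTable()
for label in ["a", "a", "b"]:
    table.update(label)

print(table.relative_frequency("a"))
```

Because only counts are stored, a new observation changes the distribution without any need to revisit earlier examples.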


A Sample Data Set

Sample data set (the medical diagnosis data from the last lecture):

| symptom 1 | symptom 2 | disease    |
|-----------|-----------|------------|
| diarrhea  | fever     | mesiopathy |
| diarrhea  | vomiting  | mesiopathy |
| paralysis | headache  | mesiopathy |
| paralysis | vomiting  | ritengitis |
| paralysis | vomiting  | ritengitis |
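As a rough sketch, the five examples can be written down as tuples and tallied to obtain the frequency distributions on which decisions are based. The variable and function names (`DATA`, `disease_counts`, `symptom1_and_disease`, `relative_frequency`) are assumptions made for this illustration and do not come from the lecture.

```python
from collections import Counter

# The five examples from the sample data set: (symptom 1, symptom 2, disease).
DATA = [
    ("diarrhea", "fever", "mesiopathy"),
    ("diarrhea", "vomiting", "mesiopathy"),
    ("paralysis", "headache", "mesiopathy"),
    ("paralysis", "vomiting", "ritengitis"),
    ("paralysis", "vomiting", "ritengitis"),
]

# How often each disease (the target class) occurs.
disease_counts = Counter(disease for _, _, disease in DATA)

# How often each value of symptom 1 co-occurs with each disease.
symptom1_and_disease = Counter((s1, disease) for s1, _, disease in DATA)


def relative_frequency(disease):
    # Share of all examples that carry the given disease label.
    return disease_counts[disease] / len(DATA)


print(disease_counts["mesiopathy"], disease_counts["ritengitis"])
print(relative_frequency("mesiopathy"))
```

In this data set mesiopathy occurs in three of the five examples and ritengitis in two.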
