SLIDE 1

1

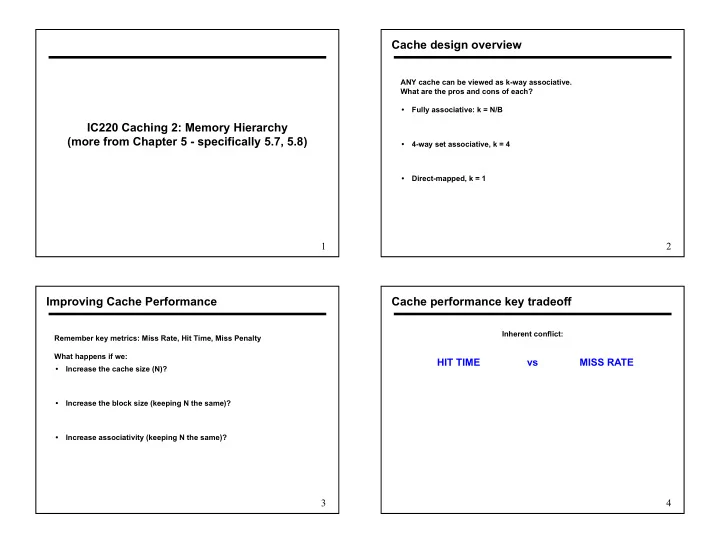

IC220 Caching 2: Memory Hierarchy (more from Chapter 5 - specifically 5.7, 5.8)

2

Cache design overview

ANY cache can be viewed as k-way set associative (N = cache size, B = block size, so N/B is the number of blocks). What are the pros and cons of each choice of k?

- Fully associative: k = N/B

- 4-way set associative: k = 4

- Direct-mapped, k = 1
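To make the "every cache is k-way" view concrete, here is a minimal Python sketch of how one address splits into tag, set index, and block offset for a given k. The function names and the example sizes (N = 1024 bytes, B = 16 bytes) are illustrative assumptions, not values from the slides; the sketch also assumes N, B, and k divide evenly.

```python
def cache_geometry(n_bytes, block_bytes, k):
    """Return (number of blocks, number of sets) for a k-way cache.

    Assumes n_bytes is a multiple of block_bytes and the block count
    is a multiple of k (illustrative simplification).
    """
    num_blocks = n_bytes // block_bytes   # N / B
    num_sets = num_blocks // k            # each set holds k blocks
    return num_blocks, num_sets


def split_address(addr, n_bytes, block_bytes, k):
    """Split a byte address into (tag, set_index, offset)."""
    _, num_sets = cache_geometry(n_bytes, block_bytes, k)
    offset = addr % block_bytes           # byte within the block
    block_addr = addr // block_bytes      # which memory block
    set_index = block_addr % num_sets     # which set it must go in
    tag = block_addr // num_sets          # identifies the block within the set
    return tag, set_index, offset


if __name__ == "__main__":
    N, B = 1024, 16                       # hypothetical cache: 64 blocks
    for k in (1, 4, N // B):              # direct-mapped, 4-way, fully associative
        print(k, cache_geometry(N, B, k), split_address(0x1234, N, B, k))
```

With these assumed sizes, k = 1 gives 64 sets of one block each, while k = N/B gives a single set, so the set index is always 0 and the whole block address becomes the tag.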

3

Improving Cache Performance

Remember key metrics: Miss Rate, Hit Time, Miss Penalty.

What happens if we:

- Increase the cache size (N)?

- Increase the block size (keeping N the same)?

- Increase associativity (keeping N the same)?
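One way to explore these questions is a tiny simulator. The sketch below assumes LRU replacement and uses made-up address traces, hit time, and miss penalty; none of these come from the slides. It combines the three metrics with the standard textbook formula AMAT = hit time + miss rate × miss penalty. It models miss rate only, so the effect of a design change on hit time or miss penalty has to be supplied by hand.

```python
from collections import OrderedDict


def miss_rate(addresses, n_bytes, block_bytes, k):
    """Simulate a k-way set-associative cache with LRU replacement
    (LRU is an assumption) and return the fraction of misses."""
    num_sets = (n_bytes // block_bytes) // k
    sets = [OrderedDict() for _ in range(num_sets)]  # tag -> True, oldest first
    misses = 0
    for addr in addresses:
        block_addr = addr // block_bytes
        s = sets[block_addr % num_sets]
        tag = block_addr // num_sets
        if tag in s:
            s.move_to_end(tag)            # hit: mark as most recently used
        else:
            misses += 1
            if len(s) >= k:
                s.popitem(last=False)     # evict least recently used block
            s[tag] = True
    return misses / len(addresses)


def amat(hit_time, rate, miss_penalty):
    """Average memory access time, in the same units as hit_time."""
    return hit_time + rate * miss_penalty


if __name__ == "__main__":
    conflict = [0, 1024] * 5              # two blocks that collide when k = 1
    sequential = list(range(0, 256, 4))   # word-by-word scan of 256 bytes

    print(miss_rate(conflict, 1024, 16, 1))    # 1.0: every access misses
    print(miss_rate(conflict, 1024, 16, 2))    # 0.2: only the 2 cold misses
    print(miss_rate(sequential, 1024, 16, 1))  # 0.25
    print(miss_rate(sequential, 1024, 32, 1))  # 0.125: bigger blocks, more spatial locality
    print(amat(1, 0.25, 100))                  # 26.0 with assumed 1-cycle hit, 100-cycle penalty
```

These traces are chosen to favor higher associativity and larger blocks. Real workloads can behave differently: larger blocks with N fixed mean fewer blocks in total, and they raise the miss penalty, while higher associativity tends to lengthen hit time.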