Bruno Gavranović SYCO2 Compositional Deep Learning December 18, 2018 1 / 36

Compositional Deep Learning

Bruno Gavranović

Faculty of Electrical Engineering and Computing (FER), University of Zagreb, Croatia

bruno.gavranovic@fer.hr

December 18, 2018

Overview

- Usage of rudimentary category theory
- Neural networks
  - They’re compositional: you can stack layers and get better results
  - They’re discovering (compositional) structures in data
- Work in progress
- Experiments

Generative modelling - State of the art - 2018

We can generate completely realistic-looking images

Space of all possible images

Natural images form a low-dimensional manifold in their embedding space

Generative Adversarial Networks

But we have minimal control over the network output!

Source: http://dl-ai.blogspot.com/2017/08/gan-problems.html

Claim

It’s possible to assign semantics to the network training procedure using the same schemas from Functorial Data Migration.

| | Functorial Data Migration | Compositional Deep Learning |
|---|---|---|
| F : C → − | Set | Para |
| F is | Fixed | Learned |

Functorial data migration

Categorical schema generated by a graph G and a path equivalence relation: C := (G, ≃)

Schema: Beatle --Played--> Rock-and-roll instrument

A database instance is a functor F : C → Set

| Beatle | Played |
|---|---|
| George | Lead guitar |
| John | Rhythm guitar |
| Paul | Bass guitar |
| Ringo | Drums |

| Rock-and-roll instrument |
|---|
| Bass guitar |
| Drums |
| Keyboard |
| Lead guitar |
| Rhythm guitar |

In databases, we have sets of data and clear mappings between them.

Source: https://arxiv.org/abs/1803.05316
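As a rough illustration (not taken from the slides), the Beatles instance can be written down directly: a functor F : C → Set sends each object of the schema to a set and each arrow to a function between those sets. The names `F_objects`, `F_played` and `is_valid_instance` are hypothetical and chosen only for this sketch.

```python
# Sketch: a database instance as a functor F : C -> Set for the schema
#   Beatle --Played--> Rock-and-roll instrument
# All names here are illustrative assumptions, not part of the talk.

# F on objects: each schema object is sent to a set.
F_objects = {
    "Beatle": {"George", "John", "Paul", "Ringo"},
    "Rock-and-roll instrument": {
        "Bass guitar", "Drums", "Keyboard", "Lead guitar", "Rhythm guitar",
    },
}

# F on the arrow Played: a function between those sets, stored as a dict.
F_played = {
    "George": "Lead guitar",
    "John": "Rhythm guitar",
    "Paul": "Bass guitar",
    "Ringo": "Drums",
}


def is_valid_instance(objects, played):
    """Check that `played` is a total function Beatle -> instrument."""
    source = objects["Beatle"]
    target = objects["Rock-and-roll instrument"]
    return set(played) == source and set(played.values()) <= target
```

Here the mapping is fixed and fully known, which is the contrast the talk draws with the learned setting.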

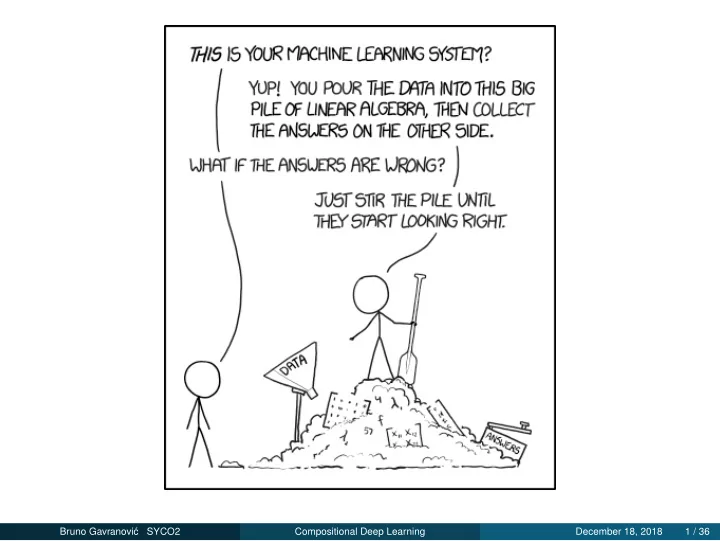

Neural networks

In machine learning, all we have is plenty of data, but no known implementations of functions.

| Input | Output |
|---|---|
| DataSample1 | ExpectedOutput1 |
| DataSample2 | ExpectedOutput2 |
| DataSample3 | ExpectedOutput3 |
| DataSample4 | ExpectedOutput4 |

Source: https://arxiv.org/abs/1703.10593

Style transfer

CycleGAN

Previous work

- Backprop as Functor
  - Compositional perspective on supervised learning
  - Category of learners Learn
  - Category of differentiable parametrized functions Para
- The Simple Essence of Automatic Differentiation
  - Compositional, side-effect free way of performing mode-independent automatic differentiation

Category of differentiable parametrized functions

Para:

- Objects a, b, c, ... are Euclidean spaces
- For each two objects a, b, we specify a set Para(a, b) whose elements are differentiable functions of type P × A → B.
- For every object a, we specify an identity morphism id_a ∈ Para(a, a), a function of type 1 × A → A, which is just a projection
- For every three objects a, b, c and morphisms f ∈ Para(a, b) and g ∈ Para(b, c), one specifies a morphism g ◦ f ∈ Para(a, c) in the following way:

$$\circ : (Q \times B \to C) \times (P \times A \to B) \to ((P \times Q) \times A \to C) \tag{1}$$

$$\circ\,(g, f) = \lambda((p, q), a) \to g(q, f(p, a)) \tag{2}$$

Note: Coherence conditions are valid only up to isomorphism! We can consider equivalence classes of morphisms or consider Para
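Equations (1) and (2) translate almost literally into code. The following is a minimal sketch, assuming a morphism of Para is modelled as a plain Python function taking `(params, input)`; differentiability is not enforced, and `scale`, `shift` and `layer` are hypothetical examples.

```python
# Sketch of composition in Para, following equations (1) and (2).
# A morphism is modelled as a plain Python function (params, input) -> output;
# differentiability is not checked here.

def compose(g, f):
    """Return g . f, which takes the paired parameters (p, q) and an input a."""
    def composite(params, a):
        p, q = params          # p parametrizes f, q parametrizes g
        return g(q, f(p, a))   # equation (2)
    return composite


def identity(_unit, a):
    """Identity morphism: a projection 1 x A -> A that ignores its parameter."""
    return a


# Two hypothetical one-dimensional "layers".
def scale(p, a):
    return p * a


def shift(q, b):
    return b + q


layer = compose(shift, scale)   # ((p, q), a) -> p * a + q
```

Composing with `identity` gives back the same function only after re-pairing the parameter with the unit value, which is one way to see why the coherence conditions hold only up to isomorphism.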

Category of learners

Learn: Let A and B be sets. A supervised learning algorithm, or simply learner, A → B is a tuple (P, I, U, r) where P is a set, and I, U, and r are functions of types:

- I : P × A → B
- U : P × A × B → P
- r : P × A × B → A

Update: $U_I(p, a, b) := p - \varepsilon \nabla_p E_I(p, a, b)$

Request: $r_I(p, a, b) := f_a\left(\frac{1}{\alpha_B} \nabla_a E_I(p, a, b)\right)$
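To make the tuple (P, I, U, r) concrete, here is a small sketch of a one-parameter learner. Several things are assumptions made only for this example and are not stated on the slide: the model I(p, a) = p · a, a quadratic error E_I(p, a, b) = ½ (I(p, a) − b)², α_B = 1, and f_a(x) = a − x. The function names are hypothetical.

```python
# Sketch of a learner (P, I, U, r) with P = A = B = the real numbers.
# Assumptions (not from the slides): quadratic error, alpha_B = 1,
# f_a(x) = a - x, and the toy model I(p, a) = p * a.

EPSILON = 0.1  # step size (the slide's epsilon); value chosen arbitrarily


def implement(p, a):
    """I : P x A -> B, here the hypothetical model I(p, a) = p * a."""
    return p * a


def update(p, a, b):
    """U_I(p, a, b) = p - eps * dE/dp for E = 0.5 * (I(p, a) - b)**2."""
    grad_p = (implement(p, a) - b) * a
    return p - EPSILON * grad_p


def request(p, a, b):
    """r_I(p, a, b) = a - dE/da, under the assumptions listed above."""
    grad_a = (implement(p, a) - b) * p
    return a - grad_a
```

Note that both `update` and `request` hard-code the gradient of the same error function, so the cost function is baked into the learner rather than supplied separately.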

Many overlapping notions

The update function U_I(p, a, b) := p − ε∇_p E_I(p, a, b) is computing two different things:

- It’s calculating the gradient p_g = ∇_p E_I(p, a, b)
- It’s computing the parameter update by the rule of stochastic gradient descent: (p, p_g) → p − εp_g.

The request function r in itself encodes the computation of ∇_a E_I.

Inside both r and U is embedded a notion of a cost function, which is fixed for all learners.

Problem: These concepts are not separated into abstractions that reuse and compose well!

The Simple Essence of Automatic Differentiation

- “Category of differentiable functions” is tricky to get right in a computational setting!
- Implementing an efficient composable differentiation framework is more art than science
- Chain rule isn’t compositional: (g ◦ f)′(x) = g′(f(x)) · f′(x)
  - Derivative of the composition can’t be expressed only as a composition of derivatives!
- You need to store the output of every function you evaluate
- Every deep learning framework has a carefully crafted implementation of side-effects
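A tiny numeric sketch of why the chain rule needs more than the two derivatives: g′ has to be evaluated at f(x), so the intermediate value must be available. The functions `f`, `g` and their hand-written derivatives are hypothetical examples.

```python
# Sketch: the chain rule needs the intermediate value f(x), not just f' and g'.

def f(x):
    return 3.0 * x


def df(x):          # f'(x)
    return 3.0


def g(y):
    return y * y


def dg(y):          # g'(y)
    return 2.0 * y


def derivative_of_composite(x):
    """(g . f)'(x) = g'(f(x)) * f'(x)."""
    y = f(x)               # the intermediate value f(x) has to be kept around ...
    return dg(y) * df(x)   # ... because g' is evaluated at f(x), not at x
```

Naively composing the derivative functions, `dg(df(x))`, gives a different number, which is the sense in which the derivative of a composition is not just a composition of derivatives.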

The Simple Essence of Automatic Differentiation

- Automatic differentiation - category D of differentiable functions
- Morphism A → B is a function of type a → b × (a ⊸ b)
- Composition: g ◦ f = λa → let (b, f′) = f(a), (c, g′) = g(b) in (c, g′ ◦ f′)
- Structure for splitting and joining wires
- Generalization to more than just linear maps
  - Forward-mode automatic differentiation
  - Reverse-mode automatic differentiation
  - Backpropagation - D_{Dual_{→+}}
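The composition rule for D can be sketched in Python by letting every morphism return its value together with its derivative as a linear map. This is an illustrative sketch only: linear maps are modelled as ordinary Python closures, and `square` and `triple` are hypothetical example morphisms.

```python
# Sketch of the category D: a morphism maps a to (b, linear map a -o b).
# Linear maps are modelled as plain closures; nothing enforces linearity.

def compose(g, f):
    """g . f = \\a -> let (b, f') = f(a), (c, g') = g(b) in (c, g' . f')."""
    def composite(a):
        b, f_lin = f(a)
        c, g_lin = g(b)
        return c, (lambda da: g_lin(f_lin(da)))   # g' . f'
    return composite


def square(a):
    return a * a, (lambda da: 2.0 * a * da)


def triple(a):
    return 3.0 * a, (lambda da: 3.0 * da)


h = compose(square, triple)   # a -> (3a)^2
```

Because each morphism carries its own derivative, the composite is built without any shared mutable state: `h(2.0)` returns both the value and a linear map that applies the derivative at that point.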

BackpropFunctor + SimpleAD

- BackpropFunctor doesn’t mention categorical differentiation
- SimpleAD doesn’t talk about learning itself
- Both are talking about similar concepts
- For each P × A → B in Hom(a, b) in Para, we’d like to specify a set of functions of type P × A → B × ((P × A) ⊸ B) instead of just P × A → B
- Separate the structure needed for parametricity and the structure needed for composable differentiability
- Solution: ?

Main result

- Specify the semantics of your datasets with a categorical schema C := (G, ≃)
- Learn a functor P : C → Para
  - Start with a functor Free(G) → Para
  - Iteratively update it using samples from your datasets
  - The learned functor will also preserve ≃
- Novel regularization mechanism for neural networks.

Schema C: Horse ⇄ Zebra, with f : Horse → Zebra and g : Zebra → Horse, and path equations f . g = id_h, g . f = id_z

Image under P: R^{64×64×3} ⇄ R^{64×64×3}, with morphisms Pf and Pg

R^{64×64×3} ⇄ R^{64×64×3}, with morphisms Pf and Pg

- Start with a functor Free(G) → Para
  - Specify how it acts on objects
  - Start with randomly initialized morphisms
    - Every morphism in Para is a function parametrized by some P
    - Initializing P randomly => “initializing” a morphism
- Get data samples d_a, d_b, ... corresponding to every object in C and in every iteration:
  - For every morphism (f : A → B) in the transitive reduction of morphisms in C, find Pf and minimize the distance between (Pf)(d_a) and the corresponding image manifold
  - For all path equations from A → B where f = g, compute both f(R_a) and g(R_a). Calculate the distance d = ||f(R_a) − g(R_a)||. Minimize d and update all parameters of f and g.
- The path equation regularization term forces the optimization procedure to select functors which preserve the path equivalence relation and, thus, C
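The path-equation step can be sketched on a toy problem. Everything below is an assumption made for illustration: `Pf` and `Pg` are one-parameter scalar maps rather than neural networks, the gradients are written by hand, the starting values and learning rate are arbitrary, and the adversarial "distance to the image manifold" term is left out entirely. Only the equation "f then g equals the identity" is enforced.

```python
# Toy sketch of the path-equation term only. Pf and Pg are hypothetical
# one-parameter maps, not the neural networks used in the talk.

def Pf(p, x):
    return p * x


def Pg(q, y):
    return q * y


def path_equation_loss(p, q, samples):
    """Mean squared distance between the path 'f then g' and the identity."""
    return sum((Pg(q, Pf(p, x)) - x) ** 2 for x in samples) / len(samples)


def train(p, q, samples, lr=0.05, steps=500):
    """Gradient descent on the path-equation loss, updating both p and q."""
    n = len(samples)
    for _ in range(steps):
        residuals = [Pg(q, Pf(p, x)) - x for x in samples]
        grad_p = sum(2.0 * r * q * x for r, x in zip(residuals, samples)) / n
        grad_q = sum(2.0 * r * p * x for r, x in zip(residuals, samples)) / n
        p, q = p - lr * grad_p, q - lr * grad_q
    return p, q


samples = [1.0, -2.0, 0.5]
p, q = train(0.5, 0.3, samples)
```

After training, `p * q` is close to 1, so the learned pair of maps respects the path equation even though neither map was given a target output.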

Some possible schemas

- This procedure generalizes several existing network architectures
- But it also allows us to ask: what other interesting schemas are possible?

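- Figure: GAN. Latent space → Image, with f : Latent space → Image; no equations
- Figure: CycleGAN. Horse ⇄ Zebra, with f : Horse → Zebra and g : Zebra → Horse; f . g = id_h, g . f = id_z
- Figure: Equalizer. A → B ⇉ C, with f : A → B and g, h : B → C; f . h = f . g
- Figure: Product. A ⇄ B × C, with f : A → B × C and g : B × C → A; f . g = id_A, g . f = id_{B×C}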

Equalizer schema

A → B ⇉ C, with f : A → B and g, h : B → C; f . h = f . g

Given two networks h, g : B → C, find a subset B′ ⊆ B such that B′ = {b ∈ B | h(b) = g(b)}
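On a finite domain the set B′ can be computed by brute force. The sketch below is only meant to illustrate the definition: `h` and `g` are hypothetical scalar functions standing in for networks, and the tolerance `tol` is an assumption needed because real-valued outputs are rarely exactly equal.

```python
# Sketch: the equalizer subset B' = {b in B | h(b) = g(b)} on a finite domain.
# h and g are hypothetical stand-ins for the two networks B -> C.

def equalizer(h, g, domain, tol=1e-9):
    """Return the elements of `domain` on which h and g agree (within tol)."""
    return [b for b in domain if abs(h(b) - g(b)) <= tol]


def h(b):
    return b * b


def g(b):
    return b + 2


subset = equalizer(h, g, [-2, -1, 0, 1, 2, 3])
```

In the learned setting the subset is not enumerated like this; instead a map f : A → B is trained so that f . h = f . g holds on samples.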

Consider two sets of images

- Left: Background of color X with a circle of color Y, of fixed size and position
- Right: Background of color Z

Product schema

A ⇄ B × C, with f : A → B × C and g : B × C → A; f . g = id_A, g . f = id_{B×C}

The same learning algorithm can learn to remove both types of objects
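The two path equations of the product schema can be checked on a toy example where both maps are known exactly. This is only an analogy under stated assumptions: A is the integers 0..99 rather than a space of images, and `split` and `merge` are hypothetical hand-written maps rather than learned networks.

```python
# Toy sketch of the product schema A <-> B x C with exact, hand-written maps.

def split(a):
    """f : A -> B x C, here splitting 0..99 into (tens, ones)."""
    return divmod(a, 10)


def merge(bc):
    """g : B x C -> A, recombining the two factors."""
    b, c = bc
    return 10 * b + c


A = range(100)
BxC = [(b, c) for b in range(10) for c in range(10)]

# Path equations of the product schema, in the slides' left-to-right order.
f_then_g_is_id_A = all(merge(split(a)) == a for a in A)
g_then_f_is_id_BxC = all(split(merge(bc)) == bc for bc in BxC)
```

In the talk's setting the same two equations are enforced only approximately, as regularization terms on learned maps.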

Experiments

- CelebA dataset of 200K images of human faces
- Conveniently, there is a “glasses” annotation

PC := R^{32×32×3} ⇄ R^{32×32×3} × R^{100}, with morphisms Pf and Pg; f . g = id_H, g . f = id_Z

- Collection of neural networks with a total of 40M parameters
- 7 h of training on a GeForce GTX 1080
- Successful results

Figure: Same image, different Z vector

Figure: Same Z vector, different image

Figure: Top row: original image; bottom row: glasses removed

Conclusions

- Specify a collection of neural networks which are closed under composition
- Specify composition invariants
- Given the right data and parametrized functions of sufficient complexity, it’s possible to train them with the right inductive bias
- Common language to talk about semantics of data and training procedure

Future work

- This is still rough around the edges
- What other schemas can we think of?
- Can we quantify the type of information we’re giving to the network using these schemas?
- Do data migration functors make sense in the context of neural networks?
- Can game-theoretic properties of Generative Adversarial Networks be expressed categorically?
- Coding these ideas in Idris

Thank you!

Bruno Gavranović

Faculty of Electrical Engineering and Computing, University of Zagreb

bruno.gavranovic@fer.hr

Feel free to drop me an email with any questions!
