SLIDE 1

Best rank-one approximation

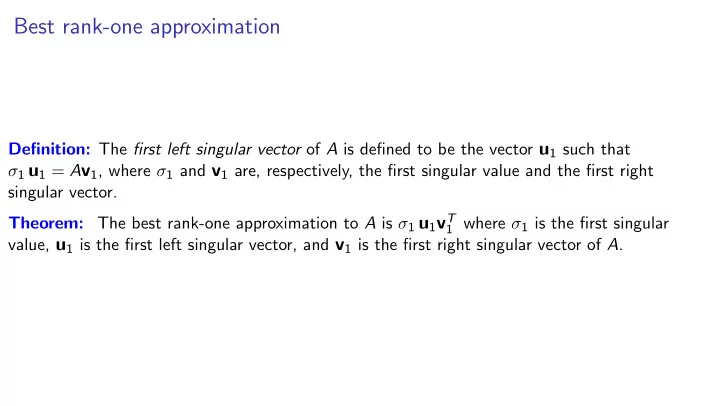

Definition: The first left singular vector of $A$ is defined to be the vector $u_1$ such that $\sigma_1 u_1 = A v_1$, where $\sigma_1$ and $v_1$ are, respectively, the first singular value and the first right singular vector.

Theorem: The best rank-one approximation to $A$ is $\sigma_1 u_1 v_1^T$, where $\sigma_1$ is the first singular value, $u_1$ is the first left singular vector, and $v_1$ is the first right singular vector of $A$.

SLIDE 2 Best rank-one approximation: example

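Example: For the matrix $A = \begin{bmatrix} 1 & 4 \\ 5 & 2 \end{bmatrix}$, the first right singular vector is $v_1 \approx \begin{bmatrix} .78 \\ .63 \end{bmatrix}$ and the first singular value $\sigma_1$ is about 6.1. The first left singular vector is $u_1 \approx \begin{bmatrix} .54 \\ .84 \end{bmatrix}$, which satisfies $\sigma_1 u_1 = A v_1$.

We then have

$$\tilde{A} = \sigma_1 u_1 v_1^T \approx 6.1 \begin{bmatrix} .54 \\ .84 \end{bmatrix} \begin{bmatrix} .78 & .63 \end{bmatrix} \approx \begin{bmatrix} 2.6 & 2.1 \\ 4.0 & 3.2 \end{bmatrix}$$

$$A - \tilde{A} \approx \begin{bmatrix} 1 & 4 \\ 5 & 2 \end{bmatrix} - \begin{bmatrix} 2.6 & 2.1 \\ 4.0 & 3.2 \end{bmatrix} \approx \begin{bmatrix} -1.56 & 1.93 \\ 1.00 & -1.23 \end{bmatrix}$$

so the squared Frobenius norm of $A - \tilde{A}$ is $1.56^2 + 1.93^2 + 1^2 + 1.23^2 \approx 8.7$.

Check: $\|A - \tilde{A}\|_F^2 = \|A\|_F^2 - \sigma_1^2 \approx 8.7$. ✓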
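The numbers in this example can be reproduced with a short NumPy sketch. It assumes NumPy's `np.linalg.svd` rather than the course's own module, and the sign flip is only an illustrative convention, since singular vectors are determined up to sign.

```python
import numpy as np

A = np.array([[1.0, 4.0],
              [5.0, 2.0]])

# NumPy returns V transposed: the rows of Vt are the right singular vectors.
U, S, Vt = np.linalg.svd(A)
sigma1, u1, v1 = S[0], U[:, 0], Vt[0]

# Flip both vectors together so that v1 has a positive first entry;
# this keeps sigma1 * u1 = A v1 true.
if v1[0] < 0:
    u1, v1 = -u1, -v1

A_tilde = sigma1 * np.outer(u1, v1)    # best rank-one approximation
residual = A - A_tilde

print(np.round(v1, 2), round(float(sigma1), 1), np.round(u1, 2))
print(np.round(A_tilde, 1))
# Squared Frobenius norm of the residual, computed two ways.
print(np.sum(residual**2), np.sum(A**2) - sigma1**2)
```

The last line prints the squared Frobenius norm of the residual next to $\|A\|_F^2 - \sigma_1^2$; the two should agree up to rounding.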

SLIDE 3 The closest one-dimensional affine space

In the trolley-line problem, the line must go through the origin: closest one-dimensional vector space. Perhaps a line not through the origin is much closer. An arbitrary line (one not necessarily passing through the origin) is a one-dimensional affine space.

Given points $a_1, \ldots, a_m$,

- choose a point $\bar{a}$ and translate each of the input points by subtracting $\bar{a}$: $a_1 - \bar{a}, \ldots, a_m - \bar{a}$
- find the one-dimensional vector space closest to these translated points, and then translate that vector space by adding back $\bar{a}$.

The best choice of $\bar{a}$ is the centroid of the input points, the vector $\bar{a} = \frac{1}{m}(a_1 + \cdots + a_m)$. (The proof is lovely; maybe we'll see it later.)

Translating the points by subtracting off the centroid is called centering the points.
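To see why centering can matter, here is a minimal sketch on made-up data (the four points are hypothetical and chosen to lie near a line that misses the origin). It uses NumPy's `np.linalg.svd` to find the closest line through the origin, before and after centering.

```python
import numpy as np

def squared_distance_to_line(points, direction):
    """Sum of squared distances from the rows of `points` to Span{direction}."""
    v = direction / np.linalg.norm(direction)
    return np.sum(points**2) - np.sum((points @ v)**2)

# Hypothetical data: points close to the line y = x + 10.
points = np.array([[0.0, 10.0], [1.0, 11.1], [2.0, 11.9], [3.0, 13.0]])

# Without centering: closest line through the origin.
v_origin = np.linalg.svd(points)[2][0]
err_origin = squared_distance_to_line(points, v_origin)

# With centering: subtract the centroid, then find the closest line through the origin.
centroid = points.mean(axis=0)
centered = points - centroid
v_centered = np.linalg.svd(centered)[2][0]
err_affine = squared_distance_to_line(centered, v_centered)

print(err_origin, err_affine)
```

The second number is the sum of squared distances to the affine line through the centroid, which for data like this should be far smaller than the first.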

SLIDE 6

Politics revisited

We center the voting data and find the closest one-dimensional vector space Span $\{v_1\}$. Now projection along $v_1$ gives better spread.

Look at the coordinate representation in terms of $v_1$: which of the senators to the left of the origin are Republican?

>>> {r for r in senators if is_neg[r] and is_Repub[r]}
{'Collins', 'Snowe', 'Chafee'}

Similarly, only three of the senators to the right of the origin are Democrats.

SLIDE 7

Visualization revisited

We can now turn a bunch of high-dimensional vectors into a bunch of numbers and plot the numbers on a number line.

Dimension reduction: what about turning a bunch of high-dimensional vectors into vectors in $\mathbb{R}^2$ or $\mathbb{R}^3$ or $\mathbb{R}^{10}$?

SLIDE 8 Closest 1-dimensional vector space (trolley-line-location problem):

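- input: Vectors $a_1, \ldots, a_m$
- output: Orthonormal basis $\{v_1\}$ for dim-1 vector space $V_1$ that minimizes $\sum_i (\text{distance from } a_i \text{ to } V_1)^2$

We saw: $\sum_i (\text{distance from } a_i \text{ to Span } \{v_1\})^2 = \|A\|_F^2 - \|A v_1\|^2$

Therefore: the best vector $v_1$ is the unit vector that maximizes $\|A v_1\|$.

Closest $k$-dimensional vector space:

- input: Vectors $a_1, \ldots, a_m$, integer $k$
- output: Orthonormal basis $\{v_1, \ldots, v_k\}$ for dim-$k$ vector space $V_k$ that minimizes $\sum_i (\text{distance from } a_i \text{ to } V_k)^2$

Let $v_1, \ldots, v_k$ be an orthonormal basis for a subspace $V$. Each input vector splits into a component in $V$ and a component orthogonal to $V$:

$$a_1^{\perp V} = a_1 - a_1^{\parallel V}, \quad \ldots, \quad a_m^{\perp V} = a_m - a_m^{\parallel V}$$

By the Pythagorean Theorem,

$$\|a_1^{\perp V}\|^2 = \|a_1\|^2 - \|a_1^{\parallel V}\|^2, \quad \ldots, \quad \|a_m^{\perp V}\|^2 = \|a_m\|^2 - \|a_m^{\parallel V}\|^2$$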

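SLIDE 10

Thus, for an orthonormal basis $v_1, \ldots, v_k$ of $V$, each projection satisfies $\|a_i^{\parallel V}\|^2 = \langle a_i, v_1 \rangle^2 + \cdots + \langle a_i, v_k \rangle^2$, and summing over the rows of $A$ gives

$$\sum_i (\text{dist from } a_i \text{ to } V)^2 = \|A\|_F^2 - \left( \|A v_1\|^2 + \cdots + \|A v_k\|^2 \right)$$

Therefore choosing a $k$-dimensional space $V$ minimizing the sum of squared distances to $V$ is equivalent to choosing $k$ orthonormal vectors $v_1, \ldots, v_k$ to maximize $\|A v_1\|^2 + \cdots + \|A v_k\|^2$.

How to choose such vectors? A greedy algorithm.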
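The identity relating squared distances to $\|A\|_F^2$ can be checked numerically. The sketch below uses a random matrix and a random subspace (both arbitrary, chosen only for illustration) and compares the directly computed sum of squared distances with $\|A\|_F^2 - \sum_j \|A v_j\|^2$.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(6, 4))            # rows a_1..a_6 are the input vectors
k = 2

# Orthonormal basis v_1..v_k of an arbitrary k-dimensional subspace V (columns of Q).
Q, _ = np.linalg.qr(rng.normal(size=(4, k)))

projections = A @ Q @ Q.T              # row i is the projection of a_i onto V
sum_sq_dist = np.sum((A - projections)**2)

frobenius_sq = np.sum(A**2)
captured = np.sum((A @ Q)**2)          # ||A v_1||^2 + ... + ||A v_k||^2

print(sum_sq_dist, frobenius_sq - captured)
```

The two printed values should agree up to floating-point rounding, for any orthonormal basis, not just the optimal one.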

SLIDE 11 Closest dimension-k vector space

Computational Problem: closest low-dimensional subspace:

- input: Vectors $a_1, \ldots, a_m$ and positive integer $k$
- output: basis for dim-$k$ vector space $V_k$ that minimizes $\sum_i (\text{distance from } a_i \text{ to } V_k)^2$

Algorithm for one dimension: choose the unit-norm vector $v$ that maximizes $\|Av\|$.

Natural generalization of this algorithm in which an orthonormal basis is sought.

Algorithm: In the $i$th iteration, select the unit vector $v$ that maximizes $\|Av\|$ among those vectors orthogonal to all previously selected vectors:

- $v_1$ = norm-one vector $v$ maximizing $\|Av\|$,
- $v_2$ = norm-one vector $v$ orthogonal to $v_1$ that maximizes $\|Av\|$,
- $v_3$ = norm-one vector $v$ orthogonal to $v_1$ and $v_2$ that maximizes $\|Av\|$, and so on.

    def find_right_singular_vectors(A):
        for i = 1, 2, ..., min{m, n}
            v_i = arg max{ ||Av|| : ||v|| = 1, v is orthog. to v_1, v_2, ..., v_{i-1} }
        until Av = 0 for every vector v orthogonal to v_1, ..., v_i
        Define σ_i = ||A v_i||.
        return [v_1, v_2, ..., v_r]        (r = number of iterations)
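The pseudocode leaves the arg max step abstract. One way to make it concrete, offered here only as a sketch and not as the course's method, is to note that the constrained maximizer is a top eigenvector of $P A^T A P$, where $P$ projects onto the orthogonal complement of the vectors chosen so far. The parameter `rel_tol` is an assumed cutoff for deciding that the remaining singular values are zero.

```python
import numpy as np

def find_right_singular_vectors(A, rel_tol=1e-6):
    """Greedy selection of right singular vectors.

    Returns (V, sigmas): the rows of V are v_1..v_r and sigmas[i] = ||A v_i||.
    rel_tol is an assumed relative cutoff for treating a singular value as zero.
    """
    m, n = A.shape
    scale = np.linalg.norm(A)          # Frobenius norm, used to scale the cutoff
    vs, sigmas = [], []
    for _ in range(min(m, n)):
        # Projector onto the orthogonal complement of the vectors chosen so far.
        P = np.eye(n) - sum((np.outer(v, v) for v in vs), np.zeros((n, n)))
        # A top eigenvector of P A^T A P maximizes ||Av|| subject to the
        # orthogonality constraints; the top eigenvalue is the maximum of ||Av||^2.
        eigvals, eigvecs = np.linalg.eigh(P @ A.T @ A @ P)   # ascending order
        sigma = np.sqrt(max(eigvals[-1], 0.0))
        if sigma <= rel_tol * scale:   # Av = 0 for every remaining direction
            break
        vs.append(eigvecs[:, -1])
        sigmas.append(sigma)
    return np.array(vs), np.array(sigmas)

A = np.array([[1.0, 4.0], [5.0, 2.0]])
V_rows, sigmas = find_right_singular_vectors(A)
print(sigmas)
```

Using an eigenvalue routine here is a shortcut for illustration; it sidesteps the question of how to solve the maximization from scratch.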

SLIDE 12

Closest dimension-k vector space

Computational Problem: closest low-dimensional subspace:

- input: Vectors $a_1, \ldots, a_m$ and positive integer $k$
- output: basis for dim-$k$ vector space $V_k$ that minimizes $\sum_i (\text{distance from } a_i \text{ to } V_k)^2$

Algorithm: In the $i$th iteration, select the unit vector $v$ that maximizes $\|Av\|$ among those vectors orthogonal to all previously selected vectors. $\Rightarrow v_1, \ldots, v_k$

Theorem: For each $k \geq 0$, the first $k$ right singular vectors span the $k$-dimensional space $V_k$ that minimizes $\sum_i (\text{distance from } a_i \text{ to } V_k)^2$.

Proof: by induction on $k$. The case $k = 0$ is trivial. Assume the theorem holds for $k = q - 1$. We prove it for $k = q$.

Suppose $W$ is a $q$-dimensional space. Let $w_q$ be a unit vector in $W$ that is orthogonal to $v_1, \ldots, v_{q-1}$. (Why is there such a vector?) Let $w_1, \ldots, w_{q-1}$ be vectors such that $w_1, \ldots, w_q$ form an orthonormal basis for $W$. (Why are there such vectors?)

By choice of $v_q$, $\|A v_q\| \geq \|A w_q\|$.

By the induction hypothesis, Span $\{v_1, \ldots, v_{q-1}\}$ is the $(q-1)$-dimensional space minimizing the sum of squared distances, so

$$\|A v_1\|^2 + \cdots + \|A v_{q-1}\|^2 \geq \|A w_1\|^2 + \cdots + \|A w_{q-1}\|^2$$

Squaring the first inequality and adding it to the second gives $\|A v_1\|^2 + \cdots + \|A v_q\|^2 \geq \|A w_1\|^2 + \cdots + \|A w_q\|^2$, so Span $\{v_1, \ldots, v_q\}$ is at least as close to the input vectors as $W$.
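The theorem can be sanity-checked empirically. The sketch below (random data and random competitor subspaces, all chosen only for illustration) compares the span of the first $k$ right singular vectors, as computed by NumPy's `np.linalg.svd`, against many random $k$-dimensional subspaces $W$.

```python
import numpy as np

def sum_sq_dist(A, basis):
    """Sum of squared distances from the rows of A to the span of the
    orthonormal columns of `basis`."""
    return np.sum(A**2) - np.sum((A @ basis)**2)

rng = np.random.default_rng(1)
A = rng.normal(size=(8, 5))
k = 2

Vt = np.linalg.svd(A)[2]
best = sum_sq_dist(A, Vt[:k].T)        # span of the first k right singular vectors

# Compare against many random k-dimensional subspaces W.
random_errors = []
for _ in range(200):
    W, _ = np.linalg.qr(rng.normal(size=(5, k)))
    random_errors.append(sum_sq_dist(A, W))

print(best, min(random_errors))
```

A check like this cannot prove the theorem, but no random subspace should ever beat the singular-vector subspace.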

SLIDE 13

    def find_right_singular_vectors(A):
        for i = 1, 2, ..., min{m, n}
            v_i = arg max{ ||Av|| : ||v|| = 1, v is orthog. to v_1, v_2, ..., v_{i-1} }
        until Av = 0 for every vector v orthogonal to v_1, ..., v_i
        Define σ_i = ||A v_i||.
        return [v_1, v_2, ..., v_r]        (r = number of iterations)

Proposition: The singular values $\sigma_1, \ldots, \sigma_r$ are positive and in nonincreasing order.

Proof: $\sigma_i = \|A v_i\|$ and the norm of a vector is nonnegative. The algorithm stops before it would choose a vector $v_i$ such that $\|A v_i\|$ is zero, so the singular values are positive. The first right singular vector is chosen most freely, followed by the second, and so on; each maximum is taken over a smaller set of candidates, so it cannot exceed the previous one. QED

Proposition: Right singular vectors are orthonormal.

Proof: In iteration $i$, $v_i$ is chosen from among vectors that have norm one and are orthogonal to $v_1, \ldots, v_{i-1}$. QED

Theorem: Let $A$ be an $m \times n$ matrix, and let $a_1, \ldots, a_m$ be its rows. Let $v_1, \ldots, v_r$ be its right singular vectors, and let $\sigma_1, \ldots, \sigma_r$ be its singular values. For $k = 1, 2, \ldots, r$, Span $\{v_1, \ldots, v_k\}$ is the $k$-dimensional vector space $V$ that minimizes

$$(\text{distance from } a_1 \text{ to } V)^2 + \cdots + (\text{distance from } a_m \text{ to } V)^2$$

Proposition: Left singular vectors $u_1, \ldots, u_r$ are orthonormal. (See text for proof.)

SLIDE 14 Closest k-dimensional affine space

Use the centering technique: Find the centroid $\bar{a}$ of the input points $a_1, \ldots, a_m$, and subtract it from each of the input points. Then find a basis $v_1, \ldots, v_k$ for the $k$-dimensional vector space closest to $a_1 - \bar{a}, \ldots, a_m - \bar{a}$. The $k$-dimensional affine space closest to the original points $a_1, \ldots, a_m$ is

$$\{\bar{a} + v : v \in \text{Span } \{v_1, \ldots, v_k\}\}$$
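The centering technique for general $k$ might be sketched as follows. The function names and the test data are hypothetical; the basis is taken from NumPy's `np.linalg.svd`, whose rows of `Vt` are the right singular vectors.

```python
import numpy as np

def closest_affine_space(points, k):
    """Return (centroid, basis); the rows of basis are orthonormal v_1..v_k."""
    centroid = points.mean(axis=0)
    centered = points - centroid
    Vt = np.linalg.svd(centered, full_matrices=False)[2]
    return centroid, Vt[:k]

def project_onto_affine_space(points, centroid, basis):
    """Closest point in {centroid + v : v in Span(basis)} to each row of points."""
    return centroid + (points - centroid) @ basis.T @ basis

# Hypothetical points lying exactly on a plane in R^3 that misses the origin.
rng = np.random.default_rng(2)
coeffs = rng.normal(size=(10, 2))
directions = np.array([[1.0, 0.0, 1.0], [0.0, 1.0, 1.0]])
points = np.array([5.0, -3.0, 2.0]) + coeffs @ directions

centroid, basis = closest_affine_space(points, k=2)
reconstructed = project_onto_affine_space(points, centroid, basis)
print(np.max(np.abs(points - reconstructed)))
```

Because the test points lie exactly on a two-dimensional affine space, projecting them onto the recovered space should leave them essentially unchanged.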

SLIDE 15 Deriving the Singular Value Decomposition

Let $A$ be an $m \times n$ matrix. We have defined a procedure to obtain

- $v_1, \ldots, v_r$: the right singular vectors (orthonormal by Proposition)
- $\sigma_1, \ldots, \sigma_r$: the singular values (positive)
- $u_1, \ldots, u_r$: the left singular vectors (orthonormal by Proposition)

such that $\sigma_i u_i = A v_i$ for $i = 1, \ldots, r$.

Express the equations using matrix-matrix multiplication:

$$A \begin{bmatrix} v_1 & \cdots & v_r \end{bmatrix} = \begin{bmatrix} \sigma_1 u_1 & \cdots & \sigma_r u_r \end{bmatrix}$$

We rewrite the equation as

$$A \begin{bmatrix} v_1 & \cdots & v_r \end{bmatrix} = \begin{bmatrix} u_1 & \cdots & u_r \end{bmatrix} \begin{bmatrix} \sigma_1 & & \\ & \ddots & \\ & & \sigma_r \end{bmatrix}$$

SLIDE 16

Deriving the Singular Value Decomposition

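We rewrite the equation as

$$A \begin{bmatrix} v_1 & \cdots & v_r \end{bmatrix} = \begin{bmatrix} u_1 & \cdots & u_r \end{bmatrix} \begin{bmatrix} \sigma_1 & & \\ & \ddots & \\ & & \sigma_r \end{bmatrix}$$

Assume the number $r$ of singular values is $n$. Then the matrix $\begin{bmatrix} v_1 & \cdots & v_n \end{bmatrix}$ is square and its columns are orthonormal, so it is an orthogonal matrix, so its inverse is its transpose. Multiplying both sides of the equation on the right by this transpose, we obtain

$$A = \begin{bmatrix} u_1 & \cdots & u_n \end{bmatrix} \begin{bmatrix} \sigma_1 & & \\ & \ddots & \\ & & \sigma_n \end{bmatrix} \begin{bmatrix} v_1^T \\ \vdots \\ v_n^T \end{bmatrix}$$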

SLIDE 17

Deriving the Singular Value Decomposition

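Assume the number $r$ of singular values is $n$. Then the matrix $\begin{bmatrix} v_1 & \cdots & v_n \end{bmatrix}$ is square and its columns are orthonormal, so it is an orthogonal matrix, so its inverse is its transpose. Multiplying both sides of $A \begin{bmatrix} v_1 & \cdots & v_n \end{bmatrix} = \begin{bmatrix} u_1 & \cdots & u_n \end{bmatrix} \Sigma$ on the right by this transpose, we obtain

$$A = \begin{bmatrix} u_1 & \cdots & u_n \end{bmatrix} \begin{bmatrix} \sigma_1 & & \\ & \ddots & \\ & & \sigma_n \end{bmatrix} \begin{bmatrix} v_1^T \\ \vdots \\ v_n^T \end{bmatrix}$$

That is, $A = U \Sigma V^T$, where $U$ and $V$ are column-orthogonal and $\Sigma$ is diagonal with positive diagonal elements. This is called the (compact) singular value decomposition (SVD) of $A$.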
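The derivation can be traced numerically. In this sketch the right singular vectors and singular values come from NumPy's `np.linalg.svd` (an assumption; the slides use their own procedure), while the left singular vectors are built from the defining equation $\sigma_i u_i = A v_i$. The random matrix is arbitrary and almost surely has $r = n$.

```python
import numpy as np

rng = np.random.default_rng(3)
A = rng.normal(size=(5, 3))            # m = 5, n = 3; almost surely r = n = 3

# Right singular vectors (columns of V) and singular values from NumPy.
_, sigmas, Vt = np.linalg.svd(A, full_matrices=False)
V = Vt.T

# Define each left singular vector by sigma_i u_i = A v_i.
U = (A @ V) / sigmas                   # divides column i by sigma_i
Sigma = np.diag(sigmas)

print(np.allclose(A @ V, U @ Sigma))       # A V = U Sigma
print(np.allclose(U.T @ U, np.eye(3)))     # columns of U are orthonormal
print(np.allclose(A, U @ Sigma @ V.T))     # A = U Sigma V^T
```

Each check mirrors one step of the derivation: the matrix equation, the orthonormality of the left singular vectors, and the final factorization.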

SLIDE 18

Existence of SVD

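Lemma: Each row of $A$ lies in the span of the right singular vectors.

The procedure, for reference:

    def find_right_singular_vectors(A):
        for i = 1, 2, ..., min{m, n}
            v_i = arg max{ ||Av|| : ||v|| = 1, v is orthog. to v_1, v_2, ..., v_{i-1} }
            σ_i = ||A v_i||
        until Av = 0 for every vector v orthogonal to v_1, ..., v_i
        let r be the final value of the loop variable i.
        return [v_1, v_2, ..., v_r]

Proof: Let $V = \text{Span } \{v_1, \ldots, v_r\}$. By the termination condition, $Av = 0$ for every vector $v$ orthogonal to $V$. For each row $a_i$, write $a_i = a_i^{\parallel V} + a_i^{\perp V}$. Since $a_i^{\perp V}$ is orthogonal to $V$, we have $A a_i^{\perp V} = 0$, and entry $i$ of that product is $\langle a_i, a_i^{\perp V} \rangle$. Therefore

$$0 = \langle a_i, a_i^{\perp V} \rangle = \langle a_i^{\parallel V} + a_i^{\perp V}, a_i^{\perp V} \rangle = \langle a_i^{\parallel V}, a_i^{\perp V} \rangle + \langle a_i^{\perp V}, a_i^{\perp V} \rangle = 0 + \|a_i^{\perp V}\|^2$$

so $a_i^{\perp V} = 0$. This shows $a_i = a_i^{\parallel V}$, which shows that $a_i$ lies in $V$. QED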
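The lemma is most interesting when $A$ is rank-deficient, so that there are fewer right singular vectors than columns. The following sketch uses a hypothetical rank-2 matrix and NumPy's `np.linalg.svd`; the tolerance used to count positive singular values is an assumption.

```python
import numpy as np

# Hypothetical rank-2 matrix with 4 rows in R^3
# (third row = first + second, fourth row = 2 * first).
A = np.array([[1.0, 0.0, 2.0],
              [0.0, 1.0, 1.0],
              [1.0, 1.0, 3.0],
              [2.0, 0.0, 4.0]])

sigmas, Vt = np.linalg.svd(A)[1:]
r = int(np.sum(sigmas > 1e-10 * sigmas[0]))   # number of positive singular values
V_r = Vt[:r]                                   # rows are v_1..v_r

parallel = A @ V_r.T @ V_r                     # row i is the projection of a_i onto V
perpendicular = A - parallel                   # row i is the component orthogonal to V

print(r, np.max(np.abs(perpendicular)))
```

The orthogonal components should vanish up to rounding, which is what the lemma asserts.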

SLIDE 19

Existence, continued

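By the lemma, each row $a_i$ lies in Span $\{v_1, \ldots, v_r\}$, so $a_i = \langle a_i, v_1 \rangle v_1 + \cdots + \langle a_i, v_r \rangle v_r$. In matrix form:

$$\begin{bmatrix} a_1^T \\ a_2^T \\ \vdots \\ a_m^T \end{bmatrix} = \begin{bmatrix} \langle a_1, v_1 \rangle & \cdots & \langle a_1, v_r \rangle \\ \langle a_2, v_1 \rangle & \cdots & \langle a_2, v_r \rangle \\ \vdots & & \vdots \\ \langle a_m, v_1 \rangle & \cdots & \langle a_m, v_r \rangle \end{bmatrix} \begin{bmatrix} v_1^T \\ \vdots \\ v_r^T \end{bmatrix}$$

The $j$th column of the first matrix on the right-hand side is

$$\begin{bmatrix} \langle a_1, v_j \rangle \\ \langle a_2, v_j \rangle \\ \vdots \\ \langle a_m, v_j \rangle \end{bmatrix}$$

which is $A v_j$, which is $\sigma_j u_j$. The first matrix is therefore $\begin{bmatrix} \sigma_1 u_1 & \cdots & \sigma_r u_r \end{bmatrix} = U \Sigma$, so

$$A = U \Sigma V^T$$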

SLIDE 20 The Singular Value Decomposition

The (compact) SVD of a matrix $A$ is the factorization of $A$ as $A = U \Sigma V^T$ where $U$ and $V$ are column-orthogonal and $\Sigma$ is diagonal with positive diagonal elements. In general, $\Sigma$ is allowed to have zero diagonal elements.

Different flavors of SVD $A = U \Sigma V^T$ of an $m \times n$ matrix:

- traditional: $U$ is $m \times m$, $V^T$ is $n \times n$, and $\Sigma$ is $m \times n$
- reduced, or thin:
  - If $m \geq n$ then $U$ is $m \times n$, $V^T$ is $n \times n$.
  - If $m \leq n$ then $U$ is $m \times m$, $V^T$ is $m \times n$.
- compact: thin, but omit zero singular values.

We never use the traditional SVD; we mostly use compact SVD.
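For comparison, the three flavors can be produced with NumPy, whose `np.linalg.svd` takes a `full_matrices` flag. This is a sketch using NumPy rather than the course's `svd` module; the compact form is obtained by truncation, and the tolerance for "zero" is an assumption.

```python
import numpy as np

A = np.array([[1.0, 2.0],
              [2.0, 4.0],
              [3.0, 6.0]])             # m = 3, n = 2, rank 1

# Traditional (full) SVD: U is m x m, V^T is n x n.
U_full, s, Vt_full = np.linalg.svd(A, full_matrices=True)

# Reduced (thin) SVD: here m >= n, so U is m x n and V^T is n x n.
U_thin, s, Vt_thin = np.linalg.svd(A, full_matrices=False)

# Compact SVD: keep only the positive singular values.
r = int(np.sum(s > 1e-10 * s[0]))
U_c, s_c, Vt_c = U_thin[:, :r], s[:r], Vt_thin[:r]

print(U_full.shape, Vt_full.shape)
print(U_thin.shape, Vt_thin.shape)
print(U_c.shape, s_c.shape, Vt_c.shape)
```

Note that NumPy returns the singular values as a one-dimensional array rather than as a matrix $\Sigma$, and returns $V^T$ rather than $V$.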

SLIDE 21

Properties of the SVD

- Row space of $A$ = row space of $V^T$
- Col space of $A$ = col space of $U$

SLIDE 22

SVD of the transpose

We can go from the SVD of $A$ to the SVD of $A^T$:

$$A = U \Sigma V^T$$

Define $\bar{U} = V$ and $\bar{V} = U$. Then, since $\Sigma$ is diagonal and so equals its own transpose,

$$A^T = V \Sigma U^T = \bar{U} \Sigma \bar{V}^T$$
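A short numerical check of the swap, assuming NumPy's thin SVD (which returns $V^T$ rather than $V$) and an arbitrary random matrix:

```python
import numpy as np

rng = np.random.default_rng(4)
A = rng.normal(size=(4, 3))

U, s, Vt = np.linalg.svd(A, full_matrices=False)
Sigma = np.diag(s)

U_bar, V_bar = Vt.T, U                 # swap the roles of U and V
print(np.allclose(A.T, U_bar @ Sigma @ V_bar.T))
```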

SLIDE 23

Best rank-k approximation in terms of the singular value decomposition

Start by writing the SVD of $A$:

$$A = U \begin{bmatrix} \sigma_1 & & \\ & \ddots & \\ & & \sigma_r \end{bmatrix} V^T$$

Replace $\sigma_{k+1}, \ldots, \sigma_r$ with zeroes. We obtain

$$\tilde{A} = U \begin{bmatrix} \sigma_1 & & & & \\ & \ddots & & & \\ & & \sigma_k & & \\ & & & 0 & \\ & & & & \ddots \end{bmatrix} V^T$$

This gives the same approximation as before.
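Zeroing the trailing singular values can be sketched in a few lines. The function name `best_rank_k` is hypothetical and the SVD comes from NumPy; the check compares the squared Frobenius error with the sum of squares of the discarded singular values.

```python
import numpy as np

def best_rank_k(A, k):
    """Zero out all but the k largest singular values in the SVD of A.

    Returns the approximation together with the full list of singular values.
    """
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    s_truncated = s.copy()
    s_truncated[k:] = 0.0
    return U @ np.diag(s_truncated) @ Vt, s

rng = np.random.default_rng(5)
A = rng.normal(size=(6, 4))
A_tilde, s = best_rank_k(A, k=2)

print(np.linalg.matrix_rank(A_tilde))
print(np.sum((A - A_tilde)**2), np.sum(s[2:]**2))
```

For $k = 1$ this reduces to $\sigma_1 u_1 v_1^T$, the rank-one approximation.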

SLIDE 24

Computing SVD

- I derived the SVD assuming a procedure to solve this problem:

  $$\arg\max \{\|Av\| : \|v\| = 1,\ v \text{ is orthogonal to } v_1, v_2, \ldots, v_{i-1}\}$$

- Later we give a procedure to approximately solve this problem.
- The most efficient method for computing the SVD is beyond the scope of the course.
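One common way to approximate this arg max is power iteration on $A^T A$, projecting out the previously chosen vectors at every step. The slides do not say which procedure the course uses, so the sketch below is an assumption, and the function name, iteration count, and seed are all hypothetical.

```python
import numpy as np

def approx_right_singular_vector(A, previous=(), iterations=500, seed=0):
    """Approximate arg max ||Av|| over unit v orthogonal to the vectors in `previous`.

    Assumes Av is nonzero for some v orthogonal to `previous`;
    otherwise the normalization step divides by zero.
    """
    rng = np.random.default_rng(seed)
    v = rng.normal(size=A.shape[1])
    for _ in range(iterations):
        w = A.T @ (A @ v)              # one step of power iteration on A^T A
        for p in previous:             # remove components along earlier vectors
            w = w - (w @ p) * p
        v = w / np.linalg.norm(w)
    return v

A = np.array([[1.0, 4.0], [5.0, 2.0]])
v1 = approx_right_singular_vector(A)
v2 = approx_right_singular_vector(A, previous=(v1,))
print(np.linalg.norm(A @ v1), np.linalg.norm(A @ v2))
```

Convergence slows down when consecutive singular values are close together, which is one reason practical SVD routines use other methods.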

SLIDE 25

Example: Senators

First center the data. Then find the first two right singular vectors $v_1$ and $v_2$. Projecting onto these gives two coordinates.

To find the singular vectors,

- make a matrix $A$ whose rows are the centered versions of the vectors
- find the SVD of $A$ using the svd module:

>>> U, Sigma, V = svd.factor(A)

- the first two columns of V are the first two right singular vectors.
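The same steps can be sketched with NumPy in place of the course's `svd` module. The voting matrix below is made up for illustration (it is not the Senate data), and NumPy returns $V^T$, so the right singular vectors are the rows of `Vt` rather than the columns of `V`.

```python
import numpy as np

# Hypothetical voting records: one row per senator,
# entries +1 (yea), -1 (nay), 0 (abstain).
votes = np.array([[ 1,  1, -1, -1,  1],
                  [ 1,  1, -1,  0,  1],
                  [-1, -1,  1,  1, -1],
                  [-1,  1,  1,  1, -1]], dtype=float)

A = votes - votes.mean(axis=0)         # center the data

# NumPy returns V transposed, so the right singular vectors are the rows of Vt.
U, Sigma, Vt = np.linalg.svd(A, full_matrices=False)
v1, v2 = Vt[0], Vt[1]

coords = np.column_stack([A @ v1, A @ v2])   # two coordinates per senator
print(coords)
```

Each row of `coords` is a point in $\mathbb{R}^2$ that can be plotted directly.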

SLIDE 26

Example: Senators, two principal components
