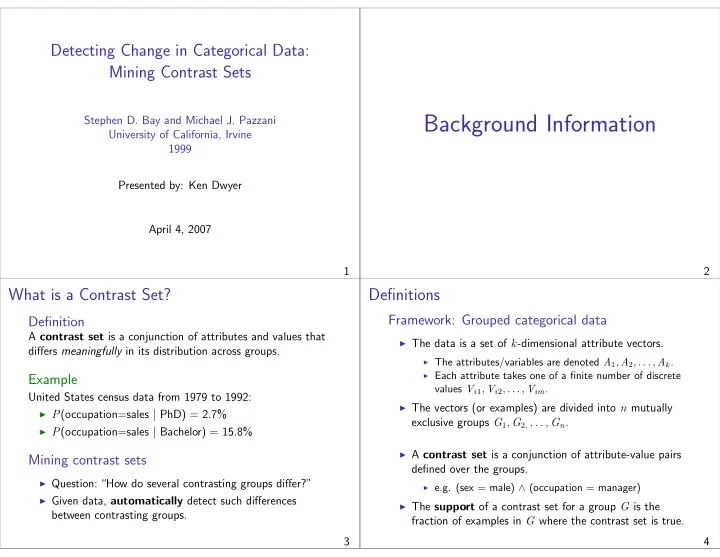

Detecting Change in Categorical Data: Mining Contrast Sets

Stephen D. Bay and Michael J. Pazzani, University of California, Irvine, 1999

Presented by: Ken Dwyer, April 4, 2007

Background Information


What is a Contrast Set?

Definition

A contrast set is a conjunction of attributes and values that differs meaningfully in its distribution across groups.

Example

United States census data from 1979 to 1992:

◮ P(occupation=sales | PhD) = 2.7%

◮ P(occupation=sales | Bachelor) = 15.8%

Mining contrast sets

◮ Question: “How do several contrasting groups differ?”

◮ Given data, automatically detect such differences between contrasting groups.

Definitions

Framework: Grouped categorical data

◮ The data is a set of k-dimensional attribute vectors.

◮ The attributes/variables are denoted A1, A2, ..., Ak.

◮ Each attribute Ai takes one of a finite number of discrete values Vi1, Vi2, ..., Vim.

◮ The vectors (or examples) are divided into n mutually exclusive groups G1, G2, ..., Gn.

◮ A contrast set is a conjunction of attribute-value pairs defined over the groups.

◮ e.g. (sex = male) ∧ (occupation = manager)

◮ The support of a contrast set for a group G is the fraction of examples in G where the contrast set is true.
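
The support definition above can be sketched in a few lines of Python. This is only an illustrative sketch, not code from the paper: the function name `support`, the representation of examples as `(group, attributes)` pairs, and the toy data are all assumptions made for the example. Returning 0.0 for an empty group is likewise just a convention chosen here.

```python
def support(examples, contrast_set, group):
    """Fraction of examples in `group` for which every
    attribute-value pair of `contrast_set` holds."""
    in_group = [attrs for g, attrs in examples if g == group]
    if not in_group:
        return 0.0  # assumed convention for an empty group
    matches = sum(
        all(attrs.get(a) == v for a, v in contrast_set.items())
        for attrs in in_group
    )
    return matches / len(in_group)


# Toy data invented for illustration (not the census data from the slides).
examples = [
    ("PhD", {"sex": "male", "occupation": "manager"}),
    ("PhD", {"sex": "female", "occupation": "sales"}),
    ("PhD", {"sex": "male", "occupation": "professor"}),
    ("PhD", {"sex": "male", "occupation": "manager"}),
    ("Bachelor", {"sex": "male", "occupation": "sales"}),
    ("Bachelor", {"sex": "male", "occupation": "manager"}),
    ("Bachelor", {"sex": "female", "occupation": "sales"}),
    ("Bachelor", {"sex": "female", "occupation": "manager"}),
]

# The contrast set (sex = male) ∧ (occupation = manager)
cset = {"sex": "male", "occupation": "manager"}

phd_support = support(examples, cset, "PhD")            # 2 of 4 examples
bachelor_support = support(examples, cset, "Bachelor")  # 1 of 4 examples
```

Comparing `phd_support` with `bachelor_support` is the kind of across-group comparison that contrast-set mining is meant to automate.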