SLIDE 1

HELSINGIN YLIOPISTO HELSINGFORS UNIVERSITET UNIVERSITY OF HELSINKI

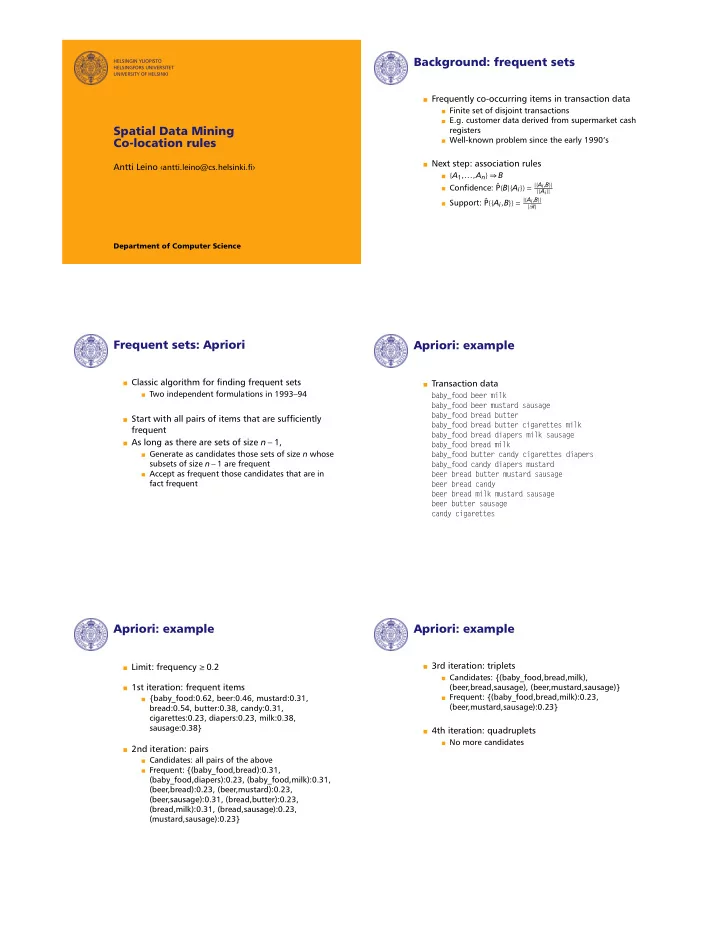

Spatial Data Mining: Co-location rules

Antti Leino antti.leino@cs.helsinki.

Department of Computer Science

Background: frequent sets

Frequently co-occurring items in transaction data

Finite set of disjoint transactions

E.g. customer data derived from supermarket cash registers

Well-known problem since the early 1990s
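To make the idea of frequently co-occurring items concrete, here is a minimal brute-force sketch. The toy transactions, the function name `frequent_sets`, and the parameters `min_count` and `max_size` are illustrative assumptions, not part of the slides; practical algorithms prune candidates instead of enumerating every subset.

```python
from collections import Counter
from itertools import combinations

# Hypothetical toy data: each transaction is one shopping basket.
transactions = [
    {"bread", "milk"},
    {"bread", "butter", "milk"},
    {"beer", "bread"},
    {"butter", "milk"},
]


def frequent_sets(transactions, min_count, max_size=2):
    """Return itemsets (up to max_size items) that occur in at least
    min_count transactions, mapped to their occurrence counts."""
    counts = Counter()
    for basket in transactions:
        for size in range(1, max_size + 1):
            # Every subset of the basket of the given size is one occurrence.
            for itemset in combinations(sorted(basket), size):
                counts[frozenset(itemset)] += 1
    return {s: c for s, c in counts.items() if c >= min_count}


result = frequent_sets(transactions, min_count=2)
for itemset, n in sorted(result.items(), key=lambda kv: (len(kv[0]), sorted(kv[0]))):
    print(sorted(itemset), n)
```

With this data, the pairs {bread, milk} and {butter, milk} each occur in two baskets and are kept, while anything involving beer is dropped.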

Next step: association rules

{A1,...,An} ⇒ B

Confidence: $\hat{P}(B \mid \{A_i\}) = \dfrac{|\{A_i, B\}|}{|\{A_i\}|}$

Support: $\hat{P}(\{A_i, B\}) = \dfrac{|\{A_i, B\}|}{|R|}$
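The two measures can be sketched in a few lines of Python. This is only an illustration: the toy data and the helper names `count`, `support` and `confidence` are assumptions, with |X| read as the number of transactions in R that contain every item of X.

```python
# Hypothetical toy relation R: each transaction is a set of items.
R = [
    {"bread", "milk"},
    {"bread", "butter", "milk"},
    {"beer", "bread"},
    {"butter", "milk"},
]


def count(transactions, items):
    """Number of transactions that contain every item in `items`."""
    return sum(1 for t in transactions if items <= t)


def support(transactions, antecedent, consequent):
    """Estimate P({A_i, B}): share of all transactions containing A_i and B."""
    return count(transactions, antecedent | {consequent}) / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Estimate P(B | {A_i}); returns None when no transaction contains A_i."""
    covered = count(transactions, antecedent)
    if covered == 0:
        return None  # the conditional estimate is undefined here
    return count(transactions, antecedent | {consequent}) / covered


# Rule {bread} => milk
print(support(R, {"bread"}, "milk"))     # 2 of 4 transactions
print(confidence(R, {"bread"}, "milk"))  # 2 of the 3 transactions with bread
```

In this toy data the rule {butter} ⇒ milk has the same support as {bread} ⇒ milk but a confidence of 1, which shows why the two measures are reported separately.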