1

1

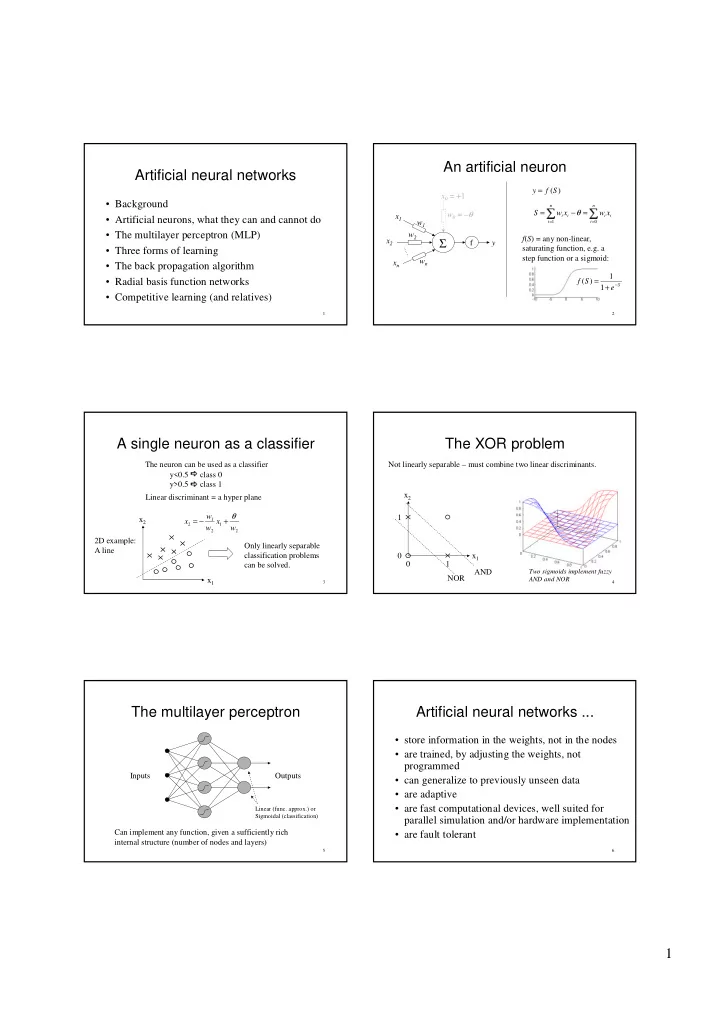

Artificialneuralnetworks

- Background

- Artificialneurons,whattheycanandcannotdo

- Themultilayerperceptron(MLP)

- Threeformsoflearning

- Thebackpropagationalgorithm

- Radialbasisfunctionnetworks

- Competitivelearning(andrelatives)

2

Anartificialneuron

) (S f y =

✁= =

= − =

n i n i i i i i

x w x w S

1

θ f(S)=any non-linear, saturatingfunction,e.g.a stepfunctionorasigmoid: x0 =+1

Σ

f x1 x2 xn w0 =–θ w1 w2 wn y

S

e S f

−

+ = 1 1 ) (

3

Asingleneuronasaclassifier

2 1 2 1 2

w x w w x θ + − = x1 x2 Theneuroncanbeusedasaclassifier y<0.5

✂class0 y>0.5

✂class1 Onlylinearlyseparable classificationproblems canbesolved. Lineardiscriminant=ahyper plane 2Dexample: Aline

4

TheXORproblem

Notlinearlyseparable– mustcombinetwolineardiscriminants. x1 x2 1 1 NOR AND

Twosigmoidsimplementfuzzy ANDandNOR

5

Themultilayerperceptron

Inputs Outputs Canimplementanyfunction,givenasufficientlyrich internalstructure(numberofnodesandlayers)

Linear(func.approx.)or Sigmoidal(classification)

6

Artificialneuralnetworks...

- storeinformationintheweights,notinthenodes

- aretrained,byadjustingtheweights,not

programmed

- cangeneralizetopreviouslyunseendata

- areadaptive

- arefastcomputationaldevices,wellsuitedfor

parallelsimulationand/orhardwareimplementation

- arefaulttolerant