10-12-2019

Dan Jensen IPH, KU

Monitoring and data filtering – DLM II

Outline

Summary of Monday’s lesson

Examples of when classical methods will suffice, and when they will not

- Break (10 minutes)

Defining variance components for the DLM

- Break

Exercises (incl. Mandatory Report 3)

Summary of Monday’s lesson

DLM: Dynamic Linear Model

- Two equations: System eq. & observation eq.

- Updated with each new observation using the Kalman filter

- 8 lines of math - Bayesian updating

- The adaptive coefficient (AC) determines how much the parameter vector should be adjusted

- The AC serves the same purpose in the DLM as lambda serves in the EWMA. However, the AC is affected by both the observational variance and the system variance
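The relation between the AC and the EWMA lambda can be sketched for the simplest possible DLM. This is a minimal sketch, assuming a univariate local-level model with known, constant observational variance `V` and system variance `W`; the function names and the numbers are illustrative assumptions, not taken from the lecture.

```python
def local_level_filter(y, V, W, m0, C0):
    """Kalman filter for a local-level DLM (assumed model: F = 1, G = 1)."""
    m, C = m0, C0
    means, acs = [], []
    for obs in y:
        R = C + W        # prior variance: system variance is added
        Q = R + V        # forecast variance: observational variance is added
        A = R / Q        # adaptive coefficient, always between 0 and 1
        e = obs - m      # forecast error (the forecast equals the prior mean)
        m = m + A * e    # filtered mean: same form as an EWMA step
        C = A * V        # filtered variance (equal to R - A**2 * Q)
        means.append(m)
        acs.append(A)
    return means, acs


def ewma(y, lam, z0):
    """Classical EWMA with a fixed smoothing constant lam."""
    z, out = z0, []
    for obs in y:
        z = z + lam * (obs - z)
        out.append(z)
    return out


# Illustrative values: the AC depends only on V, W and C0, not on the data.
_, acs = local_level_filter([10.0] * 60, V=4.0, W=1.0, m0=0.0, C0=10.0)
print(round(acs[0], 3), round(acs[-1], 3))
```

In this sketch the AC starts high when the initial variance `C0` is large and settles at a constant that is determined by the ratio `W / V`. Once it has settled, the filtered mean is updated exactly like an EWMA with lambda equal to that constant.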

The DLM can be used for e.g.:

- Alarm systems (remember the “normality hypothesis”)

- Decision support (look into the future with current knowledge)

- Effect estimation (strip away the noise: is there an effect of the change?)

The forecast errors can be standardized

- If the model is appropriate for the data, the standardized forecast errors should follow a standard normal distribution (mean = 0, SD = 1)
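The standardization can be sketched as dividing each forecast error by the square root of its forecast variance. The example below is a sketch under assumptions: a local-level DLM with known variances `V` and `W`, and data simulated from that same model, so the model is appropriate by construction. All names and values are illustrative.

```python
import numpy as np


def standardized_forecast_errors(y, V, W, m0, C0):
    """Return u_t = e_t / sqrt(Q_t) for a local-level DLM (assumed model)."""
    m, C = m0, C0
    u = []
    for obs in y:
        R = C + W                  # prior variance
        Q = R + V                  # forecast variance
        e = obs - m                # forecast error
        u.append(e / np.sqrt(Q))   # standardized forecast error
        A = R / Q                  # adaptive coefficient
        m = m + A * e              # filtered mean
        C = A * V                  # filtered variance
    return np.array(u)


# Simulate data from the same local-level model that the filter assumes.
rng = np.random.default_rng(1)
n, V, W = 5000, 4.0, 1.0
level = np.cumsum(rng.normal(0.0, np.sqrt(W), n))   # random-walk level
y = level + rng.normal(0.0, np.sqrt(V), n)          # noisy observations

u = standardized_forecast_errors(y, V, W, m0=0.0, C0=1.0)
print(u.mean(), u.std())
```

With correctly specified variances the mean and SD of `u` should be close to 0 and 1. Running the same filter with a too-small `V` would be expected to give an SD clearly above 1, which is one way a misspecified model shows up.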

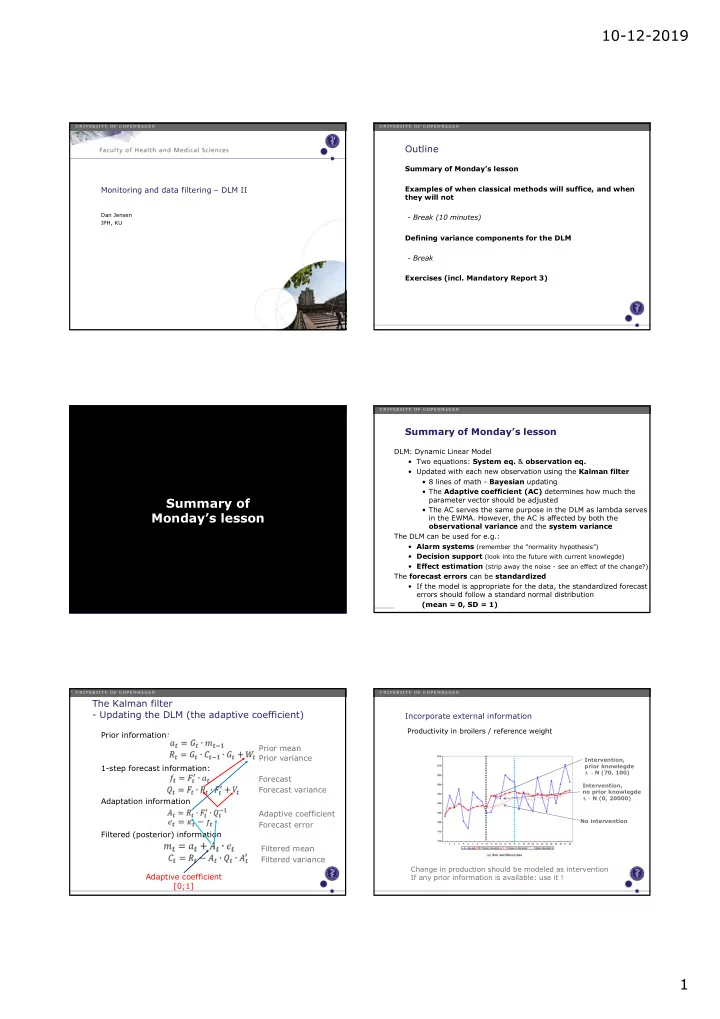

The Kalman filter

- Updating the DLM (the adaptive coefficient)
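For reference, the update can be written out as eight equations. The notation below follows the common West and Harrison convention and is an assumption here, since the slide's own symbols are not reproduced in this text. It assumes the observation equation $Y_t = F_t^{\top}\theta_t + v_t$ with $v_t \sim N(0, V_t)$ and the system equation $\theta_t = G_t\theta_{t-1} + w_t$ with $w_t \sim N(0, W_t)$.

$$
\begin{aligned}
a_t &= G_t m_{t-1} && \text{(prior mean)}\\
R_t &= G_t C_{t-1} G_t^{\top} + W_t && \text{(prior variance)}\\
f_t &= F_t^{\top} a_t && \text{(forecast)}\\
Q_t &= F_t^{\top} R_t F_t + V_t && \text{(forecast variance)}\\
A_t &= R_t F_t Q_t^{-1} && \text{(adaptive coefficient)}\\
e_t &= Y_t - f_t && \text{(forecast error)}\\
m_t &= a_t + A_t e_t && \text{(filtered mean)}\\
C_t &= R_t - A_t Q_t A_t^{\top} && \text{(filtered variance)}
\end{aligned}
$$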

- Prior information: prior mean, prior variance

- 1-step forecast information: forecast, forecast variance

- Adaptation information: adaptive coefficient, forecast error

- Filtered (posterior) information: filtered mean, filtered variance

- Adaptive coefficient: [0;1]

Incorporate external information

- Productivity in broilers / reference weight

- Change in production should be modeled as an intervention

- If any prior information is available: use it!

- Intervention, prior knowledge: Δ ~ N(70, 100)

- Intervention, no prior knowledge: Δ ~ N(0, 20000)

- No intervention
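The three cases can be compared in a small sketch. It assumes that an intervention is modeled by adding the mean of Δ to the prior mean and the variance of Δ to the prior variance at the time of the change, and that the second parameter of N(·, ·) is a variance. The local-level model, the noise-free data, and the values of `V`, `W`, `m0` and `C0` are illustrative assumptions, not the broiler data from the lecture.

```python
def filter_with_intervention(y, V, W, m0, C0, interventions=None):
    """Local-level Kalman filter with optional interventions.

    interventions maps a time index to (delta_mean, delta_var), which are
    added to the prior mean and prior variance at that time step.
    """
    interventions = interventions or {}
    m, C = m0, C0
    filtered = []
    for t, obs in enumerate(y):
        a, R = m, C + W                 # prior mean and prior variance
        if t in interventions:
            d_mean, d_var = interventions[t]
            a += d_mean                 # expected size of the change
            R += d_var                  # uncertainty about the change
        Q = R + V                       # forecast variance
        A = R / Q                       # adaptive coefficient
        m = a + A * (obs - a)           # filtered mean
        C = A * V                       # filtered variance
        filtered.append(m)
    return filtered


# Hypothetical series: level 1000, then a jump of 70 at time index 10.
y = [1000.0] * 10 + [1070.0] * 10
kwargs = dict(V=100.0, W=1.0, m0=1000.0, C0=100.0)

with_prior = filter_with_intervention(y, interventions={10: (70.0, 100.0)}, **kwargs)
no_prior = filter_with_intervention(y, interventions={10: (0.0, 20000.0)}, **kwargs)
none = filter_with_intervention(y, **kwargs)

print(with_prior[10], no_prior[10], none[10])
```

In this sketch the filter with prior knowledge is on the new level immediately, the filter with a vague intervention gets there after one observation because the large added variance pushes the AC towards 1, and the filter without intervention adapts only slowly.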