
Lecture 1: Introduction

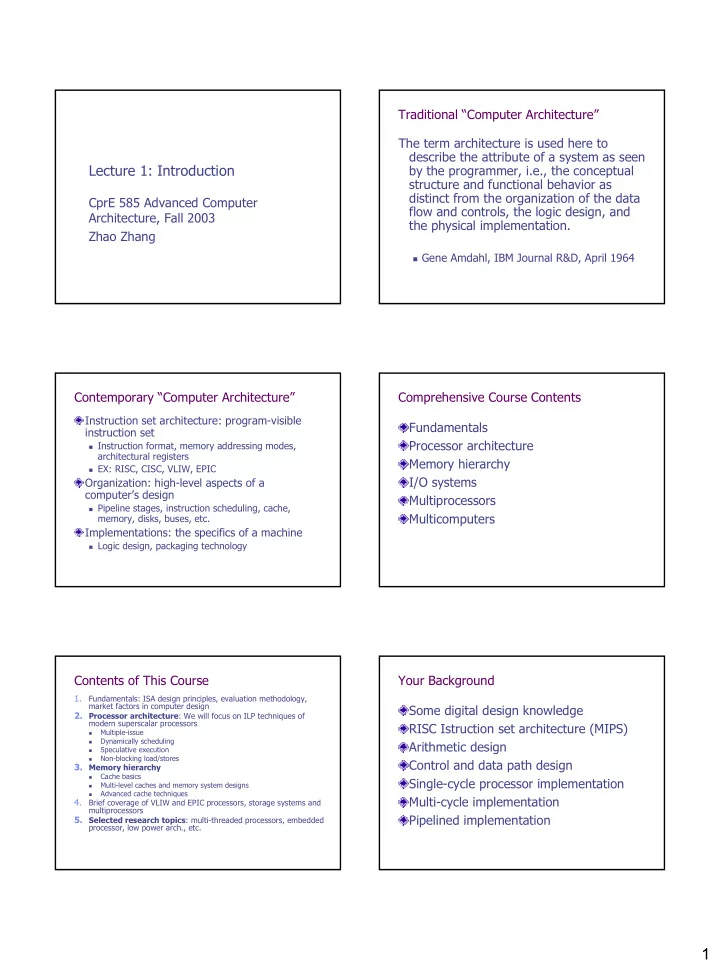

CprE 585 Advanced Computer Architecture, Fall 2003

Zhao Zhang

Traditional “Computer Architecture”

The term architecture is used here to describe the attributes of a system as seen by the programmer, i.e., the conceptual structure and functional behavior, as distinct from the organization of the data flow and controls, the logic design, and the physical implementation.

Gene Amdahl, IBM Journal R&D, April 1964

Contemporary “Computer Architecture”

Instruction set architecture: the program-visible instruction set

- Instruction format, memory addressing modes, architectural registers

- Examples: RISC, CISC, VLIW, EPIC

Organization: high-level aspects of a computer’s design

- Pipeline stages, instruction scheduling, cache, memory, disks, buses, etc.

Implementations: the specifics of a machine

- Logic design, packaging technology

Comprehensive Course Contents

- Fundamentals

- Processor architecture

- Memory hierarchy

- I/O systems

- Multiprocessors

- Multicomputers

Contents of This Course

1. Fundamentals: ISA design principles, evaluation methodology, market factors in computer design

2. Processor architecture: we will focus on the ILP (instruction-level parallelism) techniques of modern superscalar processors

- Multiple-issue

- Dynamic scheduling

- Speculative execution

- Non-blocking loads and stores

3. Memory hierarchy

- Cache basics

- Multi-level caches and memory system designs

- Advanced cache techniques

4. Brief coverage of VLIW and EPIC processors, storage systems, and multiprocessors

5. Selected research topics: multi-threaded processors, embedded