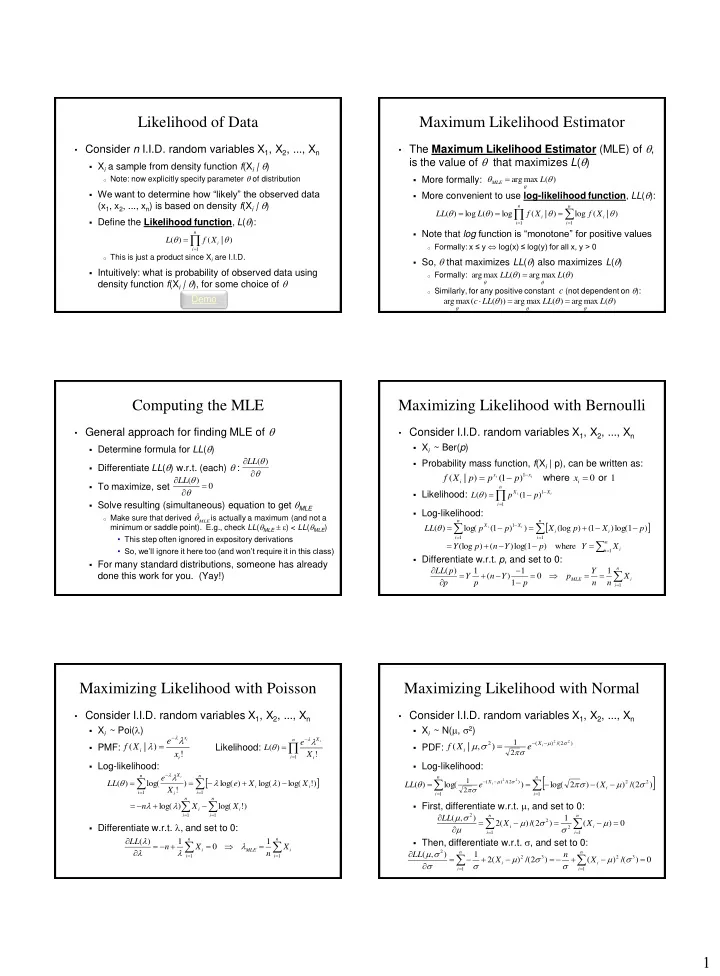

1 Likelihood of Data

- Consider n I.I.D. random variables X1, X2, ..., Xn

- Xi a sample from density function f(Xi | )

- Note: now explicitly specify parameter of distribution

- We want to determine how “likely” the observed data

(x1, x2, ..., xn) is based on density f(Xi | )

- Define the Likelihood function, L():

- This is just a product since Xi are I.I.D.

- Intuitively: what is probability of observed data using

density function f(Xi | ), for some choice of

n i i

X f L

1

) | ( ) (

Demo

Maximum Likelihood Estimator

- The Maximum Likelihood Estimator (MLE) of ,

is the value of that maximizes L()

- More formally:

- More convenient to use log-likelihood function, LL():

- Note that log function is “monotone” for positive values

- Formally: x ≤ y log(x) ≤ log(y) for all x, y > 0

- So, that maximizes LL() also maximizes L()

- Formally:

- Similarly, for any positive constant c (not dependent on ):

) ( max arg

L

MLE

n i i n i i

X f X f L LL

1 1

) | ( log ) | ( log ) ( log ) ( ) ( max arg ) ( max arg

L LL ) ( max arg ) ( max arg )) ( ( max arg

L LL LL c

Computing the MLE

- General approach for finding MLE of

- Determine formula for LL()

- Differentiate LL() w.r.t. (each) :

- To maximize, set

- Solve resulting (simultaneous) equation to get MLE

- Make sure that derived is actually a maximum (and not a

minimum or saddle point). E.g., check LL(MLE ) < LL(MLE)

- This step often ignored in expository derivations

- So, we’ll ignore it here too (and won’t require it in this class)

- For many standard distributions, someone has already

done this work for you. (Yay!)

) ( LL ) ( LL

MLE

ˆ

Maximizing Likelihood with Bernoulli

- Consider I.I.D. random variables X1, X2, ..., Xn

- Xi ~ Ber(p)

- Probability mass function, f(Xi | p), can be written as:

- Likelihood:

- Log-likelihood:

- Differentiate w.r.t. p, and set to 0:

1 ) 1 ( ) | (

1

- r

where

i x x i

x p p p X f

i i

n i X X

i i

p p L

1 1

) 1 ( ) (

n i i i n i X X

p X p X p p LL

i i

1 1 1

) 1 log( ) 1 ( ) (log ) ) 1 ( log( ) (

n i i

X Y p Y n p Y

1

where ) 1 log( ) ( ) (log

n i i MLE

X n n Y p p Y n p Y p p LL

1

1 1 1 ) ( 1 ) (

Maximizing Likelihood with Poisson

- Consider I.I.D. random variables X1, X2, ..., Xn

- Xi ~ Poi(l)

- PMF:

Likelihood:

- Log-likelihood:

- Differentiate w.r.t. l, and set to 0:

! ) | (

i x i

x e X f

i

l l

l

n i i X

X e L

i

1

! ) ( l

l

n i i i n i i X

X X e X e LL

i

1 1

) ! log( ) log( ) log( ) ! log( ) ( l l l

l

n i i MLE n i i

X n X n LL

1 1

1 1 ) ( l l l l

n i i n i i

X X n

1 1

) ! log( ) log(l l

Maximizing Likelihood with Normal

- Consider I.I.D. random variables X1, X2, ..., Xn

- Xi ~ N(m, 2)

- PDF:

- Log-likelihood:

- First, differentiate w.r.t. m, and set to 0:

- Then, differentiate w.r.t. , and set to 0:

) 2 /( ) ( 2

2 2

2 1

) , | (

m

m

i

X i

e X f

n i i n i X

X e LL

i

1 2 2 1 ) 2 /( ) (

) 2 /( ) ( ) 2 log( ) log( ) (

2 2

2 1

m

m

) ( 1 ) 2 /( ) ( 2 ) , (

1 2 1 2 2

n i i n i i

X X LL m m m m ) /( ) ( ) 2 /( ) ( 2 1 ) , (

1 3 2 1 3 2 2

n i i n i i