SLIDE 1

1

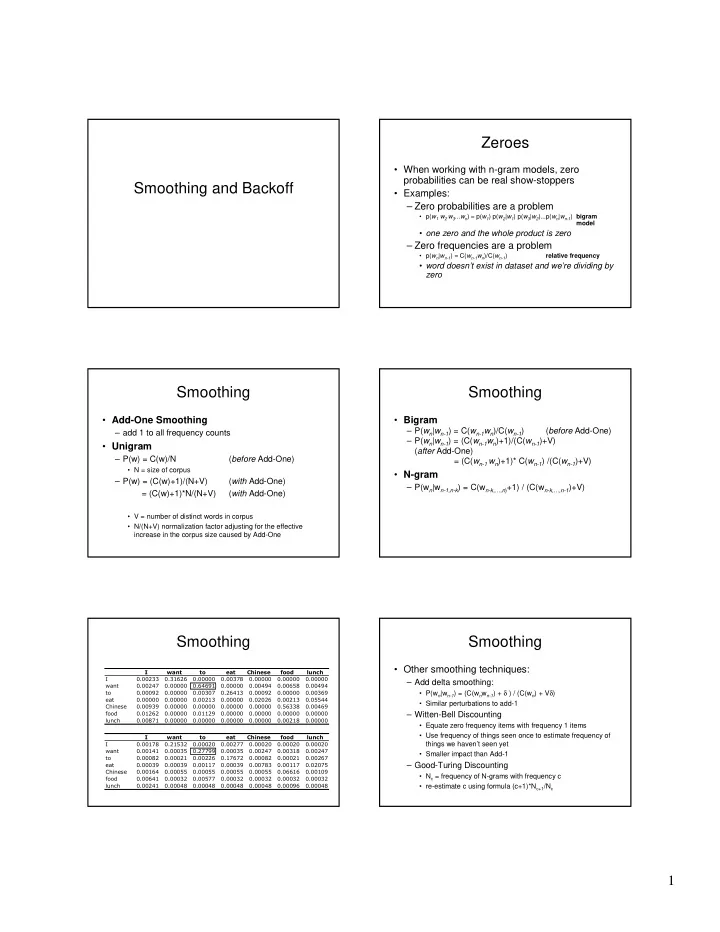

Smoothing and Backoff Zeroes

- When working with n-gram models, zero

probabilities can be real show-stoppers

- Examples:

– Zero probabilities are a problem

- p(w1 w2 w3...wn) ≈ p(w1) p(w2|w1) p(w3|w2)...p(wn|wn-1) bigram

model

- one zero and the whole product is zero

– Zero frequencies are a problem

- p(wn|wn-1) = C(wn-1wn)/C(wn-1)

relative frequency

- word doesn’t exist in dataset and we’re dividing by

zero

Smoothing

- Add-One Smoothing

– add 1 to all frequency counts

- Unigram

– P(w) = C(w)/N (before Add-One)

- N = size of corpus

– P(w) = (C(w)+1)/(N+V) (with Add-One) = (C(w)+1)*N/(N+V) (with Add-One)

- V = number of distinct words in corpus

- N/(N+V) normalization factor adjusting for the effective

increase in the corpus size caused by Add-One

Smoothing

- Bigram

– P(wn|wn-1) = C(wn-1wn)/C(wn-1) (before Add-One) – P(wn|wn-1) = (C(wn-1wn)+1)/(C(wn-1)+V) (after Add-One) = (C(wn-1 wn)+1)* C(wn-1) /(C(wn-1)+V)

- N-gram

– P(wn|wn-1,n-k) = C(wn-k,…,n)+1) / (C(wn-k,…,n-1)+V)

Smoothing

- Smoothing

- Other smoothing techniques:

– Add delta smoothing:

- P(wn|wn-1) = (C(wnwn-1) + δ ) / (C(wn) + Vδ)

- Similar perturbations to add-1

– Witten-Bell Discounting

- Equate zero frequency items with frequency 1 items

- Use frequency of things seen once to estimate frequency of

things we haven’t seen yet

- Smaller impact than Add-1

– Good-Turing Discounting

- Nc = frequency of N-grams with frequency c

- re-estimate c using formula (c+1)*Nc+1/Nc