Memory Access Scheduler

Matthew Cohen, Alvin Lin 6.884 – Complex Digital Systems May 6th, 2005

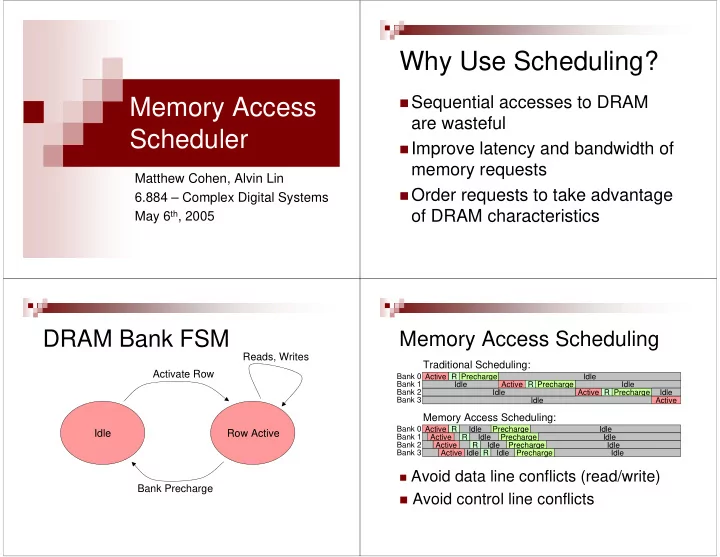

Why Use Scheduling?

Sequential accesses to DRAM

are wasteful

Improve latency and bandwidth of

memory requests

Order requests to take advantage

- f DRAM characteristics

DRAM Bank FSM

Idle Row Active Activate Row Bank Precharge Reads, Writes

Memory Access Scheduling

Active

Traditional Scheduling:

Bank 0 R Precharge Idle Active R Precharge Active R Precharge Active Idle Idle Bank 1 Idle Idle Idle Bank 2 Bank 3

Memory Access Scheduling:

Bank 0 Bank 1 Bank 2 Bank 3 Active R Precharge Active Active Active R R R Precharge Precharge Precharge Idle Idle Idle Idle Idle Idle Idle Idle Idle

Avoid data line conflicts (read/write)

Avoid control line conflicts