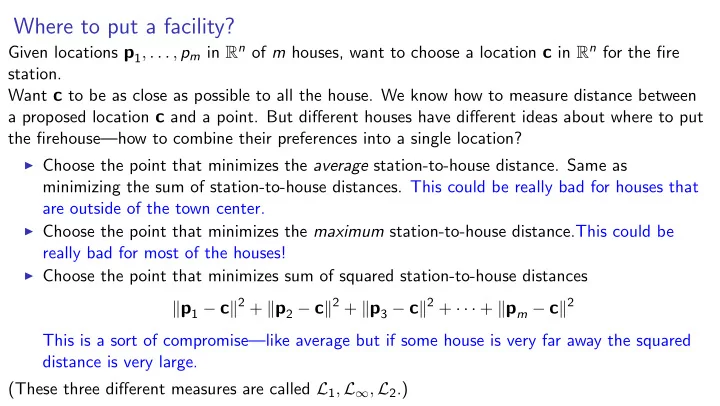

SLIDE 1 Where to put a facility?

Given locations $p_1, \ldots, p_m$ in $\mathbb{R}^n$ of $m$ houses, we want to choose a location $c$ in $\mathbb{R}^n$ for the fire station. We want $c$ to be as close as possible to all the houses. We know how to measure the distance between a proposed location $c$ and a single point. But different houses have different ideas about where to put the firehouse, so how do we combine their preferences into a single location?

◮ Choose the point that minimizes the average station-to-house distance. This is the same as minimizing the sum of station-to-house distances. This could be really bad for houses that are outside of the town center.

◮ Choose the point that minimizes the maximum station-to-house distance. This could be really bad for most of the houses!

◮ Choose the point that minimizes the sum of squared station-to-house distances

$$\|p_1 - c\|^2 + \|p_2 - c\|^2 + \|p_3 - c\|^2 + \cdots + \|p_m - c\|^2$$

This is a sort of compromise: it behaves like the average, but if some house is very far away its squared distance is very large. (These three different measures are called $L_1$, $L_\infty$, $L_2$.)
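A small sketch of how the three criteria can disagree. The house locations and the three candidate sites below are made-up illustrations, not data from the slides.

```python
from math import dist

# Hypothetical house locations in R^2: four houses in town, one far away.
houses = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (10.0, 10.0)]

def sum_of_distances(c):
    return sum(dist(p, c) for p in houses)

def max_distance(c):
    return max(dist(p, c) for p in houses)

def sum_of_squared_distances(c):
    return sum(dist(p, c) ** 2 for p in houses)

# Three hypothetical candidate sites for the fire station.
town_center = (0.5, 0.5)   # middle of the four town houses
centroid = (2.4, 2.4)      # entry-by-entry average of all five houses
midpoint = (5.0, 5.0)      # halfway between the town corner and the remote house

for name, c in [("town center", town_center),
                ("centroid", centroid),
                ("midpoint", midpoint)]:
    print(name,
          round(sum_of_distances(c), 2),
          round(max_distance(c), 2),
          round(sum_of_squared_distances(c), 2))
```

Among these three candidates, the town center has the smallest sum of distances, the midpoint has the smallest maximum distance, and the centroid has the smallest sum of squared distances.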

SLIDE 2 Putting a facility in the location that minimizes sum of squared distances

Given locations $p_1, \ldots, p_m$ in $\mathbb{R}^n$ of $m$ houses, we want to choose a location $c$ in $\mathbb{R}^n$ for the fire station so as to minimize the sum of squared distances

$$\|p_1 - c\|^2 + \|p_2 - c\|^2 + \cdots + \|p_m - c\|^2$$

◮ Question: How to find this location?

◮ Answer: $c = \frac{1}{m}(p_1 + p_2 + \cdots + p_m)$

This is called the centroid of $p_1, \ldots, p_m$. It is the average for vectors. In fact, for $i = 1, \ldots, n$, entry $i$ of the centroid is the average of entry $i$ of all the points.

The centroid $\bar{p}$ satisfies the equation $m\bar{p} = \sum_i p_i$. Therefore $\sum_i (p_i - \bar{p})$ equals the zero vector.
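A minimal sketch of the centroid as an entry-by-entry average, using plain Python lists as vectors. The helper name `centroid` and the sample points are illustrative assumptions.

```python
def centroid(points):
    """Entry-by-entry average of a nonempty list of equal-length vectors."""
    m = len(points)
    n = len(points[0])
    return [sum(p[i] for p in points) / m for i in range(n)]

# Hypothetical data points in R^2.
points = [[1.0, 2.0], [3.0, 4.0], [5.0, 9.0]]
p_bar = centroid(points)

# Sum of (p_i - p_bar), entry by entry; this should be the zero vector.
residual = [sum(p[i] - p_bar[i] for p in points) for i in range(len(p_bar))]

print(p_bar, residual)
```

For these points the centroid is [3.0, 5.0], and the differences from it sum to the zero vector.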

SLIDE 3 Proving that the centroid minimizes the sum of squared distances

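Let $q$ be any point. We show that the sum of squared $q$-to-datapoint distances is at least the sum of squared $\bar{p}$-to-datapoint distances.

For $i = 1, \ldots, m$,

$$\begin{aligned}
\|p_i - q\|^2 &= \|p_i - \bar{p} + \bar{p} - q\|^2 \\
&= \langle p_i - \bar{p} + \bar{p} - q,\; p_i - \bar{p} + \bar{p} - q \rangle \\
&= \langle p_i - \bar{p},\, p_i - \bar{p} \rangle + \langle p_i - \bar{p},\, \bar{p} - q \rangle + \langle \bar{p} - q,\, p_i - \bar{p} \rangle + \langle \bar{p} - q,\, \bar{p} - q \rangle \\
&= \|p_i - \bar{p}\|^2 + \langle p_i - \bar{p},\, \bar{p} - q \rangle + \langle \bar{p} - q,\, p_i - \bar{p} \rangle + \|\bar{p} - q\|^2
\end{aligned}$$

Summing over $i = 1, \ldots, m$,

$$\begin{aligned}
\sum_i \|p_i - q\|^2 &= \sum_i \|p_i - \bar{p}\|^2 + \sum_i \langle p_i - \bar{p},\, \bar{p} - q \rangle + \sum_i \langle \bar{p} - q,\, p_i - \bar{p} \rangle + \sum_i \|\bar{p} - q\|^2 \\
&= \sum_i \|p_i - \bar{p}\|^2 + \Big\langle \sum_i (p_i - \bar{p}),\, \bar{p} - q \Big\rangle + \Big\langle \bar{p} - q,\, \sum_i (p_i - \bar{p}) \Big\rangle + \sum_i \|\bar{p} - q\|^2
\end{aligned}$$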

SLIDE 4 Proving that the centroid minimizes the sum of squared distances

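Let $q$ be any point. We show that the sum of squared $q$-to-datapoint distances is at least the sum of squared $\bar{p}$-to-datapoint distances.

Summing over $i = 1, \ldots, m$,

$$\begin{aligned}
\sum_i \|p_i - q\|^2 &= \sum_i \|p_i - \bar{p}\|^2 + \sum_i \langle p_i - \bar{p},\, \bar{p} - q \rangle + \sum_i \langle \bar{p} - q,\, p_i - \bar{p} \rangle + \sum_i \|\bar{p} - q\|^2 \\
&= \sum_i \|p_i - \bar{p}\|^2 + \Big\langle \sum_i (p_i - \bar{p}),\, \bar{p} - q \Big\rangle + \Big\langle \bar{p} - q,\, \sum_i (p_i - \bar{p}) \Big\rangle + \sum_i \|\bar{p} - q\|^2 \\
&= \sum_i \|p_i - \bar{p}\|^2 + \langle 0,\, \bar{p} - q \rangle + \langle \bar{p} - q,\, 0 \rangle + \sum_i \|\bar{p} - q\|^2 \\
&= \sum_i \|p_i - \bar{p}\|^2 + 0 + 0 + \sum_i \|\bar{p} - q\|^2
\end{aligned}$$

= sum of squared $\bar{p}$-to-datapoint distances + $m$ times the squared $\bar{p}$-to-$q$ distance

The last term is nonnegative, so the sum of squared distances to $q$ is at least the sum of squared distances to $\bar{p}$.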
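A quick numerical check of this identity, as a sketch on made-up points. Because the final term is summed over all $m$ data points, it contributes $m$ times the squared $\bar{p}$-to-$q$ distance. The names `sq_dist`, `lhs`, and `rhs` are illustrative.

```python
def sq_dist(u, v):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))

# Hypothetical data points in R^2 and an arbitrary competing point q.
points = [[1.0, 2.0], [3.0, 4.0], [5.0, 9.0]]
m = len(points)
p_bar = [sum(p[i] for p in points) / m for i in range(2)]   # centroid
q = [0.0, 1.0]

# Left side: sum of squared q-to-datapoint distances.
lhs = sum(sq_dist(p, q) for p in points)
# Right side: sum of squared centroid-to-datapoint distances
# plus m times the squared centroid-to-q distance.
rhs = sum(sq_dist(p, p_bar) for p in points) + m * sq_dist(p_bar, q)

print(lhs, rhs)
```

Both sides come out to 109.0 for this data, and the right side makes it visible that any $q \neq \bar{p}$ pays a strictly positive penalty.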

SLIDE 5

k-means clustering using Lloyd’s Algorithm

k-means clustering: Given data points (vectors) $p_1, \ldots, p_m$ in $\mathbb{R}^n$, select $k$ centers $c_1, \ldots, c_k$ so as to minimize the sum of squared distances of data points to the nearest centers.

That is, define the function

$$f(x, [c_1, \ldots, c_k]) = \min\{\|x - c_i\|^2 : i \in \{1, \ldots, k\}\}$$

This function returns the squared distance from $x$ to whichever of $c_1, \ldots, c_k$ is nearest. The goal of k-means clustering is to select points $c_1, \ldots, c_k$ so as to minimize

$$f(p_1, [c_1, \ldots, c_k]) + f(p_2, [c_1, \ldots, c_k]) + \cdots + f(p_m, [c_1, \ldots, c_k])$$

The purpose is to partition the data points into $k$ groups (called clusters).
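The objective can be written down directly. This is a sketch with plain lists as vectors; the name `kmeans_cost` and the sample data are assumptions made for illustration.

```python
def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def f(x, centers):
    """Squared distance from x to whichever center is nearest."""
    return min(sq_dist(x, c) for c in centers)

def kmeans_cost(points, centers):
    """The k-means objective: sum of f over all data points."""
    return sum(f(p, centers) for p in points)

# Hypothetical data: two well-separated groups of two points each.
points = [[0.0, 0.0], [0.0, 2.0], [10.0, 0.0], [10.0, 2.0]]

cost_good = kmeans_cost(points, [[0.0, 1.0], [10.0, 1.0]])   # one center per group
cost_bad = kmeans_cost(points, [[5.0, 0.0], [5.0, 2.0]])     # centers between the groups

print(cost_good, cost_bad)
```

Placing one center in each group gives cost 4.0, while placing both centers between the groups gives cost 100.0.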

SLIDE 6 k-means clustering using Lloyd’s Algorithm

Select k centers to minimize the sum of squared distances of data points to nearest centers. This combines two ideas:

1. Assign each data point to the nearest center.

2. Choose the centers so as to be close to data points.

This suggests an algorithm. Start with k centers somewhere, perhaps randomly chosen. Then repeatedly perform the following steps:

1. Assign each data point to the nearest center.

2. Move each center to be as close as possible to the data points assigned to it. This means: let the new location of the center be the centroid of the assigned points.
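The two repeated steps can be sketched as follows. This is an illustration under assumptions, not the course's implementation: the function name `lloyd`, the fixed iteration count, passing the starting centers explicitly, and leaving an empty cluster's center where it is are all choices made for this example.

```python
def sq_dist(u, v):
    return sum((a - b) ** 2 for a, b in zip(u, v))

def centroid(points):
    m = len(points)
    return [sum(p[i] for p in points) / m for i in range(len(points[0]))]

def lloyd(points, centers, iterations=10):
    """Run a fixed number of assign/move rounds from the given starting centers."""
    centers = [list(c) for c in centers]
    k = len(centers)
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        # Step 1: assign each data point to its nearest center.
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda j: sq_dist(p, centers[j]))
            clusters[nearest].append(p)
        # Step 2: move each center to the centroid of its assigned points.
        # Assumption: a center with no assigned points stays where it is.
        centers = [centroid(c) if c else centers[j] for j, c in enumerate(clusters)]
    return centers, clusters

# Hypothetical data: two well-separated groups of two points each.
points = [[0.0, 0.0], [0.0, 2.0], [10.0, 0.0], [10.0, 2.0]]

centers, clusters = lloyd(points, [[0.0, 0.0], [10.0, 2.0]])
stuck_centers, _ = lloyd(points, [[0.0, 0.0], [0.0, 2.0]])

print(centers, stuck_centers)
```

Starting with one center in each group, the centers settle at [0.0, 1.0] and [10.0, 1.0]. Starting with both centers in the same group, the procedure settles at [5.0, 0.0] and [5.0, 2.0] and never leaves, so the result can depend on where the centers start.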

SLIDE 7

Orthogonalization

[9] Orthogonalization

SLIDE 8

Finding the closest point in a plane

Goal: Given a point b and a plane, find the point in the plane closest to b.

SLIDE 9

Finding the closest point in a plane

Goal: Given a point $b$ and a plane, find the point in the plane closest to $b$.

By translation, we can assume the plane includes the origin. The plane is a vector space $V$. Let $\{v_1, v_2\}$ be a basis for $V$.

Goal: Given a point $b$, find the point in $\text{Span}\,\{v_1, v_2\}$ closest to $b$.

Example:

$v_1 = [8, -2, 2]$ and $v_2 = [4, 2, 4]$, $b = [5, -5, 2]$

point in plane closest to $b$: $[6, -3, 0]$.
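The example states the answer without showing a check. One way to confirm it, as a sketch: verify that $[6, -3, 0]$ lies in the plane and that what is left of $b$ after subtracting it is orthogonal to both basis vectors (the projection idea developed in the following slides). The coefficients $1$ and $-\tfrac{1}{2}$ were worked out for this check and are not given in the slides.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

v1 = [8, -2, 2]
v2 = [4, 2, 4]
b = [5, -5, 2]
closest = [6, -3, 0]

# closest lies in the plane: it equals 1*v1 + (-1/2)*v2.
combo = [1 * x - 0.5 * y for x, y in zip(v1, v2)]

# The leftover b - closest should be orthogonal to both basis vectors.
leftover = [x - y for x, y in zip(b, closest)]

print(combo, leftover, dot(leftover, v1), dot(leftover, v2))
```

The leftover vector is [-1, -2, 2], and both of its dot products with the basis vectors are zero.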

SLIDE 10

Closest-point problem in higher dimensions

Goal: An algorithm that, given a vector $b$ and vectors $v_1, \ldots, v_n$, finds the vector in $\text{Span}\,\{v_1, \ldots, v_n\}$ that is closest to $b$.

Special case: We can use the algorithm to determine whether $b$ lies in $\text{Span}\,\{v_1, \ldots, v_n\}$: if the vector in $\text{Span}\,\{v_1, \ldots, v_n\}$ closest to $b$ is $b$ itself then clearly $b$ is in the span; if not, then $b$ is not in the span.

Let $A = \begin{bmatrix} v_1 & \cdots & v_n \end{bmatrix}$ be the matrix whose columns are $v_1, \ldots, v_n$. Using the linear-combinations interpretation of matrix-vector multiplication, a vector in $\text{Span}\,\{v_1, \ldots, v_n\}$ can be written $Ax$. Thus testing if $b$ is in $\text{Span}\,\{v_1, \ldots, v_n\}$ is equivalent to testing if the equation $Ax = b$ has a solution.

More generally: Even if $Ax = b$ has no solution, we can use the algorithm to find the point in $\{Ax : x \in \mathbb{R}^n\}$ closest to $b$.

Moreover: We hope to extend the algorithm to also find the best solution $x$.

SLIDE 11

High-dimensional projection onto/orthogonal to

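For any vector $b$ and any vector $a$, define vectors $b^{\parallel a}$ and $b^{\perp a}$ so that

$$b = b^{\parallel a} + b^{\perp a}$$

and there is a scalar $\sigma \in \mathbb{R}$ such that

$$b^{\parallel a} = \sigma a \quad \text{and} \quad b^{\perp a} \text{ is orthogonal to } a$$

Definition: For a vector $b$ and a vector space $V$, we define the projection of $b$ onto $V$ (written $b^{\parallel V}$) and the projection of $b$ orthogonal to $V$ (written $b^{\perp V}$) so that

$$b = b^{\parallel V} + b^{\perp V}$$

and $b^{\parallel V}$ is in $V$, and $b^{\perp V}$ is orthogonal to every vector in $V$.

[Figure: $b$ = $b^{\parallel V}$ (projection onto $V$) + $b^{\perp V}$ (projection orthogonal to $V$)]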

SLIDE 12

High-Dimensional Fire Engine Lemma

Definition: For a vector $b$ and a vector space $V$, we define the projection of $b$ onto $V$ (written $b^{\parallel V}$) and the projection of $b$ orthogonal to $V$ (written $b^{\perp V}$) so that

$$b = b^{\parallel V} + b^{\perp V}$$

and $b^{\parallel V}$ is in $V$, and $b^{\perp V}$ is orthogonal to every vector in $V$.

One-dimensional Fire Engine Lemma: The point in $\text{Span}\,\{a\}$ closest to $b$ is $b^{\parallel a}$ and the distance is $\|b^{\perp a}\|$.

High-Dimensional Fire Engine Lemma: The point in a vector space $V$ closest to $b$ is $b^{\parallel V}$ and the distance is $\|b^{\perp V}\|$.
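A sketch of the one-dimensional lemma on a concrete pair of vectors. It uses plain Python lists rather than the course's vector class, an exact zero test instead of `is_almost_zero()`, and made-up vectors `a` and `b`; all of these are assumptions for illustration.

```python
from math import sqrt

def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def project_along(b, a):
    """Projection of b along a: sigma*a with sigma = (b.a)/(a.a), or zero if a is zero."""
    aa = dot(a, a)
    sigma = dot(b, a) / aa if aa > 0 else 0.0
    return [sigma * x for x in a]

a = [3.0, 4.0]
b = [5.0, 0.0]

b_par = project_along(b, a)                    # sigma = 15/25 = 0.6
b_perp = [x - y for x, y in zip(b, b_par)]     # what is left of b
distance = sqrt(dot(b_perp, b_perp))           # length of b_perp

# Distances from b to a few other points t*a on the line Span {a}.
others = [sqrt(sum((x - t * y) ** 2 for x, y in zip(b, a)))
          for t in (0.0, 0.3, 0.9, 1.5)]

print(b_par, distance, others)
```

Here the projection is approximately [1.8, 2.4] and the distance is approximately 4.0. Each of the other sampled points on the line is farther from `b` than that.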

SLIDE 13 Finding the projection of b orthogonal to Span {a1, . . . , an}

High-Dimensional Fire Engine Lemma: Let $b$ be a vector and let $V$ be a vector space. The vector in $V$ closest to $b$ is $b^{\parallel V}$. The distance is $\|b^{\perp V}\|$.

Suppose $V$ is specified by generators $v_1, \ldots, v_n$.

Goal: An algorithm for computing $b^{\parallel V}$ in this case.

◮ input: vector $b$, vectors $v_1, \ldots, v_n$

◮ output: projection of $b$ onto $\text{Span}\,\{v_1, \ldots, v_n\}$

We already know how to solve this when $n = 1$:

    def project_along(b, v):
        return (0 if v.is_almost_zero() else (b*v)/(v*v))*v

Let's try to generalize....

SLIDE 14

project_onto(b, vlist)

    def project_along(b, v):
        # sigma = (b*v)/(v*v), or 0 when v is (nearly) the zero vector
        return (0 if v.is_almost_zero() else (b*v)/(v*v))*v

⇓

    def project_onto(b, vlist):
        # add up the projections of b along each vector in vlist
        return sum([project_along(b, v) for v in vlist])

Reviews are in....

“Short, elegant, .... and flawed”

“Beautiful, if only it worked!”

“A tragic failure.”

SLIDE 15

Failure of project_onto

[Figure: vectors $v_1$, $v_2$, and $b$ in the plane]

Try it out on vector $b$ and vlist = $[v_1, v_2]$ in $\mathbb{R}^2$, so $V = \text{Span}\,\{v_1, v_2\}$. In this case, $b$ is in $\text{Span}\,\{v_1, v_2\}$, so $b^{\parallel V} = b$. The algorithm tells us to find the projection of $b$ along $v_1$ and the projection of $b$ along $v_2$. The sum of these projections should be equal to $b^{\parallel V}$ ... but it's not.
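The failure is easy to reproduce. The sketch below re-implements both procedures on plain Python lists (an assumption; the slides use a vector class with `is_almost_zero()`), and the particular vectors are made up for illustration.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def project_along(b, v):
    vv = dot(v, v)
    sigma = dot(b, v) / vv if vv > 0 else 0.0
    return [sigma * x for x in v]

def project_onto(b, vlist):
    # Same idea as the one-liner: add up the projections along each v.
    result = [0.0] * len(b)
    for v in vlist:
        result = [r + p for r, p in zip(result, project_along(b, v))]
    return result

v1 = [1.0, 0.0]
v2 = [1.0, 1.0]     # not orthogonal to v1
b = [1.0, 1.0]      # b equals v2, so b is in Span {v1, v2}

b_hat = project_onto(b, [v1, v2])
print(b_hat)
```

The projection along `v1` is [1.0, 0.0] and the projection along `v2` is [1.0, 1.0], so the procedure returns [2.0, 1.0] instead of `b`.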

SLIDE 19 What went wrong with project_onto?

Suppose we run the algorithm on $b$ and vlist = $[v_1, \ldots, v_n]$. Let $V$ denote $\text{Span}\,\{v_1, \ldots, v_n\}$. For each vector $v_i$ in vlist, the vector returned by project_along(b, vi) is $\sigma_i v_i$ where $\sigma_i$ is $\frac{b \cdot v_i}{v_i \cdot v_i}$ (or 0, if $v_i = 0$).

The vector returned by project_onto(b, vlist) is the sum $\sigma_1 v_1 + \sigma_2 v_2 + \cdots + \sigma_n v_n$. Let $\hat{b}$ denote the returned vector. We want to check that $\hat{b}$ is $b^{\parallel V}$ ...

◮ Is $\hat{b}$ in $V$? It is a linear combination of $v_1, \ldots, v_n$, so YES.

◮ If $\hat{b}$ were $b^{\parallel V}$ then $b - \hat{b}$ would be $b^{\perp V}$, so $b - \hat{b}$ would be orthogonal to all vectors in $V$. In particular, it would be orthogonal to the generators $v_1, \ldots, v_n$. Is it? To check, calculate the inner product of $b - \hat{b}$ with each of $v_1, \ldots, v_n$.

Consider, for example, the generator $v_1$.

$$\begin{aligned}
\langle b - \hat{b},\, v_1 \rangle &= \langle b, v_1 \rangle - \langle \hat{b}, v_1 \rangle \\
&= \langle b, v_1 \rangle - \langle \sigma_1 v_1 + \sigma_2 v_2 + \cdots + \sigma_n v_n,\, v_1 \rangle
\end{aligned}$$

Is this zero? Expand the last inner product: we get $\langle \sigma_1 v_1, v_1 \rangle$ but also cross-terms like $\langle \sigma_2 v_2, v_1 \rangle$ and $\langle \sigma_n v_n, v_1 \rangle$. The cross-terms keep the algorithm from working correctly.
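Carrying the expansion one step further shows that the cross-terms are the only obstruction. By the definition of $\sigma_1$, the term $\sigma_1 \langle v_1, v_1 \rangle$ equals $\langle b, v_1 \rangle$ (both are zero when $v_1$ is the zero vector), so those two terms cancel:

```latex
\begin{aligned}
\langle b - \hat{b},\, v_1 \rangle
  &= \langle b, v_1 \rangle - \sigma_1 \langle v_1, v_1 \rangle
     - \sum_{j=2}^{n} \sigma_j \langle v_j, v_1 \rangle \\
  &= - \sum_{j=2}^{n} \sigma_j \langle v_j, v_1 \rangle
\end{aligned}
```

As an illustrative instance (the vectors are made up, not taken from the slides), take $v_1 = [1, 0]$, $v_2 = [1, 1]$, and $b = [1, 1]$. Then $\sigma_2 = 1$ and $\langle v_2, v_1 \rangle = 1$, so $\langle b - \hat{b}, v_1 \rangle = -1$, which is not zero.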

SLIDE 20

How to repair project_onto?

Don't change the procedure. Fix the spec. Require that vlist consists of mutually orthogonal vectors: the $i$th vector in the list is orthogonal to the $j$th vector in the list for every $i \neq j$.
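A sketch of the repaired spec in action, on plain Python lists. It reuses the earlier plane example, where $v_1 = [8, -2, 2]$ and $v_2 = [4, 2, 4]$ are not orthogonal. The replacement vector `w2` $= v_2 - \tfrac{1}{2} v_1 = [0, 3, 3]$ is an assumption introduced here: it was chosen by hand to be orthogonal to $v_1$ while spanning the same plane together with $v_1$.

```python
def dot(u, v):
    return sum(x * y for x, y in zip(u, v))

def project_along(b, v):
    vv = dot(v, v)
    sigma = dot(b, v) / vv if vv > 0 else 0.0
    return [sigma * x for x in v]

def project_onto(b, vlist):
    """Sum of projections; intended for a vlist of mutually orthogonal vectors."""
    result = [0.0] * len(b)
    for v in vlist:
        result = [r + p for r, p in zip(result, project_along(b, v))]
    return result

b = [5.0, -5.0, 2.0]
v1 = [8.0, -2.0, 2.0]
v2 = [4.0, 2.0, 4.0]     # dot(v1, v2) = 36, so [v1, v2] violates the spec
w2 = [0.0, 3.0, 3.0]     # hand-picked: v2 - (1/2)*v1, orthogonal to v1

ok = project_onto(b, [v1, w2])     # spec satisfied
bad = project_onto(b, [v1, v2])    # spec violated

print(ok, bad)
```

With the orthogonal list the result is [6.0, -3.0, 0.0], matching the closest point stated in the plane example. With the original, non-orthogonal list the same procedure returns [8.0, -0.5, 3.5].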