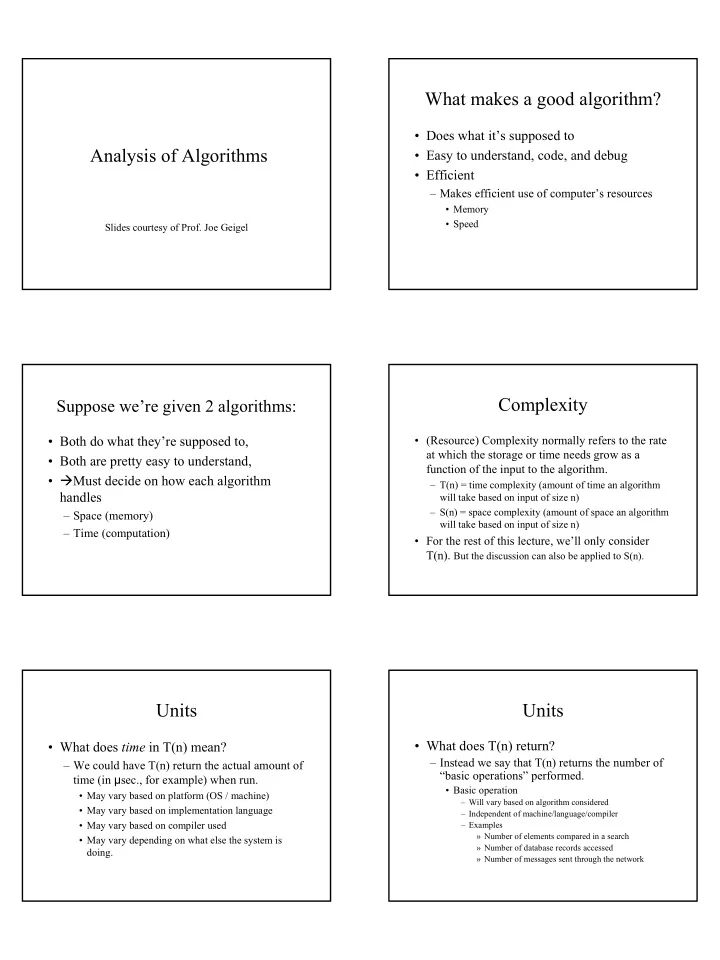

Analysis of Algorithms

Slides courtesy of Prof. Joe Geigel

What makes a good algorithm?

- Does what it’s supposed to

- Easy to understand, code, and debug

- Efficient

– Makes efficient use of computer’s resources

» Memory

» Speed

Suppose we’re given 2 algorithms:

- Both do what they’re supposed to,

- Both are pretty easy to understand,

- We must decide between them based on how each algorithm handles

– Space (memory)

– Time (computation)

Complexity

- (Resource) complexity normally refers to the rate at which storage or time needs grow as a function of the size of the input to the algorithm.

– T(n) = time complexity (amount of time an algorithm will take based on input of size n)

– S(n) = space complexity (amount of space an algorithm will take based on input of size n)

- For the rest of this lecture, we’ll only consider T(n), but the discussion can also be applied to S(n).

Units

- What does time in T(n) mean?

– We could have T(n) return the actual amount of time (in µsec., for example) when run.

» May vary based on platform (OS / machine)

» May vary based on implementation language

» May vary based on compiler used

» May vary depending on what else the system is doing
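A minimal sketch of why wall-clock time makes a poor unit. The helper name `time_linear_search` is hypothetical (it is not from the slides). It times the same worst-case search several times with Python's `time.perf_counter`. The measured values typically differ from run to run and from machine to machine, even though the algorithm and its input are unchanged.

```python
import time


def linear_search(items, target):
    """Return the index of target in items, or -1 if it is absent."""
    for i, item in enumerate(items):
        if item == target:
            return i
    return -1


def time_linear_search(n, repeats=5):
    """Time a worst-case search (target absent) on n items.

    Hypothetical helper for illustration only.
    Returns a list of elapsed times in seconds, one per run.
    """
    items = list(range(n))
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        linear_search(items, -1)  # -1 never occurs in range(n)
        samples.append(time.perf_counter() - start)
    return samples


if __name__ == "__main__":
    # Same algorithm, same input: the printed times will usually differ.
    for seconds in time_linear_search(100_000):
        print(f"{seconds * 1e6:.1f} µsec")
```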

Units

- What does T(n) return?

– Instead we say that T(n) returns the number of “basic operations” performed.

- Basic operation

– Will vary based on algorithm considered

– Independent of machine/language/compiler

– Examples

» Number of elements compared in a search

» Number of database records accessed

» Number of messages sent through the network
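As an illustration of the first example, here is a sketch that takes "one element comparison" as the basic operation of a linear search and counts how many are performed. The function name `count_comparisons` is an assumption made for this example. The count depends only on the input, not on the machine, language, or compiler.

```python
def count_comparisons(items, target):
    """Linear search that also counts element comparisons.

    Hypothetical helper for illustration only.
    Returns (index, comparisons); index is -1 if target is absent.
    """
    comparisons = 0
    for i, item in enumerate(items):
        comparisons += 1  # one basic operation: compare item with target
        if item == target:
            return i, comparisons
    return -1, comparisons


if __name__ == "__main__":
    # Worst case (target absent): the count equals the input size n.
    for n in (10, 100, 1000):
        _, ops = count_comparisons(list(range(n)), -1)
        print(f"n = {n}: {ops} comparisons")
```

Under this choice of basic operation, the worst case for linear search gives T(n) = n.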