
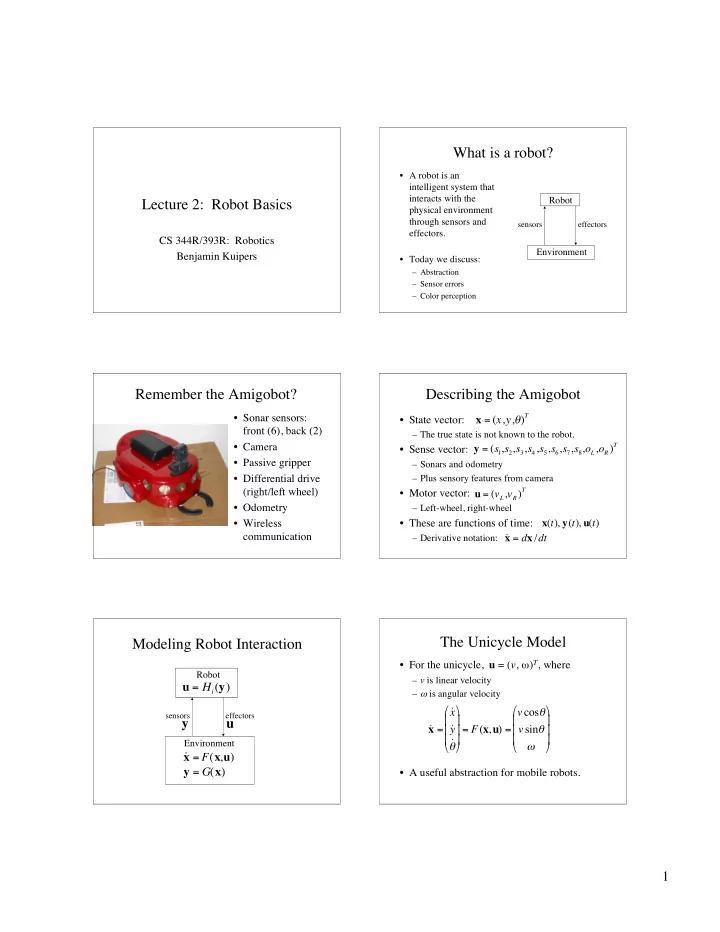

Lecture 2: Robot Basics

CS 344R/393R: Robotics

Benjamin Kuipers

What is a robot?

- A robot is an intelligent system that interacts with the physical environment through sensors and effectors.

- Today we discuss:

– Abstraction

– Sensor errors

– Color perception

[Figure: the robot and its environment, coupled through sensors and effectors]

Remember the Amigobot?

- Sonar sensors: front (6), back (2)

- Camera

- Passive gripper

- Differential drive (right/left wheel)

- Odometry

- Wireless communication
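The "Odometry" bullet refers to estimating how the robot has moved by measuring how far each wheel has turned. As an illustration only (this is a common textbook dead-reckoning update, not the Amigobot's own firmware), here is a minimal sketch for a differential-drive base. The function name `odometry_update` and the `wheel_base` value in the example are hypothetical.

```python
import math

def odometry_update(pose, d_left, d_right, wheel_base):
    """Dead-reckon a new pose from left/right wheel travel distances.

    pose: (x, y, theta), with theta in radians.
    d_left, d_right: distance each wheel rolled since the last update.
    wheel_base: distance between the two wheels (hypothetical parameter).
    """
    x, y, theta = pose
    d_center = (d_right + d_left) / 2.0          # distance travelled by the axle midpoint
    d_theta = (d_right - d_left) / wheel_base    # change in heading
    # Approximate the heading during the step by the average of old and new heading.
    mid_theta = theta + d_theta / 2.0
    x += d_center * math.cos(mid_theta)
    y += d_center * math.sin(mid_theta)
    # Wrap the heading into [-pi, pi).
    theta = (theta + d_theta + math.pi) % (2 * math.pi) - math.pi
    return (x, y, theta)

# Example with an arbitrary wheel base of 0.2 (not the Amigobot's real value).
print(odometry_update((0.0, 0.0, 0.0), 0.1, 0.1, 0.2))   # equal wheels: straight line
print(odometry_update((0.0, 0.0, 0.0), -0.1, 0.1, 0.2))  # opposite wheels: turn in place
```

Because every update adds a small error from wheel slip and encoder resolution, pose estimates from odometry drift over time. This is one reason the true state is not known to the robot.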

Describing the Amigobot

- State vector: $\mathbf{x} = (x, y, \theta)^T$

– The true state is not known to the robot.

- Sense vector: $\mathbf{y} = (s_1, s_2, s_3, s_4, s_5, s_6, s_7, s_8, o_L, o_R)^T$

– Sonars and odometry

– Plus sensory features from camera

- Motor vector: $\mathbf{u} = (v_L, v_R)^T$

– Left-wheel, right-wheel

- These are functions of time: $\mathbf{x}(t)$, $\mathbf{y}(t)$, $\mathbf{u}(t)$

– Derivative notation: $\dot{\mathbf{x}} = d\mathbf{x}/dt$
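The three vectors can be written down as plain data types. The sketch below is an assumption-laden illustration: the class names `State`, `Sense`, and `Motor` and the helper `finite_difference` are hypothetical, and the camera features in the sense vector are left out for simplicity. The finite difference shows how $\dot{\mathbf{x}} = d\mathbf{x}/dt$ can be approximated from two samples $\mathbf{x}(t)$ and $\mathbf{x}(t + \Delta t)$.

```python
from dataclasses import dataclass
from typing import List, Tuple

@dataclass
class State:
    """x = (x, y, theta)^T: pose in the plane. The robot never observes this directly."""
    x: float
    y: float
    theta: float

@dataclass
class Sense:
    """y = (s1..s8, oL, oR)^T: eight sonar ranges plus left/right odometry readings."""
    sonars: Tuple[float, ...]   # s1..s8 (6 front, 2 back)
    o_left: float
    o_right: float

    def __post_init__(self) -> None:
        if len(self.sonars) != 8:
            raise ValueError("expected 8 sonar readings")

    def as_vector(self) -> List[float]:
        """Flatten into the 10-element sense vector."""
        return list(self.sonars) + [self.o_left, self.o_right]

@dataclass
class Motor:
    """u = (vL, vR)^T: commanded left and right wheel velocities."""
    v_left: float
    v_right: float

def finite_difference(x_prev: State, x_next: State, dt: float) -> Tuple[float, float, float]:
    """Approximate dx/dt from samples x(t) and x(t + dt)."""
    return ((x_next.x - x_prev.x) / dt,
            (x_next.y - x_prev.y) / dt,
            (x_next.theta - x_prev.theta) / dt)

print(finite_difference(State(0.0, 0.0, 0.0), State(1.0, 2.0, 0.5), 0.5))
```

Keeping `State` separate from `Sense` mirrors the point on the slide: a controller written against `Sense` alone cannot accidentally read the true pose.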

Modeling Robot Interaction

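[Figure: robot and environment coupled through sensors ($\mathbf{y}$) and effectors ($\mathbf{u}$)]

$\dot{\mathbf{x}} = F(\mathbf{x}, \mathbf{u}) \qquad \mathbf{y} = G(\mathbf{x}) \qquad \mathbf{u} = H_i(\mathbf{y})$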
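The three equations form a closed loop. $F$ describes how the environment's state changes under the motor command. $G$ describes what the sensors report. $H_i$ is the control law that picks a motor command from the sensor readings. The subscript $i$ presumably indexes which of several control laws is active; here a single one is used. The sketch below is a minimal illustration that simulates the loop with forward-Euler integration. The function `simulate` and the 1-D toy model (perfect position sensor, proportional controller toward position 1.0) are hypothetical.

```python
from typing import Callable, List, Sequence

Vector = List[float]

def simulate(F: Callable[[Vector, Vector], Vector],
             G: Callable[[Vector], Vector],
             H: Callable[[Vector], Vector],
             x0: Sequence[float], dt: float, steps: int) -> List[Vector]:
    """Forward-Euler simulation of the loop  x' = F(x, u), y = G(x), u = H(y)."""
    x = list(x0)
    trajectory = [list(x)]
    for _ in range(steps):
        y = G(x)        # the robot only sees y, never x directly
        u = H(y)        # control law chooses a motor command from the senses
        dx = F(x, u)    # the environment determines how the state changes
        x = [xi + dt * dxi for xi, dxi in zip(x, dx)]
        trajectory.append(list(x))
    return trajectory

# Toy 1-D model (hypothetical): the robot should drive to position 1.0.
def toy_F(x: Vector, u: Vector) -> Vector:
    return [u[0]]                   # commanded velocity directly changes position

def toy_G(x: Vector) -> Vector:
    return [x[0]]                   # a perfect position sensor

def toy_H(y: Vector) -> Vector:
    return [2.0 * (1.0 - y[0])]     # proportional control toward the goal

traj = simulate(toy_F, toy_G, toy_H, [0.0], dt=0.1, steps=50)
print(round(traj[-1][0], 3))
```

The controller `toy_H` takes only `y`. Replacing `toy_G` with a noisy or biased sensor model changes what the controller sees without touching the true dynamics in `toy_F`.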

The Unicycle Model

- For the unicycle, $\mathbf{u} = (v, \omega)^T$, where

– $v$ is linear velocity

– $\omega$ is angular velocity

- A useful abstraction for mobile robots.
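For reference, the standard unicycle kinematics (stated from general knowledge, not reproduced from this slide) are $\dot{x} = v\cos\theta$, $\dot{y} = v\sin\theta$, $\dot{\theta} = \omega$. A differential-drive robot like the Amigobot maps onto this abstraction through $v = (v_R + v_L)/2$ and $\omega = (v_R - v_L)/b$, where $b$ is the distance between the wheels. The sketch below is a minimal illustration of both. The function names and the wheel-base values in the example are hypothetical.

```python
import math
from typing import Tuple

Pose = Tuple[float, float, float]   # (x, y, theta)

def unicycle_F(state: Pose, u: Tuple[float, float]) -> Pose:
    """Unicycle kinematics: return (x_dot, y_dot, theta_dot) for u = (v, omega)."""
    _, _, theta = state
    v, omega = u
    return (v * math.cos(theta), v * math.sin(theta), omega)

def diff_drive_to_unicycle(v_left: float, v_right: float,
                           wheel_base: float) -> Tuple[float, float]:
    """Map differential-drive wheel speeds (vL, vR) to unicycle inputs (v, omega)."""
    v = (v_right + v_left) / 2.0
    omega = (v_right - v_left) / wheel_base
    return (v, omega)

def euler_step(state: Pose, u: Tuple[float, float], dt: float) -> Pose:
    """One forward-Euler step of x' = F(x, u)."""
    dx = unicycle_F(state, u)
    return (state[0] + dt * dx[0], state[1] + dt * dx[1], state[2] + dt * dx[2])

# Turn in place: wheels at equal and opposite speeds (wheel base 0.2 is arbitrary).
u = diff_drive_to_unicycle(-0.1, 0.1, 0.2)
print(u)
print(euler_step((0.0, 0.0, 0.0), (1.0, 0.0), 0.1))
```

The abstraction is useful because a controller can reason in terms of $(v, \omega)$ and leave the conversion to wheel speeds to a small, robot-specific function.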

$\dot{\mathbf{x}} = \begin{pmatrix} \dot{x} \\ \dot{y} \\ \dot{\theta} \end{pmatrix} = F(\mathbf{x}, \mathbf{u}) =$