Lecture 2:

Performance Limitations & Performance Metrics

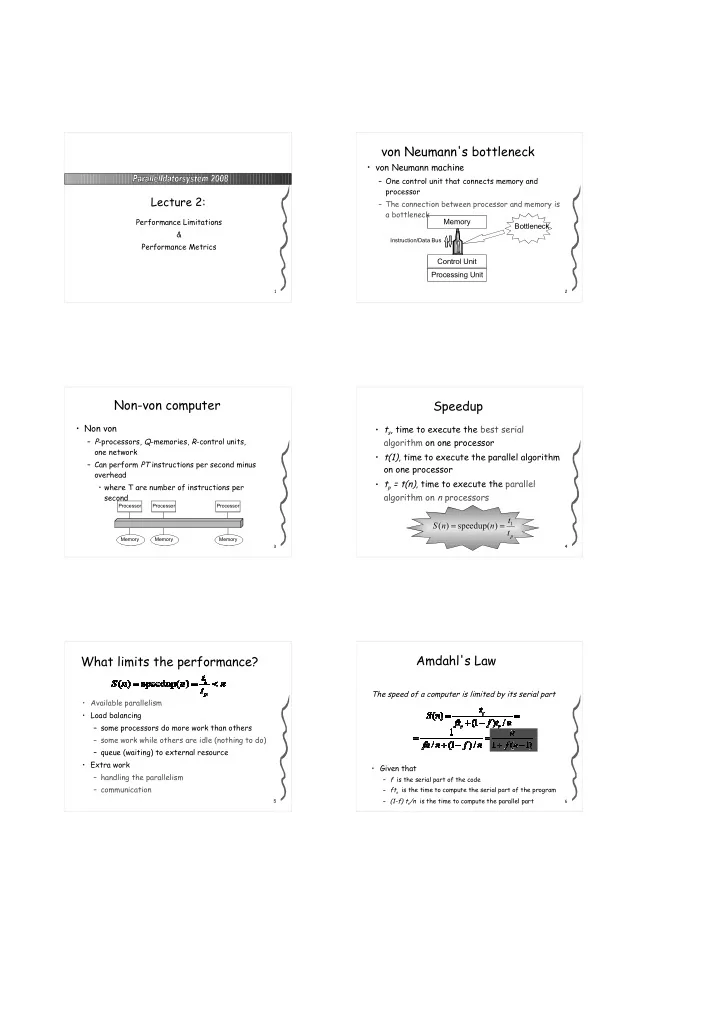

2von Neumann's bottleneck

- von Neumann machine

– One control unit that connects memory and processor – The connection between processor and memory is a bottleneck Memory Control Unit Processing Unit

Instruction/Data Bus

Bottleneck

3Non-von computer

- Non von

– P-processors, Q-memories, R-control units,

- ne network

– Can perform PT instructions per second minus

- verhead

- where T are number of instructions per

second

Processor Memory Processor Memory Processor Memory 4Speedup

- ts, time to execute the best serial

algorithm on one processor

- t(1), time to execute the parallel algorithm

- n one processor

- tp = t(n), time to execute the parallel

algorithm on n processors

pt t n n S

1) speedup( ) ( = =

5What limits the performance?

- Available parallelism

- Load balancing

– some processors do more work than others – some work while others are idle (nothing to do) – queue (waiting) to external resource

- Extra work

– handling the parallelism – communication

6Amdahl's Law

The speed of a computer is limited by its serial part

- Given that

– f is the serial part of the code – fts is the time to compute the serial part of the program – (1-f) ts/n is the time to compute the parallel part