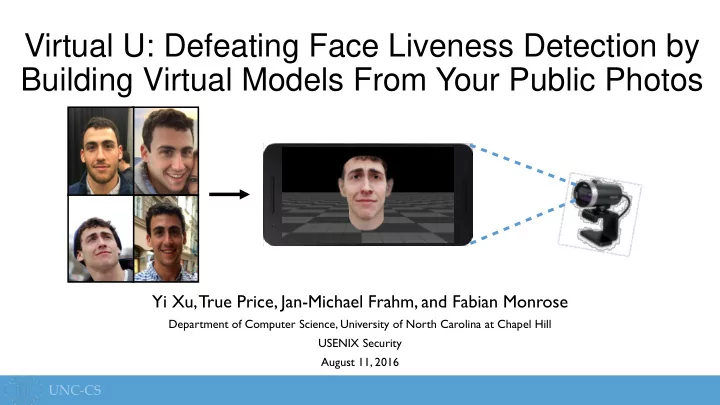

SLIDE 1 Virtual U: Defeating Face Liveness Detection by Building Virtual Models From Your Public Photos

Yi Xu, True Price, Jan-Michael Frahm, and Fabian Monrose

Department of Computer Science, University of North Carolina at Chapel Hill

USENIX Security, August 11, 2016

SLIDE 2 Face Authentication: Convenient Security


SLIDE 3 Evolution of Adversarial Models

- Attack: Still-image Spoofing

SLIDE 4 Evolution of Adversarial Models

- Attack: Still-image Spoofing

- Defense: Liveness Detection

SLIDE 5 Evolution of Adversarial Models

- Attack: Still-image Spoofing

- Defense: Liveness Detection

- Attack: Video Spoofing

SLIDE 6 Evolution of Adversarial Models

- Attack: Still-image Spoofing

- Defense: Liveness Detection

- Attack: Video Spoofing

- Defense: Motion Consistency

SLIDE 7 Evolution of Adversarial Models

- Attack: Still-image Spoofing

- Defense: Liveness Detection

- Attack: Video Spoofing

- Defense: Motion Consistency

- Attack: 3D-Printed Masks

SLIDE 8

Virtual U: A New Attack

We introduce a new VR-based attack on face authentication systems solely using publicly available photos of the victim

SLIDE 9

Virtual U: A New Attack

Input Web Photos

- ❶ Landmark Extraction

- ❷ 3D Model Reconstruction

- ❸ Image-based Texturing

- ❹ Gaze Correction

- ❺ Expression Animation

- ❻ Viewing with Virtual Reality System

SLIDE 10

Leveraging Social Media

SLIDE 11

Landmark Extraction

SLIDE 12 3D Face Model

- Expression Variation (e.g., frowning-to-smiling)

- Identity Variation (e.g., thin-to-heavyset)

SLIDE 13 3D Face Model

- Expression Variation (e.g., frowning-to-smiling)

- Identity Variation (e.g., thin-to-heavyset)

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Figure labels: $\bar{S}$, $A^{id}$, $A^{exp}$
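The linear face model on this slide can be illustrated with a minimal sketch. It reads the first term as a mean shape and the other two terms as identity and expression offsets, each a basis matrix times a coefficient vector. The function names, the flat-vector layout, and the toy two-vertex model are assumptions made for illustration and do not come from the talk.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # row-major: one row per output coordinate


def mat_vec(matrix: Matrix, vec: Vector) -> Vector:
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(a * x for a, x in zip(row, vec)) for row in matrix]


def face_shape(mean_shape: Vector, basis_id: Matrix, alpha_id: Vector,
               basis_exp: Matrix, alpha_exp: Vector) -> Vector:
    """Return mean + basis_id @ alpha_id + basis_exp @ alpha_exp as a flat vector."""
    id_offset = mat_vec(basis_id, alpha_id)
    exp_offset = mat_vec(basis_exp, alpha_exp)
    return [m + i + e for m, i, e in zip(mean_shape, id_offset, exp_offset)]


# Toy model (hypothetical): two 3D vertices stored as x1, y1, z1, x2, y2, z2,
# with one identity basis vector and one expression basis vector.
mean_shape = [0.0, 0.0, 0.0, 1.0, 0.0, 0.0]
basis_id = [[1.0], [0.0], [0.0], [1.0], [0.0], [0.0]]   # shifts both vertices along x
basis_exp = [[0.0], [0.0], [0.0], [0.0], [1.0], [0.0]]  # lifts the second vertex along y

neutral = face_shape(mean_shape, basis_id, [0.0], basis_exp, [0.0])
varied = face_shape(mean_shape, basis_id, [0.5], basis_exp, [2.0])
```

With all coefficients at zero the model returns the mean shape. Changing only the expression coefficients moves the face along the expression axis while the identity stays fixed.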

SLIDE 14

3D Face Model

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Reprojection

SLIDE 15

3D Face Model

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Pose, $\alpha^{id}$, $\alpha^{exp}$

SLIDE 16

3D Face Model

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Pose, $\alpha^{id}$, $\alpha^{exp}$

SLIDE 17

3D Face Model

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Pose, $\alpha^{id}$, $\alpha^{exp}$

SLIDE 18

3D Face Model

SLIDE 19

3D Face Model

SLIDE 20

3D Face Model

SLIDE 21

3D Face Model

Per image (four shown): Pose, $\alpha^{exp}$

Shared across images: $\alpha^{id}$

SLIDE 22

Multi-Image Modeling

Figure labels: Single image, Multiple images

SLIDE 23

Texturing

Figure labels: Direct Texturing, 2D Poisson Editing

SLIDE 24

Texturing

Figure labels: Direct Texturing, 2D Poisson Editing, 3D Poisson Editing

SLIDE 25 Gaze Correction


SLIDE 26

Gaze Correction

SLIDE 27

Virtual U: A New Attack

Input Web Photos

- ❶ Landmark Extraction

- ❷ 3D Model Reconstruction

- ❸ Image-based Texturing

- ❹ Gaze Correction

- ❺ Expression Animation

- ❻ Viewing with Virtual Reality System

SLIDE 28

Expression Animation

- Smiling

- Laughing

- Blinking

- Raising Eyebrows

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$
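One way to picture expression animation with this model is to hold the identity coefficients fixed and vary only the expression coefficients over time. The sketch below linearly interpolates between two expression coefficient vectors. The slide does not say how the coefficients are driven, so the interpolation scheme, the function name, and the example values are assumptions.

```python
from typing import List


def animate_expression(alpha_start: List[float], alpha_end: List[float],
                       num_frames: int) -> List[List[float]]:
    """Linearly interpolate expression coefficients over num_frames frames."""
    if num_frames < 2:
        raise ValueError("need at least two frames to animate")
    if len(alpha_start) != len(alpha_end):
        raise ValueError("coefficient vectors must have the same length")
    frames = []
    for k in range(num_frames):
        t = k / (num_frames - 1)  # 0.0 at the first frame, 1.0 at the last
        frames.append([(1 - t) * a + t * b for a, b in zip(alpha_start, alpha_end)])
    return frames


# Hypothetical coefficients: neutral face to a target expression in five frames.
frames = animate_expression([0.0, 0.0], [1.0, -2.0], 5)
```

Each frame's coefficient vector would then be plugged into the face model to produce the mesh rendered for that frame.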

SLIDE 29

VR Display

Figure labels: Authentication Device, Printed Marker, VR System

SLIDE 30

VR Display

SLIDE 31

Experiments

Systems tested: BioID, KeyLemon, Mobius, TrueKey, 1U

Liveness detection types: motion-based, interaction-based, texture-based

SLIDE 32 Experiments

- 20 participants

  - Aged 24 to 44

  - 14 males, 6 females

  - Various ethnicities

- Two tests

  - Indoor photo of the subject in the same environment as registration

  - Publicly accessible photos

    - Anywhere from 3 to 27 photos per person

    - Low-, medium-, and high-quality

    - Potentially strong changes in appearance over time

SLIDE 33 Experiments

| Test | BioID | KeyLemon | Mobius | TrueKey | 1U |
|---|---|---|---|---|---|
| Indoor image (single frontal image) | 100% | 100% | 100% | 100% | 100% |
| Online | 85% / 1.6 | 80% / 1.5 | 70% / 1.3 | 55% / 1.7 | 0% |

SLIDE 34 Observations

- Medium- and high-resolution photos work best

  - Photos from professional photographers (weddings, etc.)

- Only a small number of photos required

  - One or two forward-facing photos

  - One or two higher-resolution photos

- Group photos provide consistent frontal views

  - Often lower resolution

SLIDE 35

Experiments

How does resolution affect reconstruction quality?

SLIDE 36

Experiments

How does rotation affect reconstruction quality?

SLIDE 37

Experiments

Combining high-res rotation with low-res front-facing? +

SLIDE 38 Experiments

against liveness detection

SLIDE 39 Experiments

against liveness detection

motion consistency

SLIDE 40 Experiments

- “Seeing Your Face is Not Enough: An Inertial Sensor-Based Liveness Detection for Face Authentication” (Li et al., ACM CCS’15)

- Device motion measured by inertial sensor data

- Head pose estimated from input video

- Train a classifier to identify real data (correlated signals) versus spoofed video data
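The idea behind this defense can be sketched as a correlation check between two signals: the device rotation reported by the inertial sensor and the head pose estimated from the video. The slide describes a trained classifier. The version below swaps in a plain Pearson correlation with a fixed threshold as a stand-in, and the function names, threshold, and sample angles are all hypothetical.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation of two equal-length signals (0.0 if either is constant)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("signals must have the same length of at least 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def looks_live(device_angles: Sequence[float], head_angles: Sequence[float],
               threshold: float = 0.8) -> bool:
    """Accept when head pose in the video tracks the measured device motion."""
    return pearson(device_angles, head_angles) >= threshold


# Hypothetical yaw angles in degrees, sampled at the same instants.
device = [0.0, 2.0, 4.0, 6.0, 8.0]
live_head = [0.1, 2.1, 3.9, 6.2, 7.9]      # follows the device motion
replayed_head = [3.0, 1.0, 4.0, 1.0, 3.0]  # pre-recorded video, unrelated to the device
```

A replayed video fails this kind of check because its head motion was fixed at recording time. A rendered model whose viewpoint follows the device would produce correlated signals.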

SLIDE 41

Experiments

Test Result (Accept Rate)

| Training Data (Pos. Data vs. Neg. Data) | Real Face | Video Spoof | VR Spoof |
|---|---|---|---|
| Real vs. Video | 98.0% | 1.0% | 99.5% |

SLIDE 42

Experiments

Test Result (Accept Rate)

| Training Data (Pos. Data vs. Neg. Data) | Real Face | Video Spoof | VR Spoof |
|---|---|---|---|
| Real vs. Video | 98.0% | 1.0% | 99.5% |
| Real vs. Video + VR | 67.0% | 0.0% | 50.0% |

SLIDE 43 Experiments

Test Result (Accept Rate)

| Training Data (Pos. Data vs. Neg. Data) | Real Face | Video Spoof | VR Spoof |
|---|---|---|---|
| Real vs. Video | 98.0% | 1.0% | 99.5% |
| Real vs. Video + VR | 67.0% | 0.0% | 50.0% |

Real vs. VR: 67.0%

SLIDE 44 Mitigations

- Alternative/additional hardware

  - Infrared imaging (e.g., Windows Hello)

  - Random structured light projection


SLIDE 45 Mitigations

- Alternative/additional hardware

  - Infrared imaging (e.g., Windows Hello)

  - Random structured light projection

- Improved defense against low-resolution synthetic textures

Figure labels: Original, Downsized to 50px

SLIDE 46 Conclusion

VR-based attack on face authentication systems solely using publicly available photos of the victim

- This attack bypasses existing defenses of liveness detection and motion consistency

- At a minimum, face authentication software must improve against VR-based attacks with low-resolution textures

- The increasing ubiquity of VR will continue to challenge computer-vision-based authentication systems

SLIDE 47

Thank you!

Questions?

SLIDE 48

SLIDE 49 Overview

- Face Authentication

- Virtual U: A VR-based attack

- Evaluation

- Mitigations

- Conclusion

SLIDE 50 Evolution of Adversarial Models

- Attack: Still-image Spoofing

- Defense: Liveness Detection

- Attack: Video Spoofing

- Defense: Motion Consistency

- Attack: 3D-Printed Masks

- Defense: Texture Detection

SLIDE 51 3D Face Model

- Expression Variation (e.g., frowning-to-smiling)

- Identity Variation (e.g., thin-to-heavyset)

SLIDE 52 3D Face Model

- Expression Variation (e.g., frowning-to-smiling)

- Identity Variation (e.g., thin-to-heavyset)

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Figure labels: $\bar{S}$, $A^{id}$, $A^{exp}$

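SLIDE 53

3D Face Model

$$S = \bar{S} + A^{id}\alpha^{id} + A^{exp}\alpha^{exp}$$

Reprojection

$$\min_{P,\,\alpha^{id},\,\alpha^{exp}} \sum_{i} \lVert s_i - P S_i \rVert^2 + \beta^{id} \lVert \alpha^{id} \rVert^2 + \beta^{exp} \lVert \alpha^{exp} \rVert^2$$

Annotations: $P$ is the pose; the sum runs over all landmarks $i$; the last two terms are normalization.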
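The reprojection objective on this slide can be made concrete with a small sketch that only evaluates the energy for given parameters. It does not perform the minimization. It assumes the pose is a 2x3 camera matrix with no translation, and the helper names and the three-landmark toy model are hypothetical.

```python
from typing import List, Sequence

Point = List[float]


def model_landmarks(mean: Sequence[Point], basis_id, alpha_id,
                    basis_exp, alpha_exp) -> List[Point]:
    """Landmark i = mean[i] + sum_k alpha[k] * basis[k][i], over both bases."""
    components = list(zip(alpha_id, basis_id)) + list(zip(alpha_exp, basis_exp))
    points = []
    for i, base in enumerate(mean):
        point = list(base)
        for coeff, component in components:
            for axis in range(3):
                point[axis] += coeff * component[i][axis]
        points.append(point)
    return points


def project(P, point: Point) -> Point:
    """Apply a 2x3 camera matrix (assumed pose model) to one 3D point."""
    return [sum(p * x for p, x in zip(row, point)) for row in P]


def fitting_energy(observed, mean, basis_id, alpha_id, basis_exp, alpha_exp,
                   P, beta_id: float = 0.0, beta_exp: float = 0.0) -> float:
    """Squared reprojection error over all landmarks plus coefficient penalties."""
    landmarks = model_landmarks(mean, basis_id, alpha_id, basis_exp, alpha_exp)
    data_term = 0.0
    for s_i, S_i in zip(observed, landmarks):
        u, v = project(P, S_i)
        data_term += (s_i[0] - u) ** 2 + (s_i[1] - v) ** 2
    penalty = (beta_id * sum(a * a for a in alpha_id)
               + beta_exp * sum(a * a for a in alpha_exp))
    return data_term + penalty


# Toy setup (hypothetical): three landmarks, one identity and one expression component.
mean = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
basis_id = [[[-1.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 0.0]]]
basis_exp = [[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 1.0, 0.0]]]
P = [[2.0, 0.0, 0.0], [0.0, 2.0, 0.0]]  # scaled orthographic view

# Synthesize 2D observations from known coefficients, then score two guesses.
truth = model_landmarks(mean, basis_id, [0.5], basis_exp, [0.25])
observed = [project(P, p) for p in truth]
energy_at_truth = fitting_energy(observed, mean, basis_id, [0.5], basis_exp, [0.25], P)
energy_at_mean = fitting_energy(observed, mean, basis_id, [0.0], basis_exp, [0.0], P)
```

The energy is zero at the parameters that generated the observations and larger at the mean face. A fitting routine would search over pose and coefficients for the lowest energy.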

SLIDE 54

3D Face Model

SLIDE 55 Multi-Image Modeling

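Single image:

$$\min_{P,\,\alpha^{id},\,\alpha^{exp}} \sum_{i} \lVert s_i - P S_i \rVert^2 + \beta^{id} \lVert \alpha^{id} \rVert^2 + \beta^{exp} \lVert \alpha^{exp} \rVert^2$$

Multiple images (sum over all images $m$):

$$\min_{P,\,\alpha^{id},\,\alpha^{exp}} \sum_{m} \sum_{i} \lVert s_{mi} - P_m S_{mi} \rVert^2 + \beta^{id} \lVert \alpha^{id} \rVert^2 + \beta^{exp} \sum_{m} \lVert \alpha^{exp}_m \rVert^2$$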
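The multi-image objective differs from the single-image one in how parameters are shared: each image gets its own pose and expression coefficients, while one set of identity coefficients is shared by all images. The sketch below evaluates that energy for given parameters. The dictionary layout, helper names, 2x3 pose matrices, and toy data are assumptions for illustration.

```python
from typing import Dict, List, Sequence

Point = List[float]


def model_landmarks(mean: Sequence[Point], basis_id, alpha_id,
                    basis_exp, alpha_exp) -> List[Point]:
    """Landmark i = mean[i] + sum_k alpha[k] * basis[k][i], over both bases."""
    components = list(zip(alpha_id, basis_id)) + list(zip(alpha_exp, basis_exp))
    points = []
    for i, base in enumerate(mean):
        point = list(base)
        for coeff, component in components:
            for axis in range(3):
                point[axis] += coeff * component[i][axis]
        points.append(point)
    return points


def project(P, point: Point) -> Point:
    """Apply a 2x3 camera matrix (assumed pose model) to one 3D point."""
    return [sum(p * x for p, x in zip(row, point)) for row in P]


def multi_image_energy(images: List[Dict], mean, basis_id, alpha_id, basis_exp,
                       beta_id: float = 0.0, beta_exp: float = 0.0) -> float:
    """Sum reprojection error over images; identity is shared, the rest is per image."""
    total = beta_id * sum(a * a for a in alpha_id)  # identity penalty counted once
    for image in images:
        landmarks = model_landmarks(mean, basis_id, alpha_id,
                                    basis_exp, image["alpha_exp"])
        for s, S in zip(image["observed"], landmarks):
            u, v = project(image["P"], S)
            total += (s[0] - u) ** 2 + (s[1] - v) ** 2
        total += beta_exp * sum(a * a for a in image["alpha_exp"])
    return total


# Toy setup (hypothetical): same person in two photos with different pose and expression.
mean = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
basis_id = [[[-1.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 0.0, 0.0]]]
basis_exp = [[[0.0, 0.0, 0.0], [0.0, 0.0, 0.0], [0.0, 1.0, 0.0]]]
true_alpha_id = [0.5]
images = []
for P, alpha_exp in [([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]], [0.0]),
                     ([[2.0, 0.0, 0.0], [0.0, 2.0, 0.0]], [1.0])]:
    shape = model_landmarks(mean, basis_id, true_alpha_id, basis_exp, alpha_exp)
    images.append({"P": P, "alpha_exp": alpha_exp,
                   "observed": [project(P, p) for p in shape]})

energy_true_identity = multi_image_energy(images, mean, basis_id, true_alpha_id, basis_exp)
energy_wrong_identity = multi_image_energy(images, mean, basis_id, [0.0], basis_exp)
```

Because the identity coefficients appear in every image's residual, a wrong identity is penalized by all photos at once, which is what lets several photos constrain one face shape.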

SLIDE 56

Multi-Image Modeling

- Corners of the eyes and mouth are stable landmarks

- Contour points are variable landmarks

SLIDE 57 Multi-Image Modeling

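Multiple images:

$$\min_{P,\,\alpha^{id},\,\alpha^{exp}} \sum_{m} \sum_{i} \lVert s_{mi} - P_m S_{mi} \rVert^2 + \text{norm.}$$

Multiple images with landmark weighting (higher weighting for stable landmarks):

$$\min_{P,\,\alpha^{id},\,\alpha^{exp}} \sum_{m} \sum_{i} \frac{1}{(\sigma_i^s)^2} \lVert s_{mi} - P_m S_{mi} \rVert^2 + \text{norm.}$$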
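The effect of landmark weighting can be shown with a short sketch that scales each landmark's squared residual by the inverse square of a per-landmark spread value, so landmarks with a small spread (stable ones) count more. Treating the weight as one spread value per landmark shared across images is an assumption, as are the function name and the numbers.

```python
from typing import List, Sequence


def weighted_data_term(squared_residuals: Sequence[Sequence[float]],
                       sigmas: Sequence[float]) -> float:
    """Sum residual / sigma^2 over all images and landmarks.

    squared_residuals[m][i] is the squared reprojection error of landmark i
    in image m; sigmas[i] is the assumed spread of landmark i.
    """
    total = 0.0
    for per_image in squared_residuals:
        if len(per_image) != len(sigmas):
            raise ValueError("each image needs one residual per landmark")
        total += sum(r / (sigma * sigma) for r, sigma in zip(per_image, sigmas))
    return total


# Hypothetical values: landmark 0 is an eye corner (stable, small spread),
# landmark 1 is a contour point (variable, large spread).
sigmas = [1.0, 4.0]
squared_residuals = [[1.0, 1.0],    # image 0
                     [4.0, 16.0]]   # image 1
weighted = weighted_data_term(squared_residuals, sigmas)
unweighted = weighted_data_term(squared_residuals, [1.0, 1.0])
```

In this example the contour point's large error in the second image contributes 1.0 to the weighted sum instead of 16.0, so it no longer dominates the fit.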

SLIDE 58

SLIDE 59

SLIDE 60 Experiments

- 20 participants

  - Aged 24 to 44

  - 14 males, 6 females

  - Various ethnicities

- Two tests

  - Indoor photo of the subject in the same environment as registration

  - Publicly accessible photos

    - Anywhere from 3 to 27 photos per person

    - Low-, medium-, and high-quality

    - Potentially strong changes in appearance over time

SLIDE 61 Experiments

How does rotation affect reconstruction quality?

[Figure: reconstructions at rotations labeled 20, 30, and 40]

SLIDE 62

Experiments

Figure labels: Authentication Device, VR System, Google Cardboard