SLIDE 1

Unit 6: Introduction to linear regression

2. Outliers and inference for regression

STA 104 - Summer 2017

Duke University, Department of Statistical Science

Prof. van den Boom

Slides posted at http://www2.stat.duke.edu/courses/Summer17/sta104.001-1/

Announcements

▶ PA 6 and PS 6 due tomorrow (Tuesday) at 12:30 pm

▶ RA 7 (last one!) also tomorrow, at the start of class

▶ PS 5 grades and feedback released

▶ Final is next Wednesday, June 28; a sample exam is posted


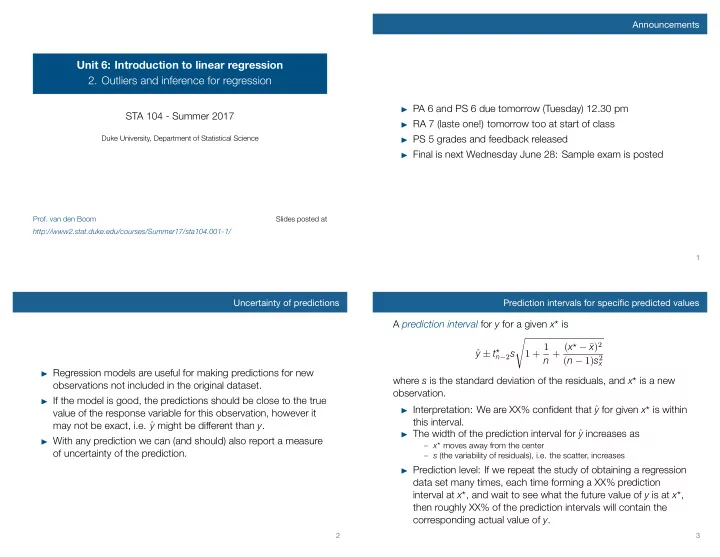

Uncertainty of predictions

▶ Regression models are useful for making predictions for new observations not included in the original dataset.

▶ If the model is good, the prediction should be close to the true value of the response variable for this observation; however, it may not be exact, i.e. $\hat{y}$ might be different from $y$.

▶ With any prediction we can (and should) also report a measure of uncertainty of the prediction.


Prediction intervals for specific predicted values

A prediction interval for $y$ for a given $x^\star$ is

$$\hat{y} \pm t^\star_{n-2}\, s \sqrt{1 + \frac{1}{n} + \frac{(x^\star - \bar{x})^2}{(n-1)\, s_x^2}}$$

where $s$ is the standard deviation of the residuals, and $x^\star$ is a new observation.
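To make the prediction interval formula concrete, here is a minimal Python sketch. It is an illustration, not course code. The function name `prediction_interval`, its arguments, and the small dataset are all made up. It assumes that s is computed as the square root of the residual sum of squares divided by n − 2, and that the critical value t⋆ is looked up in a t-table and passed in by hand.

```python
import math


def prediction_interval(x, y, x_new, t_star):
    """Return (lower, y_hat, upper) for a new observation at x_new.

    t_star is the critical value with n - 2 degrees of freedom,
    looked up in a t-table (an assumption of this sketch).
    """
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n

    # Sum of squared deviations of x; this equals (n - 1) * s_x^2.
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))

    # Least squares slope and intercept.
    b1 = sxy / sxx
    b0 = y_bar - b1 * x_bar

    # s: standard deviation of the residuals, using n - 2 degrees of
    # freedom (assumed convention for this sketch).
    residuals = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    s = math.sqrt(sum(e ** 2 for e in residuals) / (n - 2))

    y_hat = b0 + b1 * x_new
    margin = t_star * s * math.sqrt(1 + 1 / n + (x_new - x_bar) ** 2 / sxx)
    return y_hat - margin, y_hat, y_hat + margin


# Made-up data with n = 5, so df = 3 and t_star = 3.182 for 95% confidence.
x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
print(prediction_interval(x, y, x_new=3, t_star=3.182))
print(prediction_interval(x, y, x_new=6, t_star=3.182))
```

Comparing the two calls shows the role of the $(x^\star - \bar{x})^2$ term: the interval at x_new = 6 is wider than the one at x_new = 3, which is the mean of x in this example.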