Topics in Algorithms and Data Science Singular Value Decomposition (SVD)

Omid Etesami

Topics in Algorithms and Data Science Singular Value Decomposition - - PowerPoint PPT Presentation

Topics in Algorithms and Data Science Singular Value Decomposition (SVD) Omid Etesami The problem of best-fit subpace Best-fit subspace n points in d -dimensional Euclidean space are given. The best-fit subspace of dimension k minimizes the sum

Omid Etesami

n points in d-dimensional Euclidean space are given. The best-fit subspace of dimension k minimizes the sum of squared distances from the points to the subspace.

For the best-fit affine subspace, translate so that the center of mass of the points lies at the origin. Then find best-fit linear subspace.

their center of mass.

subspace l.

subspace.

ai

S0 = {0} for i = 1 to k do Si = best-fit i-dimensional subspace among those that contain Si-1

S0 S2 S1 S3

Instead of minimizing sum of squared distances we maximize sum of squared lengths of projections onto the line.

ai

1st right singular vector = v1 = argmax|v|=1 |Av| 1st singular value = |Av1|

v1

{v1,…,vi} is an orthonormal basis for Si because sum of squared lengths of projections on Si is |A vi| + sum of squared lengths of projections on Si-1. vi is the i‘th right singular vector and ơi(A) = |A vi| is the i‘th singular vector.

S0 S2 S1 S3 v1 v2

Proof by induction on k: Consider a k-dimensional subspace Tk. It has a unit vector wk orthogonal to Sk-1. Let Tk = Tk-1 + wk, such that wk is orthogonal to Tk-1.

Sk Tk v1 w2

We only consider non-zero singular values, i.e. i’th singular value is defined only for 1 ≤ i ≤ r = rank(A).

Lemma.

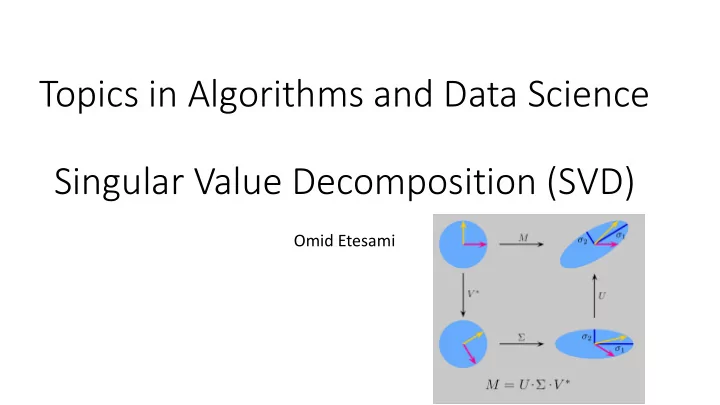

Left singular vector ui = A vi / ơi D diagonal with diagonal entries ơi U with columns ui V with columns vi

Proof:

u1 u3 u2

v2 v1 v’2 v’1 v’3 v3

ơ1 = ơ2 v’3 = -v3

Ak is the best rank-k approximation to A under Frobenius norm.

I like football John likes basketball Doc 1 1 1 2 Doc 2 2 1 1 Doc 3 1 1 1

n×d term-document matrix:

each term

When many queries, we preprocess and get We can now answer queries in O(kd + kn) time:

A u1,…,uk,v1,…,vk Ak x

preprocessing answering queries

Good when k << d, n

Spectral norm of M = ơ1

rank-4 approximation

Therefore eigenvalues of B are square of singular values of A, and eigenvectors of B are right singular vectors of A.

absolute value of eigenvalues of A are singular values of A, and eigenvectors of A are right singular vectors of A.

If ơ1 ≠ ơ2 , then Bk tends to ơ1

2k vi vi T.

Estimate of v1 = a normalized column of Bk

E.g. A may be 108 × 108 but we represent it by its say 109 nonzero entries. B may have 1016 nonzero entries, so big not even possible to write.

Use matrix-vector multiplication instead of matrix-matrix multiplication Algorithm:

Proof:

2kδ.

n costumers, d movies matrix A where aij= rating of user i for movie j

e.g. “amount of comedy”, “novelty of story”, …

user

recommend a movie or target an ad based on previous purchases

then apply SVD to recover missing entries

thus, assume stochastic models of data.

A class of stochastic models are mixture models, e.g. mixture of spherical Gaussians F = w1 p1 + … + wk pk

Given n i.i.d. samples drawn according to F, fit a mixture of k Gaussians to them. Possible solution:

(by choosing empirical mean and variance)

deviation apart, clustering unambiguous.

the same Gaussian, then |x – y|2 = 2 (d1/2 ± O(1))2 σ2

two Gaussians at distance Δ, then |x – y|2 = 2 (d1/2 ± O(1))2 σ2 + Δ2 Thus, to distinguish the two cases, we need inter-center distance Δ ≥ Ω (σ d1/4).

If we knew the subspace spanned by the k centers, we could project points on that subspace. Inter-center distances would not change, and the samples are still spherical Gaussians but now in k-space, so now a separation of Θ (σ k1/4) is enough.

for points sampled according to the mixture distribution passes through the centers. Thus, we can find the subspace by SVD

Green points = sample points

passes through the center.

is any subspace that passes through the center.

is simultaneously best for all Gaussians.

Given documents in a collection, how do we rank documents according to their relevance to the collection?

documents onto the first right singular vector.

I like football John likes basketball Doc 1 1 1 2 Doc 2 2 1 1 Doc 3 1 1 1

(with many pointers from hubs)

(with many pointers to hubs) Looks like a “circular” definition.

Begin with a random v. Iteratively set u := Av, v := AT u. Rank authorities according to v. This is same as computing 1st singular vector through power method.

Another ranking algorithm but based on random walks.

Given a directed graph, partition vertices into two sets S, T such that max number of edges from S to T are cut. It is NP-hard. Equivalently, we want to find 0-1 vector x such that xT A (1-x) is maximized.

Dark edges are cut

For constant k, maximize xT Ak (1-x) instead of xT A (1-x).

rank-4 approximation

rank-4 approximation

This is NP-hard even for k = 1. (Reduction from Set Partition problem) We approximate instead.