SLIDE 1

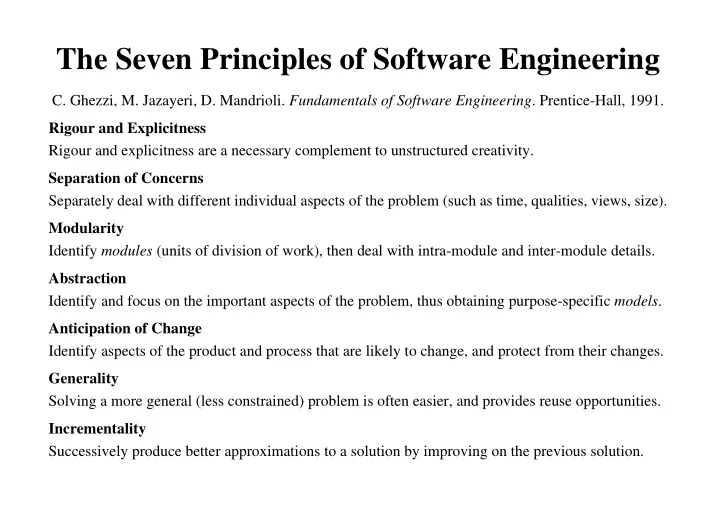

The Seven Principles of Software Engineering

- C. Ghezzi, M. Jazayeri, D. Mandrioli. Fundamentals of Software Engineering. Prentice-Hall, 1991.