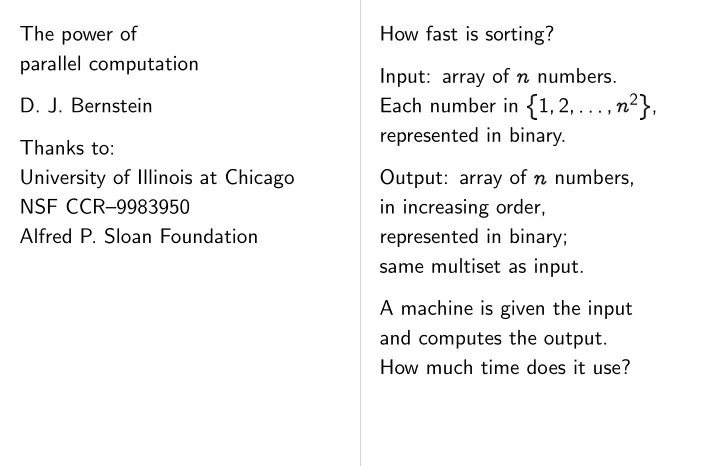

SLIDE 1 The power of parallel computation

Thanks to: University of Illinois at Chicago; NSF CCR–9983950; Alfred P. Sloan Foundation.

How fast is sorting?

Input: array of $n$ numbers. Each number in $\{1, 2, \ldots, n^2\}$, represented in binary.

Output: array of $n$ numbers, in increasing order, represented in binary; same multiset as input.

A machine is given the input and computes the output. How much time does it use?
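This minimal Python sketch makes the problem statement concrete: an input generator and an output checker. The function names `random_input` and `is_correct_output` are hypothetical. "Increasing order" is read here as non-decreasing, because the input may repeat numbers.

```python
import random
from collections import Counter


def random_input(n: int, rng: random.Random) -> list[int]:
    """Array of n numbers, each in {1, 2, ..., n**2}."""
    return [rng.randint(1, n * n) for _ in range(n)]


def is_correct_output(inp: list[int], out: list[int]) -> bool:
    """Output must be non-decreasing and the same multiset as the input."""
    in_order = all(a <= b for a, b in zip(out, out[1:]))
    return in_order and Counter(out) == Counter(inp)


xs = random_input(8, random.Random(1))
print(is_correct_output(xs, sorted(xs)))  # True
```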

SLIDE 5

The answer depends on how the machine works.

Possibility 1: The machine is a “1-tape Turing machine using selection sort.” Specifically: The machine has a 1-dimensional array containing $\Theta(n)$ “cells.” Each cell stores $\Theta(\lg n)$ bits. Input and output are stored in these cells.

The machine also has a “head” moving through the array. The head contains $\Theta(1)$ cells. The head can see the cell at its current array position; perform arithmetic etc.; move to an adjacent array position.

Selection sort: The head looks at each array position, picks up the largest number, and moves it to the end of the array; then it picks up the second largest, etc.

SLIDE 7

Moving to an adjacent array position takes $n^{o(1)}$ seconds. Moving a number to the end of the array takes $n^{1+o(1)}$ seconds. Same for comparisons etc.

Total sorting time: $n^{2+o(1)}$ seconds.

Cost of machine: $n^{1+o(1)}$ Euros for $n^{1+o(1)}$ cells. Negligible extra cost for the head.
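The head-move accounting for Possibility 1 can be sketched in Python. This is a toy cost model, not a Turing-machine simulator. One unit is one step of the head to an adjacent cell, and reading or comparing costs nothing extra. Carrying the displaced number back to the vacated cell is an assumption of this sketch, standing in for "moves it to the end of the array." The move count grows quadratically in $n$, in line with the $n^{2+o(1)}$ total.

```python
def tape_selection_sort(values: list[int]) -> tuple[list[int], int]:
    """Selection sort on a 1-dimensional array, counting head moves.

    Cost model (an assumption of this sketch): each step of the head to an
    adjacent cell counts as one move; reading and comparing are free.
    """
    cells = list(values)
    head = 0   # current head position
    moves = 0  # total adjacent-cell steps so far

    def move_to(pos: int) -> None:
        nonlocal head, moves
        moves += abs(pos - head)  # the head only steps one cell at a time
        head = pos

    for end in range(len(cells) - 1, 0, -1):
        # Look at each position 0..end, remembering where the largest number is.
        move_to(0)
        best = 0
        for pos in range(1, end + 1):
            move_to(pos)
            if cells[pos] > cells[best]:
                best = pos
        # Pick up the largest number, carry it to position `end`,
        # then carry the number that was there back to the vacated cell.
        move_to(best)
        move_to(end)
        move_to(best)
        cells[best], cells[end] = cells[end], cells[best]
    return cells, moves


for n in (100, 200, 400):
    _, moves = tape_selection_sort(list(range(n, 0, -1)))
    print(n, moves)  # moves roughly quadruple each time n doubles
```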

SLIDE 9

Possibility 2: The machine is a “2-dimensional RAM using merge sort.” The machine has $\Theta(n)$ cells in a 2-dimensional array: $\Theta(\sqrt{n})$ rows, $\Theta(\sqrt{n})$ columns. The machine also has a head.

Merge sort: The head recursively sorts the first $\lceil n/2 \rceil$ numbers; sorts the last $\lfloor n/2 \rfloor$ numbers; merges the sorted lists.
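Here is a plain Python version of the merge sort recursion, splitting into the first $\lceil n/2 \rceil$ and the last $\lfloor n/2 \rfloor$ numbers. It shows only the comparisons and data flow. It does not model the cost of the head jumping around a 2-dimensional array.

```python
def merge_sort(xs: list[int]) -> list[int]:
    """Recursively sort the first ceil(n/2) and last floor(n/2) numbers, then merge."""
    if len(xs) <= 1:
        return list(xs)
    half = (len(xs) + 1) // 2  # ceil(n/2)
    left = merge_sort(xs[:half])
    right = merge_sort(xs[half:])  # the remaining floor(n/2) numbers
    merged: list[int] = []
    i = j = 0
    # Repeatedly take the smaller front element of the two sorted lists.
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


print(merge_sort([3, 1, 4, 1, 5, 9, 2, 6]))  # [1, 1, 2, 3, 4, 5, 6, 9]
```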

SLIDE 11

Merging requires $n^{1+o(1)}$ jumps to “random” array positions. Average jump: $n^{0.5+o(1)}$ moves to adjacent array positions. Each move takes $n^{o(1)}$ seconds.

Total sorting time: $n^{1.5+o(1)}$ seconds.

Cost of machine: once again $n^{1+o(1)}$ Euros.
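This sketch shows where an average jump of about $\sqrt{n}$ moves can come from. It rests on two assumptions: a jump walks between adjacent cells along rows and columns, and its endpoints are independent and uniformly random. Under those assumptions, the average jump in an $s \times s$ array is $2(s^2-1)/(3s) \approx \tfrac23 s$ moves. That is about $\tfrac23\sqrt{n}$ when $n = s^2$. The Python below checks this closed form against direct enumeration.

```python
from fractions import Fraction


def avg_jump_enumerated(s: int) -> Fraction:
    """Average Manhattan distance between two uniformly random cells of an
    s-by-s array, by enumerating all pairs of cells."""
    total = 0
    for r1 in range(s):
        for c1 in range(s):
            for r2 in range(s):
                for c2 in range(s):
                    total += abs(r1 - r2) + abs(c1 - c2)
    return Fraction(total, s ** 4)


def avg_jump_formula(s: int) -> Fraction:
    # Rows and columns each contribute E|i - j| = (s^2 - 1) / (3s).
    return 2 * Fraction(s * s - 1, 3 * s)


print(float(avg_jump_formula(1000)))  # n = 10**6 cells: about 666.67 moves
```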

SLIDE 13

Possibility 3: The machine is a “pipelined 2-dimensional RAM using radix-2 sort.” The machine has $\Theta(n)$ cells in a 2-dimensional array. Each cell in the array has network links to the 2 adjacent cells in the same column. Each cell in the top row has network links to the 2 adjacent cells in the top row. The machine also has a CPU attached to the top-left cell.

SLIDE 15

Radix-2 sort: The CPU shuffles the array using bit 0, putting even numbers before odd numbers and otherwise keeping the order:
3 1 4 1 5 9 2 6 → 4 2 6 3 1 1 5 9.
Then using bit 1: 4 1 1 5 9 2 6 3. Then using bit 2: 1 1 9 2 3 4 5 6. Then using bit 3: 1 1 2 3 4 5 6 9.
Continue through all $\Theta(\lg n)$ bits.
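The radix-2 passes can be reproduced with a short Python sketch. Each pass is stable: numbers whose current bit is 0 keep their relative order and move to the front. For 3 1 4 1 5 9 2 6, the list of arrays it returns matches the example above. On the pipelined RAM, each pass is a stream of reads and writes through the network. This sketch ignores that and shows only the data movement.

```python
def radix2_pass(xs: list[int], bit: int) -> list[int]:
    """Stable shuffle by one bit: numbers with that bit 0 go first, order kept."""
    zeros = [x for x in xs if ((x >> bit) & 1) == 0]
    ones = [x for x in xs if ((x >> bit) & 1) == 1]
    return zeros + ones


def radix2_sort_steps(xs: list[int]) -> list[list[int]]:
    """Return the array before the first pass and after every pass (bit 0 first)."""
    steps = [list(xs)]
    for bit in range(max(xs, default=0).bit_length()):
        steps.append(radix2_pass(steps[-1], bit))
    return steps


for row in radix2_sort_steps([3, 1, 4, 1, 5, 9, 2, 6]):
    print(*row)
```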

SLIDE 17

The CPU can read or write any cell by sending a request through the network. It does not need to wait for a response before sending the next request. The CPU can read an entire row, $n^{0.5+o(1)}$ cells, in $n^{0.5+o(1)}$ seconds: it sends all the requests, then receives the responses.

Total sorting time: $n^{1+o(1)}$ seconds.

Cost of machine: once again $n^{1+o(1)}$ Euros.
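This back-of-the-envelope Python model shows why pipelining matters. Its assumptions are this sketch's own, not details from the slides:

- a message crosses one link per time step;
- the request for cell $(r, c)$ travels $c$ hops along the top row and $r$ hops down column $c$;
- link contention is ignored.

Waiting for each response costs time quadratic in the row length $s$. Sending every request first costs time linear in $s$.

```python
def row_read_time(r: int, s: int, pipelined: bool) -> int:
    """Time steps for the CPU at the top-left cell to read all s cells of row r.

    Hypothetical cost model: a message crosses one link per time step; the
    request for cell (r, c) goes c hops along the top row and r hops down
    column c, and the response returns the same way; contention is ignored.
    Requires s >= 1.
    """
    round_trip = [2 * (r + c) for c in range(s)]
    if not pipelined:
        # Wait for each response before sending the next request.
        return sum(round_trip)
    # Send one request per time step (request c leaves at time c), then
    # collect the responses; the last one to arrive determines the time.
    return max(c + round_trip[c] for c in range(s))


s = 1000  # a row of a 1000-by-1000 array, n = 10**6
print(row_read_time(s - 1, s, pipelined=False))  # 2997000
print(row_read_time(s - 1, s, pipelined=True))   # 4995
```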

SLIDE 19

Possibility 4: The machine is a “2-dimensional mesh using Schimmler sort.” The machine has $\Theta(n)$ cells in a 2-dimensional array. Each cell has network links to the 4 adjacent cells. The machine also has a CPU attached to the top-left cell. The CPU broadcasts instructions to all of the cells, but the cells do most of the processing.

SLIDE 21

Schimmler sort: Recursively sort the quadrants in parallel. Then four steps: sort each column in parallel; sort each row in parallel; sort each column in parallel; sort each row in parallel. With the proper choice of left-to-right or right-to-left order for each row, one can prove that this sorts the whole array.

SLIDE 23

To sort one row: Sort each pair in parallel:
3 1 4 1 5 9 2 6 → 1 3 1 4 5 9 2 6.
Sort alternate pairs in parallel:
1 3 1 4 5 9 2 6 → 1 1 3 4 5 2 9 6.
Repeat. One can prove that the row is sorted when the number of steps equals the row length.
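The row-sorting procedure above is odd-even transposition sort. Below is a sequential Python sketch that records the row after each step. On a mesh, all the compare-exchanges within one step touch disjoint pairs, so they can run in parallel.

```python
def odd_even_transposition_steps(row: list[int]) -> list[list[int]]:
    """Return the row initially and after each step. Even-numbered steps sort
    pairs (0,1), (2,3), ...; odd-numbered steps sort the alternate pairs
    (1,2), (3,4), .... Within one step the pairs are disjoint, so a mesh can
    handle them all in parallel; this sketch does them one after another."""
    row = list(row)
    history = [list(row)]
    for step in range(len(row)):  # number of steps = row length
        for i in range(step % 2, len(row) - 1, 2):
            if row[i] > row[i + 1]:
                row[i], row[i + 1] = row[i + 1], row[i]
        history.append(list(row))
    return history


steps = odd_even_transposition_steps([3, 1, 4, 1, 5, 9, 2, 6])
print(steps[1])   # [1, 3, 1, 4, 5, 9, 2, 6]
print(steps[2])   # [1, 1, 3, 4, 5, 2, 9, 6]
print(steps[-1])  # [1, 1, 2, 3, 4, 5, 6, 9]
```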

SLIDE 25

Sort one row in $n^{0.5+o(1)}$ seconds. All rows in parallel: $n^{0.5+o(1)}$ seconds.

Total sorting time: $n^{0.5+o(1)}$ seconds.

Cost of machine: once again $n^{1+o(1)}$ Euros.
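This sketch bounds the number of steps, not the full correctness proof. It counts compare-exchange steps on an $s \times s$ mesh, where $s = \sqrt{n}$ is assumed to be a power of 2. It also assumes each row or column sort uses the $s$-step pair-sorting method above. Under these assumptions, the recursion for Schimmler sort unrolls as follows:

$$
T(s) = T(s/2) + 4s,\qquad T(1) = 0
\quad\Longrightarrow\quad
T(s) = 4\left(s + \tfrac{s}{2} + \tfrac{s}{4} + \cdots + 2\right) = 8s - 8 < 8\sqrt{n}.
$$

Each step only moves data between adjacent cells. At $n^{o(1)}$ seconds per step, this is consistent with the $n^{0.5+o(1)}$ total sorting time.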

SLIDE 27

Some philosophical notes

1-tape Turing machines, RAMs, and 2-dimensional meshes compute the same functions. Prove this by proving that each machine can simulate computations on the others. (We believe that every reasonable model of computation can be simulated by a 1-tape Turing machine: the “Church-Turing thesis.”)

SLIDE 29

1-tape Turing machines, RAMs, and 2-dimensional meshes compute the same functions in polynomial time at polynomial cost. Prove this by proving that the simulations are polynomial. (Is this true for every reasonable model of computation? Consider quantum computers.)

SLIDE 31

1-tape Turing machines, RAMs, and 2-dimensional meshes do not compute the same functions within, e.g., time $n^{1+o(1)}$ and cost $n^{1+o(1)}$.

Example: A 1-tape Turing machine cannot sort in time $n^{1+o(1)}$. Too local!

Example: A 2-dimensional RAM cannot sort in time $n^{0.5+o(1)}$. Too sequential!

SLIDE 33

The $o(1)$ is asymptotic. A speedup factor such as $n^{0.5+o(1)}$ might not be a speedup for small values of $n$. When $n$ is small, a RAM might seem to be a sensible machine design. But, for large $n$, having a huge memory waiting for a single CPU is a silly machine design.

SLIDE 35

Myth: Parallel computation cannot improve the price-performance ratio; parallel computers may reduce time by some factor but increase cost by the same factor.

Reality: One can often convert a large serial computer into many small parallel cells, so cost does not increase by that factor.

SLIDE 37

Myth: Designing a new machine cannot produce more than a small constant-factor improvement compared to, e.g., a Pentium. What matters is special-purpose streamlining, such as reducing instruction-decoding costs.

Reality: In 1997, DES Cracker was 1000 times faster than a set of Pentiums at the same price. What matters is parallelism.

SLIDE 39

Future computers will be massively parallel meshes. Computer designers will laugh at today’s RAM-style machines, just as we laugh at a 1-tape Turing machine. Algorithm experts will laugh at today’s dominant style of algorithm analysis, where we count CPU “operations” and view memory access as free.

SLIDE 41

Brute-force searches

For each 128-bit AES key $k$ define $f(k) = \mathrm{AES}_k(0)$. Typical known-plaintext attack: given $f(k)$, want to find $k$.

The cryptanalyst builds a machine with $p$ parallel AES circuits, each guessing $t$ keys, for a total of $pt$ guesses. Time: $t$ AES evaluations. Cost: $p$ AES circuits. Success chance: $pt/2^{128}$.
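This toy Python model mimics the brute-force machine. Python's standard library has no AES, so SHA-256 truncated to 64 bits stands in for $f(k) = \mathrm{AES}_k(0)$, and keys have only 20 bits. The names `brute_force`, `p` (number of circuits) and `t` (guesses per circuit) are hypothetical. Circuit $i$ tries keys $it, \ldots, it + t - 1$. The loop over circuits runs sequentially here, whereas the real machine runs them simultaneously, so its time is $t$ evaluations.

```python
import hashlib
from typing import Optional

KEY_BITS = 20  # toy key size; AES keys have 128 bits


def f(k: int) -> int:
    """Stand-in for f(k) = AES_k(0): SHA-256 of the key, truncated to 64 bits."""
    digest = hashlib.sha256(k.to_bytes(4, "big")).digest()
    return int.from_bytes(digest[:8], "big")


def brute_force(target: int, p: int, t: int) -> Optional[int]:
    """p circuits; circuit i guesses keys i*t, ..., i*t + t - 1.
    Returns a key k with f(k) == target, or None if no guess matched."""
    for i in range(p):  # the real machine runs these p circuits simultaneously
        for k in range(i * t, (i + 1) * t):
            if f(k) == target:
                return k
    return None


def success_chance(p: int, t: int) -> float:
    """Fraction of the key space covered by p * t distinct guesses."""
    return p * t / 2 ** KEY_BITS


secret = 123_456
print(success_chance(16, 1 << 16))                       # 1.0: all 2**20 keys covered
print(brute_force(f(secret), 16, 1 << 16) is not None)  # True
```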

SLIDE 43

The cryptanalyst is actually attacking many AES keys: wants to find $k_1, k_2, \ldots$ given $f(k_1), f(k_2), \ldots$.

Rivest’s “time-memory tradeoff using distinguished points” merges these computations. For any 128-bit $x$: compute $f(x), f(f(x)), \ldots$ until finding a string that begins with 30 zero bits. Call that string $D(x)$.

SLIDE 45

Given $f(k_1), f(k_2), \ldots, f(k_m)$: Choose random $x_1, x_2, \ldots, x_m$. Store $D(x_1), D(x_2), \ldots, D(x_m)$, the distinguished points reached from the $x_j$, in an array in RAM. Compute each $D(f(k_i))$; look up $D(f(k_i))$ in the array. If $D(f(k_i)) = D(x_j)$, check whether $f(k_i)$ matches any of $f(x_j), f(f(x_j)), \ldots$.

Details: avoid infinite loops; handle multiple collisions.
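This toy Python sketch shows the distinguished-points lookup. All names and sizes are assumptions of the sketch:

- SHA-256 truncated to 24 bits stands in for AES;
- a distinguished point has 8 leading zero bits instead of 30;
- the names `D`, `attack` and `MAX_STEPS` are illustrative.

The slide's "details" are handled crudely: a step cap stops cycles, and each endpoint maps to a list of chains. Because $f$ here is not injective, a match yields only a candidate key.

```python
import hashlib
from typing import Optional

BITS = 24                    # toy key size; the slides use 128-bit AES keys
DIST_BITS = 8                # distinguished: top 8 bits zero (slides: 30 zero bits)
MAX_STEPS = 64 << DIST_BITS  # chain-length cap, to avoid infinite loops


def f(k: int) -> int:
    """Toy stand-in for f(k) = AES_k(0): SHA-256 of k, truncated to BITS bits."""
    digest = hashlib.sha256(k.to_bytes(4, "big")).digest()
    return int.from_bytes(digest[:4], "big") >> (32 - BITS)


def is_distinguished(y: int) -> bool:
    return y >> (BITS - DIST_BITS) == 0


def D(x: int) -> Optional[tuple[int, int]]:
    """Compute f(x), f(f(x)), ... until a distinguished string appears.
    Returns (that string, number of f evaluations), or None if the cap is hit."""
    y = x
    for steps in range(1, MAX_STEPS + 1):
        y = f(y)
        if is_distinguished(y):
            return y, steps
    return None


def attack(targets: list[int], starts: list[int]) -> dict[int, int]:
    """targets[i] = f(k_i); starts = the random x_j. Returns {i: candidate key}."""
    table: dict[int, list[int]] = {}
    for j, x in enumerate(starts):
        end = D(x)
        if end is not None:
            # Several chains can merge and share an endpoint, so keep a list.
            table.setdefault(end[0], []).append(j)
    found: dict[int, int] = {}
    for i, y in enumerate(targets):
        end = D(y)
        if end is None:
            continue
        for j in table.get(end[0], []):
            # Walk chain j, checking y against f(x_j), f(f(x_j)), ...
            z = starts[j]
            for _ in range(MAX_STEPS):
                w = f(z)
                if w == y:
                    found[i] = z  # f(z) == f(k_i), so z is a candidate for k_i
                    break
                if is_distinguished(w):
                    break  # reached the end of chain j: a false alarm
                z = w
            if i in found:
                break
    return found
```

By the heuristic on the next slide, with $m$ targets and $m$ starting points, this toy should succeed with chance roughly $2^{8}m^2/2^{24}$.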

SLIDE 47

Heuristic analysis: Computing $D(x_1), D(x_2), \ldots, D(x_m)$ involves $\approx 2^{30}m$ outputs of $f$. If any of the inputs match $k_1$ then we’ll find $k_1$. Chance $\approx 2^{30}m/2^{128}$. Same for $k_2, k_3, \ldots$. Total chance $\approx 2^{30}m^2/2^{128}$ of finding at least one key.

On a serial computer: $\approx 2^{31}m$ AES evaluations. Cost: $\approx 128m$ bits of memory.

SLIDE 49

Much better: Massive parallelism. Compute all the $D$ values in parallel, using $m$ AES circuits. Use Schimmler sort to find collisions $D(f(k_i)) = D(x_j)$.

Time: $2^{31}$ AES evaluations, plus $8\sqrt{m}$ Schimmler steps. About $m$ times faster than serial.

Cost: $m$ AES circuits, plus network links. Maybe 100 times more expensive than serial. Can reduce the 100.
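The idea of finding collisions by sorting, rather than by random-access lookups, can be sketched in Python. Tag each $D(x_j)$ and each $D(f(k_i))$, sort all tagged values, and read the matches off runs of equal adjacent values. Python's built-in `sort` stands in for a parallel mesh sort such as Schimmler sort. The function name and tagging scheme are illustrative assumptions.

```python
def collisions_by_sorting(dx: list[int], dy: list[int]) -> list[tuple[int, int]]:
    """Return every (i, j) with dy[i] == dx[j], found by sorting instead of lookups."""
    # Tag each value with its origin: 0 for a D(x_j) entry, 1 for a D(f(k_i)) entry.
    tagged = [(v, 0, j) for j, v in enumerate(dx)]
    tagged += [(v, 1, i) for i, v in enumerate(dy)]
    tagged.sort()  # stand-in for a parallel mesh sort such as Schimmler sort
    pairs = []
    run_start = 0
    for pos in range(1, len(tagged) + 1):
        # A run of equal values ends at pos: pair each dy entry with each dx entry.
        if pos == len(tagged) or tagged[pos][0] != tagged[run_start][0]:
            run = tagged[run_start:pos]
            x_idx = [idx for _, side, idx in run if side == 0]
            y_idx = [idx for _, side, idx in run if side == 1]
            pairs.extend((i, j) for i in y_idx for j in x_idx)
            run_start = pos
    return sorted(pairs)


print(collisions_by_sorting([5, 7, 5, 9], [7, 1, 5]))  # [(0, 1), (2, 0), (2, 2)]
```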

SLIDE 51

Sieving

The “number-field sieve” (NFS) is today’s fastest method to factor a big RSA key $n$.

Most important NFS bottleneck: find the small prime divisors of each integer in a long interval of consecutive integers. Example:
1000002: divisible by 2, 3
1000003: no small prime divisors
1000004: divisible by 2, 2
1000005: divisible by 3, 5
1000006: divisible by 2, 7

SLIDE 53

Conventional sieving/TWINKLE (e.g., 2000 Silverman; 2000 Lenstra, Shamir): Generate pairs (2, 1000002), (2, 1000004), (2, 1000006), …, (3, 1000002), (3, 1000005), …, etc. Use distribution sort to sort by second component.

$y^{1+o(1)}$ pairs, where $y$ measures the size of the sieving problem. Sorting time $y^{1+o(1)}$; machine cost $y^{1+o(1)}$.
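The pair-generation and sort-by-second-component approach can be sketched in Python for the interval above. This sketch makes three assumptions. It treats primes up to 7 as "small." It generates one extra pair per prime power so that repeated factors such as 2, 2 appear. It uses Python's stable `sort` in place of a distribution sort. The names `small_primes` and `sieve_by_sorting` are hypothetical.

```python
def small_primes(bound: int) -> list[int]:
    """Primes up to bound, by trial division (fine for tiny bounds)."""
    return [p for p in range(2, bound + 1) if all(p % q for q in range(2, p))]


def sieve_by_sorting(start: int, length: int, bound: int) -> dict[int, list[int]]:
    """Prime divisors up to `bound`, with multiplicity, of start, ..., start + length - 1."""
    last = start + length - 1
    pairs = []
    for p in small_primes(bound):
        q = p
        while q <= last:  # each prime power p^e adds one more pair (p, a)
            first = -(-start // q) * q  # smallest multiple of q that is >= start
            for a in range(first, last + 1, q):
                pairs.append((p, a))
            q *= p
    # Sort by second component (stable, so each integer's primes stay ascending).
    pairs.sort(key=lambda pair: pair[1])
    result: dict[int, list[int]] = {a: [] for a in range(start, last + 1)}
    for p, a in pairs:
        result[a].append(p)
    return result


for a, ps in sieve_by_sorting(1_000_002, 5, 7).items():
    print(a, ps)
```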

SLIDE 55 For the same machine cost, achieve much higher speed by massive parallelism. E.g. Schimmler sort: sorting time $y^{0.5+o(1)}$; machine cost $y^{1+o(1)}$.

This drastically reduces overall NFS time for sufficiently large $n$.

(2001 Bernstein)
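
Schimmler sort itself is fairly intricate. As a plainly swapped-in stand-in, here is a minimal Python simulation of a simpler mesh algorithm, shearsort. It is a different algorithm, not Schimmler sort, and it only illustrates the cost model on the slide:

- A side × side mesh has side² cells, so machine cost is proportional to the input size.
- Each phase sorts all rows, or all columns, at once using odd-even transposition.
- The step counter reports 2·side·⌈log₂ side⌉ + side parallel steps, i.e. (input size)^{0.5+o(1)}. Schimmler sort removes the log factor.

The function names are hypothetical, and the Python loops run sequentially what the mesh would do in parallel.

```python
import random


def odd_even_transposition(line, descending=False):
    """Sort a 1-D line of cells in place; len(line) rounds always suffice."""
    n = len(line)
    for rnd in range(n):
        # Within one round the compare-exchanges touch disjoint pairs,
        # so on a real mesh they all happen in one parallel step.
        for i in range(rnd % 2, n - 1, 2):
            a, b = line[i], line[i + 1]
            out_of_order = a < b if descending else a > b
            if out_of_order:
                line[i], line[i + 1] = b, a
    return n  # parallel steps used


def shearsort(grid):
    """Sort a rows x cols grid in place into snake order; return parallel steps."""
    rows, cols = len(grid), len(grid[0])
    steps = 0

    def row_phase():
        # All rows at once: even rows ascending, odd rows descending.
        for r in range(rows):
            odd_even_transposition(grid[r], descending=(r % 2 == 1))
        return cols

    def column_phase():
        # All columns at once, smallest values moving to the top.
        for c in range(cols):
            column = [grid[r][c] for r in range(rows)]
            odd_even_transposition(column)
            for r in range(rows):
                grid[r][c] = column[r]
        return rows

    for _ in range((rows - 1).bit_length()):  # ceil(log2(rows)) row+column phases
        steps += row_phase()
        steps += column_phase()
    steps += row_phase()  # final row phase
    return steps


def snake_order(grid):
    """Read the grid left-to-right on even rows, right-to-left on odd rows."""
    out = []
    for r, row in enumerate(grid):
        out.extend(row if r % 2 == 0 else reversed(row))
    return out


rng = random.Random(1)
side = 8
values = [rng.randrange(1000) for _ in range(side * side)]
grid = [values[r * side:(r + 1) * side] for r in range(side)]
print(shearsort(grid), snake_order(grid) == sorted(values))  # 56 True
```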

SLIDE 57 Can do even better with low-memory small-divisor algorithms, such as the elliptic-curve method (ECM). Time only $y^{0+o(1)}$; machine cost $y^{1+o(1)}$.

This further reduces overall NFS time for sufficiently large $n$. (2001 Bernstein)

Can also save time in another bottleneck, “linear algebra”; less important. (2001 Bernstein)
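
To show why ECM needs so little memory, here is a minimal Python sketch of stage 1 of Lenstra's elliptic-curve method. Each curve carries only a few residues modulo the input. A prime p is found when the point's order on the random curve modulo p divides k = lcm(1, ..., bound), which makes a modular inversion fail.

The sketch rests on several assumptions:

- It uses short Weierstrass curves in affine coordinates.
- The parameters `bound=50` and `curves=200` are hypothetical.
- There is no stage 2.
- It needs Python 3.8+ for `pow(a, -1, n)`.

Real ECM implementations use faster curve shapes and a second stage.

```python
import math
import random


class FactorFound(Exception):
    """A failed modular inversion: gcd(denominator, n) is a divisor of n."""

    def __init__(self, divisor):
        super().__init__(divisor)
        self.divisor = divisor


def inverse_mod(a, n):
    a %= n
    g = math.gcd(a, n)
    if g != 1:
        raise FactorFound(g)  # g may be n itself (a trivial divisor)
    return pow(a, -1, n)


def ec_add(P, Q, a, n):
    """Add points on y^2 = x^3 + a*x + b modulo n; None is the point at infinity."""
    if P is None:
        return Q
    if Q is None:
        return P
    (x1, y1), (x2, y2) = P, Q
    if x1 == x2 and (y1 + y2) % n == 0:
        return None  # P = -Q modulo n
    if P == Q:
        lam = (3 * x1 * x1 + a) * inverse_mod(2 * y1, n) % n
    else:
        lam = (y2 - y1) * inverse_mod(x2 - x1, n) % n
    x3 = (lam * lam - x1 - x2) % n
    return (x3, (lam * (x1 - x3) - y1) % n)


def ec_mul(k, P, a, n):
    """Double-and-add scalar multiplication k*P."""
    result = None
    while k:
        if k & 1:
            result = ec_add(result, P, a, n)
        P = ec_add(P, P, a, n)
        k >>= 1
    return result


def ecm_stage1(n, bound=50, curves=200, seed=0):
    """Try random curves; return a nontrivial divisor of n, or None."""
    rng = random.Random(seed)
    k = 1
    for m in range(2, bound + 1):
        k = k * m // math.gcd(k, m)  # k = lcm(1, 2, ..., bound)
    for _ in range(curves):
        x, y, a = (rng.randrange(n) for _ in range(3))
        b = (y * y - x ** 3 - a * x) % n  # choose b so that (x, y) is on the curve
        g = math.gcd(4 * a ** 3 + 27 * b * b, n)
        if 1 < g < n:
            return g  # the discriminant already shares a factor with n
        if g == n:
            continue  # singular curve modulo n; try another
        try:
            ec_mul(k, (x, y), a, n)
        except FactorFound as found:
            if 1 < found.divisor < n:
                return found.divisor
    return None


print(ecm_stage1(455839))  # 455839 = 599 * 761
```

The state per curve is a handful of integers the size of n. Many independent small circuits can therefore each run curves on their own values without a large shared memory.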

SLIDE 59 NFS price-performance ratio is $\exp\bigl((c + o(1))(\log n)^{1/3}(\log\log n)^{2/3}\bigr)$, assuming standard conjectures, where $c$ depends on the machines used for the two main NFS steps:

| sieving | linear algebra | $c$ |
| --- | --- | --- |
| RAM | RAM | 2.85... |
| RAM | RAM | 2.76... |
| Schimmler | RAM | 2.37... |
| Schimmler | Schimmler | 2.36... |
| ECM | RAM | 2.08... |
| ECM | Schimmler | 1.97... |

(RAM 2.85...: standard; 2.37..., 1.97...: 2001.11 Bernstein; RAM 2.76...: 2002.04 Pomerance)

SLIDE 61 Switching from RAM to a massively parallel machine produces gigantic NFS speedups for sufficiently large $n$.

Improvement from conventional RAM factorization, $c = 2.85\ldots$, to the best machine, $c = 1.97\ldots$, corresponds, at equal price-performance, to multiplying the number of digits of $n$ by $3.009\ldots + o(1)$.
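
Here is a short worked check of the digit multiplier. It assumes the comparison is made at equal price-performance. It writes $n'$ (a symbol introduced here for illustration) for the largest key the best machine handles at the price-performance the conventional RAM approach needs for $n$. Equal price-performance means equal exponents:

$$
(2.85\ldots + o(1))\,(\log n)^{1/3}(\log\log n)^{2/3}
= (1.97\ldots + o(1))\,(\log n')^{1/3}(\log\log n')^{2/3}.
$$

Since $\log n'$ is a constant multiple of $\log n$, we have $\log\log n' / \log\log n \to 1$, so

$$
\frac{\log n'}{\log n} = \left(\frac{2.85\ldots}{1.97\ldots}\right)^{3} + o(1).
$$

The number of digits is proportional to $\log n$. With only the truncated values, the cube lies between $(2.85/1.98)^3 \approx 2.98$ and $(2.86/1.97)^3 \approx 3.06$, consistent with $3.009\ldots$.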

SLIDE 63 As always, $o(1)$ is asymptotic: it says nothing about any specific size of $n$.

Situation for small $n$: How expensive is it to factor 1024-bit RSA keys? We still don’t know. One can now find many papers making wild predictions. None of the predictions can be taken seriously!

SLIDE 65 NFS speed is complicated. Example: NFS factors $n$ using an auxiliary polynomial. The number of polynomial choices is huge, and the effect of a polynomial choice takes time to compute. Some papers don’t put enough effort into polynomial choice, so they underestimate NFS speed. Some papers make unjustified optimal-polynomial extrapolations, so they overestimate NFS speed.
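
One classical way to pick the auxiliary polynomial is the base-m method. It is shown here only to make "the number of polynomial choices is huge" concrete. Write n in base m for some m near $n^{1/d}$. The digits give a degree-d polynomial f with f(m) = n, hence f(m) ≡ 0 (mod n). Every nearby m, and every degree d, gives another candidate. Judging which candidate makes sieving fastest is the costly part. The function names and the toy key are hypothetical, and real polynomial selection searches far more cleverly than this sketch.

```python
def integer_root(n, d):
    """Largest integer r with r**d <= n, for n >= 1 (integer binary search, no floats)."""
    lo, hi = 1, 1 << (n.bit_length() // d + 1)  # hi**d > n
    while lo < hi:
        mid = (lo + hi + 1) // 2
        if mid ** d <= n:
            lo = mid
        else:
            hi = mid - 1
    return lo


def base_m_polynomial(n, d, m):
    """Coefficients c[0..d] (lowest first) with sum(c[i] * m**i) == n."""
    coeffs = []
    rest = n
    for _ in range(d):
        rest, digit = divmod(rest, m)
        coeffs.append(digit)  # base-m digit, 0 <= digit < m
    coeffs.append(rest)  # leading coefficient; small when m is close to n**(1/d)
    return coeffs


def evaluate(coeffs, x):
    """Horner evaluation of the polynomial with the given coefficients at x."""
    value = 0
    for c in reversed(coeffs):
        value = value * x + c
    return value


n = 1000003 * 1000033  # a toy stand-in for an RSA key
d = 3
m0 = integer_root(n, d)
for m in range(m0 - 2, m0 + 1):  # a few of the many possible choices of m
    f = base_m_polynomial(n, d, m)
    print(m, f, evaluate(f, m) == n)
```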

SLIDE 67 At a lower level, today’s massively parallel computers are much less streamlined than today’s Pentiums. The computer market will evolve. Massive parallelism will become the de-facto standard, and will be tuned carefully. How much speed will we gain? Today it’s hard to say. But we’ll find out!