
Lecture 10: Sorting algorithms


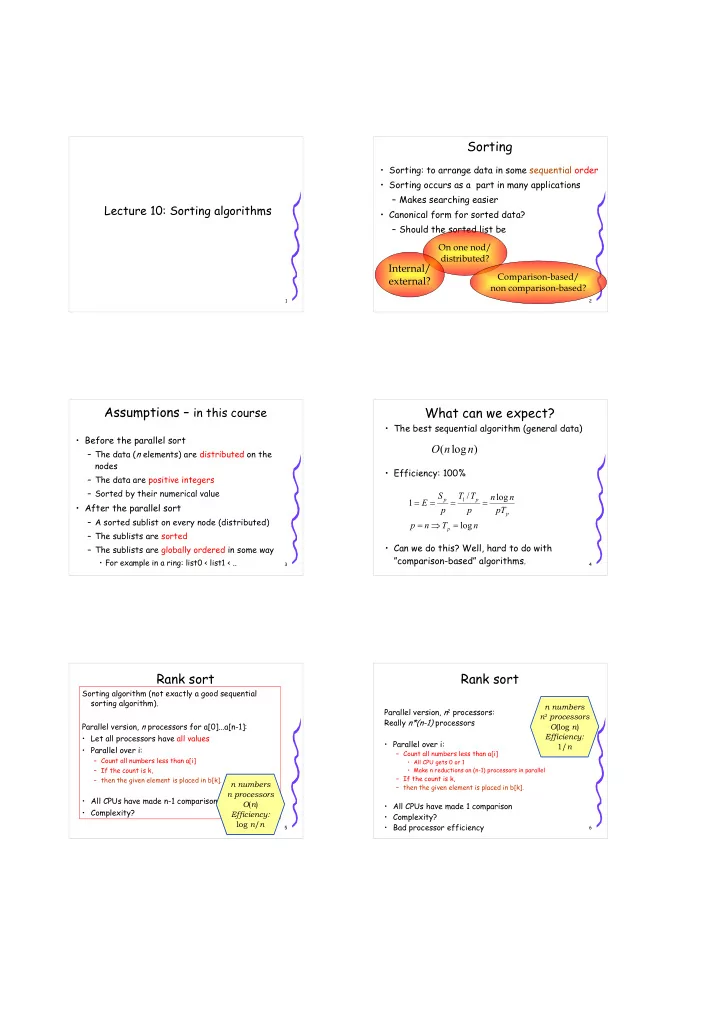

Sorting

- Sorting: to arrange data in some sequential order

- Sorting occurs as a part of many applications

– Makes searching easier

- Canonical form for sorted data?

– Should the sorted list be on one node or distributed?

– Internal or external?

– Comparison-based or non-comparison-based?


Assumptions – in this course

- Before the parallel sort

– The data (n elements) are distributed on the nodes

– The data are positive integers

– Sorted by their numerical value

- After the parallel sort

– A sorted sublist on every node (distributed)

– The sublists are sorted

– The sublists are globally ordered in some way

- For example, in a ring: list0 < list1 < ...
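The postcondition above (sorted sublists that are also globally ordered) can be expressed as a small check. This is only an illustrative sketch: the name `is_globally_sorted` and the list-of-lists model of the nodes are assumptions, not part of the course material, and `<=` is used instead of `<` so that duplicate values are allowed.

```python
def is_globally_sorted(sublists):
    """Return True if every sublist is sorted and list0 <= list1 <= ... holds.

    `sublists[k]` models the data held by node k (hypothetical representation).
    """
    previous_max = None
    for sub in sublists:
        # Local condition: the sublist on this node must itself be sorted.
        if any(sub[i] > sub[i + 1] for i in range(len(sub) - 1)):
            return False
        if sub:
            # Global condition: nothing on this node may be smaller than
            # the largest element held by any earlier node.
            if previous_max is not None and sub[0] < previous_max:
                return False
            previous_max = sub[-1]
    return True
```

For instance, `[[1, 2], [3, 5], [8]]` satisfies the condition, while `[[1, 4], [3, 5]]` does not, because node 1 holds a value smaller than the maximum on node 0.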


What can we expect?

- The best sequential algorithm (general data): $O(n \log n)$

- Efficiency: 100%

$$E_p = \frac{S_p}{p} = \frac{T_1}{p\,T_p} = \frac{n \log n}{p\,T_p} = 1 \;\Rightarrow\; T_p = \frac{n \log n}{p} = \log n \quad (p = n)$$

- Can we do this? Well, hard to do with "comparison-based" algorithms.
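The target can be checked numerically using the usual definitions, speedup S_p = T_1 / T_p and efficiency E_p = S_p / p. The sketch below assumes a cost model of T_1 = n log2 n for the best sequential sort and picks n = 1024 arbitrarily; the function names are illustrative.

```python
import math


def speedup(t1, tp):
    """Speedup S_p = T_1 / T_p."""
    return t1 / tp


def efficiency(t1, tp, p):
    """Efficiency E_p = S_p / p."""
    return speedup(t1, tp) / p


n = 1024                      # arbitrary example size (assumption)
t1 = n * math.log2(n)         # modelled cost of the best sequential sort
p = n                         # one processor per element
tp_ideal = t1 / p             # parallel time required for 100% efficiency
```

With these numbers `tp_ideal` equals log2(n), so 100% efficiency on n processors would require sorting in about log n steps.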


Rank sort

A simple sorting algorithm (not a particularly good one sequentially). Parallel version with n processors for a[0]...a[n-1]:

- Let all processors have all values

- Parallel over i:

– Count all numbers less than a[i]

– If the count is k, then the given element is placed in b[k]

- Each CPU has made n-1 comparisons

- Complexity?

– n numbers, n processors: O(n)

– Efficiency: (n log n)/(n · n) = log n/n
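The n-processor version can be simulated sequentially, with the loop over `i` standing in for the n processors. This is a minimal sketch, not a parallel implementation. One assumption is added: ties are broken by index, because counting only strictly smaller values would give duplicates the same rank k and they would overwrite each other in b[k].

```python
def rank_sort(a):
    """Rank sort: place a[i] at the position given by its rank."""
    n = len(a)
    b = [None] * n
    # Conceptually "parallel over i": each iteration is the work of one
    # processor, which compares a[i] with the other n-1 values.
    for i in range(n):
        # Count the elements that must precede a[i]. Equal values are
        # ordered by index (assumption, so duplicates get distinct ranks).
        k = sum(
            1
            for j in range(n)
            if a[j] < a[i] or (a[j] == a[i] and j < i)
        )
        b[k] = a[i]
    return b
```

Each simulated processor does O(n) work, which matches the O(n) parallel time stated above.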


Rank sort

Parallel version, n² processors (really n(n-1) processors):

- Parallel over i:

– Count all numbers less than a[i]

- Each CPU gets 0 or 1 (the result of its single comparison)

- Make n reductions, each over (n-1) processors, in parallel

– If the count is k, then the given element is placed in b[k]

- Each CPU has made 1 comparison

- Complexity?

- Bad processor efficiency
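The n(n-1)-processor version can also be simulated sequentially: one 0/1 flag per (i, j) pair, followed by one sum reduction per element. The sketch below assumes a pairwise (tree) reduction, which is one common choice and takes about log2(n-1) rounds. Ties are again broken by index as an added assumption. The helper names are illustrative.

```python
def tree_sum(values):
    """Sum by pairwise reduction; each while-iteration models one parallel round."""
    values = list(values)
    while len(values) > 1:
        paired = [values[k] + values[k + 1] for k in range(0, len(values) - 1, 2)]
        if len(values) % 2:
            paired.append(values[-1])  # odd element carries over to the next round
        values = paired
    return values[0] if values else 0


def rank_sort_n2(a):
    """Rank sort with one comparison per simulated processor (i, j), j != i."""
    n = len(a)
    # Step 1: every (i, j) processor does a single comparison and holds 0 or 1.
    flags = [
        [1 if (a[j] < a[i] or (a[j] == a[i] and j < i)) else 0
         for j in range(n) if j != i]
        for i in range(n)
    ]
    # Step 2: n independent reductions, each over n-1 flags, give the ranks.
    b = [None] * n
    for i in range(n):
        b[tree_sum(flags[i])] = a[i]
    return b
```

Under this model the parallel time is dominated by the O(log n) reduction, so the efficiency is roughly (n log n) / (n(n-1) · log n), about 1/n, in line with the poor processor efficiency noted above.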