
Mike Hughes - Tufts COMP 135 - Fall 2020

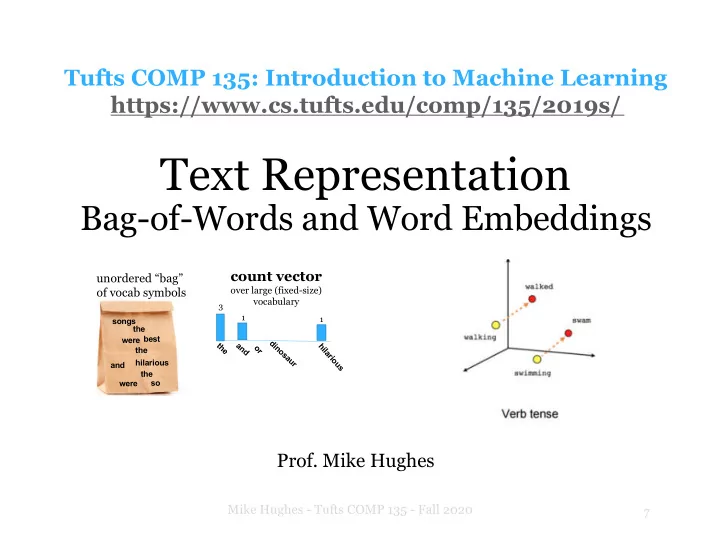

Text Representation

Bag-of-Words and Word Embeddings

Tufts COMP 135: Introduction to Machine Learning https://www.cs.tufts.edu/comp/135/2019s/

- Prof. Mike Hughes

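count vector over a large (fixed-size) vocabulary

or

unordered “bag” of vocab symbols

[Figure: an example document shown both ways. The bag holds words such as "songs", "the", "were", "and", "hilarious", "dinosaur"; the count vector has one entry per vocabulary word ("best", "and", "were", "the", "so", "hilarious"), with counts such as 3, 1, 1.]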
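
The two views above (an unordered bag of vocab symbols, and a count vector over a fixed-size vocabulary) can be illustrated with a minimal sketch in plain Python. The helper names `build_vocabulary` and `count_vector`, the whitespace tokenization, and the two example sentences are assumptions made for illustration; they are not taken from the course materials.

```python
from collections import Counter


def build_vocabulary(documents):
    """Return a sorted list of the distinct lowercase tokens across all documents."""
    tokens = set()
    for doc in documents:
        # Assumption: tokens are separated by whitespace; no punctuation handling.
        tokens.update(doc.lower().split())
    return sorted(tokens)


def count_vector(document, vocabulary):
    """Map a document to a list of counts, one entry per vocabulary word."""
    counts = Counter(document.lower().split())
    # Words missing from the document get a count of 0.
    return [counts[word] for word in vocabulary]


# Hypothetical example documents, loosely based on the words in the figure.
docs = [
    "the songs were the best",
    "the dinosaur and the songs were so hilarious",
]

vocab = build_vocabulary(docs)
vectors = [count_vector(d, vocab) for d in docs]

for doc, vec in zip(docs, vectors):
    print(doc, "->", vec)
```

Because only counts are kept, any reordering of the words in a document produces the same vector, which is what makes the representation an unordered "bag". Every vector has the same length as the vocabulary, regardless of document length.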