SLIDE 1

Coming Up With Better Policies

We can interleave policy evaluation with policy improvement as before. ⇡0

E

- ! Q⇡0 I

- ! ⇡1

E

- ! · · · I

- ! ⇡⇤ E

- ! Q⇤

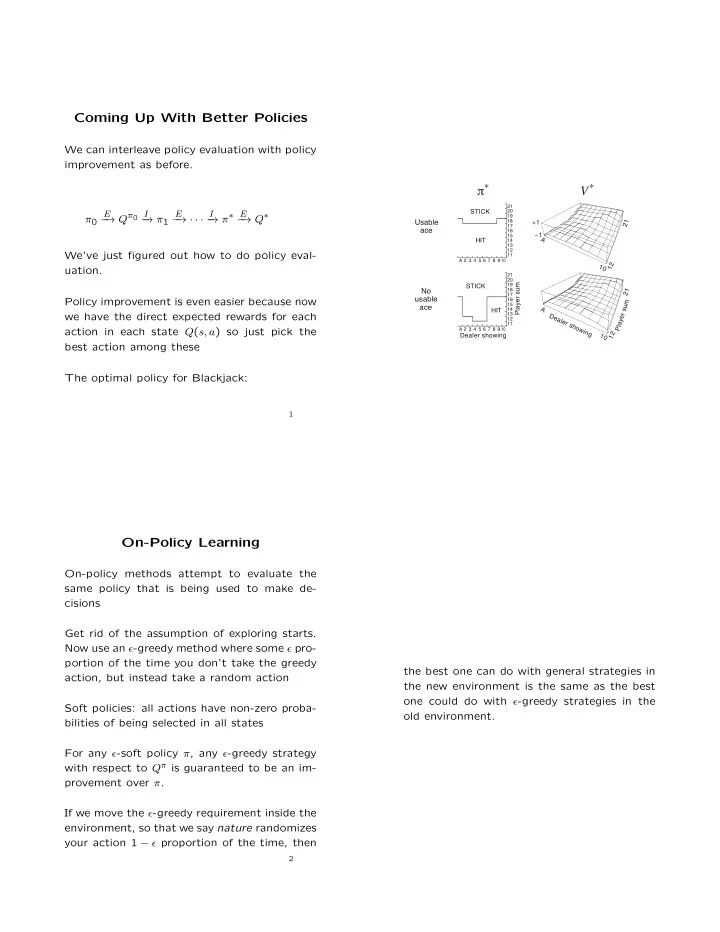

We’ve just figured out how to do policy eval- uation. Policy improvement is even easier because now we have the direct expected rewards for each action in each state Q(s, a) so just pick the best action among these The optimal policy for Blackjack:

1 Usable ace No usable ace

20 10 A 2 3 4 5 6 7 8 9

Dealer showing Player sum HIT STICK

19 21 11 12 13 14 15 16 17 18

π*

10 A 2 3 4 5 6 7 8 9

HIT STICK

20 19 21 11 12 13 14 15 16 17 18

V*

21 1 12 A Dealer showing P l a y e r s u m 1 A 12 21 +1 −1

On-Policy Learning

On-policy methods attempt to evaluate the same policy that is being used to make de- cisions Get rid of the assumption of exploring starts. Now use an ✏-greedy method where some ✏ pro- portion of the time you don’t take the greedy action, but instead take a random action Soft policies: all actions have non-zero proba- bilities of being selected in all states For any ✏-soft policy ⇡, any ✏-greedy strategy with respect to Q⇡ is guaranteed to be an im- provement over ⇡. If we move the ✏-greedy requirement inside the environment, so that we say nature randomizes your action 1 ✏ proportion of the time, then

2

the best one can do with general strategies in the new environment is the same as the best

- ne could do with ✏-greedy strategies in the

- ld environment.