SLIDE 12 Memory Networks (Fully Supervised)


Training

- Training involves learning the memory representations and the scoring functions used to generate the answer.

- We do so by minimizing the following loss:

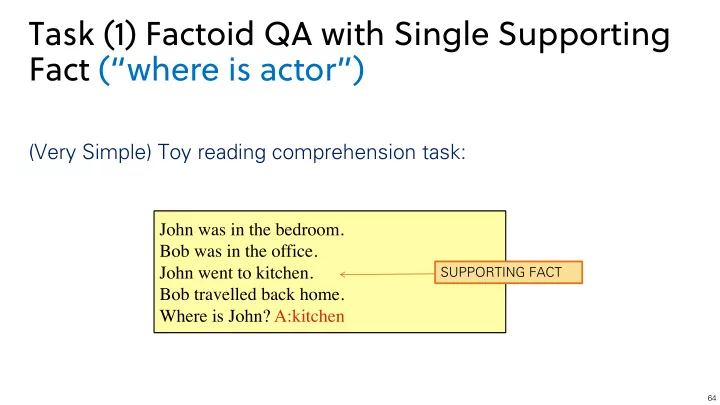

Memories:
m_i = f(John was in the bathroom.)
m_{i+1} = f(Bob was in the office.)
m_{i+2} = f(John went to the kitchen.)
m_{i+3} = f(Bob travelled back home.)

Question: x = f(Where is John?)
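The slide leaves the feature map f and the memory scoring function S_o unspecified. The sketch below assumes f is a bag-of-words count vector and S_o is a bilinear score (U f(x)) · (U f(y)), similar in spirit to the embedding-based scores of the original Memory Networks model. The names `tokenize`, `embed`, `score`, `U_o` and the dimension `D` are hypothetical. With an untrained, randomly initialised `U_o`, the selected index `o1` is arbitrary; the training loss is what pushes it toward the true supporting fact.

```python
import random

SENTENCES = ["John was in the bathroom.", "Bob was in the office.",
             "John went to the kitchen.", "Bob travelled back home."]
QUESTION = "Where is John?"

def tokenize(text):
    """Lowercase and strip the '.'/'?' punctuation used in the toy story."""
    return text.lower().replace(".", "").replace("?", "").split()

# Vocabulary built from the story and the question (hypothetical toy setup).
VOCAB = sorted({w for s in SENTENCES + [QUESTION] for w in tokenize(s)})

def f(text):
    """Bag-of-words count vector: an assumed choice for the feature map f."""
    words = tokenize(text)
    return [float(words.count(w)) for w in VOCAB]

def embed(U, v):
    """Matrix-vector product U v, with U stored as a list of D rows."""
    return [sum(u * x for u, x in zip(row, v)) for row in U]

def score(U, a, b):
    """Bilinear score (U a) . (U b), i.e. a^T U^T U b (assumed form of S_o)."""
    return sum(p * q for p, q in zip(embed(U, a), embed(U, b)))

random.seed(0)
D = 8  # embedding dimension, chosen arbitrarily for the sketch
U_o = [[random.gauss(0.0, 0.1) for _ in VOCAB] for _ in range(D)]

memories = [f(s) for s in SENTENCES]  # m_i, m_{i+1}, m_{i+2}, m_{i+3}
x = f(QUESTION)
scores = [score(U_o, x, m) for m in memories]
# o1 = index of the highest-scoring memory for the question x.
o1 = max(range(len(memories)), key=lambda i: scores[i])
```

Because the score is symmetric in its two arguments, the same `U_o` can embed both the question and the memories; a separate matrix would be needed for the response scorer S_r.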

$$
L = \sum_{\bar{f} \neq m_{o_1}} \max\big(0,\ \gamma - S_o(x, m_{o_1}) + S_o(x, \bar{f})\big) + \sum_{\bar{r} \neq r} \max\big(0,\ \gamma - S_r([x, m_{o_1}], r) + S_r([x, m_{o_1}], \bar{r})\big)
$$
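The two margin-ranking sums can be checked by hand with a minimal sketch. Here `s_o` and `s_r` are stand-in lookup tables with made-up scores (hypothetical values, not learned ones), and `fully_supervised_loss` and `hinge` are illustrative names; a real model would compute the scores from learned embeddings.

```python
def hinge(gamma, pos, neg):
    """Margin-ranking term max(0, gamma - pos + neg)."""
    return max(0.0, gamma - pos + neg)

def fully_supervised_loss(s_o, s_r, x, memories, o1, responses, r, gamma):
    m_o1 = memories[o1]
    # First sum: the labelled supporting memory m_o1 must outscore
    # every other memory f_bar by at least gamma.
    loss = sum(hinge(gamma, s_o(x, m_o1), s_o(x, f_bar))
               for i, f_bar in enumerate(memories) if i != o1)
    # Second sum: the true response r must outscore every other
    # candidate r_bar by gamma, both scored against the pair [x, m_o1].
    loss += sum(hinge(gamma, s_r((x, m_o1), r), s_r((x, m_o1), r_bar))
                for r_bar in responses if r_bar != r)
    return loss

memories = ["John was in the bathroom.", "Bob was in the office.",
            "John went to the kitchen.", "Bob travelled back home."]
responses = ["bathroom", "office", "kitchen", "home"]

# Hypothetical fixed scores standing in for learned S_o and S_r.
S_O_TABLE = {memories[0]: 3.0, memories[1]: 0.0, memories[2]: 3.0, memories[3]: 1.0}
S_R_TABLE = {"bathroom": 2.0, "office": 0.0, "kitchen": 2.0, "home": 1.0}

def s_o(x, m):
    return S_O_TABLE[m]

def s_r(pair, r):
    return S_R_TABLE[r]

loss = fully_supervised_loss(s_o, s_r, "Where is John?", memories, 2,
                             responses, "kitchen", gamma=1.0)
print(loss)  # prints 2.0
```

With γ = 1, the bathroom memory ties the true supporting fact (3.0 vs 3.0) and contributes γ = 1 to the first sum; likewise "bathroom" ties "kitchen" in the second sum. Every other candidate already trails by at least γ and contributes 0, giving L = 2.0.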

We have access to the true supporting fact during training; that is what we mean by “fully supervised”.

S_o: scoring function for memories. S_r: scoring function for responses. This loss covers the case where there is a single supporting fact!