Table of contents

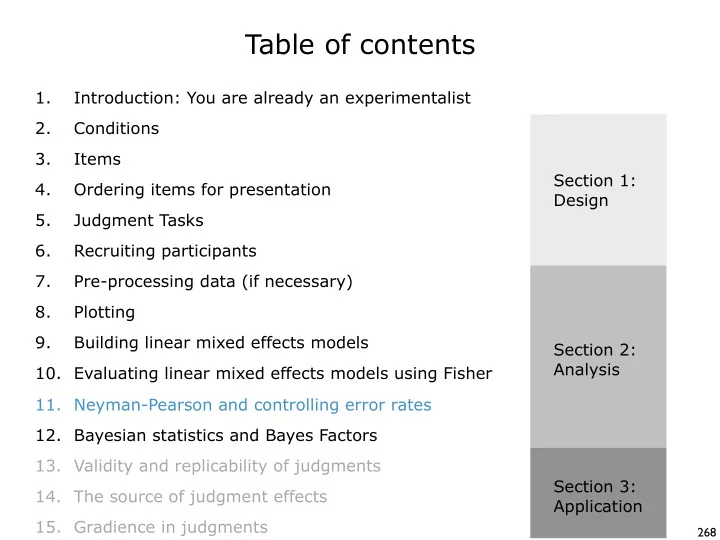

Section 1: Design

1. Introduction: You are already an experimentalist
2. Conditions
3. Items
4. Ordering items for presentation
5. Judgment Tasks
6. Recruiting participants
7. Pre-processing data (if necessary)
8.

Balluerka, N., Gómez, J., & Hidalgo, D. (2005). The controversy over null hypothesis significance testing revisited. Methodology, 1(2), 55-70.

Cohen, J. (1994). The earth is round (p < .05). American Psychologist, 49, 997-1003.

Gigerenzer, G. (2004). Mindless statistics. The Journal of Socio-Economics, 33, 587-606.

Hubbard, R., & Lindsay, R. M. (2008). Why p values are not a useful measure of evidence in statistical significance testing. Theory and Psychology, 18(1), 69-88.

Nickerson, R. (2000). Null hypothesis significance testing: A review of an old and continuing

Hubbard, R., & Bayarri, M. J. (2003). P values are not error probabilities.